Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think it could be improved:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It certainly has room for improvement, but it is very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))

Report text tools currently only give the option to align left, right or center. It would be great if we could also have a true 'Justify' option, as it makes chunks of text look so much cleaner.

Hello all,

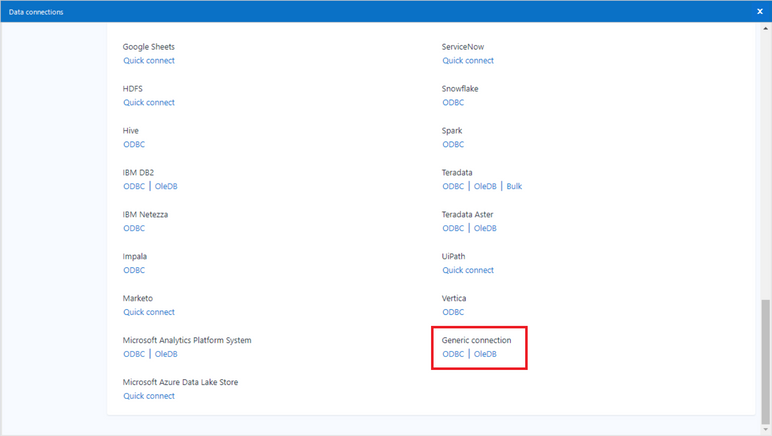

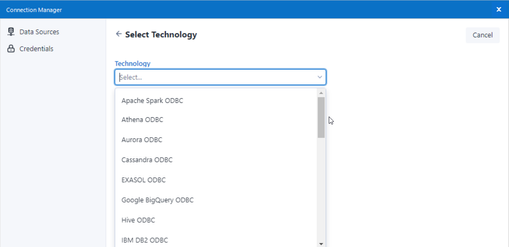

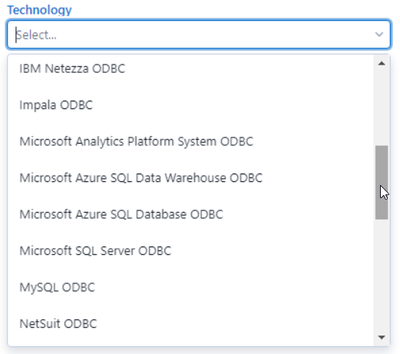

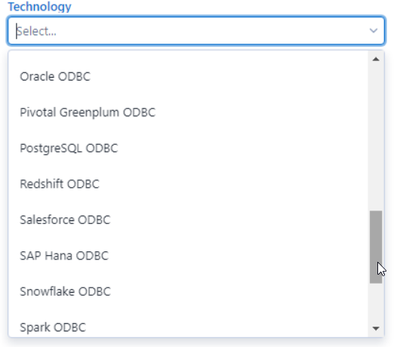

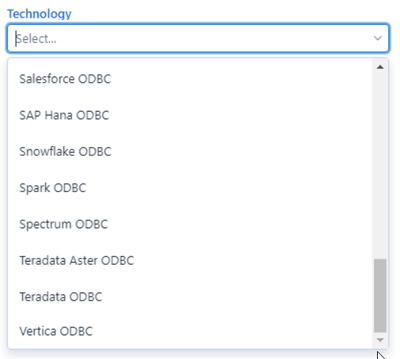

I really love the DCM feature present in the last two releases. However, I have noticed the Generic ODBC Connection is missing :

Classic Connection Manager :

Data Connection Manager :

Best regards,

Simon

Idea: Allow the user to set the data type including character field width in the Text Input tool.

The Text Input tool currently auto-senses the type and width of each field. However, this sometimes restricts the usage of the data downstream.

Examples:

1 - I often run into the situation where I've copied some data from a browse tool and then pasted that as an input to a new workflow. Then I'll turn that workflow into a macro. But then I run into an issue where the data that comes into the macro is larger than the original width in the Text Input tool. This causes problems.

2 - The tool senses that a field containing zip codes should be numeric and then converts the data. This corrupts the data and makes me insert a Select/Formula tool combo to pad the zeros to the left.
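To make the second example concrete, here is a minimal Python sketch of the kind of left-padding repair that has to be applied once zip codes have been converted to numbers. The helper name `pad_zip` and the default width of 5 are assumptions for illustration only; this is not how the Text Input tool or the Formula tool is implemented.

```python
def pad_zip(value, width=5):
    """Return a zip code as a zero-padded string of the given width.

    Hypothetical helper: the name and the default width are assumptions.
    """
    return str(value).strip().zfill(width)


# Zip codes that were auto-typed as numbers lose their leading zeros.
zips = [2134, 90210, "501"]
padded = [pad_zip(z) for z in zips]
print(padded)  # ['02134', '90210', '00501']
```

If the field could be declared as a string of width 5 up front, this repair step would not be needed at all.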

Hello!

I'm submitting this idea to add other products to the Alteryx students program. I think that we (students) should have access to study these products (not only the Intelligence Suite, but Server as well).

Hello,

More and more databases have complex data types such as array, struct or map. It would be nice if we could use them in Alteryx as input, internally, and as output, with calculations available on them.

https://cwiki.apache.org/confluence/display/hive/languagemanual+types#LanguageManualTypes-ComplexTyp...

Best regards,

Simon

Please add official support for newer versions of Microsoft SQL Server and the related drivers.

According to the data sources article for Microsoft SQL Server (https://help.alteryx.com/current/DataSources/SQLServer.htm), and validation via a support ticket, only the following products have been tested and validated with Alteryx Designer/Server:

Microsoft SQL Server

Validated On: 2008, 2012, 2014, and 2016.

- No R versions are mentioned (2008 R2, for instance)

- SQL Server 2017, which was released in October of 2017, is notably missing from the list.

- SQL Server 2019, while fairly new (~6 months old), is also missing

This is one of the most popular data sources, and the lack of support for newer versions (especially a 2+ year old product like SQL Server 2017) is hard to fathom.

ODBC Driver for SQL Server/SQL Server Native Client

Validated on ODBC Driver: 11, 13, 13.1

Validated on SQL Server Native Client: 10,11

- ODBC Driver 17+ is not mentioned, even though it was released in February of 2018. https://docs.microsoft.com/en-us/sql/connect/odbc/windows/release-notes-odbc-sql-server-windows?view...

- SQL Server Native Client is deprecated. It is being replaced by Microsoft OLE DB Driver for SQL Server. However, there is not a mention of Microsoft OLE DB Driver for SQL Server. The latest version of this is 18.3.0. https://docs.microsoft.com/en-us/sql/connect/oledb/release-notes-for-oledb-driver-for-sql-server?vie...

From what I can tell using ProcMon, presently when using the Directory tool to list files (including subdirectories) the Alteryx Engine runs a single threaded process.

When you're trying to find files by checking recursively in large network paths, this can take hours to run.

It would be great if the tools would split up lists of directories (maybe by getting two or three levels down first) and then run each of those recursive paths in parallel.

While it is possible to do this using a custom Python or cmd->PS command, it would be great if this could just be a native part of the application.
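For reference, the custom Python route mentioned above could look roughly like the sketch below. It is only an illustration of the idea of splitting the walk by subdirectory: it splits at the first level rather than two or three levels down, the function names and the `max_workers` default are assumptions, and it says nothing about how the Directory tool works internally.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def list_files(root):
    """Recursively list every file under root with a plain single-threaded walk."""
    found = []
    for dirpath, _dirnames, filenames in os.walk(root):
        found.extend(os.path.join(dirpath, name) for name in filenames)
    return found


def list_files_parallel(root, max_workers=8):
    """Split the walk at the first directory level and scan each subtree in its own thread."""
    top_files, subdirs = [], []
    with os.scandir(root) as entries:
        for entry in entries:
            if entry.is_dir(follow_symlinks=False):
                subdirs.append(entry.path)   # real subdirectory: walk it in a worker
            elif not entry.is_dir():
                top_files.append(entry.path)  # file sitting directly in root
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for files in pool.map(list_files, subdirs):
            top_files.extend(files)
    return top_files
```

Threads are used here because listing a network path is mostly waiting on I/O. Whether this is actually faster depends on the file server and the shape of the directory tree.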

Who needs a 1073741823 sized string anyways? No one, or close enough to no one. But if you are creating some fancy new properties in the Formula tool and just cranking along, and then you see that your **bleep** data stream is 9G for nine rows of data, you find yourself wondering what the hell is going on. And then you walk your way down the workflow for a while finding slots where the default 1073741823 value got set, changing them to non-insane sized strings, and then your data flow is more like 64kb and your workflow runs in 3 seconds instead of 30 seconds.

Please set the default value for Formula tools to a non-insane value that won't need to be changed in 99.99999% of use cases. Thank you.

Please add the ability to globally, within a module, forget all missing fields.

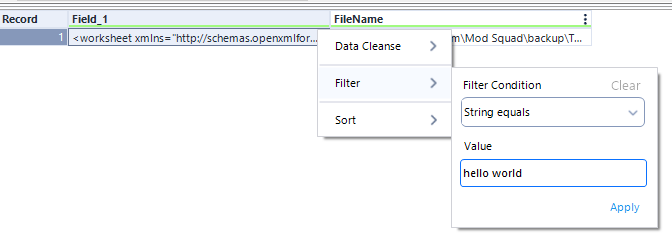

After I type something into the filter box, I should be able to hit Enter and have it apply my change (i.e., Enter triggers the Apply button). It used to be this way, but it's not working as of 2021.2. This feels like a very tiny move in the wrong direction. Currently Enter does nothing. It looks like if I hit Tab twice and then Enter, it finds the Apply button. I shouldn't have to hit Tab twice.

We all love seeing this. And, it's fairly easy to fix, just go find the macro and insert a new copy. But, then you have to remember the configuration and hope that it was simple.

With the tool that's there, the XML still contains the configuration, all that's missing is the tool path. It would be great to be able to right click and repair the path from the context of the missing macro.

Trying to solve some use cases, I realized that I had to simulate the factorial behaviour.

Having a factorial formula can make this process easier.
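As a point of comparison, this is what a factorial looks like in Python, both as the built-in `math.factorial` and as the kind of loop one otherwise has to simulate. The function name `factorial_iterative` is made up for this sketch, and nothing here reflects an existing Alteryx formula function.

```python
import math


def factorial_iterative(n):
    """Compute n! with an explicit loop, the behaviour that otherwise has to be simulated."""
    if n < 0:
        raise ValueError("factorial is not defined for negative numbers")
    result = 1
    for k in range(2, n + 1):
        result *= k
    return result


print(math.factorial(5))       # 120
print(factorial_iterative(5))  # 120
```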

Thanks!

Hey YXDB Bosses,

Let's move forward with our YXDB. Maybe give AMP a real edge over e1. Here are some things that could make YXDB super-powered:

- Metadata

- Workflow information about what created that poorly named output file.

- When was the file originally created/updated.

- SORT order. If there is a sort order for the data, what is it?

- Other stuff

- INDEX. Currently you get spatial indexes (or you can opt out). If I want to search through a 100+MM record file, it is a sequential read of all of the data. With an index I could grab data without the expense of a calgary file creation. Don't go crazy on the indexing option, just allow users to set 1+ fields as index (takes more time to write).

- I'm sure that you've been asked before, but CREATE DIRECTORY if the output directory doesn't exist.

- Old School - Crazy Idea

- Generation Data Groups (GDG)

This will likely make @NicoleJ 's eyes roll 🙄 but back in the days, we could write our data to the SAME filename and the old data became 1 version older. You could read the (0) version of the file or read from 1, 2, 3 or more previous versions of the data using the same name (e.g. .\Customers|||3). The write of the output file would do all of the backing up of the data (easy to use) and when the initial defined limit expires, the data drops off.
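A rough Python sketch of the generation idea, for anyone who hasn't seen it: every write shifts the older copies back by one and drops the one that falls past the limit. The `.1`, `.2` suffix naming, the function names and the `limit` parameter are all assumptions made for illustration; this is not the mainframe GDG syntax and not an existing YXDB feature.

```python
import os


def write_generation(path, data, limit=3):
    """Write data to path, keeping up to `limit` older copies as path.1, path.2, ..."""
    oldest = f"{path}.{limit}"
    if os.path.exists(oldest):
        os.remove(oldest)  # the generation past the limit drops off
    for gen in range(limit - 1, 0, -1):
        src = f"{path}.{gen}"
        if os.path.exists(src):
            os.replace(src, f"{path}.{gen + 1}")  # every copy becomes one version older
    if os.path.exists(path):
        os.replace(path, f"{path}.1")
    with open(path, "w", encoding="utf-8") as handle:
        handle.write(data)


def read_generation(path, generation=0):
    """Read the current file (generation 0) or one of the older generations."""
    name = path if generation == 0 else f"{path}.{generation}"
    with open(name, encoding="utf-8") as handle:
        return handle.read()
```

The point of the design is that the writer does all of the backing up, so a reader only ever needs the one file name plus a generation number.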

Just a little more craziness from me

cheers

It would be nice to be able to concatenate numeric values (integers, doubles, etc.) directly in the Summarize Tool.

I know this would involve converting it to a string on the backend, but I don't believe there would be any data loss when going from numeric to string. I know this can be done by using other tools like Select or Formula to convert to string before the Summarize, but I don't see any reason why this couldn't be accomplished in a single tool.

Thanks,

Paul

For in-DB use, please provide a Data Cleansing Tool.

When you render out to an Excel file, the Excel file is created as a new file. You cannot render to an existing Excel file.

I'd like to see this functionality. I have a client who has a workbook with multiple formatted sheets and they'd like to render an additional sheet of formatted data out from Alteryx into the existing workbook.

Apologies if this has been suggested already - did a search and didn't see anything similar.

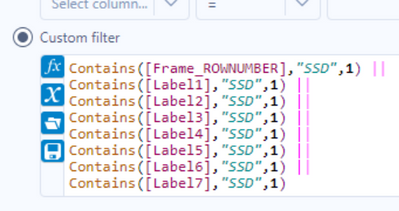

This is a quality of life/UX idea. The search functionality in the results pane essentially does a 'contains' search on all of the columns (see the screenshots below for the filter inserted by the 'apply data manipulations' button). As I build workflows and profile the data, it'd be helpful if I could click one or more columns and limit the search bar to just those fields.

Right now, depending on the dataset I could get rows returned by the search due to the search term appearing in columns that aren't relevant. To workaround this I could add select tools to limit the columns or do more robust filters in a filter tool, but having it built in would be very helpful.
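To spell out the behaviour being asked for, here is a small Python sketch of a 'contains' search that can optionally be limited to selected columns. The function name `search_rows`, the case-insensitive matching and the sample data are assumptions for illustration; they are not taken from how the results pane is implemented.

```python
def search_rows(rows, term, columns=None):
    """Return rows where term appears in any column, or only in the chosen columns.

    Matching is case-insensitive here, which is an assumption of this sketch.
    """
    needle = term.lower()
    matches = []
    for row in rows:
        fields = columns if columns is not None else row.keys()
        if any(needle in str(row.get(name, "")).lower() for name in fields):
            matches.append(row)
    return matches


rows = [
    {"Name": "Paris Hilton", "City": "Los Angeles"},
    {"Name": "Anne", "City": "Paris"},
]
print(len(search_rows(rows, "paris")))                    # 2
print(len(search_rows(rows, "paris", columns=["City"])))  # 1
```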

Hello all,

A few weeks ago Alteryx announced inDB support for GBQ. This is an awesome idea; however, to make it run you have to use OAuth2 authentication, which means the GBQ API must be enabled. As of now, it is possible to use the Simba ODBC driver to connect to GBQ. My idea is to extend the connection/authentication method we have today with Simba ODBC for Google BigQuery so that it also supports inDB. In big organizations it is not easy for IT to enable the API for each application across a large number of GBQ projects. Enhancing the functionality with ODBC would be an awesome solution.

Thank you for voting

Albert

A common problem with the R tool is that it outputs "False Errors" like the following: "The R.exe exit code (4294967295) indicted an error"

I call this a false error because data passes out of the R script the same as if there were no error. As such, this error can generally be ignored. In my use case, however, my R tool is embedded within an iterative macro, and the error causes the iterator to stop running.

I was able to create a workaround by moving the R tool to a separate workflow and calling it from the CReW runner macro within my iterator, effectively suppressing the error message, but this solution is a bit clumsy, requires unnecessary read/writes, and uses nonstandard macros.

I propose the solution suggested by @mbarone (https://community.alteryx.com/t5/Alteryx-Designer-Discussions/Boosted-Model-Error/td-p/5509) to only generate an error when the R return code is 1, indicating a true error, and to either ignore these false errors or pass them as warnings. This will allow R scripts and R-based tools to be embedded within iterative macros without breaking.
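One detail worth noting: 4294967295 is 2^32 - 1, which is what -1 looks like when an exit code is read as an unsigned 32-bit integer. The Python sketch below shows that conversion together with the proposed rule (0 is success, 1 is a true error, anything else becomes a warning). The function names are hypothetical, and the assumption that 1 marks a true error comes from the proposal above, not from R or Alteryx documentation.

```python
def to_signed32(code):
    """Interpret an unsigned 32-bit exit code as a signed value."""
    return code - (1 << 32) if code >= (1 << 31) else code


def classify_exit_code(code):
    """Apply the proposed rule: 0 is ok, 1 is a true error, anything else is a warning."""
    if code == 0:
        return "ok"
    if code == 1:
        return "error"
    return "warning"


print(to_signed32(4294967295))         # -1
print(classify_exit_code(4294967295))  # warning
```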

At the moment containers either expand and overlap other tools, or you have to leave space for them (defeating the original purpose of using them). Is there a way to have a container's expansion shift the workflow, so that the other tools move down / right to account for the expansion?
