Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea? Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think it could be improved:

> by listing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))

It would be really great if Alteryx supported a 'set' data type.

I often have situations where I really wish I could make a field with a data type of "set".

For example, I have a table of pets owned by each person.

The "Pets" Field would be perfect if it could be processed as a "set" type.

| Person | Pets |
| --- | --- |
| John | Dog, Cat |
| Susan | Fish |

(I sort the pet values alphabetically and concatenate them into a string with the Summarize tool. This is the best workaround I could think of.)
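To illustrate what a "set" field would offer compared with the sort-and-concatenate workaround, here is a minimal Python sketch. It is not an Alteryx feature or API; the `rows` data and both function names are hypothetical and exist only for this example.

```python
# Hypothetical input rows mirroring the Person/Pets example above.
rows = [("John", "Dog"), ("John", "Cat"), ("Susan", "Fish"), ("John", "Dog")]


def pets_as_sets(rows):
    """Group pets per person into a frozenset: no order, no duplicates."""
    grouped = {}
    for person, pet in rows:
        grouped.setdefault(person, set()).add(pet)
    return {person: frozenset(pets) for person, pets in grouped.items()}


def canonical_string(pets):
    """The workaround described above: sort alphabetically, then concatenate."""
    return ", ".join(sorted(pets))


owners = pets_as_sets(rows)
```

With a real set type, membership tests such as `"Cat" in owners["John"]` and comparisons between two people's pets work directly, whereas the concatenated string has to be re-parsed or carefully sorted before it can be compared.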

Hi Team,

I have an idea: we could use Alteryx to build a virtual assistant (VA). We are currently using the Intelligence Suite to extract information from PDFs, so connecting the VA to the Intelligence Suite would offer a complete product.

Please let us know your views,

Today the Auto Field tool converts a field to Byte by default when it considers the content suitable, even when we expect text in it. The field may simply be empty in the current context and be filled in later.

The idea would be to let the user choose which default type to apply to text or empty fields instead of Byte, because a Byte field is not accepted in a formula using IN, for example, which can produce errors in downstream workflows.

Hello,

My idea concerns the Download tool, which currently does not handle errors: it carries on even if, for example, it does not find a file at the supplied URL, and it crashes if it cannot resolve the hostname.

For a user processing several URLs in a row, this is a real handicap.

When downloading to a file, the tool still writes a file (overwriting the existing one), but the file cannot be opened and is not in the correct format (a BLOCKED file!). This then causes problems in workflows that read these files.

The idea would be to add a second output to this tool: one output for all the URLs where there was a problem (non-existent hostname, file not found, HTTP error) and one for the URLs where it received the expected content, so that processing is not disrupted and error cases can be managed better.

Regards,

Bruno
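The two-output behaviour requested for the Download tool can be sketched outside Alteryx as follows. This is only an illustration using Python's standard library; `fetch`, `download_all` and the `fetcher` parameter are hypothetical names, not part of any Alteryx tool.

```python
import urllib.error
import urllib.request


def fetch(url, timeout=10):
    """Download one URL with the standard library; raises on failure."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()


def download_all(urls, fetcher=fetch):
    """Return (successes, failures) instead of stopping at the first error."""
    successes, failures = [], []
    for url in urls:
        try:
            successes.append({"url": url, "content": fetcher(url)})
        except (urllib.error.URLError, ValueError, OSError) as exc:
            # HTTPError (e.g. 404) is a subclass of URLError, and an
            # unknown hostname also surfaces as URLError.
            failures.append({"url": url, "error": f"{type(exc).__name__}: {exc}"})
    return successes, failures
```

Because a failed URL never reaches the success list, nothing downstream would write a corrupt file over an existing one, and the failure list can be logged or retried separately.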

Hi Team,

I have a dataset of x,y values that I am plotting with an interactive chart tool. These values will vary widely so I can't use custom display ranges and have to rely on the "Auto" function for display range.

My problem is that the two axes are auto-scaled independently, which turns all my ellipse shapes into circles. This is not a huge setback, since you can check the axis labels to see the scale, but for this use case the shape of the data is what I am trying to portray, and this makes my reports somewhat misleading.

I'm suggesting adding an option, maybe a tick box, under the interactive chart's "Auto" config that would allow both axes to be scaled by the same amount (that of the highest value).

Cheers,

Stephen
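One way to read the "same scale on both axes" request is to give both axes the same span, taken from whichever axis has the wider data range. The Python sketch below shows that calculation only; `equal_scale_ranges` is a hypothetical helper, and it assumes a square plot area so that equal spans mean equal units per pixel.

```python
def equal_scale_ranges(xs, ys):
    """Return ((x_low, x_high), (y_low, y_high)) with identical spans.

    Each axis stays centred on its own data, but both use the larger
    of the two data spans, so shapes are not stretched.
    """
    x_min, x_max = min(xs), max(xs)
    y_min, y_max = min(ys), max(ys)
    half_span = max(x_max - x_min, y_max - y_min) / 2
    x_mid = (x_min + x_max) / 2
    y_mid = (y_min + y_max) / 2
    return (
        (x_mid - half_span, x_mid + half_span),
        (y_mid - half_span, y_mid + half_span),
    )
```

For data spanning 0 to 10 on x and 4 to 6 on y, both axes would be displayed over a span of 10 units, so an ellipse five times wider than it is tall keeps that proportion.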

While using the Find Replace tool, we get a message like "Info: Find Replace (3): 5893 records were found and 14318 records were not found."

Why not add a flag column, say "Found & Replaced?", with "Yes" for the 5893 records where a match was found and "No" for the 14318 records where nothing was found?

I believe this would help everyone whose data comes from multiple sources and is used for consolidation.
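The flag column suggested above could behave like this minimal Python sketch. It assumes a whole-field match against a lookup table, which is a simplification of what the Find Replace tool offers; `find_replace_with_flag` and its parameters are hypothetical names.

```python
def find_replace_with_flag(records, lookup, field):
    """Replace record[field] via lookup and add a 'Found & Replaced?' flag."""
    flagged = []
    for record in records:
        value = record[field]
        found = value in lookup
        updated = dict(record)  # leave the input record untouched
        if found:
            updated[field] = lookup[value]
        updated["Found & Replaced?"] = "Yes" if found else "No"
        flagged.append(updated)
    return flagged
```

Filtering on the flag afterwards gives the "not found" records directly, rather than only a count in the results message.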

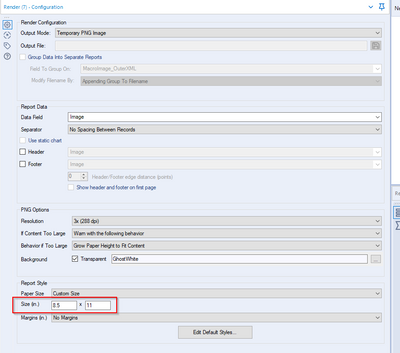

Currently, users can output to a PNG image, but the size can only be set in inches. I want to be able to set the output size in pixels instead.
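Until a pixel option exists, the inch values can be derived from a target pixel size if the output resolution is known. The sketch below is plain arithmetic; the default of 96 DPI is an assumption for illustration, not a documented setting of the tool, and `inches_for_pixels` is a hypothetical helper.

```python
def inches_for_pixels(width_px, height_px, dpi=96):
    """Convert a target pixel size to inches at an assumed resolution."""
    if dpi <= 0:
        raise ValueError("dpi must be positive")
    return (width_px / dpi, height_px / dpi)
```

For example, a 960 x 480 pixel image at the assumed 96 DPI corresponds to 10 x 5 inches.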

Enable multiple sheet selection for Excel.

I would like to be able to connect to an AWS EMR resource, and to an AWS GovCloud endpoint.

Hello Team,

I have been using Alteryx for some time now. The final output of any kind, be it analytics or simple Excel outputs, is expected to have some basic formatting.

A minimum of three tools and a huge effort are needed to format even basic headers and cells with a border and color.

Using the Table tool followed by the Render tool, and then column-level settings to add borders to the data, is rather time-consuming considering it has no impact on the actual data output.

Additionally, Render tool output to Excel is in number format regardless of the data type. The same is true for date fields.

Render tool layout changes also adversely affect the data as well as the column widths.

Could we have a simple option to overwrite a file, where an already formatted Excel file is simply overwritten with data without changing the data types or the formatting of the Excel sheet?

It would be a huge win if this were easily doable, either via a new tool or as an option in the Output tool.

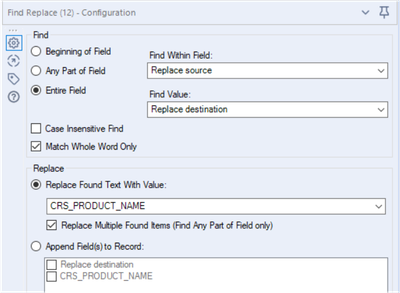

When using Find Replace with the following settings, the output is limited to the number of characters of the "Replace source" field. If the number of characters in "Replace Found Text With Value" exceeds that of the source field, the value is truncated to the source field's size. I don't think this is correct.

I suggest adding an option to either override the field size or keep it as it comes from the source.

A lot of business processes require encryption and decryption, for either data transfer or authentication processes. It would be great if Alteryx could expose some of the C# encryption functions from the System.Security.Cryptography library, as either functions in the Formula tool, or as new tools.

I know that some of these algorithms require byte input, so a new tool might be the sensible option, so that users can pass various data types that are pre-encoded as byte arrays before going into the algorithms.
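The "encode to bytes first" step mentioned above can be sketched like this. The example uses HMAC-SHA256 from Python's standard library as a stand-in for the authentication case; it is keyed hashing rather than encryption, and it is not the C# System.Security.Cryptography API. The names `to_bytes`, `sign` and `verify` are hypothetical.

```python
import hashlib
import hmac


def to_bytes(value):
    """Encode any field value as bytes before it reaches the algorithm."""
    if isinstance(value, bytes):
        return value
    return str(value).encode("utf-8")


def sign(value, key):
    """Return a hex HMAC-SHA256 signature of value under key."""
    return hmac.new(to_bytes(key), to_bytes(value), hashlib.sha256).hexdigest()


def verify(value, key, signature):
    """Check a signature using a constant-time comparison."""
    return hmac.compare_digest(sign(value, key), signature)
```

Doing the byte conversion in one place is what would let a dedicated tool accept strings, numbers or blobs alike, which is harder to express as a single Formula tool function.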

I get the following message when running a macro with the Render tool inside:

" Alteryx ppt testing1 (162) Tool #26: You have found a bug. Replicate, then let us know. We shall fix it soon."

I believe this happens when there are multiple line breaks next to each other and I try to put this into the Render tool outputting to PowerPoint within a table. I have the formula for updating the line breaks, "Replace([Layout],"<br />","<br />")", which works when there aren't very many line breaks, but now that I have integrated Python to regex out HTML, the Render tool has stopped working.
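If consecutive line breaks really are the trigger, one possible pre-processing step is to collapse each run of breaks into a single one before the text reaches the Render tool. This is a sketch of that idea only, with no guarantee that it avoids the error; `collapse_line_breaks` is a hypothetical helper.

```python
import re

# Two or more <br>, <br/> or <br /> tags in a row, optionally separated
# by whitespace, matched case-insensitively.
_BR_RUN = re.compile(r"(?:<br\s*/?>\s*){2,}", re.IGNORECASE)


def collapse_line_breaks(html):
    """Replace every run of consecutive line breaks with one '<br />'."""
    return _BR_RUN.sub("<br />", html)
```

Unlike a single literal replace, the pattern handles runs of any length in one pass, so it does not need to be applied repeatedly.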

When using Dynamic Input with databases, the Database may be returning errors or other information that the tool cannot parse into a dataset.

It would be great if we could see the 'raw' response from the database somehow, as this might provide insight into why we are not getting the expected results.

If the tool could output an optional error column that has the unparsed response from the database server, it might allow us to debug the problem ourselves.

If the returned data is actually a string response from the database, but one that is flawed in some way, we might still be able to parse data out of it to 'ride over' the error.

Configuration window: add a feature to zoom in or out of the Configuration window, similar to the canvas. There is a lot going on in the Configuration window, and being able to zoom would be especially helpful for those of us with eyesight challenges.

More and more people are making use of Plotly and Plotly Express to develop really great graphics. However, it is extremely difficult to extract either interactive charts (HTML format) or static images (PNG or JPEG).

The main obstacle to saving static images with Plotly is installing and using Orca (an application) for R or kaleido (a library) for Python. Despite my best efforts, I have had no luck getting either approach to work within the respective Alteryx environments.

Migrate the old R-based charts and create new statistical charts in the Interactive Chart tool to provide enhanced statistical charting and visual data exploration capabilities.

This includes:

- Error Bars

- Distribution Plots

- 2D Histograms

- Scatterplot Matrix

- Facet & Trellis Plots

- Tree Plots

- Violin Plots

- Heatmaps

- Log Plots

- Parallel Coordinates Plot

See these URLs for more examples:

The ability to create, modify and enhance interactive chart types through custom Plotly code in either R or Python. This would allow new styles of visualisation to be created and shared with other authors.

This tool needs a much simpler way to be configured. It's far more obfuscated than it needs to be, and the wording in the configuration is very confusing.

For heavy workflows (e.g. reading massive amounts of data, processing it, and storing large datasets through In-DB tools in Cloudera), a random error is sometimes generated: 'General error: Unexpected exception has been caught'

This seems to be due to Kerberos ticket expiration, and the related setting may not be modifiable by the Alteryx developer (especially when it is set by GPO). The suggestion is to enhance the In-DB tools so that they can automatically renew the Kerberos ticket, as other applications do.

Br, Lookman
