Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening! Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> and at least by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you, in the future, allow the user to choose between CPU and GPU usage?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help a greater number of people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


Alteryx Support reproduced the same issue on Designer 2024.1.1.93 with SharePoint Tool 2.6.3 for Designer 2024.

- Has anyone experienced the same error?

- If so, is there any workaround to connect to M365?

Hello

Cartesian products are a common issue when joining datasets on a bad key. I suggest adding an option to the Join tool that checks whether the join will produce a Cartesian product.

- A label: "Cartesian product (non join key uniqueness) detection"
- Under it, a drop-down menu with three choices:
  - do nothing
  - fail
  - warning

Algorithm:

If "do nothing": well... do nothing beyond the current behaviour.

If "fail" or "warning": compare the distinct count of the join key with the row count on each side of the join. If neither side is unique, display a warning or an error message.
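The proposed check is cheap to express. Below is a minimal Python sketch, assuming each side of the join is available as a list of key values; `check_cartesian`, `key_is_unique` and `expected_join_rows` are hypothetical names, not part of Alteryx. It also counts how many rows an inner join would emit (left count x right count for each shared key), which shows why non-unique keys on both sides multiply rows.

```python
from collections import Counter
from typing import Hashable, Optional, Sequence


def key_is_unique(keys: Sequence[Hashable]) -> bool:
    """One side of the join is unique when its distinct key count equals its row count."""
    return len(set(keys)) == len(keys)


def expected_join_rows(left_keys: Sequence[Hashable], right_keys: Sequence[Hashable]) -> int:
    """Rows an inner join would emit: left count x right count for every shared key."""
    left, right = Counter(left_keys), Counter(right_keys)
    return sum(left[k] * right[k] for k in left.keys() & right.keys())


def check_cartesian(left_keys: Sequence[Hashable], right_keys: Sequence[Hashable],
                    mode: str = "warning") -> Optional[str]:
    """Hypothetical check for the proposed option: "do nothing", "warning" or "fail"."""
    if mode == "do nothing":
        return None  # keep the current behaviour
    if key_is_unique(left_keys) or key_is_unique(right_keys):
        return None  # one side unique: each row on the other side matches at most one row
    message = (
        "Join key is unique on neither side: possible Cartesian product "
        f"({expected_join_rows(left_keys, right_keys)} rows for an inner join)."
    )
    if mode == "fail":
        raise ValueError(message)
    return message  # "warning": report it and let the workflow continue


print(check_cartesian(["A", "A", "B"], ["A", "A"]))
```

Counting distinct values per side is linear in the number of rows, so the check should add little overhead compared with the join itself.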

Best regards,

Simon

Hello,

This is a feature I haven't seen in any data preparation/ETL tool. The core feature is detecting the unique key of a dataframe. More often than not, you have to deal with a dataset without knowing what makes a row unique. This can lead to misinterpreting the data, Cartesian products at join time, and other funny stuff.

How do I imagine it?

A specific tool in the Data Investigation category.

Input: one dataframe, the ability to select fields or check all, and the ability to specify a maximum number of fields per combination (empty or 0 = no max).

Algorithm: it compares the distinct count of every combination of fields with the row count.

Result: one row per field combination that works. If there is no result: "no field combination is unique. check for duplicate or need for aggregation upstream".

Example:

| order_id | line_id | amount | customer | site |
|---|---|---|---|---|
| 1 | 1 | 100 | A | U_250 |
| 1 | 2 | 12 | A | U_250 |
| 1 | 3 | 45 | A | U_250 |
| 2 | 1 | 75 | A | U_250 |
| 2 | 2 | 12 | A | U_250 |
| 3 | 1 | 15 | B | U_250 |
| 4 | 1 | 45 | B | U_251 |

The user selects every field except amount (they know that amount would make no sense in a key).

The algorithm tests the following keys against the number of rows (7):

- each single field
- each combination of two fields
- each combination of three fields
- each combination of four fields

It returns something like this:

| choice | number of fields | field combination |
|---|---|---|
| very good | 2 | order_id, line_id |
| average | 3 | order_id, line_id, customer |
| average | 3 | order_id, line_id, site |
| bad | 4 | order_id, line_id, site, customer |
| … | … | … |
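A brute-force version of the proposed algorithm fits in a few lines of Python. This is a sketch, not an existing Alteryx tool: `find_unique_keys` is a hypothetical name, rows are assumed to be dictionaries, and `max_fields=0` means "no max" as in the proposal. Because the number of combinations grows exponentially with the number of selected fields (2^n - 1 for n fields), the maximum-size setting matters on wide datasets.

```python
from itertools import combinations
from typing import Dict, List, Sequence, Tuple

Row = Dict[str, object]


def find_unique_keys(rows: Sequence[Row], fields: Sequence[str],
                     max_fields: int = 0) -> List[Tuple[str, ...]]:
    """Return every field combination whose distinct count equals the row count.

    max_fields <= 0 means "no max". Combinations are tested from the smallest
    size upwards, so minimal keys come first in the result.
    """
    limit = max_fields if max_fields > 0 else len(fields)
    keys: List[Tuple[str, ...]] = []
    for size in range(1, min(limit, len(fields)) + 1):
        for combo in combinations(fields, size):
            distinct = {tuple(row[f] for f in combo) for row in rows}
            if len(distinct) == len(rows):
                keys.append(combo)
    return keys


columns = ("order_id", "line_id", "amount", "customer", "site")
data = [
    (1, 1, 100, "A", "U_250"),
    (1, 2, 12, "A", "U_250"),
    (1, 3, 45, "A", "U_250"),
    (2, 1, 75, "A", "U_250"),
    (2, 2, 12, "A", "U_250"),
    (3, 1, 15, "B", "U_250"),
    (4, 1, 45, "B", "U_251"),
]
rows = [dict(zip(columns, values)) for values in data]

# amount is excluded, as in the example
keys = find_unique_keys(rows, ["order_id", "line_id", "customer", "site"])
if not keys:
    print("no field combination is unique. check for duplicate or need for aggregation upstream")
for key in keys:
    print(len(key), ",".join(key))
```

On the example rows it finds order_id,line_id as the only two-field key, followed by its three- and four-field supersets. The quality labels in the table (very good, average, bad) are not reproduced; a real tool would need its own rule for them.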

Best regards,

Simon

Hello,

Here is a proposal about an issue that I face frequently at work.

Problem Statement -

Workflows that are scheduled or run manually on Server frequently fail because the Excel input file is open by another user, someone forgot to close it before leaving the office, or for some other reason.

Proposed Solution -

The Input/Dynamic Input tools should be able to read Excel files even when they are open, so that workflows do not fail. This would save a great deal of time and remove the need to monitor scheduled workflows regularly.

Currently, when a Unique tool is used and a field is removed upstream, the workflow fails. If you only have one or two Unique tools, it is no big deal, but in a very complex workflow you have to click into each of those tools to update them. This can be very problematic and takes a lot of time, following every branch connected after the first Unique tool. My suggestion is to make this a warning instead of a failure, or to offer an option to choose between failing and warning, as the Union tool does. That way people can decide how they want the tool to react when fields are removed.
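The requested choice could behave like the following Python sketch. It is hypothetical: `resolve_unique_fields` and the `"error"`/`"warning"`/`"ignore"` values are illustrative names loosely modelled on the Union-style option the post describes, not the Unique tool's actual configuration.

```python
import warnings
from typing import List, Sequence


def resolve_unique_fields(configured: Sequence[str], available: Sequence[str],
                          on_missing: str = "error") -> List[str]:
    """Hypothetical handling of Unique-tool fields that were removed upstream.

    on_missing = "error" stops the workflow (today's behaviour, per the post),
    "warning" reports the missing fields and carries on with the rest,
    "ignore" silently drops them.
    """
    missing = [f for f in configured if f not in available]
    if missing:
        message = "Unique tool: missing field(s) " + ", ".join(missing)
        if on_missing == "error":
            raise KeyError(message)
        if on_missing == "warning":
            warnings.warn(message)
    # Keep only the configured fields that still exist upstream.
    return [f for f in configured if f in available]


print(resolve_unique_fields(["customer", "region"], ["customer", "amount"], on_missing="ignore"))
```

With "warning", a change upstream would surface once in the messages instead of stopping every downstream branch.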

Whenever I overwrite an Excel sheet with data in the same format but different values (e.g. Q2 data instead of Q1 data), all of my Pivot Tables break and I have to recreate them manually, even though the schema didn't change. The Table seems to be deleted and replaced with a completely different Table, which is what breaks the Pivot Tables. The only way to avoid this is to set the Cell Range manually, but who has time for that? The only other solution I have found is to copy all the values manually and paste them over the existing data, which becomes very inefficient the more sheets you are working with.

A client just asked me whether there was an easy way to convert regular Containers to Control Containers. Unfortunately, we have to delete the old container and re-add the tools to the new Control Container.

What if we could just right-click on a regular Container and choose "Convert to Control Container"? Or even vice versa?!

Hi! I noticed that there is currently no way to use the Debug function when working on an analytic app workflow that contains Control Containers. I'm running 2024.1, and in my workflows that do not contain Control Containers I use the Debug feature to troubleshoot when data changes in a dynamic workflow. Currently, when running in test mode, I have no way to review the data step by step through the flow when values are selected dynamically through the app interface. I can only view the final output and make tweaks.

Encrypting or locking a workflow is a good idea: it lets users maintain the workflow as designed.

However, when a user reaches a level of maturity equal to or beyond that of the builder, or when changes are required, the current practice is to keep both a locked and an unlocked version of the workflow so that it can still be changed in the future.

It would be much simpler if we could lock and unlock workflows with a password. Users could then keep the passwords so that they can continue maintaining the workflow.

Not everybody is on Server yet, so this feature would be very helpful for control before a Server migration. Otherwise, the only option is to password-protect a folder containing the workflow package, then re-lock a new saved file each time a change is made or someone new takes over on-prem.

I would like to propose three feature enhancements for the Cross Tab tool under the Transform tool category.

1. Bringing Concat Unique functionality, which is an idea that is currently in Coming Soon status.

2. Adding Start and End in addition to Separator, similar to the Concatenate properties in the Summarize tool.

3. Changing the default size from 2048 to 1073741823 (the maximum V_WString size). New users in particular often ignore truncation errors and may miss important data that needs to be processed downstream.
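The three proposals can be illustrated together with a small Python sketch. It is not Alteryx's implementation: `concat_field` and its parameters are hypothetical names, the default `size` of 2048 mirrors the current Cross Tab default mentioned above, and the function returns a truncation flag so that a caller could report the problem rather than silently dropping characters.

```python
from typing import Iterable, Tuple


def concat_field(values: Iterable[str], separator: str = ",", start: str = "",
                 end: str = "", unique: bool = False, size: int = 2048) -> Tuple[str, bool]:
    """Hypothetical Cross Tab concatenation with Start/End/Separator and Concat Unique.

    Returns (text, truncated) so that truncation can be reported instead of
    silently losing characters.
    """
    items = list(values)
    if unique:
        items = list(dict.fromkeys(items))  # drop duplicates, keep first-seen order
    text = start + separator.join(items) + end
    return text[:size], len(text) > size


print(concat_field(["North", "South", "North"], separator=" | ", start="[", end="]", unique=True))
```

Raising `size` to the V_WString maximum removes the truncation risk for practical inputs; keeping a flag is an alternative when a smaller default is preferred.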

The To field (and, I assume, other fields) in the Email tool seems to have a 254-character limit. This should be increased substantially, as many distribution lists exceed this limit!

- Solved: Email tool recipients list truncating emails - Alteryx Community

- Solved: Email Widget: Cut off all the emails in the "To" r... - Alteryx Community

- Re: Email Address Truncated in the "To" Field - Alteryx Community

A distribution list works but is not ideal. Thumbs up if you like this idea!
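Until the limit changes, one workaround besides a distribution list is to split the recipients across several emails. This is a minimal Python sketch, assuming the 254-character limit reported above applies to the joined field and that addresses are separated by semicolons; `batch_recipients` is a hypothetical helper, and each returned string would feed a separate email.

```python
from typing import List, Sequence


def batch_recipients(addresses: Sequence[str], limit: int = 254,
                     separator: str = ";") -> List[str]:
    """Greedily pack addresses into joined strings of at most `limit` characters."""
    batches: List[str] = []
    current = ""
    for address in addresses:
        if len(address) > limit:
            raise ValueError(f"Single address longer than {limit} characters: {address}")
        candidate = address if not current else current + separator + address
        if len(candidate) <= limit:
            current = candidate  # still fits: keep extending this batch
        else:
            batches.append(current)  # full: close it and start a new one
            current = address
    if current:
        batches.append(current)
    return batches


batches = batch_recipients([f"user{i:03d}@example.com" for i in range(40)])
print(len(batches), [len(b) for b in batches])
```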

Hi everyone! I have been trying to find a way to do this without creating a new idea, but I have decided to make it an official 'Idea' to see if anyone else would appreciate a feature like this (or has found their own way to do it!)

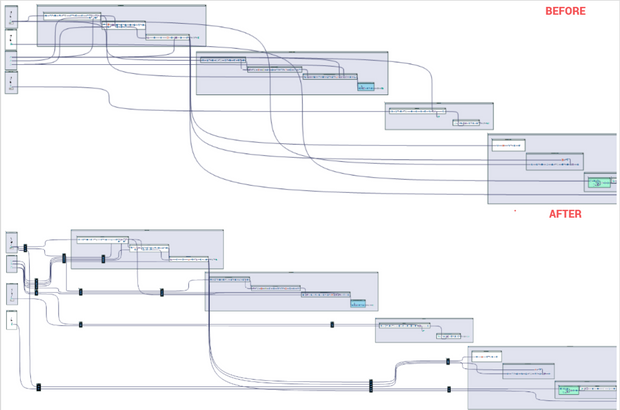

Do your workflows look like this...

but you wish they could look like this?

Well... they can with your help!

Okay, I might be crazy... but it's worth a shot.

While I understand this is an extremely niche issue, in my experience it can become very difficult to trace data through unmanaged lines in large workflows. I think it would be great to cable-manage canvas lines so workflows are easier to follow. Heck, while I'm at it, I think we should all start calling these canvas lines cables... They don't carry electricity, but they sure do carry data!

Here is an example I created in Alteryx using Select tools and containers:

Sounds simple:

Best regards,

Simon

Idea

I see a need for a feature that shows the version of Alteryx Designer Desktop used by each user within an organization.

Some usage data for each user, such as 'Last Used', is already available in the License Portal. If the 'Version of Alteryx Designer Desktop' for each user were also available there, versions would be more manageable and governance in the organization could improve.

Background

When an organization uses both Alteryx Server and Designer Desktop, it is challenging to keep the versions of these products aligned.

We frequently see users install or upgrade to a newer version of Alteryx Designer than that of Alteryx Server, which causes incompatibility issues when interacting with Alteryx Server.

Although we instruct our users to install a particular version, they sometimes upgrade to a newer version later on by themselves, and this is not detectable.

In other words, even if they're using the wrong version of Alteryx Designer Desktop, we won't realize it until a problem occurs.

To identify such users and correct their version, administrators should be able to see which version each user runs whenever needed.

In my opinion, the License Portal would be one of the best platforms to make that information available.

Similar to the setting in many individual tools (Join, Append, Select, et al.), where you can go to Options and choose to "forget missing fields", it would be nice to be able to go to the options for the entire workflow and "forget missing fields".

This would remove the headache in large workflows where you make a change and then have to go back through each and every tool to "forget" within that tool. You could still do it individually, but if you chose, you could also do it universally for all the 'missing fields' in the entire workflow at once.

Hello all,

We all know that != is the Alteryx operator for inequality. However, I suggest implementing <> as another inequality operator. Why?

<> is a very common operator in many languages/tools such as SQL, Qlik, and Tableau. It's far more intuitive than != and it would help interoperability and copy/paste of expressions between tools, or between in-database and in-memory mode.
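Until both operators are accepted, expressions copied from SQL-style tools can be normalized before pasting. The Python sketch below uses a hypothetical `normalize_inequality` helper that rewrites `<>` to `!=` only outside quoted string literals; it assumes literals are delimited by single or double quotes and does not handle escaped quotes or comments.

```python
def normalize_inequality(expr: str) -> str:
    """Rewrite '<>' as '!=' outside single- or double-quoted string literals."""
    out = []
    quote = None  # the quote character of the literal we are inside, if any
    i = 0
    while i < len(expr):
        ch = expr[i]
        if quote:
            out.append(ch)  # copy literal content unchanged
            if ch == quote:
                quote = None
            i += 1
        elif ch in ("'", '"'):
            quote = ch
            out.append(ch)
            i += 1
        elif expr.startswith("<>", i):
            out.append("!=")
            i += 2
        else:
            out.append(ch)
            i += 1
    return "".join(out)


print(normalize_inequality("[Region] <> 'A<>B'"))
```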

Best regards,

Simon

Hello all,

This is a very interesting feature of the List Box and Drop Down interface tools: the ability to select fields.

However, this feature is not available for in-database tools, which greatly limits the use of macros.

Please change.

Best regards,

Simon

Hi all,

When I prepare formatted reports, my stakeholders want them sent straight to SharePoint, which can be done via OneDrive shortcuts on a laptop. However, when the workflow is handed over for full automation, the server's C drive does not have the appropriate shortcuts, and our admin team does not allow them.

So my request is to upgrade the SharePoint Output tool so it can push formatted files to SharePoint.

Thank you!

Currently, if I have a connection between two tools, as in the example below:

I can drag and drop a new tool onto the connection between them to insert it:

Designer updates the connections nicely. However, if I select multiple tools and try to drop them together onto a connection, it won't allow this and instead moves the connection out of the way to avoid an overlap.

Therefore, as a QoL improvement, it would be great to have a multi-drop option on connections between tools.

