Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
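To make the second request concrete, here is a minimal sketch of a balanced split written outside Alteryx. The function name `balanced_split`, its parameters, and the `(path, label)` input shape are assumptions for illustration; this is not how the Image Recognition Tool works internally.

```python
import random
from collections import defaultdict

def balanced_split(samples, train_fraction=0.8, seed=0):
    """Split (path, label) pairs so every label contributes the same number of images.

    Labels with more images than the rarest label are down-sampled first.
    """
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append(path)

    rng = random.Random(seed)
    per_label = min(len(paths) for paths in by_label.values())
    n_train = int(per_label * train_fraction)

    train, test = [], []
    for label in sorted(by_label):
        paths = by_label[label][:]
        rng.shuffle(paths)
        kept = paths[:per_label]  # same count for every label
        train += [(p, label) for p in kept[:n_train]]
        test += [(p, label) for p in kept[n_train:]]
    return train, test
```

Down-sampling to the rarest label is only one possible policy; over-sampling the rare labels would be another.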

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


Hello!

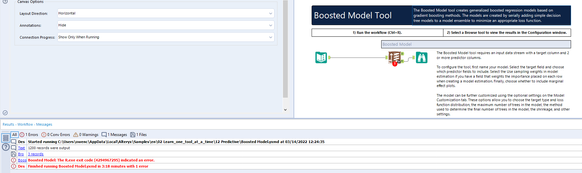

I remember a while ago running into a peculiar error:

'The R.exe exit code (4294967295) indicated an error'. This was peculiar because the data output still appeared to be correct, but the error made me check the Community for answers.

There are some very technical sources here:

https://community.alteryx.com/t5/Alteryx-Designer-Discussions/R-tool-Fake-Errors/td-p/25163

https://community.alteryx.com/t5/Alteryx-Designer-Discussions/Boosted-Model-Error/td-p/5509

but in short, this seems to be caused by a return code from C++ libraries being interpreted by R as an error. It's a very inconsistent error, typically caused by low memory. This creates what most people call a 'fake error': the code runs perfectly fine, but produces an error that doesn't actually indicate anything wrong.
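One detail that may help when reading the message: 4294967295 is the largest unsigned 32-bit value, which is the same bit pattern as -1 in a signed 32-bit integer. The sketch below only illustrates that arithmetic (the helper name `as_signed_32` is made up here); it says nothing about what R or Alteryx do internally.

```python
def as_signed_32(code: int) -> int:
    """Reinterpret an unsigned 32-bit exit code as a signed 32-bit integer."""
    code &= 0xFFFFFFFF
    return code - 0x100000000 if code >= 0x80000000 else code

print(as_signed_32(4294967295))  # -1
```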

Within those threads, it's also stated that calling the garbage collection function (gc()) tends to solve the problem on R exit. However, this requires the user to understand basic R and to have access to the macro in order to change the code, which makes predictive analytics even more intimidating than it already is for new Alteryx users.

The first occurrence of this error seems to be way back in 2015, however the error is still being reported by users (see posts from 2020 and 2021):

https://community.alteryx.com/t5/Alteryx-Designer-Discussions/Password-protected-Excel-files-R-solut...

https://community.alteryx.com/t5/Alteryx-Designer-Knowledge-Base/Error-The-R-exe-exit-code-n-indicat...

An important issue with these 'fake errors' is not only that they cause confusion, but also that they can cause analytic apps and Server workflows to behave unexpectedly and, depending on the configuration, stop running.

My suggestion would be to revisit this issue, as by my understanding it occurs inconsistently, and calling garbage collection does not always seem to fix it. Even if the error message is still generated, it may be worth having Alteryx suppress these errors when they are not real errors.

Steps to reproduce:

(as mentioned, it's very inconsistent)

1. Open the Boosted Model example workflow

2. Multiply the maximum number of trees in the model by 10, in the Boosted Model configuration (Model customization)

3. Run the workflow, inspect the results (which are seemingly correct), and the error message in the results window.

Hope this helps!

TheOC

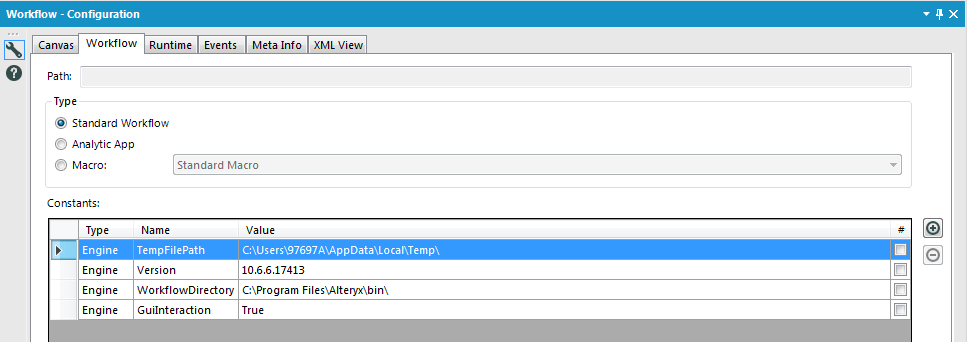

Hi all,

Something really interesting I found, and never knew about, is that there are actually In-DB predictive tools. You can find these by placing a Connect In-DB tool on the canvas and then dragging on one of the many predictive tools.

For instance:

Boosted Model dragged onto an empty canvas:

Boosted Model tool deleted, Connect In-DB tool added to the canvas:

Boosted Model dragged onto the canvas in exactly the same way:

This is awesome! I have no idea how these tools work, I have only just found out they are a thing. Are we able to unhide these? I actually thought I had fallen into an Alteryx Designer bug, however it appears to be much more of a feature.

Sadly, these tools currently cannot be found through search and do not show up under the In-DB section. I believe they need to be more accessible and well documented so users can find them.

Cheers,

TheOC

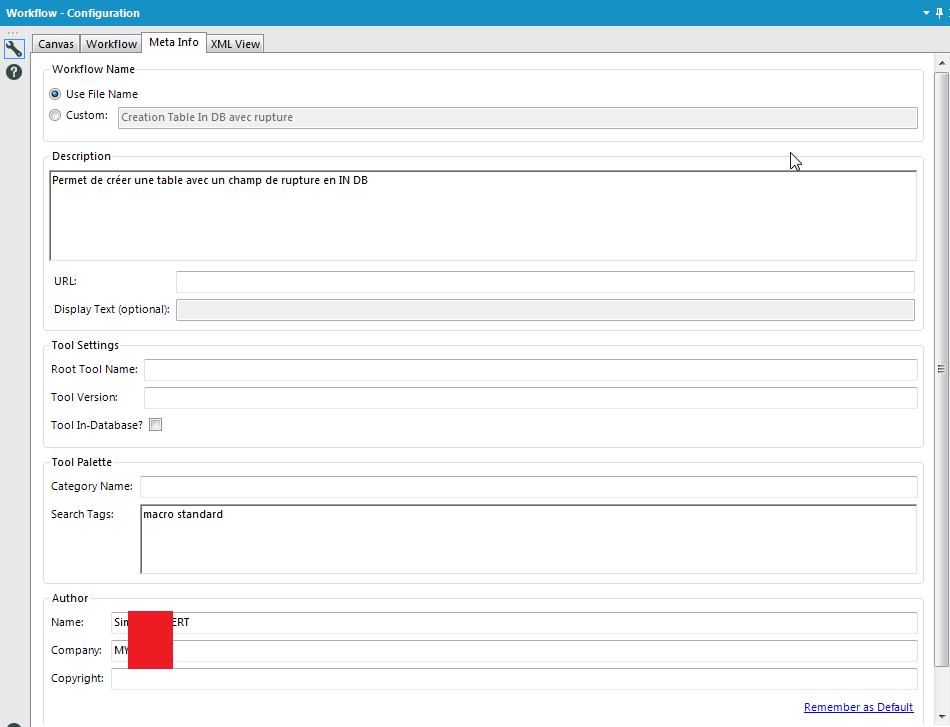

I love Workflow Meta Info, especially the ability to set the author, the search tags, the version, the description, etc.

But why can't we use these values as Engine Constants? It doesn't seem very hard to implement and it would make development much easier.

Hi,

I have 2 simple ideas that would help me a little bit while working with the explorer box:

- I think it would be amazing if we could pick the Internet Browser while using the Explorer box.

While opening certain websites, I am getting this information:

I know probably the answer to it isn't so simple, but that would give us a little bit extra flexibility while using Explorer box.

My goal is to open a word or excel file with specific documentation. If I were able to use a newer browser, I could easily open a file with a link to a webpage.

- Second, can we give the Explorer box a header similar to what we got in the containers? The address bar does not always give us information about what the explorer box shows and a small extra header that we can configure would add some additional clarification

Alternatively, if I could merge a comment tool with the Explorer box tool that would also work.

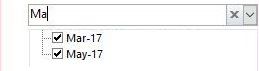

Hi there,

My idea comes from an application I built, where the user selects a filter value from a drop-down list. The list contains thousands of records, so it takes a lot of time to find the desired record.

In Excel and MS Access, you can type several letters into a filter and it shows the rows that match the input. In Alteryx, the user can only type the first letter, which is a huge drawback for my users.

This is how it works in Excel:

Hope you like it!
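For readers who want the difference spelled out, here is a small sketch contrasting the two behaviors described in the post above. The function names are made up for illustration and do not correspond to anything in Alteryx or Excel.

```python
def filter_prefix(options, typed):
    """Keep options that start with the typed text (roughly the current behavior)."""
    typed = typed.lower()
    return [o for o in options if o.lower().startswith(typed)]

def filter_contains(options, typed):
    """Keep options that contain the typed text anywhere (the requested behavior)."""
    typed = typed.lower()
    return [o for o in options if typed in o.lower()]

options = ["Paris Nord", "Lyon Part-Dieu", "Gare du Nord"]
print(filter_prefix(options, "p"))       # ['Paris Nord']
print(filter_contains(options, "nord"))  # ['Paris Nord', 'Gare du Nord']
```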

I try to use the Comment tool for documentation within workflows for team members (and my future self when I have to revisit it months after I built it). It would be helpful to be able to use markdown formatting inside the tool.

This might even encourage more documentation. *fingers crossed*

Similar to the thoughts in this idea, it would be great if the parenthesis matching functionality could be added to the formula tool as well.

Can you put a check box on the container title bar to make it easier to enable/disable containers in the process window? And can you make the minimize/maximize option for a container a separate option from enable/disable?

Currently, when one uses the Google BigQuery Output tool, the only options are to create a table, or append data to an existing table. It would be more useful if there was a process to replace all data in the table rather than appending. Having the option to overwrite an existing table in Google BigQuery would be optimal.

I like the new cache option in 2018.3, but I would like it to function a little differently. Let's say you cache at a certain point and then continue to build after that. If I reach another checkpoint and want to cache, it currently re-runs the entire workflow (i.e. it ignores my upstream cache and goes back to the beginning of the workflow); I would rather have it use the upstream cache. Personally, caching is usually an iterative effort during development, where I keep caching along the way. The current functionality of the cache is not conducive to this. Thanks!
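The requested behavior can be sketched as follows: when caching at a later checkpoint, resume from the nearest cached upstream step instead of from the start. The class `CachedPipeline` and its methods are hypothetical and only model the idea; they are not Alteryx's cache implementation.

```python
class CachedPipeline:
    def __init__(self, steps):
        self.steps = steps    # list of functions, each takes and returns data
        self.cache = {}       # step index -> output of that step
        self.executed = []    # indices of steps actually run (for inspection)

    def run_to(self, index, source):
        """Run steps 0..index, resuming from the nearest cached upstream step."""
        start = max((i for i in self.cache if i <= index), default=-1)
        data = self.cache[start] if start >= 0 else source
        for i in range(start + 1, index + 1):
            data = self.steps[i](data)
            self.executed.append(i)
        self.cache[index] = data
        return data

pipeline = CachedPipeline([lambda x: x + 1, lambda x: x * 2, lambda x: x - 3])
pipeline.run_to(0, 1)     # caches the output of step 0
pipeline.run_to(2, 1)     # reuses that cache and runs only steps 1 and 2
print(pipeline.executed)  # [0, 1, 2]
```

This sketch deliberately ignores cache invalidation: a real implementation would need to drop downstream caches whenever an upstream tool or its input changes.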

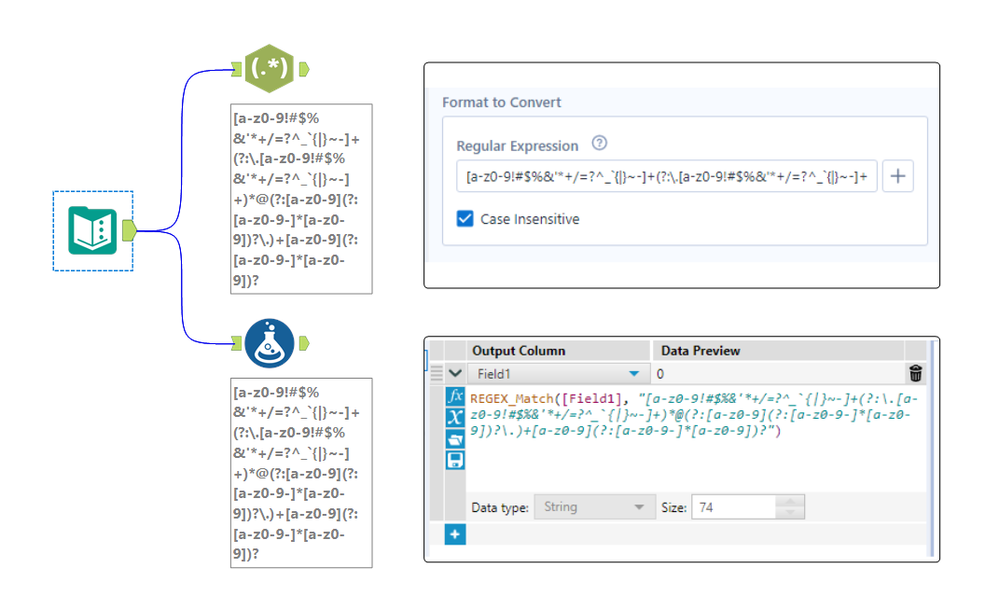

The expression editor in the RegEx tool is only a single line, which makes it really hard to edit long regular expressions. See the attached photo comparing the expression editor in the RegEx tool with the one in the Formula tool for the same expression. Please make the RegEx editor box wrap to multiple lines, add a pop-out expression editor, or something similar so we can see long expressions.

When I create a new table in an In-DB workflow, I want to specify some constraints, especially the Primary Key/Foreign Key.

For PK/FK, the UX could be either a selection of fields from the flow or a free-text field (to let the user enter a constant).

From Wikipedia:

In the relational model of databases, a primary key is a specific choice of a minimal set of attributes (columns) that uniquely specify a tuple (row) in a relation (table).[a] Informally, a primary key is "which attributes identify a record", and in simple cases are simply a single attribute: a unique id.

So, basically, PK/FK helps in two ways :

1/ Checking for duplicates and checking that an inserted value is legitimate

2/ Improving the query plan, especially for joins
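The first point can be shown with a short sketch. SQLite is used here only because it ships with Python; it is a stand-in, and whether a given In-DB target enforces such constraints or merely records them depends on the database. Table and column names are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute(
    "CREATE TABLE orders ("
    " id INTEGER PRIMARY KEY,"
    " customer_id INTEGER NOT NULL REFERENCES customer(id))"
)
conn.execute("INSERT INTO customer (id, name) VALUES (1, 'Alice')")

def try_insert(sql):
    """Return True if the insert succeeds, False if a constraint rejects it."""
    try:
        conn.execute(sql)
        return True
    except sqlite3.IntegrityError:
        return False

# Duplicate primary key: rejected
duplicate_ok = try_insert("INSERT INTO customer (id, name) VALUES (1, 'Bob')")
# Foreign key pointing at a customer that does not exist: rejected
orphan_ok = try_insert("INSERT INTO orders (id, customer_id) VALUES (10, 99)")
# Foreign key pointing at an existing customer: accepted
valid_ok = try_insert("INSERT INTO orders (id, customer_id) VALUES (11, 1)")
print(duplicate_ok, orphan_ok, valid_ok)
```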

(1) I would like to have more text formatting options available in the Comment Tool, such as:

- set different format for selected words (color, bold, underline, size..)

- indenting

- bullet list or numbered list

(2) Option to remove or recolor the blue outline of the comment box. (Especially when I have a comment in a color-filled comment box, I would prefer a comment box without a dark outline.)

(3) UX - Add an arrow cursor to indicate resizing functionality

Hi all,

I think it would be great if Alteryx could send calendar invites in Outlook (and perhaps other calendaring systems) like it sends emails.

Currently the only way to accomplish this is to send it as an attached ICS file on a regular email.
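For context, the ICS attachment mentioned above is a plain-text iCalendar file. Below is a minimal sketch of building one; the function name `build_ics`, the `PRODID` value, and the assumption that all datetimes are already in UTC are mine, and the sketch does not escape special characters in the summary.

```python
from datetime import datetime, timezone

def build_ics(uid, summary, start, end, stamp=None):
    """Return a minimal iCalendar (RFC 5545) document with one event, CRLF-terminated."""
    fmt = "%Y%m%dT%H%M%SZ"  # UTC timestamp form; callers must pass UTC datetimes
    stamp = stamp or datetime.now(timezone.utc)
    lines = [
        "BEGIN:VCALENDAR",
        "VERSION:2.0",
        "PRODID:-//example//alteryx-sketch//EN",  # hypothetical product identifier
        "BEGIN:VEVENT",
        f"UID:{uid}",
        f"DTSTAMP:{stamp.strftime(fmt)}",
        f"DTSTART:{start.strftime(fmt)}",
        f"DTEND:{end.strftime(fmt)}",
        f"SUMMARY:{summary}",
        "END:VEVENT",
        "END:VCALENDAR",
    ]
    return "\r\n".join(lines) + "\r\n"
```

A file like this still has to be opened by the recipient, which is exactly the manual step the idea asks to remove.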

In my use case, rather than auto-populating a Shared Mailbox/calendar, someone has to go into the inbox, the email has to be opened then the ICS has to be clicked on to interact with.

There are ways in Outlook to send an item like this but have it appear automatically without the end user ever seeing the actual invite. (Our Company adds holidays and other important dates in this manner)

So basically I want the same functionality to be available in Alteryx. I have posted about it before in the discussion threads: right now we enter our time in the HR system, then have to manually enter the same info on our personal calendar in Outlook and on any team calendar, whether it is a SharePoint calendar, a group calendar in Outlook, etc.

If Alteryx had the ability to send these types of invites, employees could enter the info in our HR system then Alteryx can get the data feed and automatically populate the other calendar (whichever type it may be).

Hopefully this gets some likes.

Hello,

In data science, the Levenshtein and Jaro-Winkler distances are used to quantify the similarity between two strings.

Here are the Wikipedia pages:

https://en.wikipedia.org/wiki/Levenshtein_distance

https://en.wikipedia.org/wiki/Jaro%E2%80%93Winkler_distance

Note 1: the Levenshtein and Jaro distances are already used in the Fuzzy Match tool, so it shouldn't be a huge amount of work to include them in the Formula tool

Note 2: there is a useful macro on the Gallery https://gallery.alteryx.com/#!app/LevenshteinDistance/5c54701f826fd30988f02779

Note 3: some products already have it implemented, such as Apache Hive or Qlik Sense
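For reference, the Levenshtein distance itself is short to compute. The sketch below is the standard two-row dynamic-programming version; it is not taken from the Fuzzy Match tool or the Gallery macro.

```python
def levenshtein(a: str, b: str) -> int:
    """Number of single-character insertions, deletions, or substitutions turning a into b."""
    if len(a) < len(b):
        a, b = b, a  # keep the shorter string in the inner loop
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]

print(levenshtein("kitten", "sitting"))  # 3
```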

Best regards,

Simon

I noticed in the ODBC driver log that Alteryx ignores the database type I specify. It probes every database type to find the right one and THEN runs the queries to get the metadata info.

Here is an example. I chose a Hive In-DB connection. If I read the Simba logs, I find these lines:

Mar 01 11:37:21.318 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select USER(), APPLICATION_ID() from system.iota

Mar 01 11:37:22.863 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select USER as USER_NAME from SYSIBM.SYSDUMMY1

Mar 01 11:37:23.454 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select * from rdb$relations

Mar 01 11:37:23.546 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select first 1 dbinfo('version', 'full') from systables

Mar 01 11:37:23.707 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select #01/01/01# as AccessDate

Mar 01 11:37:23.868 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: exec sp_server_info 1

Mar 01 11:37:24.093 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select top (0) * from INFORMATION_SCHEMA.INDEXES

Mar 01 11:37:24.219 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: SELECT SERVERPROPERTY('edition')

Mar 01 11:37:24.423 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select DATABASE() as `database`, VERSION() as `version`

Mar 01 11:37:24.635 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select * from sys.V_$VERSION at where RowNum<2

Mar 01 11:37:25.230 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select cast(version() as char(10)), (select 1 from pg_catalog.pg_class) as t

Mar 01 11:37:25.415 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select NAME from sqlite_master

Mar 01 11:37:25.756 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select xp_msver('CompanyName')

Mar 01 11:37:26.156 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select @@version

Mar 01 11:37:26.376 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: select * from dbc.dbcinfo

Mar 01 11:37:26.522 INFO 5264 HardyDataEngine::Prepare: Incoming SQL: SELECT @@VERSION;

I can understand that when Alteryx doesn't know the database type, it tries everything (e.g. the in-memory visual query builder), but here I have selected the Hive database and I lose more than 5 seconds for nothing.
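The behavior being asked for can be sketched like this: probe only when the type is unknown. The three probe queries are copied from the log above, but which engine each one targets is my assumption, and `detect_engine` and `run_query` are hypothetical names, not Alteryx internals.

```python
# Probe queries copied from the log above; the engine attributed to each is an assumption.
PROBES = {
    "db2": "select USER as USER_NAME from SYSIBM.SYSDUMMY1",
    "sqlite": "select NAME from sqlite_master",
    "teradata": "select * from dbc.dbcinfo",
}

def detect_engine(run_query, declared=None):
    """Return the engine name, probing only when the user has not declared one."""
    if declared is not None:
        return declared  # trust the connection type chosen by the user
    for engine, sql in PROBES.items():
        try:
            run_query(sql)
            return engine
        except Exception:
            continue  # probe failed, try the next engine
    return None
```

With `declared="hive"`, no probe query is sent at all, which is the time saving the post describes.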

Here's a reason to get excited about AMP! Create a runtime setting that gets Alteryx working even faster.

When you configure a file input, you see 100 records. Imagine the delight if, after you run your workflow, all input tools were automatically cached. You would run so much faster.

Now think of the absolute delight if, even before you run the workflow, a configured input tool triggered a background read of the input data. Whether it is a new workflow or an existing one you have just opened, that read could start before you click Run.

What do you think 🤔?

Hi all,

The Publish to Tableau Server tool is great, but it requires a username and password. If you are using AD, there is a chance that your users don't have a password. In that case, you probably have a technical user that you share across the team. This is not an ideal situation, and you lose governance over the data.

Fortunately, there is an easy workaround. You can leverage personal token authentication : https://help.tableau.com/v2019.4/server/en-us/security_personal_access_tokens.htm

The advantage of this method is that it logs in as your own user and your data source is uploaded under your name. It still uses the Tableau REST API, so the changes needed in the current macro are MINOR.

Changes needed in the current macro:

1- Add a parameter authentication method with choices : Username/Password ; Personal Token

2- If Personal Token is selected, add two parameters : Token_Name and Token_Value

3 - In the TableauServer.Login supporting macro, improve the formula(13) to change the payload based on user selection. If Username/Password, keep it as is. Else use the syntax here : https://help.tableau.com/current/api/rest_api/en-us/REST/rest_api_concepts_auth.htm#make-a-sign-in-r...
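Step 3 can be sketched as a function that switches the sign-in body on the selected method. The credential field names follow the Tableau REST API sign-in documentation linked above as I understand it; the function name and its parameters are assumptions, and the actual macro may build the payload in a different format (XML, for example), so treat this as an outline only.

```python
import json

def build_signin_payload(method, site_content_url, **secrets):
    """Build a JSON body for the Tableau REST API sign-in request."""
    if method == "Personal Token":
        credentials = {
            "personalAccessTokenName": secrets["token_name"],
            "personalAccessTokenSecret": secrets["token_value"],
        }
    elif method == "Username/Password":
        credentials = {"name": secrets["username"], "password": secrets["password"]}
    else:
        raise ValueError(f"unknown authentication method: {method}")
    credentials["site"] = {"contentUrl": site_content_url}
    return json.dumps({"credentials": credentials})
```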

This is quite a straightforward change, but it could help a lot of companies using Alteryx.

Can you please implement these changes to strengthen this tool?

Thanks a lot,

Desperately looking for a way to connect to SQL Server Analysis Services through Alteryx, as more and more of our large datasets from our older systems are moving there in the next few months. We can connect using Power BI, with limitations (connecting 'Live' does not allow merge, and connecting with Import requires MDX or DAX syntax). We run into import and export limitations, too. We are not allowed access to the underlying tables, only to the tables with the measures, dimensions and fields. Power BI is a big step up from pivot tables, but Alteryx would be so much better. Ideas for connecting this up are welcome!

I believe many in the Community have voiced this as a pain point. Essentially, there is no straightforward method to import multiple password-protected Excel files.

I understand that there is an R solution suggested by several users. However, that is not ideal, as it can be difficult to obtain permission from an internal Tech team to install the package on users' computers.

Re-saving them without password is not only a hassle, but also raises concerns for data protection and security.
