Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!

Submission Guidelines- Community

- :

- Community

- :

- Participate

- :

- Ideas

- :

- Designer Desktop: Hot Ideas

Featured Ideas

Hello,

After used the new "Image Recognition Tool" a few days, I think you could improve it :

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at least, allow the tool to use black & white images (I wanted to test it on the MNIST, but the tool tells me that it necessarily needs RGB images) ?

Question : do you in the future allow the user to choose between CPU or GPU usage ?

In any case, thank you again for this new tool, it is certainly perfectible, but very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible thanks to image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))

Thank you again.

Kévin VANCAPPEL

I would really love to have a tool "Dynamic change type" or "Dynamic re-type" which is used just as "Dynamic Rename".

- "Take Type from First Row of Data": By definition, all columns are of a string type initially. Sets the type of the column according to the string in the first row of data.

Col 1 Col 2 Col 3 Col 4 Double Int32 V_String Date 123.456 17 Hello 2023-10-30 3.4e17 123 Bye 2024-01-01 - "Take Type from Right Input Metadata": Changes the types of the left input table to the ones by right input.

- "Take Type from Right Input Rows": Changes the types based on a table with columns "Name" and "New Type".

Name New Type Col 1 Double Col 2 Int32 Col 3 V_String Col 4 Date

I am having to render my Alteryx formatted reports to Excel and then upload the report to Google Sheets

It would be very useful (and improve the less well known Alteryx Reporting capabilities) to be able to render straight to a Google Sheet and preserve the formatting.

Thanks

Hello,

According to wikipedia :

A partition is a division of a logical database or its constituent elements into distinct independent parts. Database partitioning is normally done for manageability, performance or availability reasons, or for load balancing. It is popular in distributed database management systems, where each partition may be spread over multiple nodes, with users at the node performing local transactions on the partition. This increases performance for sites that have regular transactions involving certain views of data, whilst maintaining availability and security.

Well, basically, you split your table in several parts, according to a field. it's very useful in term of performance when your workflows are in delta or when all your queries are based on a date. (e.g. : my table helps me to follow my sales month by month, I partition my table by month).

So the idea is to support that in Alteryx, it will add a good value, especially in In-DB workflows.

Best regards,

Simon

Two very useful functions

According to https://www.w3schools.com/sql/func_mysql_least.asp

The LEAST() function returns the smallest value of the list of arguments.

example : SELECT LEAST("w3Schools.com", "microsoft.com", "apple.com");

returns "apple.com"

GREATEST works exactly the same but returns the greatest value of the list of argument

As of today, Alteryx proposes max and min to deal with that, but it only works with number and , I think, it's an ambiguous syntax : Max and Min works both as an aggregation function and as a row function. I love to separate these two notions.

Having a more standard means also more interoperability.

On a related topic, the coalesce function is proposed here : https://community.alteryx.com/t5/Alteryx-Designer-Ideas/Coalesce-function/idi-p/841014

Best regards,

Simon

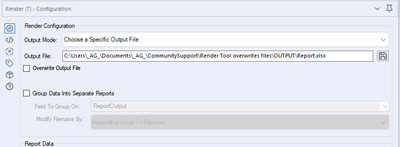

Please consider adding a new setting to the Render Tool, so the users can select or deselect if an existing File should be overwritten (Otherwise throw an error, like the Output Data Tool does, when configured to create a new Sheet and that Sheet already exists)

Please add ablity to globally, within a module, forget all missing fields.

The bak file that is automatically created (and re-created if deleted) really clutters up our folders.

Please allow us to either turn it off, or specify a different location to hold our back up files.

Thanks

In order to make the connections between Alteryx and Snowflake even more secure we would like to have the possibility to connect to snowflake with OAuth in an easier way.

The connections to snowflake via OAuth are very similar to the connections Alteryx already does with O365 applications. It requires:

- Tenant URL

- Client ID

- Client Secret

- Get Authorization token provided by the snowflake authorization endpoint.

- Give access consent (a browser popup will appear)

- With the Authorization Code, the client ID and the Client Secret make a call to retrieve the Refresh Token and TTL information for the tokens

- Get the Access Token every time it expires

With this an automated workflow using OAuth between Alteryx and Snowflake will be possible.

You can find a more detailed explanation in the attached document.

Who needs a 1073741823 sized string anyways? No one, or close enough to no one. But, if you are creating some fancy new properties in the formula tool and just cranking along and then you see that your **bleep** data stream is 9G for nine rows of data you find yourself wondering what the hell is going on. And then, you walk your way way down the workflow for a while finding slots where the default 1073741823 value got set, changing them to non-insane sized strings, and the your data flow is more like 64kb and your workflow runs in 3 seconds instead of 30 seconds.

Please set the default value for formula tools to a non-insane value that won't be changed by default by 99.99999% of use cases. Thank you.

We all love seeing this. And, it's fairly easy to fix, just go find the macro and insert a new copy. But, then you have to remember the configuration and hope that it was simple.

With the tool that's there, the XML still contains the configuration, all that's missing is the tool path. It would be great to be able to right click and repair the path from the context of the missing macro.

I would like to suggest creating a fix to allow In-DB Connect tool's custom SQL to read Common Table Expressions. As of 2018.2, the SQL fails due to the fact that In-DB tools wrap everything in a select * statement. Since CTE's need to start with With, this causes the SQL to error out. This would be a huge help instead of having to write nested sub selects in a long, complex SQL code!

Please update the Render tool to allow users to name the Excel sheet for the output. Alteryx currently errors when using same naming convention that works in normal Output tool.

Please add official support for newer versions of Microsoft SQL Server and the related drivers.

According to the data sources article for Microsoft SQL Server (https://help.alteryx.com/current/DataSources/SQLServer.htm), and validation via a support ticket, only the following products have been tested and validated with Alteryx Designer/Server:

Microsoft SQL Server

Validated On: 2008, 2012, 2014, and 2016.

- No R versions are mentioned (2008 R2, for instance)

- SQL Server 2017, which was released in October of 2017, is notably missing from the list.

- SQL Server 2019, while fairly new (~6 months old), is also missing

This is one of the most popular data sources, and the lack of support for newer versions (especially a 2+ year old product like Sql Server 2017) is hard to fathom.

ODBC Driver for SQL Server/SQL Server Native Client

Validated on ODBC Driver: 11, 13, 13.1

Validated on SQL Server Native Client: 10,11

- ODBC Driver 17+ is not mentioned, even though it was released in February of 2018. https://docs.microsoft.com/en-us/sql/connect/odbc/windows/release-notes-odbc-sql-server-windows?view...

- SQL Server Native Client is deprecated. It is being replaced by Microsoft OLE DB Driver for SQL Server. However, there is not a mention of Microsoft OLE DB Driver for SQL Server. The latest version of this is 18.3.0. https://docs.microsoft.com/en-us/sql/connect/oledb/release-notes-for-oledb-driver-for-sql-server?vie...

At the moment containers either expand and overlap other tools, or you have to leave space for them (defeating the original purpose of using them). Is there a way we can have the containers expansion shift the workflow so the others tools shift down / right to account for this expanision?

If a tool fails, there should be a way to customise the error message. Currently a way to do it: log all messages in a file, read that file with another workflow, then customise the messages (Alteryx workflow error handling - Alteryx Community). However, there should be a more convenient solution. We should be able to:

- Find/replace parts of a message.

- Specify, which tools messages to modify.

- Change the message type.

- Change the order of the messages in the results window, to prioritise the critical ones.

- Pick which messages cannot be hidden by "xxx more errors not displayed".

This would especially help for macros, as sometimes we have a specific tool failing within a macro and producing a non-user friendly message.

Alteryx hosting CRAN

Installing R packages in Alteryx has been a tricky issue with many posts over the years and it fundamentally boils down to the way the install.packages() function is used; I've made a detailed post on the subject. There is a way that Alteryx can help remedy the compatibility challenge between their updates of Predictive Tools and the ever-changing landscape that is open-source development. That way is for Alteryx to host their own CRAN!

The current version of Alteryx runs R 4.1.3, which is considered an 'old release', and there are over 18,000 packages on CRAN for this version of R. By the time you read this post, there is likely a newer version of one of these packages that the package author has submitted to the R Foundation's CRAN. There is also a good chance that package isn't compatible with any Alteryx tool that uses R. What if you need that package for a macro you've downloaded? How do you get the old version, the one that is compatible? This is where Alteryx hosting CRAN comes into full fruition.

Alteryx can host their own CRAN, one that is not updated by one of many package authors throughout its history, and the packages will remain unchanged and compatible with the version of Predictive Tools that is released. All we need to do as Alteryx users is direct install.packages() to the Alteryx CRAN to get our new packages, like so,

install.packages(pkg_name, repo = "https://cran.alteryx.com")

There is a R package to create a CRAN directory, so Alteryx can get R to do the legwork for them. Here is a way of using the miniCRAN package,

library(miniCRAN)

library(tools)

path2CRAN <- "/local/path/to/CRAN"

ver <- paste(R.version$major, strsplit(R.version$minor, "\\.")[[1]][1], sep = ".") # ver = 4.1

repo <- "https://cran.r-project.org" # R Foundation's CRAN

m <- available.packages() # a matrix of all packages and their meta data from repo

pkgs4CRAN <- m[,"Package"] # character vector of all packages from repo

makeRepo(pkgs = pkgs4CRAN, path = path2CRAN, type = c("win.binary", "source"), repos = repo) # makes the local repo

write_PACKAGES(paste(path2CRAN, "bin/windows/contrib", ver, sep = "/"), type = "win.binary") # creates the PACKAGES file for package binaries

write_PACKAGES(paste(path2CRAN, "src/contrib", sep = "/"), type = "source") # creates the PACKAGES files for package sources

It will create a directory structure that replicates R Foundation's CRAN, but just for the version that Alteryx uses, 4.1/.

Alteryx can create the CRAN, host it to somewhere meaningful (like https://cran.alteryx.com), update Predictive Tools to use the packages downloaded with the script above and then release the new version of Predictive Tools and announce the CRAN. Users like me and you just need to tell the R Tool (for example) to install from the Alteryx repo rather than any others, which may have package dependency conflicts.

This is future-proof too. Let's say Alteryx decide to release a new version of Designer and Predictive Tools based on R 4.2.2. What do they do? Download R 4.2.2, run the above script, it'll create a new directory called 4.2/, update Predictive Tools to work with R 4.2.2 and the packages in their CRAN, host the 4.2/ directory to their CRAN and then release the new version of Designer and Predictive Tools.

Simple!

95% of the times I see myself using the Directory Tool, it is only to access the FullPath content, so I immediatly add a Select tool to deselect the other attributes the tool returns.

Is there any chance to add a checkbox to only retrieve FullPath?

I couldn't find a previous idea on this, but let me know if it already exists.

A common problem with the R tool is that it outputs "False Errors" like the following: "The R.exe exit code (4294967295) indicted an error"

I call this a false error because data passes out of the R script the same as if there were no error. As such, this error can generally be ignored. In my use case, however, my R tool is embedded within an iterative macro, and the error causes the iterator to stop running.

I was able to create a workaround by moving the R tool to a separate workflow and calling it from the CReW runner macro within my iterator, effectively suppressing the error message, but this solution is a bit clumsy, requires unnecessary read/writes, and uses nonstandard macros.

I propose the solution suggested by @mbarone (https://community.alteryx.com/t5/Alteryx-Designer-Discussions/Boosted-Model-Error/td-p/5509) to only generate an error when the R return code is 1, indicating a true error, and to either ignore these false errors or pass them as warnings. This will allow R scripts and R-based tools to be embedded within iterative macros without breaking.

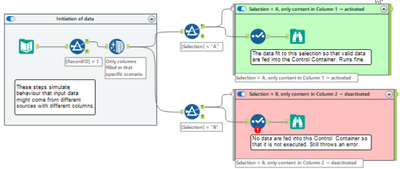

Sometimes, Control Containers produce error messages even if they are deactivated by feeding an empty table into their input connection.

(Note that this is a made up example of something which can happen if input tables might be from different sources and have different columns so that they need separated treatment.)

According to the product team, this is expected behaviour since a selection does not allow zero columns selected. This might be true (which I doubt a bit), but it is at least counter-intuitive. If this behaviour cannot be avoided in total, I have a proposal which would improve the user experience without changing the entire workflow validation logic.

(The support engineer understands the point and has raised a defect.)

Instead of writing messages inside Control Containers directly to the log output (on screen, in logfile) and to mark the workflow as erroneous, I propose to introduce a message (message, warning, error) stack for tools inside Control Containers:

- When the configuration validation is executed:

- Messages (messages, warnings, errors) produced outside of Control Containers are output to the screen log and to the log files (as today).

- Messages (messages, warning, errors) produced inside of Control Containers are not yet output but stored in a message stack.

- At the moment when it is decided whether a Control container is activated or deactivated:

- If Control Container activated: Write the previously stored message stack for this Control Container to the screen and to the log output, and increase error and warning counts accordingly.

- If Control Container deactivated: Delete the message stack for this Control Container (w/o reporting anything to the log and w/o increasing error and warning count).

This would result in a different sequence of messages than today (because everything inside activated Control Containers would be reported later than today). Since there’s no logical order of messages anyways, this would not matter. And it would avoid the apparently illogical case that deactivated Control Containers produce errors.

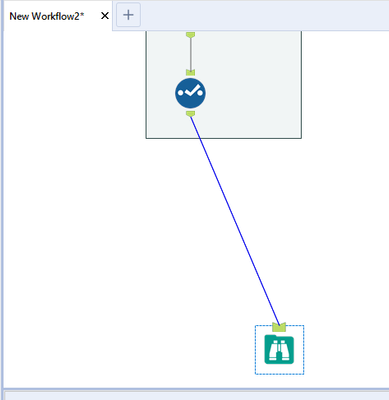

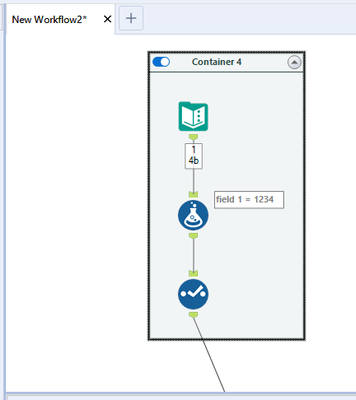

When I'm organizing my workflow, sometimes I want to move a whole tool container on the canvas. Currently, the only way to do this is to first find the header then select and drag this. When the ends of the container is off screen, it can be hard to know how much I wanted to move my container to get it where I wanted relative to the other tools around it. I feel like it would be nice to be able to select anywhere on the tool container and drag it around (possibly holding right click and dragging so that current tool selection capabilities aren't hindered).

In the (simplified) images below, you'll see that I want my tool container to vertically align just above the browse tool:

I can't currently see the top of the tool container to move it, though, so I must first navigate to that part of the workflow to select the header.

- New Idea 275

- Accepting Votes 1,815

- Comments Requested 23

- Under Review 173

- Accepted 58

- Ongoing 6

- Coming Soon 19

- Implemented 483

- Not Planned 115

- Revisit 61

- Partner Dependent 4

- Inactive 672

-

Admin Settings

20 -

AMP Engine

27 -

API

11 -

API SDK

218 -

Category Address

13 -

Category Apps

113 -

Category Behavior Analysis

5 -

Category Calgary

21 -

Category Connectors

247 -

Category Data Investigation

77 -

Category Demographic Analysis

2 -

Category Developer

208 -

Category Documentation

80 -

Category In Database

214 -

Category Input Output

641 -

Category Interface

240 -

Category Join

103 -

Category Machine Learning

3 -

Category Macros

153 -

Category Parse

76 -

Category Predictive

77 -

Category Preparation

394 -

Category Prescriptive

1 -

Category Reporting

198 -

Category Spatial

81 -

Category Text Mining

23 -

Category Time Series

22 -

Category Transform

89 -

Configuration

1 -

Content

1 -

Data Connectors

964 -

Data Products

2 -

Desktop Experience

1,538 -

Documentation

64 -

Engine

126 -

Enhancement

331 -

Feature Request

213 -

General

307 -

General Suggestion

6 -

Insights Dataset

2 -

Installation

24 -

Licenses and Activation

15 -

Licensing

12 -

Localization

8 -

Location Intelligence

80 -

Machine Learning

13 -

My Alteryx

1 -

New Request

194 -

New Tool

32 -

Permissions

1 -

Runtime

28 -

Scheduler

23 -

SDK

10 -

Setup & Configuration

58 -

Tool Improvement

210 -

User Experience Design

165 -

User Settings

80 -

UX

223 -

XML

7

- « Previous

- Next »

-

NicoleJ on: Disable mouse wheel interactions for unexpanded dr...

- TUSHAR050392 on: Read an Open Excel file through Input/Dynamic Inpu...

- jackchoy on: Enhancing Data Cleaning

- NeoInfiniTech on: Extended Concatenate Functionality for Cross Tab T...

- AudreyMcPfe on: Overhaul Management of Server Connections

-

AlteryxIdeasTea

m on: Expression Editors: Quality of life update - StarTrader on: Allow for the ability to turn off annotations on a...

- simonaubert_bd on: Download tool : load a request from postman/bruno ...

- rpeswar98 on: Alternative approach to Chained Apps : Ability to ...

-

caltang on: Identify Indent Level

| User | Likes Count |

|---|---|

| 16 | |

| 9 | |

| 7 | |

| 5 | |

| 4 |