Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> by allowing the tool, at the very least, to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
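The balanced split requested above can be sketched outside the tool. This is a minimal sketch, assuming the training data is available as a list of (image path, label) pairs; the function name `balance_by_label` and the sample file names are hypothetical and not part of the Image Recognition tool.

```python
import random
from collections import defaultdict

def balance_by_label(samples, seed=0):
    """Downsample so that every label keeps the same number of images.

    `samples` is a list of (image_path, label) pairs (illustrative shape).
    """
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append(path)

    # The smallest class sets the per-label quota.
    quota = min(len(paths) for paths in by_label.values())

    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    balanced = []
    for label, paths in sorted(by_label.items()):
        for path in rng.sample(paths, quota):
            balanced.append((path, label))
    return balanced

# Hypothetical file names: three "cat" images and two "dog" images.
samples = [("a1.png", "cat"), ("a2.png", "cat"), ("a3.png", "cat"),
           ("b1.png", "dog"), ("b2.png", "dog")]
balanced = balance_by_label(samples)
```

Downsampling to the smallest class is only one option; oversampling the smaller classes would keep more data at the cost of repeated images.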

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))

Take this example macro

I've built a message into the tool to inform the user that the macro is set up in test mode. The macro either filters the records based on a condition that the user provides in the macro configuration via a text input tool, for example Contains([Name],"Goodman"), or lets the user select a check box to override the testing mode.

What I want is for the user to be notified when a filter condition is being applied, so they can quickly identify where in the workflow the data might not be the full dataset. At the moment this is achieved using the Error tool, but because it is the Error tool, you are limited to the red ! indicator.

Therefore my idea is to update the error tool to allow the user to specify additional indicators, such as a warning triangle, because the message I am displaying is actually a warning to the user. Additionally it would be great if you could provide custom images (for example a glass flask) to show it's in a test mode, like you can with the macro image tools.

- Category Interface
- Category Macros
- Desktop Experience

In PowerPoint, you can right-click on a picture and replace it with a different picture without losing formatting.

Similar functionality would be useful for replacing custom macros.

- I would like to be able to switch an old version of a custom macro with a new version in situ, without losing the connections to other tools, interface tools, or location in a container.

Currently, the only option is to insert the new custom macro and then reset all incoming and outgoing connections. Some downstream tools (e.g., crosstab) lose their existing settings and that has to be reset too. On complicated workflows, this can introduce silent errors.

This capability would be especially helpful for team coding, where different team members are revising different modules for a parent workflow.

Currently:

Right-clicking on the canvas shows Insert > Macro > (choose from list of open macros)

Right-clicking on an existing macro shows Open Macro

New functionality:

Right-clicking on an existing macro shows Replace/Change Macro > (choose from list of open macros)

- Category Macros
- Desktop Experience

Hi All,

Have you all run into this situation when building an iterative macro?

You have no idea why the output is different from what you want,

so you remove rows of data to force the macro to run only 2 iterations and review the result.

Then you add rows back to force it to run only 3 iterations and review the result,

then 4, 5, and so on, until you find the issue.

So it is important to be able to view the result of each iteration, so that we can identify the issue more quickly and efficiently.

Example output

The output might look like the table below (with an option for the user to turn it on, of course),

if the input data is 3 and the macro multiplies by 2 to the power of 2 on every iteration (1st iteration = 3*2^2, 2nd iteration = 12*2^2):

| Iteration | Amount |
|---|---|
| 1 | 12 |
| 2 | 48 |

Just add one column at the front to show the iteration; the rest is the result.
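The requested per-iteration output can be illustrated in plain code. This is a minimal sketch of the idea, not how iterative macros are implemented; `run_iterations` is a hypothetical helper that records the value after every pass, using the numbers from the example above.

```python
def run_iterations(start, step, iterations):
    """Apply `step` repeatedly and record the value after every pass."""
    trace = []
    value = start
    for i in range(1, iterations + 1):
        value = step(value)
        trace.append((i, value))  # (iteration number, result so far)
    return trace

# The example from the post: multiply by 2 squared on every iteration.
trace = run_iterations(3, lambda amount: amount * 2 ** 2, 2)
for iteration, amount in trace:
    print(iteration, amount)
```

Keeping the iteration number next to each intermediate result is what makes it possible to see at which pass the output first diverges from the expected value.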

- Category Macros
- Desktop Experience

Hello gurus -

I think it would be an important safety valve if, at application start-up time, duplicate macros found in the 'classpath' (i.e., https://help.alteryx.com/current/server/install-custom-tools) generated a warning to the user. I know that if you have the same macro in the same folder you can get a warning at load time, but it doesn't seem to propagate out to different tiers on the macro loading path. As such, the developer can find themselves with difficult-to-diagnose behavior wherein the tool seems to be confused as to which macro metadata to use. I also imagine a developer could end up not using the version of the macro they were expecting unless they go to the Workflow tab for every custom macro on their canvas.
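Until such a warning exists, a check along these lines could be run outside Designer. This is a minimal sketch, assuming the macro search folders are known and passed in by the user; `find_duplicate_macros` is a hypothetical helper, and the only Alteryx-specific detail it relies on is the `.yxmc` macro file extension.

```python
import os
from collections import defaultdict

def find_duplicate_macros(search_paths):
    """Map each macro file name to the folders that contain it,
    keeping only names that occur in more than one place."""
    locations = defaultdict(list)
    for root in search_paths:
        for folder, _dirs, files in os.walk(root):
            for name in files:
                if name.lower().endswith(".yxmc"):
                    # Compare names case-insensitively.
                    locations[name.lower()].append(folder)
    return {name: folders for name, folders in locations.items()
            if len(folders) > 1}
```

Calling `find_duplicate_macros([...])` with the folders on the macro loading path returns a dictionary such as `{"clean.yxmc": [folder_a, folder_b]}`, which can then be printed as a warning. If the supplied folders overlap, the same file is counted twice, so the paths should be distinct.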

Thank you for attending my TED talk on the upsides of providing warnings at startup of duplicate macros in different folder locations.

- API SDK
- Category Developer
- Category Macros
- Desktop Experience

Hi Alteryx, can you please create poll, form, or approval-process tools? I know we have some Analytic App tools, but can we have something like Google Forms, where we can easily create forms and get data outputs, with email notifications for those forms and approvals, etc.?

- API SDK
- Category Data Investigation
- Category Developer
- Category Macros

Figuring out who is using custom macros and/or governing the macroverse is not an easy task currently.

I have started shipping Alteryx logs to Splunk to see what could be learned. One thing that I would love to be able to do is understand which workflows are using a particular macro, or any custom macros for that matter. As it stands right now, I do not believe there is a simple way to do this by parsing the log entries. If, instead of just saying 'Tool Id 420', it said 'Tool Id 420 [Macro Name]' that would be very helpful. And it would be even *better* if the logging could flag out of the box macros vs custom macros. You could have a system level setting to include/exclude macro names.
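If log lines did carry the macro name in the proposed form, the reporting described above would become a short parsing job. This is a minimal sketch under that assumption: the pattern matches the format suggested in this post ('Tool Id 420 [Macro Name]'), which is not a documented Alteryx log format, and the sample lines and workflow names are invented for illustration.

```python
import re
from collections import defaultdict

# Matches the format PROPOSED in the post, e.g. "Tool Id 420 [Macro Name]".
# This is an assumption, not the current Alteryx log format.
TOOL_WITH_MACRO = re.compile(r"Tool Id (\d+) \[([^\]]+)\]")

def macros_by_workflow(entries):
    """`entries` is an iterable of (workflow_name, log_line) pairs.
    Returns a mapping of macro name -> set of workflows that use it."""
    usage = defaultdict(set)
    for workflow, line in entries:
        for _tool_id, macro in TOOL_WITH_MACRO.findall(line):
            usage[macro].add(workflow)
    return usage

# Hypothetical log lines for illustration only.
sample = [
    ("sales.yxmd", "Tool Id 420 [Cleanse Names]: 10 records"),
    ("sales.yxmd", "Tool Id 7: 10 records"),
    ("audit.yxmd", "Tool Id 12 [Cleanse Names]: 3 records"),
]
usage = macros_by_workflow(sample)
```

Lines without a bracketed name, like the second sample line, are simply skipped, so the same parser would keep working if macro names were switched off by a system-level setting.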

Thanks for listening.

brian

- API SDK
- Category Developer
- Category Macros
- Category Reporting

You could create an area under Interface Designer - Properties, when editing a macro, that allows users to select the order in which the anchor abbreviations appear on the final macro. This is useful if we want an input or output to be at the top, for example. Currently, the only way is to delete the anchors and add them again in the correct order, which is not user friendly. Thank you!

- Category Macros
- Desktop Experience

Sometimes a dependency on a macro breaks, or I am locally 'versioning' a macro and want to replace it in another workflow without losing all of the connections.

It would be incredibly helpful if we could replace a tool or, when a tool is missing upon opening a workflow, select a tool to take the missing one's place while keeping the same connections.

- Category Macros
- Desktop Experience

Currently, you can right-click on an input file and convert it into a Macro Input. However, for a fellow user to see what file was used as input, they have to click on its output anchor, copy the data, and paste it onto a new canvas. It would be nice to right-click on the Macro Input tool and be able to bring up the original input, or convert it into a regular input, in one step. Thanks

- Category Macros
- Desktop Experience

Dear Dev Team

The ? Help page of a macro is by default an Alteryx one (https://help.alteryx.com/11.7/index.htm#cshid=MacroPlugin.htm), which is not very informative for a user.

Why not change the default Help landing page and show the macro description written by the macro developer instead? It would be far more useful for users.

Thanks for reading this idea

Arno

- Category Macros
- Desktop Experience

A typical macro does the same job every time. I therefore want it to have the same annotation each time.

I want it to have a default annotation that I save in the Interface Designer. This annotation will be shown on the canvas whenever the macro is added.

- Category Macros
- Desktop Experience

I will try to make this short but the back story is a bit long.

I was recently tasked with scraping a website, which required repeated calls to the URL with about 10,000 different queries. Pushing all 10,000 at the Download tool caused DownloadData to intermittently come back with HTML from what appeared to be a default fallback help page. Not what I needed. I suspected the site may have seen all the calls in rapid succession as a DDoS attack or something, so I put a Throttle tool in line to lessen the burden on their server. It reduced the failed calls, but there were still more than I found acceptable, which meant pulling out the failed queries and repeating the same throttled processing. Putting time between each record was what I needed. And then I found this: Wait/Pause Between Processing Records. Just what I needed.

Now the constructive criticism. I hope I don't offend anybody.

The macro does the job using a simple ping statement inside a grouped batch macro, pinging until a selected time interval has passed. It does this repeated pinging for every record. That can add up to a lot of pings, especially if the time interval is rather large and a lot of records are being processed. Then DDoS popped into my head. The same issue that led me to find this very solution.

So, I started thinking about how I could accomplish this same wait between records, and an iterative macro seemed plausible, seeing that loops can be used in code to do this very thing.
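The "loop in code" analogy mentioned above looks roughly like this. It is a minimal sketch of the general pattern, not a description of the attached macro; `process_with_delay` and its parameters are hypothetical names, and the pause uses an idle sleep rather than repeated pings.

```python
import time

def process_with_delay(records, handler, delay_seconds, sleep=time.sleep):
    """Call `handler` on each record, pausing between consecutive records."""
    results = []
    for index, record in enumerate(records):
        if index > 0:
            sleep(delay_seconds)  # idle wait; generates no network traffic
        results.append(handler(record))
    return results
```

For example, `process_with_delay(queries, fetch, 2.0)` would call a user-supplied `fetch` on each query with a two-second gap between calls. The `sleep` parameter exists only so the pause can be replaced in tests.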

I have attached the macro I came up with. Feel free to check it out, critique the hell out of it, and/or use it if it will solve any problem you might have. I only ask that you keep the macro intact and give credit where credit is deserved.

Thanks for your time,

Dan Kelly

- Category Macros
- Desktop Experience

The "Detour" tool is incredibly useful in Macros. However, it really isn't much use in the normal workflow area.

We need a "Detour" tool suitable for normal Workflow (not from within a Macro) which would greatly aid in workflow controls and logic.

- Category Macros
- Desktop Experience

I have used the Publish to Tableau Server macro for over a year. It works fine when I want to overwrite the data.

However, the current macros (from Alteryx Gallery and Invisio) won't work with appending the data. Please modify or develop a workable macro for 'Append the data to Tableau Server'. It will save a lot of time in the daily update process.

Note: I am using Alteryx 11 and Tableau 10.1. Thank you very much.

- Category Macros
- Desktop Experience

In GIS, spatial data is regularly stored/transmitted as text. With this comes metadata, including the projection used.

Example Issue: When extracting data from ESRI's ArcGIS REST Directories, the projection can be extracted from the information, but must be manually defined in the Create Points Tool. If you are trying to compile data from several different sources, all using different projections, you cannot automate the process.

Suggested Solution: Add WKT to macro interface configuration options so that an Action Interface Tool can update the Create Points Tool.
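The extraction step mentioned in the example issue can be sketched as follows. This is a minimal sketch, assuming the response follows the ArcGIS REST convention of a `spatialReference` object carrying `wkid`, `latestWkid`, or `wkt`; the function name and the sample response are illustrative, and feeding the result into the Create Points Tool is exactly the part this idea asks for.

```python
import json

def spatial_reference(response_text):
    """Return (key, value) for the first spatial reference field found,
    or None when the response carries no spatial reference."""
    payload = json.loads(response_text)
    ref = payload.get("spatialReference") or {}
    # Prefer the most specific identifier that is present.
    for key in ("latestWkid", "wkid", "wkt"):
        if key in ref:
            return (key, ref[key])
    return None

# Illustrative response body, trimmed to the relevant part.
response = '{"spatialReference": {"wkid": 102100, "latestWkid": 3857}, "features": []}'
print(spatial_reference(response))
```

With several sources, running this per response gives one projection identifier per dataset, which is the value an Action Interface Tool would need to pass along.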

Attachments:

JSON extract.png - This is a screenshot of the spatial reference metadata in a JSON formatted query from an ArcGIS REST Directory.

action tool.png - Current configuration options for Create Points Tool in the Action Interface Tool.

- Category Interface
- Category Macros
- Category Spatial
- Desktop Experience

I use macros all the time, and I would love if the metadata could persist between runs no matter what tools exist in the macro. I've attached a simple example which should demonstrate the issue. After running the module once, the select tool (4) is populated with the expected data; however, as soon as anything changes (like a tool is dropped onto the canvas), the select tool (4) is no longer receiving the metadata to properly be populated. I've added the crosstab tool from the macro onto my workflow to demonstrate that it's only a problem when the tool is inside a macro. It makes it difficult at times to work with workflows that utilize macros due to the metadata constantly disappearing anytime a change is made. The solution would be for the metadata from the last run to persist until the next run. This is how the crosstab tool is working on my workflow, but putting it inside the macro changes its behavior.

- Category Macros
- Desktop Experience

The macros included in the CReW macro pack are excellent. However, having to install them each time and hope that your users have the macros installed can be a pain.

Suggest adding them as default tools in the next version of Alteryx.

- Category Macros
- Desktop Experience

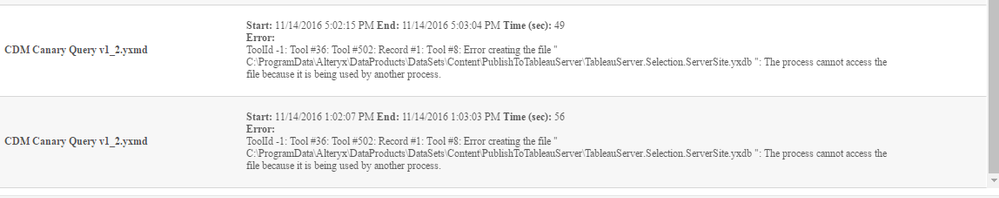

We are running into errors on our scheduler when multiple workflows that use the Publish to Tableau Server macro run at the same time. The macro writes to a local yxdb file with a fixed name, and that file is locked while another workflow is using it. We would like the cached filename TableauServer.Selection.ServerSite.yxdb to be made unique in some way.

Error experienced:

According to support, this is a known issue that needs a macro enhancement.
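One common way to make such a filename unique is to add a random token per run. This is a minimal sketch of that general technique, not the macro's actual code; `unique_cache_path` is a hypothetical helper, and only the base name TableauServer.Selection.ServerSite comes from this post.

```python
import os
import uuid

def unique_cache_path(folder, base_name="TableauServer.Selection.ServerSite"):
    """Return a cache file path that differs on every call."""
    token = uuid.uuid4().hex  # random 32-character hex string
    return os.path.join(folder, f"{base_name}.{token}.yxdb")
```

Because every run gets its own file, two workflows running at the same time no longer compete for one lock. The trade-off is that the files must be cleaned up after each run.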

- Category Macros
- Desktop Experience

I have a large dataset (~200k) of routers with utilisation figures per month over 36 months. What I have been doing until now is using TS Model Factory to configure the model and TS Forecast Factory to generate the forecast for the next 6 months, grouped by router. Great! Except the values returned per device are exactly the same for the next 6 months. Obviously, by not using the ETS/ARIMA macros I lose the ability to configure the model in more detail.

What I would like is to be able to set the parameters of the ETS/ARIMA model in advance, then run the batch macro for the number of routers and return a 6-month forecast that takes into account all the parameters.
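If "exactly the same" means each router's forecast is flat across the 6 months, one possible explanation is a fitted model with no trend component. That is an assumption, since the post does not say which model was selected. The sketch below uses plain Python, not the Alteryx tools, to show how simple exponential smoothing always yields a flat forecast while Holt's linear method does not; all names and numbers are illustrative.

```python
def ses_forecast(series, alpha, horizon):
    """Simple exponential smoothing: the forecast is the last level."""
    level = series[0]
    for y in series[1:]:
        level = alpha * y + (1 - alpha) * level
    return [level] * horizon  # identical value for every future period

def holt_forecast(series, alpha, beta, horizon):
    """Holt's linear method: level plus a smoothed trend."""
    level, trend = series[0], series[1] - series[0]
    for y in series[1:]:
        previous = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - previous) + (1 - beta) * trend
    return [level + h * trend for h in range(1, horizon + 1)]

# Illustrative monthly utilisation figures with a steady upward trend.
usage = [10, 12, 14, 16, 18, 20]
flat = ses_forecast(usage, alpha=0.5, horizon=6)
trending = holt_forecast(usage, alpha=0.5, beta=0.5, horizon=6)
```

Here `flat` repeats one value six times, while `trending` keeps rising, which is why being able to set the model parameters per batch matters for the result.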

Happy to supply data if required!

Thanks in advance

Mark

- Category Macros
- Category Time Series
- Desktop Experience
