Alteryx Designer Desktop Knowledge Base

Definitive answers from Designer Desktop experts.

What Can't Be Cached?

08-30-2018 08:38 AM - edited 01-18-2023 10:11 AM

With the release of 2018.3 comes very exciting new functionality: workflow caching! Caching can save a lot of time during workflow development by saving data at “checkpoints” in the workflow. Each time you add a new step, Designer does not need to rerun the workflow in its entirety; it can pick up from your last cache point.

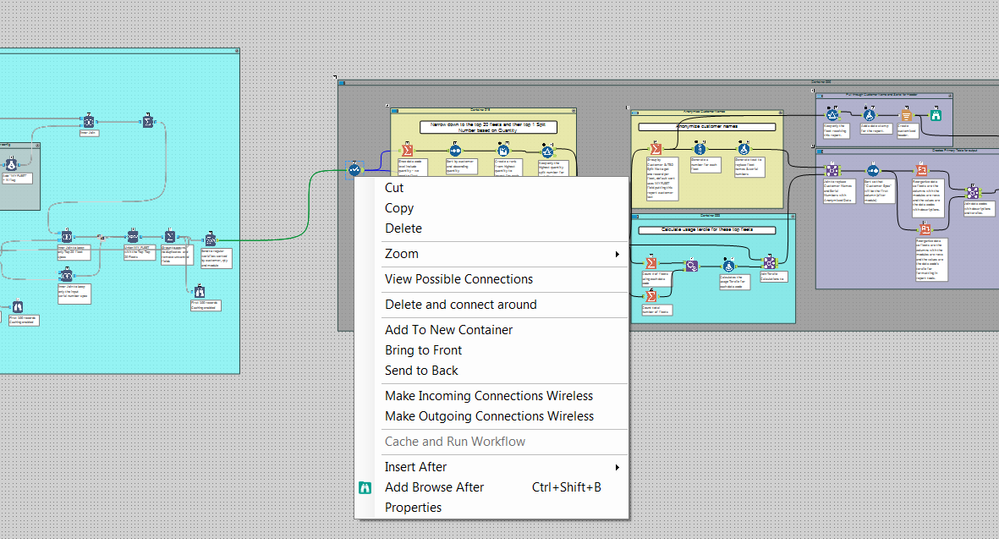

To create a cache, right-click the tool where you would like the data to be cached and select the Cache and Run Workflow option from the drop-down menu.

Note: If you have configured your workflow to enable the Disable All Tools that Write Output option, caching will still be available as an option, but no data will be cached. This is because caching writes a temporary output file to be referenced when the workflow is re-run from the caching point.

For versions below 2022.3, a few tools in Alteryx cannot be used as cache points because of two major conditions that prevent a tool from being eligible for caching. The first and most straightforward is having multiple outputs.

In versions before 2022.3, tools with multiple output anchors cannot be cached. This includes the Join Tool, many (but not all) of the Predictive Tools, the R Tool, the Python Tool, and a few others. Starting in 2022.3, tools with multiple output anchors (up to a maximum of 5 anchors) can be cached. This feature extends the caching functionality to over 50 additional tools. Please note that some tools (including those with more than 5 output anchors and In-DB tools) might still not be cacheable.

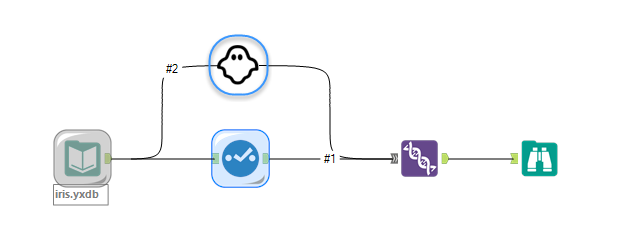
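
The output-anchor rule can be written down as a small check. This is only a sketch of the rule as described in this article, not Designer's actual logic: the function name `anchors_allow_caching` and the `(year, release)` version tuple are assumptions made for illustration, and other exclusions (such as In-DB tools) are deliberately not modeled.

```python
def anchors_allow_caching(output_anchors, version):
    """Apply the output-anchor rule described in the article.

    `version` is a (year, release) tuple such as (2022, 3).
    Hypothetical helper: it models only the anchor count, nothing else.
    """
    # Before 2022.3 only single-output tools qualify; from 2022.3 on,
    # tools with up to 5 output anchors qualify.
    limit = 5 if version >= (2022, 3) else 1
    return 1 <= output_anchors <= limit


if __name__ == "__main__":
    print(anchors_allow_caching(3, (2018, 3)))  # Join tool (L, J, R) before 2022.3
    print(anchors_allow_caching(3, (2022, 3)))  # same tool from 2022.3 on
```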

The second condition is a little trickier to understand conceptually. Any tool that is in a “circle” cannot be cached.

What is meant by a “circle” is the condition where the output of a tool is being combined with a different component of the same data stream, effectively creating a circle around the tool with the connection lines. Here are some examples of un-cachable circles:

The reason tools in this condition cannot be cached is similar to why tools with multiple output anchors are excluded. In a “circle situation”, the downstream tool requires data from both stream #1 and stream #2 in order to proceed. The only way to effectively cache in this situation would be to create an additional, invisible cache for the tools being joined in parallel.

To make sure only expected data is being cached and prevent unintentional overuse of resources, no ghost cache is created, which disqualifies tools in "circles" from being caching checkpoints.

The good news is that tools with single outputs downstream from tools with multiple outputs or “circles” can be cached without any issue!
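
One way to reason about the “circle” condition is to treat the workflow as a directed graph and ask whether any branch leaves the upstream side of a tool, bypasses it, and rejoins downstream. The sketch below is one interpretation of the description above, not Designer's implementation; `in_circle`, the edge-list representation, and the tool names are all hypothetical.

```python
from collections import defaultdict


def _reachable(graph, start):
    """Return every node reachable from `start` by following `graph` edges."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def in_circle(edges, tool):
    """True if data from upstream of `tool` bypasses it and rejoins downstream."""
    downstream, upstream = defaultdict(set), defaultdict(set)
    for src, dst in edges:
        downstream[src].add(dst)
        upstream[dst].add(src)

    ancestors = _reachable(upstream, tool)
    descendants = _reachable(downstream, tool)

    for ancestor in ancestors:
        for branch in downstream[ancestor] - ancestors - {tool}:
            # `branch` starts a path that leaves the upstream side without
            # passing through `tool`; it is a circle if it rejoins downstream.
            if branch in descendants or _reachable(downstream, branch) & descendants:
                return True
    return False


if __name__ == "__main__":
    edges = [
        ("Input", "Filter"),
        ("Filter", "Formula"),  # one Filter anchor feeds the Formula
        ("Filter", "Union"),    # the other anchor bypasses the Formula
        ("Formula", "Union"),
        ("Union", "Summarize"),
    ]
    print(in_circle(edges, "Formula"))    # True: the bypass rejoins at the Union
    print(in_circle(edges, "Summarize"))  # False: downstream of the circle
```

In this toy workflow the Formula tool sits inside a circle, while the Summarize tool downstream of the Union does not, which matches the point that single-output tools downstream of a circle remain cacheable.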

Now that you are well versed in the limitations of workflow caching, you should be able to develop new workflows and test and modify old workflows faster than ever before!

I opened a workflow and tried to cache at a union, but "Cache and Run Workflow" was grayed out. So I ran the workflow and went back to the same union, and it now allowed me to "Cache and Run Workflow". The problem is that it reran the workflow.

Is it truly necessary to run the workflow 2 times before you can cache anything?

Hi @_richardr,

I'm not able to replicate this behavior on my machine. Can you please try posting your workflow in a thread in Designer Discussions, and posting the link here? I would be happy to take a look at it.

Did the ability to cache the Dynamic Input tool get killed off?

Hi @Brad2 - You can still cache the Dynamic Input tool since it has a single output anchor. For instructions, check out "Just take the Cache and Run! Caching in 2018.3".

Are you thinking of the Cache Data option in the Input Data tool? We did indeed remove this option since the new feature replaces its functionality.

Cheers,

Alex

One consideration that might not be obvious is that if you configure your workflow to Disable All Tools that Write Output, caching (or clearing the cache) will still be available as an option, but no data will get cached (or cleared).

I can't seem to cache after In-DB tools. Is that a real limitation or am I doing something wrong? I'm using version 2018.3.

I can't attach my workflow, but here's a screenshot. You'll note that upstream of the Select tool (used to ensure a single-output tool), I have a bunch of In-DB tools doing the heavy lifting, then streaming only what I need for my report.

Hi @DawnR,

You should be able to use the cache functionality downstream of In-DB tools, as long as the standard tool you are trying to cache isn't in a loop and has only one output. Do you have any wireless connections around the tool you are attempting to cache, creating an un-cachable circle? Do other tools further downstream in your workflow give you the option to cache? If not, please post to the Designer Discussion Forum for additional help, or reach out to our support team.

Thanks,

Sydney

Hi @SydneyF,

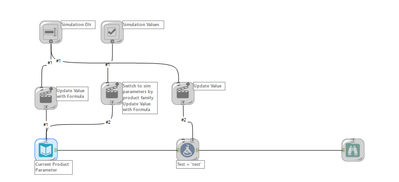

Ha - I found my problem: I do have a wireless connection from an upstream interface tool that connects in downstream of where I was trying to cache.

Thanks for the help!

Dawn

Thanks for the very well-written and easy-to-follow guide.

I like the old way better in some cases. I don't always get my joins and formulas correct on the first try. It was nice to be able to cache just the Inputs as I refined the workflow. Any chance we could have it as an option again?

Thanks,

Troy

All you need to do is right-click on the Input Data tool and then cache from there. It is essentially the same thing as the old way of checking the cache box in the former Input Data tool.

I have multiple inputs in my workflow. Is there a way to cache all of their data at once, or do I have to go through the right-click > "Cache and Run Workflow" option once for each input and wait for the whole workflow to run between each one?

@TroyXman @Brad2 I have good news. In the 2019.1 release you will be able to select multiple tools and cache them all at once.

Deciding when to release features is tricky business sometimes. We want to release the feature as soon as you'll find some value in it, but sometimes that means it's not as awesome as we want it to be. That was the case with caching. We thought caching was valuable, so we released it. We also knew that it would be even more awesome with the ability to cache multiple tools at once. The good news is that we are adding that functionality in 2019.1. I hope you enjoy it!

@RachelW Thank you for continuing to improve caching. It is certainly useful functionality, and my first experience of caching within Alteryx has been with this new functionality.

I only started using Alteryx on the release prior to the introduction of caching and it felt then like a very much needed piece of functionality so was glad to see it arrive in the next release.

However, a key problem for me is that the cache cannot be retained between sessions. That is a real drawback, especially if it means that the first hour of each day is spent calculating and retrieving the 2 million row data set again.

I understand the reasons from a data-protection point of view in terms of local caches being retained, but personally I'd rather have the option since not all data is sensitive.

Having tried to work with "cache-and-run" for a few months, there came a point where it felt unworkable for some workflows and so I finally tried out the Cache Dataset v2 macro. For my particular use case, that macro is a better solution. It can be configured to retain the cache between sessions. With the inclusion of a configurable file name for the specific cache, and the ability to run in write, read or bypass modes, it is also more flexible since I can create different local caches for parts of the workflow and switch those caches on or off as I am building/testing different aspects.

I believe there is a place for both approaches and both work well for their use cases. I shall now be using both mechanisms depending on circumstances:

- The built in "cache and run" solution requires no modification to the workflow, and is the quick-win solution, working well, in my view, for smaller datasets (i.e. less-time-consuming queries).

- The Cache Dataset v2 macro provides greater flexibility and can be configured to retain information across sessions, so it is better suited to expensive datasets (e.g. those that take an hour to load), at the cost of a (relatively straightforward) modification to the workflow, provided the information being retrieved and cached is not sensitive!
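
The write, read, and bypass modes described above can be pictured with a small Python sketch. This is not the Cache Dataset v2 macro's implementation; `cached_step`, the JSON file format, and the mode strings are all hypothetical, chosen only to show how a persistent, switchable cache with a configurable file name behaves.

```python
import json
from pathlib import Path


def cached_step(compute, cache_path, mode):
    """Run an expensive step under a write/read/bypass cache mode.

    Hypothetical illustration only:
    - "write":  run `compute()` and save the result to `cache_path`
    - "read":   skip `compute()` and load the saved result
    - "bypass": run `compute()` and ignore the cache entirely
    """
    path = Path(cache_path)
    if mode == "bypass":
        return compute()
    if mode == "write":
        data = compute()
        path.write_text(json.dumps(data))
        return data
    if mode == "read":
        return json.loads(path.read_text())
    raise ValueError(f"unknown mode: {mode!r}")
```

Because the cache lives in a named file rather than in session-scoped temporary storage, a later session can call the same step in "read" mode without repeating the expensive load.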

@bbtak Would setting up the workflow to run on the scheduler so the data's ready when you come in the next day be an option?

To @lepome's point, it is not obvious at all that the Cache and Run Workflow option doesn't work when 'Disable All Tools that Write Output' is selected (as it appears available and just does nothing). It took some digging for me to figure that out and it seems that it would be a really common option to select when testing workflows with the Cache. I'd highly suggest this be changed to make that more clear.

@Brad2 , thanks for the suggestion but it won't work for me as I am only using Designer and not the scheduler.

Now I've tried it out, the cache macro works fine for me in these circumstances.

Please post that suggestion to the Ideas page, if it's not there already. If it has been suggested already, Star the post, and add your use case in the comments. Our product team pays particular attention to ideas submitted by users, especially when many users agree that a feature would add value.

Thanks @lepome . I've just done that. https://community.alteryx.com/t5/Alteryx-Designer-Ideas/Cache-option-doesn-t-function-when-Disable-A...

This is another pain point & it seems 2019.3 is creating many of these.

I added the select - caching doesn't work.

I switch off writing files - caching doesn't work.

Far from improving things, it has made it worse.

What Select are you referring to? Please elaborate. [We know that when you switch off writing files, caching doesn't work (see my post above).]

I work out of the office regularly. Accessing the data network is about 10-20 times slower than when in the office, so I do a one-time data pull to grab the data.

I then need to work on iterating the workflow, but need the cache to work so it runs quickly.

I tick the disable file output option as I don't need to save the outputs (but don't want to remove the connections). Some of my workflows have numerous outputs of different formats. As I'm also iterating the workflow, I don't want to save the outputs as they may overwrite existing files with incorrect data.

Caching no longer works if I do this.

If there is an easy work-around then I'm all ears.

@rmwillis1973 I'd consider just putting your outputs in a container and then disabling the container. That will allow you to still use the cache. Or, as an alternative to the cache, save your input to a yxdb file, use that as the input while developing, and then switch it back when you're done (i.e., what we used to do before the cache was available). Hopefully they'll change the function in the future so that the 'Disable All Tools that Write Output' option doesn't apply to the cache.

-Kevin-

Thank you for those ideas. The tool container looks like the only viable option for me.

Looking forward to when this issue is fixed.

Thank you for providing feedback on caching. It is actually expected behavior for caching to be disabled when "Disable All Tools that Write Output" is enabled. This is because caching writes temporary yxdb files. Disabling outputs disables caching.

With that said, we are reviewing the experience because this behavior is not communicated clearly to our users. We are looking to enable caching with the option checked. Again, your feedback is very much appreciated!

Is there a limit on how much data can be cached? I have a workflow that usually takes about 90 minutes to run. I've been caching the part that makes it take so long but I don't seem to have my full data set after caching.

I don't believe there is a limit on the cache feature of Alteryx. The main limiting factor would be the local disk space where Alteryx writes those temporary files.

I would check your machine to see what kind of temp storage is available.

I hope this helps!

TrevorS
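
A quick way to check free temp storage is a few lines of Python. This sketch assumes the cache files land on the same drive as the operating system's default temp directory; Designer's temporary directory may be configured to a different location, in which case pass that path explicitly. The helper name `free_temp_space_gib` is hypothetical.

```python
import shutil
import tempfile


def free_temp_space_gib(path=None):
    """Return free space, in GiB, on the drive that holds `path`.

    Defaults to the OS temp directory, which is an assumption about
    where the temporary cache files are written.
    """
    target = path or tempfile.gettempdir()
    return shutil.disk_usage(target).free / 1024**3


if __name__ == "__main__":
    print(f"{free_temp_space_gib():.1f} GiB free in temp storage")
```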

Hello everyone,

I was wondering if I can cache something that is already in a container.

Thanks a lot

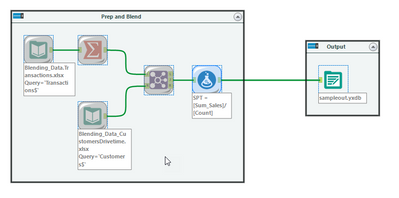

Hi @emorits002, yes you can - with the same rules that @SydneyF mentions above. Here's a quick example of caching within a container:

Hi,

I recently upgraded my Alteryx Designer to Version 2020.4 and have noticed some changes in the caching behavior to input tools linked to interface tools.

In version 2019.4 regardless of any "loops" caused by the interface tool, I always had the ability to cache the input data set.

Since the upgrade, Designer either can no longer cache the input tool or simply caches the whole flow, which isn't of much use.

Was this an intended change or is there a possibility of getting back the previous behavior?
