Alteryx Designer Desktop Ideas



Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by showing the image dimension constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
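As a workaround until the tool offers this, the last two points can be sketched in plain Python. This is only a minimal sketch under my own assumptions: `balance_by_label` and `gray_to_rgb` are hypothetical helper names, the file names are made up, and images are represented as nested lists rather than whatever format the tool actually reads.

```python
import random
from collections import defaultdict


def balance_by_label(samples, seed=0):
    """Downsample so that every label keeps the same number of items.

    `samples` is a list of (item, label) pairs. The target count is the
    size of the smallest class.
    """
    by_label = defaultdict(list)
    for item, label in samples:
        by_label[label].append(item)
    target = min(len(items) for items in by_label.values())
    rng = random.Random(seed)  # fixed seed so the split is repeatable
    balanced = []
    for label, items in by_label.items():
        for item in rng.sample(items, target):
            balanced.append((item, label))
    return balanced


def gray_to_rgb(image):
    """Turn a 2-D grid of grayscale values into a grid of (r, g, b) tuples."""
    return [[(p, p, p) for p in row] for row in image]


# Hypothetical data: 5 "cat" images and 2 "dog" images.
samples = [(f"img_{i}.png", "cat") for i in range(5)]
samples += [(f"img_{i}.png", "dog") for i in range(5, 7)]
balanced = balance_by_label(samples)
rgb = gray_to_rgb([[0, 255], [128, 64]])
```

Downsampling to the smallest class is only one way to balance; oversampling the rare labels would be an alternative if images are scarce.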

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help a greater number of people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


Hi there,

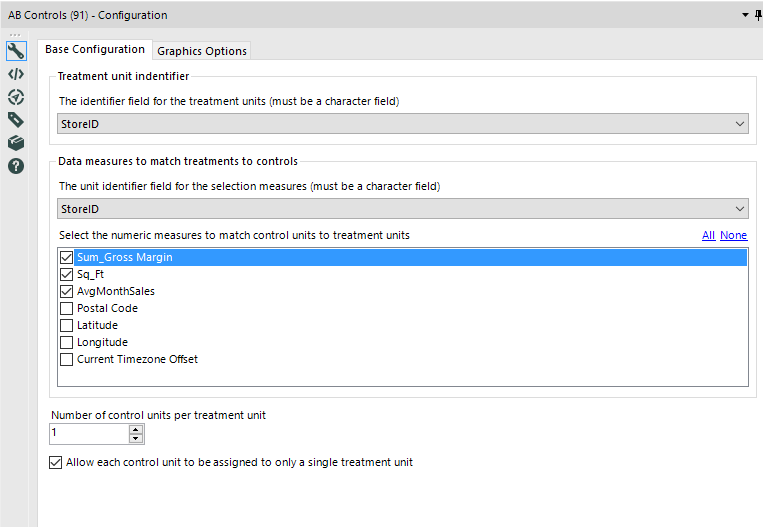

In working through the Udacity assignment on AB testing (again, thank you @PatrickN ), it seems that there's no obvious way to use location directly as a distance factor for selecting control units.

Additionally - the identifier field for a unit has to be a character field.

It seems that there would be a lot of value in making 2 changes to this tool:

a) allowing for integer unit identifiers (like store ID - not sure why a character is required)

b) using an actual point (location) to determine closest potential control unit.

- This is particularly important in the US where two towns may be very close to each other although they are in different states

- So the actual distance on the earth's surface would be a better indicator of closeness between test & control units than state or county.
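To illustrate point (b), here is a minimal sketch of picking the closest control unit by great-circle distance. The haversine formula is standard, but everything else is my own assumption: the function names, the dictionary layout, the integer store IDs and the approximate city coordinates are only for illustration and are not how the tool works today.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearest_control(test_unit, candidates):
    """Return the candidate control unit closest to the test unit."""
    return min(
        candidates,
        key=lambda c: haversine_km(test_unit["lat"], test_unit["lon"],
                                   c["lat"], c["lon"]),
    )


# Hypothetical stores with integer IDs and approximate coordinates.
test_store = {"id": 1, "state": "MO", "lat": 39.0997, "lon": -94.5786}  # Kansas City, MO
controls = [
    {"id": 2, "state": "KS", "lat": 39.1155, "lon": -94.6268},  # Kansas City, KS
    {"id": 3, "state": "MO", "lat": 38.6270, "lon": -90.1994},  # St. Louis, MO
]
closest = nearest_control(test_store, controls)
```

Here the closest candidate is the store across the state line, which matching on state alone would never select.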

- New Tool

Hi there,

Adam ( @AdamR_AYX ), Mark ( @MarqueeCrew ) and many others have done a great job in putting together super helpful add-in macros in the CReW pack - and James ( @jdunkerley79 ) has really done an incredible job of filling in some gaps in a very useful way in the formula tools.

Would it be possible to include a subset of these in the core product as part of the next release?

I'm thinking of (but others will chime in here to vote for their favourite):

- Unique only tool (CReW)

- Field Sort (CReW)

- Wildcard XLSX input (CReW) - this would eliminate a whole category of user queries on the discussion boards

- Runner (CReW) - although this may have issues with licensing, since many people don't have command line permission, Alteryx really does need the ability to do chained dependency flows in a smoother way.

- Date Utils (JDunkerley) - all of James's Date utils - again, these would immediately solve many of the support questions asked on the discussion forum

I think that these would really add richness & functionality to the core product, and at the same time get ahead of many of the more common queries raised by users. I guess the only question is whether the authors would have any objection?

Thank you

Sean

- General

- New Tool

It would be nice to have a wait/pause tool that pauses the workflow for a given amount of time before proceeding to the next step (e.g. wait 300 seconds).

I have a workflow that uses the Run Command tool to run a batch file that kicks off a terminal emulator, which cycles through steps that ultimately result in an exported text file that I use in an Alteryx workflow for further processing. The generation of the text file can take a few minutes. A delay could be placed in the batch file or terminal emulator script, but I think having a tool in the Alteryx workflow might be useful for other processes as well.
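A fixed pause is just `time.sleep(300)`, but for the exported-file case a wait that ends as soon as the file appears may be more useful. Below is a minimal sketch of that idea in Python; `wait_for_file` and its parameters are hypothetical names of my own, not an existing Alteryx feature.

```python
import os
import time


def wait_for_file(path, timeout_s=300.0, poll_s=1.0):
    """Block until `path` exists or `timeout_s` elapses.

    Returns True if the file was found, False if the wait timed out.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        if os.path.exists(path):
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)  # pause between checks instead of busy-waiting
```

Note that a file can exist before the exporting program has finished writing it, so a real process might also need to check that the file size has stopped changing.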

- New Tool

It seems that version 10.6 (still in beta) will have an easy-to-use linear programming tool... We'll be able to allocate assets optimally, optimize our marketing decisions by feeding in the predictions from the predictive tools, etc.

But what happens when it comes to non-linear models? The idea is to add an evolutionary optimization capability to Alteryx Designer as well...

I've used a similar tool in Excel called Evolver, which was very useful: http://www.palisade.com/evolver/ It would be awesome to see that in the coming versions...

Note that no single optimisation method rules them all, and evolutionary algorithms are probably the slowest.

But I believe it would enable us to optimize the hyperparameters of our models and get much better results...
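To make the idea concrete, here is a minimal sketch of an evolutionary optimizer in Python. It is a simple elitist evolution strategy of my own choosing, not how Evolver or any Alteryx tool works; the function name, the parameters and the toy objective are all assumptions for illustration.

```python
import random


def evolve(objective, bounds, pop_size=20, elite=5, generations=300,
           sigma=1.0, decay=0.97, seed=0):
    """Minimize `objective` over box `bounds` with an elitist evolution strategy."""
    rng = random.Random(seed)
    # Start from random points inside the bounds.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=objective)
        parents = pop[:elite]  # the best candidates survive unchanged
        children = []
        while len(parents) + len(children) < pop_size:
            parent = rng.choice(parents)
            # Mutate with Gaussian noise, clamped to the bounds.
            child = [min(hi, max(lo, x + rng.gauss(0.0, sigma)))
                     for x, (lo, hi) in zip(parent, bounds)]
            children.append(child)
        pop = parents + children
        sigma *= decay  # shrink the mutation step over time
    return min(pop, key=objective)


def toy_objective(point):
    """A non-linear toy function with its minimum at (3, -1)."""
    x, y = point
    return (x - 3) ** 2 + (y + 1) ** 2


best = evolve(toy_objective, bounds=[(-10, 10), (-10, 10)])
```

The same loop works for any objective that can be scored, such as a model's validation error as a function of its hyperparameters, which is why it is slow but very general.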

- New Tool

I find that Batch and/or Iterative macros, when used purely for simple iteration, carry a fair bit of overhead. If we could extract the fundamental qualities of a loop and put them into an "Iteration Tool," it could become a well-used tool in the palette.

Implementation Ideas:

- Assume that the iteration is over the rows of a given input data set.

- For the "body of the loop" allow multiple expressions, each of which iteratively assigns the i'th position of a given variable (which could be either existing or derived, just like the Formula tool and its expressions).

- Allow referencing of the loop index variable from within expressions

An example problem this could solve is from: http://community.alteryx.com/t5/Data-Preparation-Blending/Looping-and-dynamically-changing-output/m-.... As discussed therein, the concept of "row dependent iteration" makes this difficult to solve with standard tools.

If the input data set from that example were sent into the proposed Iteration Tool... it would automatically loop over the dataset rows; and three expressions could be supplied in the Tool configuration to solve the problem:

VarE: IF [i] > 1 THEN VarF[i-1] + VarG[i-1] ELSE VarE ENDIF

VarF: VarA + VarB

VarG: VarC + VarD

For implementation purposes, this would be logically equivalent to:

VarE[i]: IF [i] > 1 THEN VarF[i-1] + VarG[i-1] ELSE VarE[i] ENDIF

VarF[i]: VarA[i] + VarB[i]

VarG[i]: VarC[i] + VarD[i]

(so, basically, the i'th row is assumed unless otherwise provided in the expression syntax).
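For anyone who wants to check the intended semantics, the three expressions can be sketched in Python as a plain loop over rows. This is only my reading of the proposal: the function name and the sample values are made up, and rows are modelled as dictionaries.

```python
def iterate_rows(rows):
    """Apply the three expressions to each row, in order."""
    out = []
    for i, row in enumerate(rows, start=1):  # i is the 1-based loop index
        new = dict(row)
        if i > 1:
            prev = out[-1]  # row i-1, with VarF and VarG already computed
            new["VarE"] = prev["VarF"] + prev["VarG"]
        # For i == 1, VarE keeps its input value.
        new["VarF"] = new["VarA"] + new["VarB"]
        new["VarG"] = new["VarC"] + new["VarD"]
        out.append(new)
    return out


# Hypothetical input: two rows with made-up values.
rows = [
    {"VarA": 1, "VarB": 2, "VarC": 3, "VarD": 4, "VarE": 100},
    {"VarA": 5, "VarB": 6, "VarC": 7, "VarD": 8, "VarE": 0},
]
result = iterate_rows(rows)
```

The key point is that row 2's VarE depends on values computed for row 1 during the same pass, which is the "row dependent iteration" that is hard to express with standard tools.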

I hope this isn't too outlandish - I've tried to think through how this could be accomplished (1) as a tool that is not too fiendishly difficult for Alteryx to implement and (2) which would also be easy for us, the end users, to utilize. Thanks!

- New Tool

Hi, I'm new to Alteryx; we've had it for just about a month. We started publishing our workflows to Tableau and it's working great.

One issue I foresee:

User credentials to the Tableau server are updated occasionally. When this occurs, I will have to update the credentials manually in each workflow.

The number of workflows we are publishing is growing. Is there a way to automate this process?

- New Tool

- Tool Improvement

Assuming some source control or versioning is in place, a formal compare tool would be a nice addition. This would be useful for determining what is different between two versions of a workflow, and that knowledge is very useful when modifying a production process: when formally moving a new (modified) process into production, part of the checks and balances would be to run a formal comparison against the workflow being replaced, and ensure that all differences are accounted for.

This sort of audit is notoriously difficult when the differences are buried deep in the configuration settings of various tools within Alteryx. I do see that the .yxmd files are XML based, so perhaps we could create our own compare tool based thereon, but it would be better (more trustworthy) to have one formally provided by Alteryx. Thanks!
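As a rough idea of what a home-made comparison could look like, here is a minimal Python sketch that diffs per-tool configuration between two workflow XML documents. The element and attribute names used here (`Node`, `ToolID`, `Properties`) and the sample documents are assumptions about the file layout, so treat this as a starting point rather than a trustworthy audit tool.

```python
import xml.etree.ElementTree as ET


def tool_configs(xml_text):
    """Map each tool ID to a canonical string of its <Properties> element."""
    root = ET.fromstring(xml_text)
    configs = {}
    for node in root.iter("Node"):  # also finds nodes nested in containers
        props = node.find("Properties")
        if props is None:
            configs[node.get("ToolID")] = ""
            continue
        raw = ET.tostring(props, encoding="unicode")
        # Canonical form ignores attribute order and surrounding whitespace.
        configs[node.get("ToolID")] = ET.canonicalize(raw, strip_text=True)
    return configs


def compare_workflows(old_xml, new_xml):
    """Report tool IDs that were added, removed, or reconfigured."""
    old, new = tool_configs(old_xml), tool_configs(new_xml)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(t for t in old.keys() & new.keys() if old[t] != new[t]),
    }


# Hypothetical, heavily simplified workflow documents.
OLD = """<AlteryxDocument><Nodes>
<Node ToolID="1"><Properties><Configuration><File>in.csv</File></Configuration></Properties></Node>
<Node ToolID="2"><Properties><Configuration><Expression>[A] &gt; 1</Expression></Configuration></Properties></Node>
</Nodes></AlteryxDocument>"""
NEW = """<AlteryxDocument><Nodes>
<Node ToolID="1"><Properties><Configuration><File>in.csv</File></Configuration></Properties></Node>
<Node ToolID="2"><Properties><Configuration><Expression>[A] &gt; 5</Expression></Configuration></Properties></Node>
<Node ToolID="3"><Properties><Configuration /></Properties></Node>
</Nodes></AlteryxDocument>"""
diff = compare_workflows(OLD, NEW)
```

Comparing only the configuration of each tool keeps layout-only changes, such as moving a tool on the canvas, out of the report.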

- General

- New Tool

I have many aggregations in the same workflow, each of which produces one or two different columns; the total could be xx different columns. Wouldn't it be nice to have a multiple-append tool that could take connections from many other tools? At the moment I have to use many separate Append Fields tools and then one Transpose, or multiple Transposes and a Union. Either way you have to attach something to each tool.

- New Tool

Visio is our organization's most common method of communicating business processes and workflows. Being able to export an Alteryx workflow to Visio would help us communicate the tool's functionality to process owners.

- General

- New Tool

Create a tool that allows users to create calculated fields for Tableau and output them along with a .tde, so they are available when opening the .tde.

There are several situations where precalculated, materialized data will visualize inaccurately in Tableau and calculated fields need to be used.

- 1:* measures - Fixed LOD expressions for selected measures

- Count Distinct

- Percentages and Ratios
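A small Python sketch shows why the last two cases cannot simply be precalculated and then re-aggregated. The data and field names below are made up for illustration.

```python
from collections import defaultdict

# Hypothetical detail rows: (region, customer, sales, profit).
rows = [
    ("East", "alice", 100.0, 50.0),
    ("East", "bob", 100.0, 50.0),
    ("West", "bob", 800.0, 80.0),
]

customers = defaultdict(set)
sales = defaultdict(float)
profit = defaultdict(float)
for region, customer, s, p in rows:
    customers[region].add(customer)
    sales[region] += s
    profit[region] += p

# Count distinct: summing per-region counts double-counts "bob".
summed_distinct = sum(len(c) for c in customers.values())
true_distinct = len({customer for _, customer, _, _ in rows})

# Ratios: averaging per-region margins ignores how large each region is.
avg_of_ratios = sum(profit[r] / sales[r] for r in sales) / len(sales)
true_ratio = sum(profit.values()) / sum(sales.values())
```

In this example the summed distinct count is 3 while the true count is 2, and the averaged margin is well above the true overall margin, so the calculation has to happen at the level of detail being viewed.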

- General

- New Tool

I constantly find myself using pre and post SQL commands in the Output tool to run SQL when I don't actually have any data to output.

One example is when I load data into S3 and want to load it into Redshift. I have SQL code to run but no data to output, so I end up writing a dummy row into a temp table.

So can we have a SQL tool that simply acts the same as a Pre-SQL command, without the associated data output? Once the command has run we should be able to continue the workflow, so the tool should have an optional input and output, like the Run Command tool.
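The behaviour I have in mind can be sketched in a few lines of Python. This is only an illustration under my own assumptions: `run_sql` is a hypothetical name, and an in-memory SQLite database stands in for Redshift, so the statements are ordinary SQL rather than a real S3 load.

```python
import sqlite3


def run_sql(conn, statements):
    """Execute each statement in order and commit; no data rows flow in or out."""
    cur = conn.cursor()
    for stmt in statements:
        cur.execute(stmt)
    conn.commit()
    return len(statements)  # something small to pass downstream


conn = sqlite3.connect(":memory:")  # stand-in for the real database
executed = run_sql(conn, [
    "CREATE TABLE staging (id INTEGER, amount REAL)",
    "INSERT INTO staging VALUES (1, 9.5), (2, 3.0)",
    "CREATE TABLE target AS SELECT * FROM staging WHERE amount > 5",
])
loaded = conn.execute("SELECT COUNT(*) FROM target").fetchone()[0]
```

Returning only a count of executed statements mirrors the request: the step exists for its side effects, and the workflow continues once it has finished.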

- Feature Request

- New Tool

- New Tool
