Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by listing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It certainly has room for improvement, but it is very simple to use, and I sincerely think that it will help a greater number of people understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))
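Two of the points above (an equal number of images per label, and grayscale images such as MNIST) can be approximated in a preprocessing step outside the tool. The following is a minimal Python sketch, not a feature of the Image Recognition Tool: the helper names `balance_by_label` and `gray_to_rgb` are hypothetical, images are assumed to be plain nested lists of pixel values, and balancing is done by downsampling every label to the size of the smallest one.

```python
import random
from collections import defaultdict


def balance_by_label(samples, seed=0):
    """Downsample so that every label keeps the same number of items.

    `samples` is a list of (item, label) pairs. The per-label count is
    the size of the smallest class.
    """
    by_label = defaultdict(list)
    for item, label in samples:
        by_label[label].append(item)
    n = min(len(items) for items in by_label.values())
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    balanced = []
    for label, items in by_label.items():
        for item in rng.sample(items, n):
            balanced.append((item, label))
    return balanced


def gray_to_rgb(image):
    """Turn a 2-D grayscale image (rows of ints) into rows of [r, g, b]."""
    return [[[v, v, v] for v in row] for row in image]


# Illustrative file names and pixel values only.
samples = [
    ("a.png", "cat"), ("b.png", "cat"), ("c.png", "cat"),
    ("d.png", "dog"), ("e.png", "dog"),
]
balanced = balance_by_label(samples)
rgb = gray_to_rgb([[0, 255], [128, 64]])
```

Repeating the gray value in all three channels keeps the image visually identical while satisfying an RGB-only input check.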


I'm adding a 'Dynamic Input' tool to a macro that will dynamically build the connection string based on user inputs. We intend to distribute this macro as a 'Connector' to our main database system.

However, this tool attempts to connect to the database after 'fake' credentials are supplied in the tool, returning error messages that can't be turned off.

In situations like this, I think you'd want the tool to refrain from attempting connections. Can we add an option to turn off the checking of credentials? I assume that others who build connection strings at runtime would appreciate this as well.

As a corollary, for runtime connection strings, having to define a 'fake' connection in the Dynamic Input tool seems redundant, given we have already set the 'Change Entire File Path' option. There are some settings in the data connection window that are nice to be able to set at design time (e.g. caching, uncommitted read, etc.), but the main purpose of that window, providing the connection string, is redundant given that we intend to replace it with the correct string at runtime. Could we make the data connection string optional?

To combine the above points, perhaps if the connection string is left blank, the tool does not attempt to connect to the connection string at runtime.

- Category Apps

- Category Macros

- Desktop Experience

- Tool Improvement

When I first started using Alteryx, I did not use macros or the Runtime tab much at all. Now I use both a lot, but I can't use them together.

When working in a macro, there is no Runtime tab, so while developing and testing a macro you can't take advantage of any of its handy features. I assume a macro inherits the settings of the workflow that calls it (I can't find anything in the Community or the help to confirm that), but this does not help while developing and testing.

- Category Macros

- Desktop Experience

There is currently no way to export interactive output from the network graph tool. I would like to be able to export a png of the static network graph image, a pdf of the report, and a complete html of the whole (which means including the JSON and vis.js files necessary for creating the report).

- Category Interface

- Category Macros

- Category Reporting

- Desktop Experience

Dynamic macros that fetch the current version at every run time vs storing a static copy of the macro with the workflow at publish time are challenging to pull off using shared drives.

This suggestion is to store dynamic macros in the gallery and secure their use with collections.

- Category Macros

- Enhancement

Thinking of something along the lines of the NuGet package manager: https://www.nuget.org/

- Category Macros

- Desktop Experience

1. A user repository for macros in the Users folder, e.g. My Documents\My Alteryx Macros

This would make it easier to install macros without needing any administrator rights

2. A right-click operation on a yxmc file (or a menu operation in Alteryx) that installs the macro, i.e. moves it into the folder above.

This would make it very simple to show new users how to install any macro you send them

Both these ideas will make it easier for partners and the Alteryx user community to share macros.

- Category Macros

- Desktop Experience

Hi,

When I right-click on an Input tool, I can select "Convert To Macro Input" from the context menu. I would like similar functionality when right-clicking a Browse tool: "Convert To Macro Output".

- Category Interface

- Category Macros

- Desktop Experience

The "Detour" tool is incredibly useful in Macros. However, it really isn't much use in the normal workflow area.

We need a "Detour" tool suitable for normal Workflow (not from within a Macro) which would greatly aid in workflow controls and logic.

- Category Macros

- Desktop Experience


Idea:

Functionality added to the Impute Values tool for multiple imputation and maximum likelihood imputation of fields with values missing at random would be very useful.

Rationale:

Missing data are a problem, and advanced techniques are complicated. One great idea in statistics is multiple imputation: filling the gaps in the data not with the average, median, mode or user-defined static values, but with plausible values that take the other fields into account.

SAS has the PROC MI procedure; here is a page detailing its usage with examples: http://www.ats.ucla.edu/stat/sas/seminars/missing_data/mi_new_1.htm

Also there is PROC CALIS for maximum likelihood here...

A similar tool exists in SPSS as well: http://www.appliedmissingdata.com/spss-multiple-imputation.pdf

Best
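To make the idea of "plausible values considering other fields" concrete, here is a minimal Python sketch of the general technique. It is a simplification and not how PROC MI, SPSS or Alteryx work internally: it uses stochastic regression imputation on a single predictor, repeated `m` times, and then pools the mean with Rubin's rules. A full multiple imputation would also draw the regression parameters themselves from their posterior. All function names and data values are made up for illustration.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x; returns (a, b, residual_sd)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    a = my - b * mx
    residuals = [y - (a + b * x) for x, y in zip(xs, ys)]
    sd = (sum(r * r for r in residuals) / (len(xs) - 2)) ** 0.5
    return a, b, sd


def multiply_impute(rows, m=5, seed=0):
    """Return m completed copies of rows, a list of (x, y) where y may be None."""
    rng = random.Random(seed)
    observed = [(x, y) for x, y in rows if y is not None]
    a, b, sd = fit_line([x for x, _ in observed], [y for _, y in observed])
    completed = []
    for _ in range(m):
        # Each missing y gets the regression prediction plus random noise,
        # so the m copies differ and reflect the imputation uncertainty.
        completed.append([
            (x, y if y is not None else a + b * x + rng.gauss(0, sd))
            for x, y in rows
        ])
    return completed


def pool_mean(completed):
    """Rubin's rules for the mean of y: (pooled estimate, total variance)."""
    m = len(completed)
    estimates, variances = [], []
    for data in completed:
        ys = [y for _, y in data]
        estimates.append(statistics.fmean(ys))
        variances.append(statistics.variance(ys) / len(ys))
    within = statistics.fmean(variances)
    between = statistics.variance(estimates) if m > 1 else 0.0
    return statistics.fmean(estimates), within + (1 + 1 / m) * between


# Illustrative data: y is missing for x = 3 and x = 6.
rows = [(1, 2.1), (2, 3.9), (3, None), (4, 8.2), (5, 9.8), (6, None), (7, 14.1)]
completed = multiply_impute(rows, m=5)
pooled_mean, total_variance = pool_mean(completed)
```

The between-imputation term in the pooled variance is what a single fill with the mean or median cannot provide: it carries the uncertainty about the missing values into the final estimate.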

- Category Macros

- Category Predictive

- Desktop Experience

Currently there is no option to edit an existing macro search path under Options -> User Settings -> Macros. The only options are Add and Delete. Ideally we need an Edit option as well.

If a search path changes from one location to another, the existing category has to be deleted and created again with the correct path.

- Category Interface

- Category Macros

- Desktop Experience

There is an irony in asking for what is essentially the Alteryx version of Excel's 'Formula Wizard'.

As great as the guides have been in the community, the Batch Macro is one of the most difficult to repeat and explain.

It would be great for users to have a prompt that recognises a Directory input of Excel files and, at the point of adding a macro, offers a series of prompts at each stage to help build out the desired result (whether that is returning all sheets or specific sheets).

It would further highlight one of the great features and key enablers of Alteryx.

- Category Macros

- Desktop Experience

"Enable Performance Profiling" is a great feature for investigating which tools within a workflow take up most of the time. It works well during development.

It would be ideal to have this feature extended for the following use cases as well:

- Workflows scheduled via the scheduler on the server

- Performance profiling of macros and apps when executed from the workstation as well as from the scheduler/gallery

Regards,

Sandeep.

- Category Apps

- Category Macros

- Desktop Experience

When building macros, we have the ability to put test data into the macro inputs so that we can run them and confirm that the output is what we expected. This is very helpful (and it also sets the type on the inputs).

However, for batch macros, there seems to be no way to provide test inputs for the Control Parameter. So if I'm testing a batch macro that will take multiple dates as control params to run the process 3 times, then there's no way for me to test this during design / build without putting a test-macro around this (which then gets into the fact that I can't inspect what's going on without doing some funkiness)

Could we add the same capability to the Control Parameter as we have on the Macro Input to be able to specify sample input data?

- Category Macros

- Desktop Experience

Think of a pivot table on steroids. In my industry, "strats" are commonly used to summarize pools of investment assets. You may have several commonly used columns that are a mix of sums and weighted averages, capable of having filtering applied to each column. So you may see an output like this:

| Loan Status | Total Balance | % of Balance | % of Balance (in Southwest Region) | Loan to Value Ratio (WA) | Curr Rate (WA) | FICO (WA) | Mths Delinquent (WA) |
|---|---|---|---|---|---|---|---|
| Current | $9,000,000 | 90 | 80 | 85 | 4.5 | 720 | 0 |
| Delinquent | $1,000,000 | 10 | 100 | 95 | 5.5 | 620 | 4 |
| Total | $10,000,000 | 100 | 90 | 86 | 4.6 | 710 | 0.4 |

Right now, creating the several sums and weighted averages is just too inefficient: I have to create all the different modules, link them together and run them through a Transpose and/or Cross Tab. And to create a summary report where I may have 15 different categories besides Loan Status, I'd have to replicate that process with those modules 15 times.

Currently, I have a different piece of software where I can simply write out sum and WA calcs for each column, save that column list (with accompanying calcs) and then simply plug in a new leftmost category for each piece of data I'm looking at. And I get the Total row auto-calculated as well.
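The "saved column list plus swappable leftmost category" described above can be sketched in a few lines of Python. This is only an illustration of the calculation, not an Alteryx feature or the other software mentioned: the function names, field names and loan records are invented, and every weighted average is weighted by balance.

```python
from collections import defaultdict

# Made-up loan-level records, for illustration only.
loans = [
    {"status": "Current", "balance": 600_000, "ltv": 80, "rate": 4.0, "fico": 740},
    {"status": "Current", "balance": 400_000, "ltv": 90, "rate": 5.0, "fico": 700},
    {"status": "Delinquent", "balance": 250_000, "ltv": 95, "rate": 5.5, "fico": 620},
]

# The reusable "column list": fields reported as balance-weighted averages.
WA_FIELDS = ["ltv", "rate", "fico"]


def weighted_avg(rows, field):
    """Balance-weighted average of `field` over `rows`."""
    total = sum(r["balance"] for r in rows)
    return sum(r[field] * r["balance"] for r in rows) / total


def strat(rows, category):
    """One output line per value of `category`, plus an auto-calculated Total."""
    groups = defaultdict(list)
    for r in rows:
        groups[r[category]].append(r)
    grand_total = sum(r["balance"] for r in rows)
    table = []
    for name, members in list(groups.items()) + [("Total", rows)]:
        balance = sum(r["balance"] for r in members)
        line = {
            category: name,
            "balance": balance,
            "pct_balance": 100 * balance / grand_total,
        }
        for field in WA_FIELDS:
            line[field + "_wa"] = weighted_avg(members, field)
        table.append(line)
    return table


report = strat(loans, "status")
```

Because the Total line is computed from the underlying records rather than from the group lines, its weighted averages stay correct. Running the same column list against another category is a single call with a different field name, provided the records carry that field.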

- Category Apps

- Category Data Investigation

- Category In Database

- Category Macros

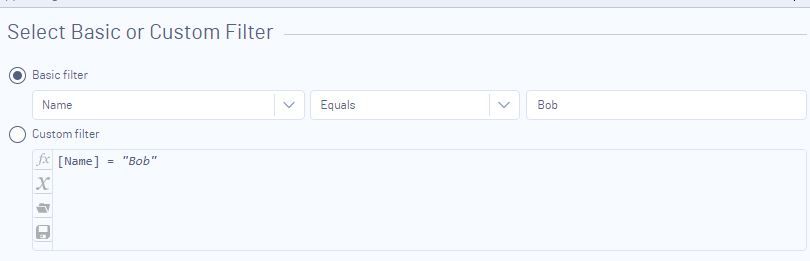

Never noticed this, because I always use the custom filter option, not the basic. But I had a user come to me asking why his app wasn't updating his filter properly.

He configured the filter tool thusly (dummy data):

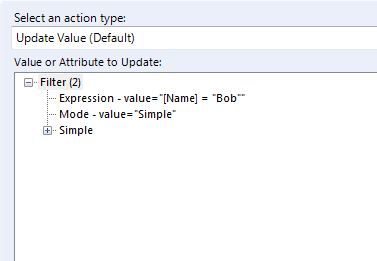

And here is what the action tool looks like when you connect it to the filter tool:

So he simply highlighted the "Bob" line and picked to update "Bob".

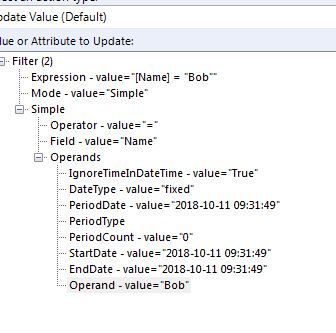

However, since he used a basic filter, and not a custom one, this is how he should've configured the action tool:

I realize that "well, it's spelled out for you - there's an expression section & a simple section in the action tool". But for beginners or even non-beginners, it might not be obvious.

It would be nice if, when you connect the action tool, it only displayed the appropriate option (either custom or simple, but not both).

- Category Apps

- Category Macros

- Desktop Experience


Problem : when I develop a macro, I often change the configuration in the "Template input" part of the "Macro Input" tool from "Text Input" to "File Input".

Doing that loses the previous data : moving from "Text Input" to "File Input" removes the data entered and moving from "File Input" to "Text Input" removes the pointer to the file.

Which is annoying.

Solution : keep the data or file pointer in the "Template Input" so that it doesn't disappear when changing configuration choice.

- Category Macros

- Desktop Experience

Hello gurus -

I think it would be an important safety valve if, at application start-up, duplicate macros found in the 'classpath' (i.e., https://help.alteryx.com/current/server/install-custom-tools) generated a warning to the user. I know that if you have the same macro in the same folder you can get a warning at load time, but it doesn't seem to propagate out to different tiers on the macro loading path. As such, developers can find themselves with difficult-to-diagnose behavior in which the tool seems to be confused as to which macro metadata to use. I also imagine that a developer could end up not using the version of the macro they were expecting, unless they go to the workflow tab for every custom macro on their canvas.

Thank you for attending my TED talk on the upsides of providing warnings at startup of duplicate macros in different folder locations.
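Until such a warning exists, a similar check can be run by hand. The sketch below is an assumption-laden illustration, not Alteryx behavior: it treats two macros as duplicates when their file names match case-insensitively, it only looks at `.yxmc` files, and the function name `find_duplicate_macros` and the folder list passed to it are hypothetical.

```python
import os
from collections import defaultdict


def find_duplicate_macros(search_paths, extension=".yxmc"):
    """Map each macro file name found in more than one place to its paths.

    Names are compared case-insensitively. Real paths are stored in a set
    so that overlapping search folders do not report a file against itself.
    """
    found = defaultdict(set)
    for root in search_paths:
        for folder, _dirs, files in os.walk(root):
            for name in files:
                if name.lower().endswith(extension):
                    full_path = os.path.realpath(os.path.join(folder, name))
                    found[name.lower()].add(full_path)
    return {
        name: sorted(paths)
        for name, paths in found.items()
        if len(paths) > 1
    }
```

Calling it with the list of macro search folders configured on a machine returns an empty dictionary when every macro name is unique, and otherwise lists each clashing name with all the locations where it was found.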

- API SDK

- Category Developer

- Category Macros

- Desktop Experience

Hi

While the Download tool does a great job, there are instances where it fails to connect to a server. In these cases, there is no download header info that we can use to determine whether the connection has failed.

Currently the tool outputs a failure message to the Results window when such a failure occurs.

Having the 'failed to connect to server' message come into the workflow in real time would allow an iterative macro to retry.

Thanks

Gavin
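The behavior being asked for, where a connection failure becomes data that downstream logic can react to, looks roughly like this outside Alteryx. This is a generic Python sketch using the standard library's `urllib`, not the Download tool's implementation. The names `fetch` and `fetch_with_retry` are hypothetical, and the `fetcher` and `sleep` parameters exist only so the retry logic can be exercised without a network.

```python
import time
import urllib.error
import urllib.request


def fetch(url, timeout=10):
    """Download a URL and return the response body as bytes."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()


def fetch_with_retry(url, attempts=3, delay=1.0, fetcher=fetch, sleep=time.sleep):
    """Return (body, errors); body is None if every attempt failed.

    Connection failures are collected as messages instead of being lost,
    so the caller can decide whether to retry, log or stop.
    """
    errors = []
    for attempt in range(1, attempts + 1):
        try:
            return fetcher(url), errors
        except (urllib.error.URLError, TimeoutError, ConnectionError) as exc:
            errors.append(f"attempt {attempt}: {exc}")
            if attempt < attempts:
                sleep(delay)  # wait before the next try
    return None, errors
```

The returned `errors` list plays the role of the requested in-workflow failure message: each failed attempt leaves a record that the calling loop can inspect.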

- Category Connectors

- Category Input Output

- Category Macros

- Data Connectors
