Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at least, allow the tool to use black & white images (I wanted to test it on the MNIST, but the tool tells me that it necessarily needs RGB images) ?

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))

This idea arose from a conversation with a colleague, @Carlithian, in which we were trying to work out a way to remove tools from the canvas that might be redundant: for example, a Select tool that has been added to the canvas but has not been configured to change a data type or rename a field. So we were looking for ways to identify, in the workflow XML, tools that have no configuration applied to them.

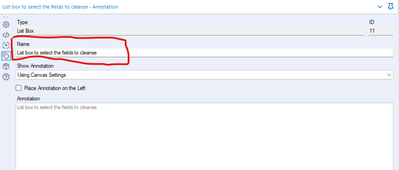

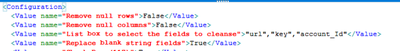

This highlighted to me an issue with something like the data cleanse tool, which is a standard macro.

The XML view of the Data Cleanse configuration looks like this:

    <Configuration>
      <Value name="Check Box (135)">False</Value>
      <Value name="Check Box (136)">False</Value>
      <Value name="List Box (11)">""</Value>
      <Value name="Check Box (84)">False</Value>
      <Value name="Check Box (117)">False</Value>
      <Value name="Check Box (15)">False</Value>
      <Value name="Check Box (109)">False</Value>
      <Value name="Check Box (122)">False</Value>
      <Value name="Check Box (53)">False</Value>
      <Value name="Check Box (58)">False</Value>
      <Value name="Check Box (70)">False</Value>
      <Value name="Check Box (77)">False</Value>
      <Value name="Drop Down (81)">upper</Value>
    </Configuration>

As it is a macro, the default labelling of the drop downs is specified in the XML. If you wanted to do something useful with it, wouldn't it be much nicer if the interface tools were named properly, such as:

So when you look at the xml of the workflow it's clearer to the user what is actually specified.
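To illustrate why the generic names are awkward to work with, here is a minimal Python sketch that reads a shortened copy of the configuration above. The helper names `read_macro_values` and `looks_unconfigured` are hypothetical, and the rule "every check box is False means nothing is configured" is only an assumption for illustration, not documented Alteryx behaviour.

```python
import xml.etree.ElementTree as ET

# Shortened copy of the Data Cleanse configuration shown above.
CONFIG_XML = '''<Configuration>
  <Value name="Check Box (135)">False</Value>
  <Value name="Check Box (136)">False</Value>
  <Value name="List Box (11)">""</Value>
  <Value name="Drop Down (81)">upper</Value>
</Configuration>'''


def read_macro_values(xml_text):
    """Return {interface tool name: configured text} for a <Configuration> element."""
    root = ET.fromstring(xml_text)
    return {value.get("name"): (value.text or "") for value in root.findall("Value")}


def looks_unconfigured(values):
    """Heuristic (an assumption): the tool does nothing if every check box is False."""
    return all(
        text == "False"
        for name, text in values.items()
        if name.startswith("Check Box")
    )


values = read_macro_values(CONFIG_XML)
for name, text in values.items():
    # The names alone ("Check Box (135)") say nothing about what each option does.
    print(f"{name!r} -> {text!r}")
print("Possibly redundant:", looks_unconfigured(values))
```

With descriptive interface tool names, the same loop would show directly which cleansing options are switched on.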

Our company has a need to link a new data source in Athena. We have been able to establish a connection using the input functionality however the connection is so slow it is unusable. We need to have Alteryx build an In Database option for Athena to allow us to link our data lake to Alteryx.

As we do more work analyzing the canvasses that our folk are producing, it's becoming more and more necessary to have a well documented definition and schema for the XML that is used for Alteryx canvasses.

Please could you publish the full XML definition and schema for Alteryx canvasses - this will allow groups to perform deeper analytics on how people are using Alteryx, automate quality checks; look for learning gaps; scan for dependencies etc?

Note: this relates to an idea from @dataprep here: https://community.alteryx.com/t5/Alteryx-Designer-Ideas/Documentation-tool-list-fileformat/idi-p/184...

Can we please have a tickbox (ideally one that remembers your preference to be ticked or unticked) on the Save to Gallery pop up that would allow us to save a (timestamped?) copy of that workflow on a local drive (perhaps one that is preset in the user settings)?

I would like Alteryx to create an internship support program that provides a license similar to a trial but for an extended period, say 6 to 8 weeks, and tied to Core certification. You could repackage much of the existing training into a curriculum aimed at educating new users sufficiently on the elements necessary to pass the Core certification within a short time frame.

Our organization just launched an internship program and had our first group of interns start 5 weeks ago. I had to come up with a plan that provided the intern a valuable experience. I decided to make Alteryx Core certification a key objective and put him on a spare license we had for the duration and worked with him to get his core.

I think this could be a great marketing tool for Alteryx. It would get more people entering the workforce educated about your product so that no matter where they end up they might already be a fan and suggest the tool as a solution in a new job that doesn’t currently know about you. Conversely it gives interns a certification that shows they know more than the other applicants for a job where Alteryx is already a tool. I am sure there are tax benefits to Alteryx as well for each license used.

This is kind of how we discovered Alteryx: we had issues with volume of data and technology limitations (Excel), and someone who had used Alteryx at a prior company suggested we try it out. We purchased a couple of licenses, then within a couple of years we had 16 licenses. You can’t sell to someone who doesn’t know you exist… the internship-type license is a good idea to expand the list of people in the workplace who know you exist. Even better, they will have reached a level of knowledge, Core certification, that gives them a basic appreciation of your value.

The idea is to store credentials, login/pw in a "credential alias".

Then, those credential aliases can be used in :

-traditional aliases/connection

-in database aliases/connection

-hdfs aliases/connection

-API

-on user aliases for connected controllers/gallery

...etc.

The idea is that I only have to change the credentials once for all the connection type (on Hive, I have the in db alias, the traditional alias and even an HDFS alias using exactly the same credentials !! and I have to change all that manually).
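A minimal Python sketch of the idea, assuming a simple in-memory store: several connections point at one credential alias, so a password change happens in one place. All class names, alias names and connection targets here are hypothetical and do not reflect how Alteryx stores aliases.

```python
from dataclasses import dataclass


@dataclass
class Credential:
    login: str
    password: str


@dataclass
class Connection:
    kind: str              # e.g. "traditional", "in-db", "hdfs"
    target: str            # hypothetical connection target
    credential_alias: str  # name of the shared credential


class CredentialStore:
    """Holds credentials by alias; connections only store the alias name."""

    def __init__(self):
        self._by_alias = {}

    def set(self, alias, login, password):
        self._by_alias[alias] = Credential(login, password)

    def resolve(self, connection):
        return self._by_alias[connection.credential_alias]


store = CredentialStore()
store.set("hive_prod", "svc_user", "old-secret")

connections = [
    Connection("traditional", "hive-odbc", "hive_prod"),
    Connection("in-db", "hive-indb", "hive_prod"),
    Connection("hdfs", "hive-hdfs", "hive_prod"),
]

# One update is picked up by every connection that uses the alias.
store.set("hive_prod", "svc_user", "new-secret")
passwords = [store.resolve(c).password for c in connections]
```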

When building API calls within Alteryx there are a few common steps required

1) Build out the URI for the API call (base URL plus any query parameters)

2) Deal with authentication; basic authentication, for example, requires taking a key and secret, Base64-encoding them and passing the result into the tool

3) Parse the results and process them downstream

For this idea I am specifically focusing on step 3 (but it would be great to have common authentication methods in-built within the download tool (step 2)!).
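For reference, steps 1 and 2 can be sketched in a few lines of Python. This is only an illustration: the base URL and parameter names are made up, and how a given API maps its key and secret onto the user and password parts of basic authentication is an assumption to check against that API's documentation.

```python
import base64
from urllib.parse import urlencode


def build_uri(base_url, params):
    """Step 1: base URL plus URL-encoded query parameters."""
    return f"{base_url}?{urlencode(params)}" if params else base_url


def basic_auth_header(key, secret):
    """Step 2: Base64-encode 'key:secret' for an HTTP Basic Authorization header."""
    token = base64.b64encode(f"{key}:{secret}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {token}"}


# Hypothetical endpoint and parameters, for illustration only.
uri = build_uri("https://api.example.com/search", {"q": "acme", "items_per_page": 10})
headers = basic_auth_header("user", "pass")
```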

There are common steps required to parse out the results, such as using Filter (to check for a 200 response), JSON Parse, Text to Columns and then Cross Tab to get the results into a readable format. These are all steps that anyone who has worked with APIs will be familiar with:

It is fine for a regular user to quickly add and configure these tools. However, there is no validation that the JSON result is as expected, which means an API embedded in a batch macro or analytic app can easily fail.

One example of a failure which I've recently come across is where the output JSON doesn't have all fields (name:value pairs), depending on the JSON response. For example, using the UK Companies House API, when looking at the ceased to act field at this endpoint - https://developer-specs.company-information.service.gov.uk/companies-house-public-data-api/resources... the ceased to act field only appears in the results if a person has actually ceased to act. This is important if you have downstream tools such as a formula to create a field [Active] where you have:

IF ISNull([ceased_to_act]) THEN "Active" ELSE "Ceased to Act" ENDIF

However, without modification the macro / app will error if any results are returned where this field is not present.

A workaround is to add in the CReW Ensure Fields macro, or to union on a list of fields, to ensure that the ceased to act field is present in the output for all API calls. But looking at some other tools, it would be good if an expected schema could be built into the Download tool to do this automatically.
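The behaviour being asked for can be sketched in Python as follows. This is a hedged illustration rather than a description of any existing tool: `EXPECTED_FIELDS`, `ensure_fields` and the sample records are all hypothetical, with only the `ceased_to_act` field name taken from the example above.

```python
# Expected schema for each parsed record (hypothetical apart from ceased_to_act).
EXPECTED_FIELDS = ("name", "ceased_to_act")


def ensure_fields(record, expected=EXPECTED_FIELDS):
    """Return a copy of the record with every expected field present (None if absent)."""
    return {**{field: None for field in expected}, **record}


def active_status(record):
    """Python equivalent of the [Active] formula shown above."""
    return "Active" if record["ceased_to_act"] is None else "Ceased to Act"


# Two made-up API results: the second one omits ceased_to_act entirely.
raw_results = [
    {"name": "Person A", "ceased_to_act": "2020-01-31"},
    {"name": "Person B"},
]

rows = [ensure_fields(r) for r in raw_results]
statuses = [active_status(r) for r in rows]
```

Without the `ensure_fields` step, `active_status` would raise a `KeyError` on the second record, which mirrors the macro / app error described above.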

For example in Power Automate this is achieved as follows:

I am a big advocate of not making things unnecessarily complicated. Therefore I would categorise this as an ease-of-use feature to improve the experience of working with APIs within Alteryx and make APIs (as loads of integrations are API based) accessible to as many users as possible.

Sometimes I need to connect to the data in my database after doing some filtering and modeling with CTEs. To ensure that the connection runs quicker than with the regular Input tool, I would like to use the In-DB tool. But it doesn't work, because the In-DB input tool doesn't support CTEs. CTEs are helpful in everyday work, and it would be terribly tedious to replicate all my SQL logic in Alteryx in addition to what I'm already doing inside the tool.

I found a lot of people having the same issue; it would be great if this feature could be added to the tool.

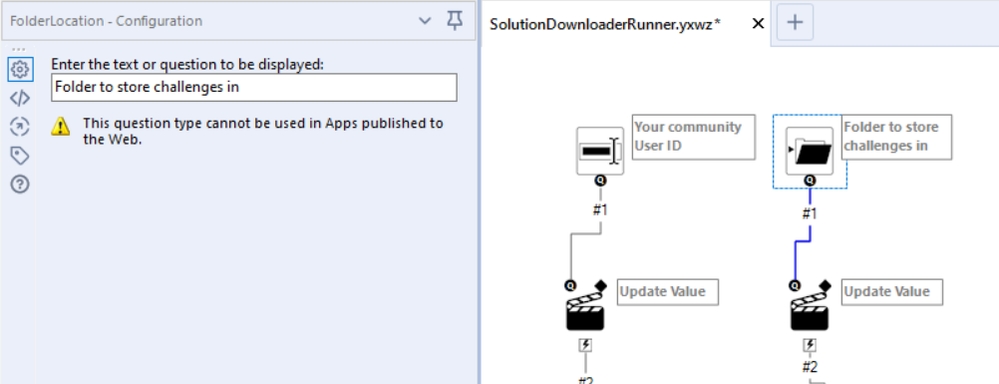

Similar to previous ideas from @patrick_mcauliffe and @shailesh_patel - would like to request 2 things:

Default on Folder Picker Interface tool

The folder picker tool does not currently allow a default value - this unnecessarily adds work if users have the same value 90% of the time.

Please add a field for the default value that will show when the interface starts up

Similar ideas:

- Default on Date interface: https://community.alteryx.com/t5/Alteryx-Designer-Ideas/Default-Date-for-Interface-Tool/idi-p/35770

- Default on File Selector: https://community.alteryx.com/t5/Alteryx-Designer-Ideas/Default-file-location-in-file-broswer-Interf...

Hi Alteryx

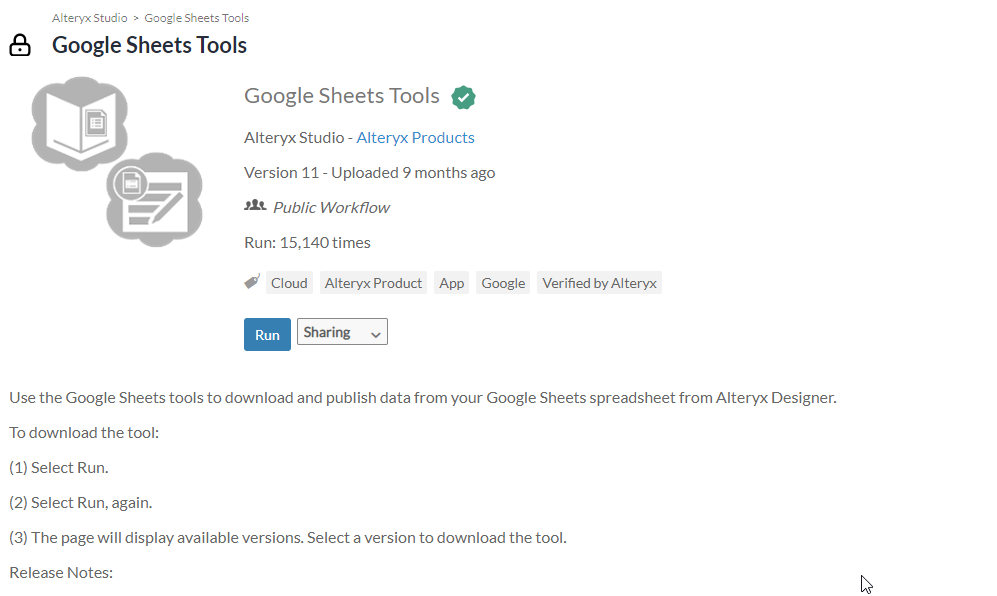

I understand why you need to keep bloat away from the product and have tools available to download instead, it means you can iterate and update them outside the usual cadence cycles. But please, for the love of everything holy, make it easier to find them and download them.

Let me give you an example of downloading the Google Sheets Input Tool:

1. I type in the amazing search and find a help article on it, so far so good:

2. I am pointed to the Gallery:

3. I click but where do I look? I need to revert to the tiny search in the top left. This isn't obvious for new users

4. But the tool doesn't come out on top; how some of these search results rank above what I need, I have no idea. I get to page 4 before I see something that looks like what I need, only to realise after installing it that it is a third-party tool. I come back, can't find the tool and so give up. If it's there somewhere then it needs to be more obvious.

5. I google - I finally find (third item) something that's more useful but only because I know what I'm looking for

6. I run the workflow, then run it again as per the instructions. At this point I'm losing the will to live tbh.

7. Finally something that looks useful, I bang the huge download button twice and wonder why it didn't work.

9. I read the text and realise I need to click the link - finally I have the installer.

That was a five minute job. It was painful. And I'm a seasoned Alteryx user. If I was a new user, I'd have given up at step 2 or 3.

But what was the thing I downloaded in Step 8? A set of release notes and links....why aren't these simply added to the help article I found in Step 1/2? It would surely be easier for you, and would be a whole lot easier for users. Why do we need this painful process?

Please please please make it easier for me to install new tools.

When training people on the use of Action tools, something that I always have to stress is that when you are telling the tool which piece of the XML you are adjusting, it's sort of difficult to tell what you have selected, and super easy to accidentally select something else.

Example:

When you initially select the action to take it's this nice Blue Color. However, it still doesn't feel exactly like you have actually selected anything or told the Action Tool what to do, since it's so easy to just select any other one of these actions.

A slightly different problem is that if you are selecting an action that has been previously configured, it is just this light grey color. So it can be easy to accidentally change your settings because you may not realize it's actually set up.

Here is a recent community post that sort of outlines a few of these problems.

Hello all,

Some databases, including Hive, natively support scheduled queries (yes, the scheduling configuration is inside the database, not in an ETL/data prep system). I think this would be an interesting feature for In-DB workflow output: you run the workflow once and then only have to run it again when it changes; the database does the scheduling.

https://cwiki.apache.org/confluence/display/Hive/Scheduled+Queries

Intro

Executing statements periodically can be useful in

- Pulling information from external systems

- Periodically updating column statistics

- Rebuilding materialized views

Best regards,

Simon

Getting simple information from a workflow, such as the name, run start date/time and run end date/time, is far more complex than it should be. Ideally the log would have, in separate and distinctly labelled line items, the workflow path and name, the start date/time, the end date/time and potentially the run time, to save having to do a calculation. An overall module status would also be of use, i.e. if there was an error in the run the overall status is Error, if there was a warning the overall status is Warning, otherwise Success.

Parsing out the workflow name and start date/time is challenging enough, but then trying to parse out the run time, convert that to a time and add it to the start date/time to get the end date/time makes retrieving basic monitoring information far more complex than it should be.
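Once the start time and run time have been parsed out, the calculation itself is small. The Python sketch below shows the arithmetic and the status rule described above; it assumes the values have already been extracted from the log (the log format itself is not reproduced here), and the function names and sample values are hypothetical.

```python
from datetime import datetime, timedelta


def end_time(start, elapsed_seconds):
    """End date/time = start date/time + run time."""
    return start + timedelta(seconds=elapsed_seconds)


def overall_status(message_levels):
    """Error beats Warning, Warning beats Success."""
    if "Error" in message_levels:
        return "Error"
    if "Warning" in message_levels:
        return "Warning"
    return "Success"


# Made-up values standing in for what would be parsed from a log.
start = datetime(2024, 1, 1, 9, 0, 0)
finished = end_time(start, 90.5)
status = overall_status(["Info", "Warning", "Info"])
```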

I would love to see a "Product" option added to the summarize tool. I can currently count, sum, mean etc., but I can't multiply my data while grouping. There are numerous "work arounds", but a native product function built into the summarize tool would be great.
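For clarity, this is the grouped product being requested, sketched in Python. The function name, field names and sample rows are hypothetical.

```python
import math
from collections import defaultdict


def product_by_group(rows, group_key, value_key):
    """Multiply the values of value_key within each group of group_key."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_key]].append(row[value_key])
    return {group: math.prod(values) for group, values in groups.items()}


rows = [
    {"Category": "A", "Factor": 2},
    {"Category": "A", "Factor": 3},
    {"Category": "B", "Factor": 4},
]
result = product_by_group(rows, "Category", "Factor")
```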

Thanks for listening!

Most databases treat null as "unknown" and as a result, null fails all comparisons in SQL. For example, null does not match to null in a join, null will fail any > or < tests etc. This is an ANSI and ISO standard behaviour.

Alteryx treats null differently - if you have 2 data sets going into a join, then a row with value null will match to a row with value null.

We've seen this creating confusion with our users who are becoming more fluent with SQL and who are using inDB tools - where the query layer treats null differently than the Alteryx layer.

Could we add a setting flag to Alteryx so that users can turn on ISO / ANSI standard processing of Null so that data works the same at all levels of the query stack?
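The difference can be reproduced with Python's built-in `sqlite3` module. The first query uses ordinary SQL equality, where null never matches null; the second uses SQLite's null-safe `IS` comparison to emulate the null-matches-null join behaviour described above. The table and column names are made up for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE left_t (k TEXT);
    CREATE TABLE right_t (k TEXT);
    INSERT INTO left_t VALUES ('a'), (NULL);
    INSERT INTO right_t VALUES ('a'), (NULL);
    """
)

# Standard SQL semantics: NULL = NULL is unknown, so the NULL rows do not join.
sql_rows = conn.execute(
    "SELECT l.k, r.k FROM left_t l JOIN right_t r ON l.k = r.k"
).fetchall()

# Null-safe comparison (SQLite's IS operator): the NULL rows join to each other.
null_matching_rows = conn.execute(
    "SELECT l.k, r.k FROM left_t l JOIN right_t r ON l.k IS r.k"
).fetchall()

print(len(sql_rows), "row(s) with '=' versus", len(null_matching_rows), "with 'IS'")
conn.close()
```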

Many thanks

Sean

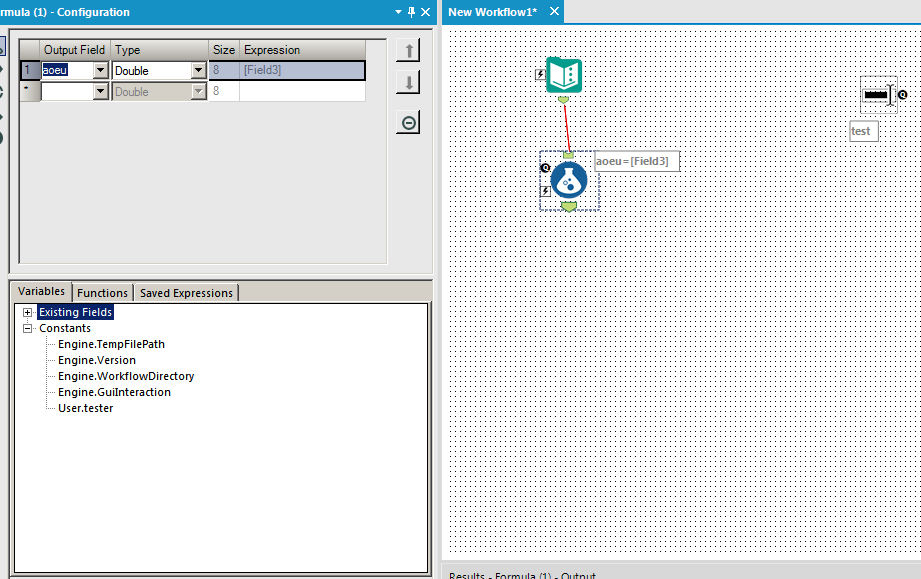

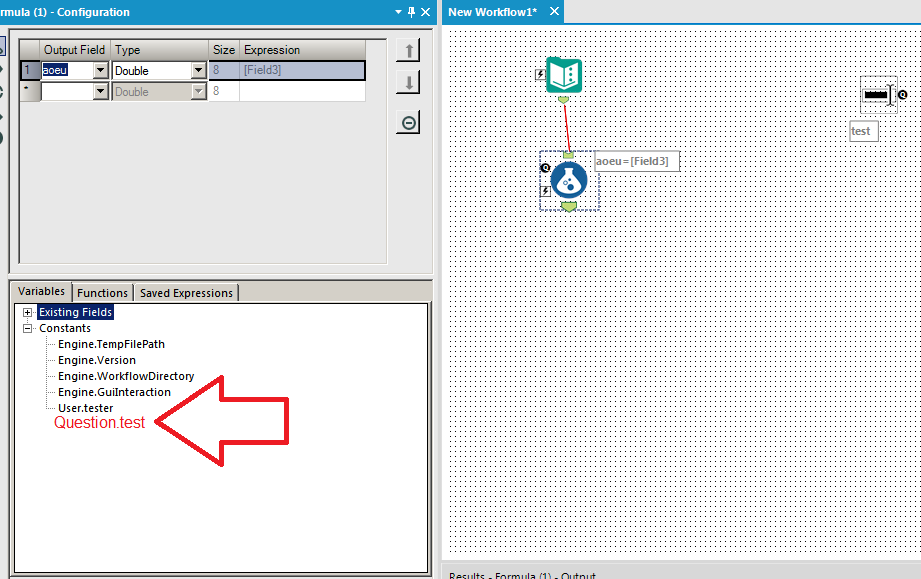

Whenever I add an interface tool, it adds a constant just like the 4 engine constants and any user constants. It would be useful if tools like the formula and filter automatically added question constants to the list for you to use. This would be identical to how user constants behave currently. Here is the before and after for visual effect:

BEFORE:

AFTER:

Hello all,

I am trying to use Alteryx with MonetDB, a very cool open-source column-store database.

When I use the Visual Query Builder, I get this :

The field names are totally absent.

The reason is that Alteryx does not use the standard ODBC SQLColumns() function at all but sends a query (here "select * from demo.exemplecomparetable.fruit1 a where 0=1") to get a sample of the data. MonetDB then returns the error "SELECT: only a schema and table name expected" (not shown to the user, totally silent).

I think this should be implemented like this:

1/ use the SQLColumns() function, which is standard for ODBC and should work most of the time

2/ if SQLColumns() does not work, send the current queries.
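The proposed order of attempts could look like the following Python sketch. It is written against a pyodbc-style cursor, whose `columns()` method wraps the ODBC SQLColumns() catalog call; the function name `list_columns` is hypothetical, and this only illustrates the fallback logic, not how Alteryx is implemented.

```python
def list_columns(cursor, schema, table):
    """Try the ODBC catalog call first, then fall back to a zero-row probe query.

    `cursor` is assumed to behave like a pyodbc cursor. The schema and table
    names are interpolated directly, so they must come from a trusted source.
    """
    try:
        # 1/ Catalog metadata via SQLColumns() (pyodbc: cursor.columns()).
        names = [row.column_name for row in cursor.columns(table=table, schema=schema)]
        if names:
            return names
    except Exception:
        # Broad on purpose for this sketch: any catalog failure triggers the fallback.
        pass

    # 2/ Fallback: a query that returns no rows but still describes the columns.
    cursor.execute(f"select * from {schema}.{table} where 0=1")
    return [column[0] for column in cursor.description]
```

With a real connection this would be called as `list_columns(connection.cursor(), "demo", "fruit1")`, where the schema and table names are placeholders.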

It's widely discussed here with the MonetDB team.

https://github.com/MonetDB/MonetDB/issues/7313

Best regards,

Simon

Create new connector to pull Salesforce Reports

We are a large company with tens of thousands of employees using Salesforce on a daily basis. Over the years, we have worked with Salesforce to make many customizations and create many reports to provide data for various reporting needs. However, we have increasingly found it inefficient and error-prone to download the reports manually. We have many teams using the Salesforce reports as a base to create additional business insights.

Alteryx is a great tool for managing data ETL and workflows, but it does not support pulling data from Salesforce reports directly. Instead, it only offers connectors to pull data from base Salesforce objects. The data from Salesforce objects such as tables can be useful, but it does not necessarily offer the logical view of Salesforce reports, and may require a lot of effort to reconcile against the reports our users are used to. Sometimes it may be impossible to reproduce from Salesforce tables the same data as in the Salesforce reports. That in turn causes the reporting teams, their audience, and the users of the Salesforce reports to spend a lot of effort matching things up.

Salesforce does not have any out-of-the-box solution for scheduling report downloads. At our request, their support team did some research and did not find a good third-party solution in the Salesforce AppExchange ecosystem that supports this need.

I strongly believe this is a great opportunity for Alteryx. Salesforce already has an API that allows custom applications to pull Salesforce reports. However, most Salesforce users are more business oriented and do not necessarily have the appetite to engage their IT staff or external resources to develop such apps and bear the burden of maintaining them.

I have attached the Salesforce Reports and Dashboards API Developer Guide for your reference.

Sincerely,

Vincent Wang

Would it be possible to Hide all annotations by default rather than each time a new workflow is created? It's a simple thing but can save time.

I often find myself needing to create unique IDs within a given category. Currently I end up using the Multi-Row tool and leveraging the "group by" option. Enabling the Record ID tool to create a unique count by grouping on distinct categories in the underlying data set would unlock a new level of grouping and consolidate record-keeping functionality in a single tool.
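The requested behaviour, a record ID that restarts at 1 within each category, can be sketched in Python like this. The function name, the `GroupRecordID` field name and the sample rows are hypothetical.

```python
from collections import defaultdict


def add_group_record_id(rows, group_key, id_field="GroupRecordID"):
    """Return copies of the rows with a 1-based counter that restarts per group."""
    counters = defaultdict(int)
    numbered = []
    for row in rows:
        counters[row[group_key]] += 1
        numbered.append({**row, id_field: counters[row[group_key]]})
    return numbered


rows = [
    {"Category": "Fruit", "Item": "Apple"},
    {"Category": "Veg", "Item": "Leek"},
    {"Category": "Fruit", "Item": "Pear"},
]
numbered = add_group_record_id(rows, "Category")
ids = [row["GroupRecordID"] for row in numbered]
```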
