Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))


It would be great to have an outbound connector on output tools for 2 reasons:

a) If this outbound connector can carry key results of the output process (for example row counts and success/failure), these can be saved in an audit log. This kind of capability (to generate a log, or to check the count of rows committed to a database) is important for any large BI ETL process.

b) This would also allow the process to continue after the output step, so the connector acts as a flow of control. For example:

- First output the product dimension

- Once done, connect (using the outbound connector) to the next macro, which then updates the Sales fact table using this product dimension (foreign key dependency)

I would love the R tool editor to work like a standard text field. It might be better explained with this scenario: pretend the character text is a script you've written, with the function at the top. Let's say you'd like to move the function closer to the script; look at the weird output. This editor pastes text as if we were pasting images.

The use case is that I like to break my code into mini functions that I work on in the R console with sample data. Once it works, I paste it into Alteryx and experiment with it on a small sample, then a larger sample. If I have to keep a document for my overall R code in Notepad++, my function, and the console, it's a bit of a nuisance, especially since I usually have to go back and forth with multiple functions. I am not asking for a full-blown editor (I like my Notepad++ for that), just a text input that works conventionally.

SampleFunction <- function(x)

{

print(x)

}

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

When you paste the function (or any other text) in the middle, look at this funky output:

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

SampleFunction <- function(x)abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

{abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

print(x)abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

}abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

It would be nice if, when I change a field name from RowCount to index, everything stays linked together.

Something like a $FieldName$ placeholder which would automatically get substituted.

This would also be nice in other formula tools, but it is most useful in places where the name needs to be duplicated over and over.

Assuming some source control or versioning is in place, a formal compare tool would be a nice addition. This would be useful for determining what is different between two versions of a workflow, and that knowledge is very useful when modifying a production process: when formally moving a new (modified) process into production, part of the checks and balances would be to run a formal comparison against the workflow being replaced, and ensure that all differences are accounted for.

This sort of audit is notoriously difficult when the differences are buried deep in the configuration settings of various tools within Alteryx. I do see that the .yxmd files are XML based, so perhaps we could create our own compare tool based thereon, but it would be better (more trustworthy) to have one formally provided by Alteryx. Thanks!
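As a rough illustration of the "create our own compare tool" idea, here is a minimal Python sketch. It assumes each tool in the workflow XML is a `Node` element with a `ToolID` attribute and a `Properties/Configuration` child; that layout, and the function names `tool_configs` and `compare_workflows`, are assumptions made for this example, not a documented Alteryx interface.

```python
import xml.etree.ElementTree as ET


def tool_configs(xml_text):
    """Map each ToolID to a string form of its <Configuration> element.

    Assumes (hypothetically) that tools are <Node ToolID="..."> elements
    with a Properties/Configuration child.
    """
    root = ET.fromstring(xml_text)
    configs = {}
    for node in root.iter("Node"):
        config = node.find("Properties/Configuration")
        text = "" if config is None else ET.tostring(config, encoding="unicode")
        configs[node.get("ToolID")] = text.strip()
    return configs


def compare_workflows(old_xml, new_xml):
    """Report which tool IDs were added, removed, or reconfigured."""
    old, new = tool_configs(old_xml), tool_configs(new_xml)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(t for t in old.keys() & new.keys() if old[t] != new[t]),
    }


# Two tiny made-up workflow documents for demonstration only.
OLD = (
    '<AlteryxDocument><Nodes>'
    '<Node ToolID="1"><Properties><Configuration><Expression>[A] &gt; 1'
    '</Expression></Configuration></Properties></Node>'
    '<Node ToolID="2"><Properties><Configuration><File>a.csv</File>'
    '</Configuration></Properties></Node>'
    '</Nodes></AlteryxDocument>'
)
NEW = (
    '<AlteryxDocument><Nodes>'
    '<Node ToolID="1"><Properties><Configuration><Expression>[A] &gt; 5'
    '</Expression></Configuration></Properties></Node>'
    '<Node ToolID="2"><Properties><Configuration><File>a.csv</File>'
    '</Configuration></Properties></Node>'
    '<Node ToolID="3"><Properties><Configuration /></Properties></Node>'
    '</Nodes></AlteryxDocument>'
)

result = compare_workflows(OLD, NEW)
print(result)
```

A comparison like this only flags that a tool's configuration differs; it compares the serialized text, so whitespace-only changes would also be reported, and it says nothing about connections between tools.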

In Dec I had an issue where I could not uninstall or upgrade Alteryx. As part of troubleshooting and the eventual solution I had to manually delete any registry key related to Alteryx. As these were hundreds of entries this took a long time. It would be handy if Alteryx could provide a tool that cleaned the registry of all Alteryx related entries. Related: "Case 00088264: Unable to uninstall Alteryx"

It would be nice to have the RegEx tool allow you to drop the original input field and report an error if any records fail to parse.

The SQL Editor window could have a better presentation of the SQL code; two issues observed

- First, that it's simply plain text without even a fixed-width font, much less syntax highlighting

- Second, if you type in some manually formatted SQL code (e.g. with line feeds and indentation), then click on the "Visual Query Builder" button, then click back to the "SQL Editor" button, all the formatting is lost as it is converted to one run-on line of code, which is very difficult to read.

I understand that going between the Visual Query Builder and the SQL Editor is bound to have some issues; nonetheless the "idea" is to allow a user friendly display in the SQL Editor window:

- Use a fixed width font, (should be trivial to implement)

- SQL formatter, implementation ideas can be found here: https://github.com/TaoK/PoorMansTSqlFormatter

- SQL syntax highlighting, implementation ideas can be found here: https://github.com/jdorn/sql-formatter

My "implementation ideas" are based on a couple minutes with google, so hopefully this is a very feasible request; my user base is very likely to spend more time in the SQL editor than not, so this would be a valuable UX addition. Thanks!

The option to "Allow Shared Write Access" is only available under CSV and HDFS CSV in the Data Input tool. It would be very helpful to have this feature also included for Excel files.

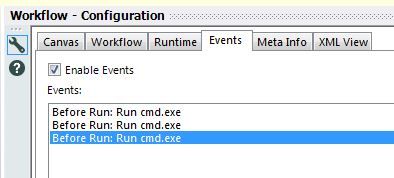

I've been using Events a fair bit recently to run batches through cmd.exe and to call Alteryx modules.

Unfortunately, the default is that the events are named by when the action occurs and what is entered in the Command line.

When you've got multiple events, this can become a problem -- see below:

It would be great if there was the ability to assign custom names to each event.

It looks like I should be able to do this by directly editing the YXMD -- there's a <Description> tag for each event -- but it doesn't seem to work.

It would be useful to have a function or a tool to convert a number into a currency format, like Excel does. It would add the $ in front, and you could choose to have commas as thousands separators and a period for cents.

ex:

Input: 15978423.89

output: $15,978,423.89

Of course, I'm sure there would be a need for other currency symbols besides the '$'.
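To make the requested behaviour concrete, here is a small Python sketch of the formatting. The function name `format_currency` and its `symbol` parameter are hypothetical, chosen only for illustration; this is not an existing Alteryx function.

```python
def format_currency(value, symbol="$"):
    """Format a number with a currency symbol, thousands separators, and two decimals."""
    sign = "-" if value < 0 else ""
    # ",.2f" inserts commas every three digits and rounds to two decimal places.
    return f"{sign}{symbol}{abs(value):,.2f}"


print(format_currency(15978423.89))       # $15,978,423.89
print(format_currency(1234.5, symbol="€"))  # €1,234.50
```

This sketch always uses a comma for thousands and a period for decimals; locale-specific separators would need extra handling.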

415978423.89

Calendar events are used a lot in the business world. Having native sync and input functions for popular calendar formats such as iCal or Google Calendar would save a lot of time.

There are a lot of SQL engines on top of Hadoop, such as:

- Apache Drill / https://drill.apache.org/

Schema-free, low latency SQL Query Engine for Hadoop, NoSQL and Cloud Storage.

It's backed at enterprise level by MapR.

- Apache Kylin / http://kylin.apache.org/

Apache Kylin™ is an open source Distributed Analytics Engine designed to provide SQL interface and multi-dimensional analysis (OLAP) on Hadoop supporting extremely large datasets, originally contributed by eBay Inc.

- Apache Flink / https://flink.apache.org/

Apache Flink is an open source platform for distributed stream and batch data processing. Flink's core is a streaming dataflow engine that provides data distribution, communication, and fault tolerance for distributed computations over data streams. The creators of Flink provide professional services through their company Data Artisans.

- Facebook Presto / https://prestodb.io/

Presto is an open source distributed SQL query engine for running interactive analytic queries against data sources of all sizes ranging from gigabytes to petabytes.

It's backed at enterprise level by Teradata - http://www.teradata.com/PRESTO/

My suggestion for Alteryx product managers is to build a tactical approach for these engines in 2016.

Regards,

Cristian.

Improve HIVE connector and make writable data available

Regards,

Cristian.

Hive

| Type of Support: | Read-only |
| Supported Versions: | 0.7.1 and later |
| Client Versions: | -- |
| Connection Type: | ODBC |
| Driver Details: | The ODBC driver can be downloaded here. Read-only support to Hive Server 1 and Hive Server 2 is available. |

Please add Parquet data format (https://parquet.apache.org/) as read-write option for Alteryx.

Apache Parquet is a columnar storage format available to any project in the Hadoop ecosystem, regardless of the choice of data processing framework, data model or programming language.

Thank you.

Regards,

Cristian.

I have many aggregations in the same workflow, each of which produces one or two different columns; the total could be xx different columns. Wouldn't it be nice to have a multiple append tool that could take connections from many other tools? At the moment I have to use many separate Append Fields tools and then one Transpose, or multiple Transposes and a Union. Either way you have to attach something to each tool.

Functions such as Year([Date Field]), Month([Date Field]), and Day([Date Field]) would really help with date-based formulas and filter tests.

It would be helpful to have the Read Uncommitted listed as a global runtime setting.

Most of the workflows I design need this set, so rather than risk forgetting to click this option on one of my inputs it would be beneficial as a global setting.

For example: the user would be able to set specific inputs according to their need and the check box on the global runtime setting would remain unchecked.

However, if the user checked the box on the global runtime setting for Read Uncommitted, then the workflow would automatically use an uncommitted read on all of the inputs.

When the user unchecks the global runtime setting for Read Uncommitted, then only the inputs that were set up with this option will remain set up with the read uncommitted.

Although we can write a temporary file with all the parameters used it would be very helpful to pass simple parameters (or go all the way) between the Apps. I imagine any parameter created using Interface Tools should be available throughout the whole Chained App.

There is an "Update: Insert if New" option in the Output Data tool when using an ODBC connection to write to Redshift.

This option really needs to be added to the "Amazon Redshift Bulk Loader" method of the Output Data tool, and to the Write Data In-DB tool.

Without it, you are forced to use the "Delete and Append" output option, which is a pain because you need to keep reinserting data that you already have, slowing down the process.

Using the ODBC connection option of the Output Data tool to write to Redshift is not a real option, as it is too slow. When trying to write 200MB of data, the workflow runs for 20 minutes without any data reaching the destination table, and I end up just stopping the workflow.

It would be good to have the ability to select which column to use as the primary key when using the "Create New Table" output option of the Output Data tool.

When using the "Update: Insert if New" output option, you receive the error "Primary Key required for Update" if the table does not have a primary key.

The workaround is to manually create the table with a primary key constraint.
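To show why the primary key matters for an update-or-insert write, here is a minimal Python sketch. It uses an in-memory SQLite database purely as a stand-in (not Redshift, and not how Alteryx implements the option); the `product` table, its columns, and the `upsert` helper are hypothetical names for this example.

```python
import sqlite3


def upsert(conn, rows):
    """Update rows whose key already exists; insert the ones that are new."""
    for product_id, name in rows:
        cur = conn.execute(
            "UPDATE product SET name = ? WHERE product_id = ?",
            (name, product_id),
        )
        # rowcount == 0 means no existing row matched the key, so insert it.
        if cur.rowcount == 0:
            conn.execute(
                "INSERT INTO product (product_id, name) VALUES (?, ?)",
                (product_id, name),
            )
    conn.commit()


conn = sqlite3.connect(":memory:")
# The table is created manually with a primary key, as in the workaround.
conn.execute("CREATE TABLE product (product_id INTEGER PRIMARY KEY, name TEXT)")

upsert(conn, [(1, "Widget"), (2, "Gadget")])
upsert(conn, [(2, "Gadget v2"), (3, "Gizmo")])  # 2 is updated, 3 is inserted

rows = conn.execute(
    "SELECT product_id, name FROM product ORDER BY product_id"
).fetchall()
print(rows)
```

The key column is what lets the write decide between update and insert, which is why being able to choose it at table creation time would remove the manual step.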
