Alteryx Designer Desktop Ideas


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool, it is certainly perfectible, but very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible thanks to image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))


I keep making the same changes to the Table tool rules, using the same formulas, whenever I build new reports. For example: Row Rule 1: Font, bold; Background Color, green. Row Rule 2: Font, bold; Background Color, blue. Row Rule 3: Font, bold; Background Color, yellow. Each is based on a formula: IsEmpty([Column Name]). I do this over and over again, and the only thing that changes is the column name. It would be nice to be able to save Row Style Rules so they can be browsed to or inserted.

Still waiting for the Default Table Settings to include "CENTER" in the header tab.

When the Append tool detects no records in the source, it throws a warning. I would like to have the ability to suppress this warning. In general, all tools should have similar warning/error controls.

I find that to do a simple concatenation of multiple fields, it takes multiple tools where it seems one would suffice. For example, if I had an address parsed into multiple fields (House Number, Street, Apt, City, State, Zip Code, Country), to combine these into a single address field, I'd have two options: Formula that manually adds each field with +' '+ in between each field, which is a lot of typing and selecting...Or Transpose data and then Summarize (concatenating) the values field with a space delimiter between each record.

Seems to me that a simpler solution would be a concatenate tool that might look and feel much like the Select tool, allowing you to choose a name for your concatenated string, input a delimiter, select the fields to concatenate, and re-order them within the tool. Bonus if it automatically converted everything to string fields (or at least allows you to designate whether you want to concatenate all your fields as numbers or strings, and then translates accordingly). Extra bonus if you also had the option to put a different delimiter after every field...

Not a super complex thing to do this task with the given tools, but it does seem like a fairly straightforward add that would likely save a whole bunch of folks at least a few minutes here and there.
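To make the request concrete, here is a minimal Python sketch of the behaviour described above: pick fields, choose their order, supply a delimiter, and convert everything to strings. It is only an illustration of the idea, not how Alteryx works internally; `concat_fields` and the `skip_empty` option are hypothetical names invented for this example.

```python
from typing import Mapping, Sequence


def concat_fields(
    record: Mapping[str, object],
    fields: Sequence[str],
    delimiter: str = " ",
    skip_empty: bool = True,
) -> str:
    """Join the chosen fields of one record, in the given order, as strings."""
    parts = []
    for name in fields:
        value = record.get(name)
        # Convert every value to a string; treat missing/None as empty.
        text = "" if value is None else str(value)
        if skip_empty and text == "":
            continue  # avoid doubled delimiters when a field is blank
        parts.append(text)
    return delimiter.join(parts)


# Sample record using the address fields from the post (values are made up).
address = {
    "House Number": 12,
    "Street": "Main St",
    "Apt": None,
    "City": "Springfield",
    "State": "IL",
    "Zip Code": "62701",
    "Country": "USA",
}
order = ["House Number", "Street", "Apt", "City", "State", "Zip Code", "Country"]
full_address = concat_fields(address, order)
print(full_address)
```

Reordering the `order` list or changing `delimiter` is all it takes to get a different layout, which is the convenience the proposed tool would offer through its configuration panel.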

I would like to see a way to partially execute a workflow (specifically for an App) for the purposes of allowing user to make selections based on a dynamic data flow.

Ex:

1. Database Selection Interface

Click Next

2. Select from available columns to pass through to the output file.

Click Next

3. Pick from selected fields which fields should be pivoted.

Output file and complete run time

This was a simple example to explain a case, but the most common use I could see is for APIs.

The dynamic input tool allows some fairly complex transformations to the underlying query - but it's not always easy to debug this when it doesn't behave as expected.

Could we add the ability to inspect the resulting query (just like you can on the InDB queries using the dynamic output component?)

It is currently possible to see this in the results / messages pane, but I can't find a way to get this into a data-stream to persist it or manipulate it.

With complex ETL jobs, we often have a very similar ETL process that needs to be run for multiple different tables (with different surrogate and natural key column IDs)

While you can do a bulk-replace by opening this up in notepad (in XML format) - it would be better if the user could do a find/replace for all instances of a table-name or a columnID from the designer UI (a deep find/replace into all the tools).

This can also be used when a field is renamed in the beginning of the flow, so that we can update this for the remainder of the flow without having to do this by trial/error.
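As a rough illustration of what a "deep find/replace" would do, the Python sketch below walks an XML document and replaces a name in every element's text and attribute values. The sample XML is invented for this example and is not the real workflow schema; `replace_everywhere` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET


def replace_everywhere(root: ET.Element, old: str, new: str) -> int:
    """Replace `old` with `new` in all element text and attribute values.

    Returns the number of places that were changed.
    """
    changes = 0
    for element in root.iter():
        if element.text and old in element.text:
            element.text = element.text.replace(old, new)
            changes += 1
        # Copy the items first so the attributes can be updated safely.
        for key, value in list(element.attrib.items()):
            if old in value:
                element.set(key, value.replace(old, new))
                changes += 1
    return changes


# Invented stand-in for a workflow file; the element names are assumptions.
sample = """
<Workflow>
  <Node ToolID="1"><Query>SELECT CustomerKey FROM dim_customer</Query></Node>
  <Node ToolID="2"><Field name="CustomerKey" /></Node>
</Workflow>
"""
root = ET.fromstring(sample)
changed = replace_everywhere(root, "CustomerKey", "ProductKey")
result = ET.tostring(root, encoding="unicode")
print(changed)
print(result)
```

Note that this is plain substring replacement, so a name such as `CustomerKeyOld` would also be touched. A built-in feature could do better by matching whole field or table names only.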

In the browse tool, and the cell viewer attached to the browse tool - the standard control keys (control A for select all; control C for copy) do not behave as they would normally - in order to select all in the cell viewer, you have to right-click and say "Select All".

Please could you include these capabilities in the basic browse tool (control-A and control-C)?

Many thanks

Sean

Not sure if any of you have a similar issue - but we often end up bringing in some data (either from a website or a table) to profile it - and then an hour in, you realise that the data will probably take 6 weeks to completely ingest, but it's taken in enough rows already to give us a useful sense.

Right now, the only option is to stop (in which case all the profiling tools at the end of the flow will all give you nothing) and then restart with a row-limiter - or let it run to completion. The tragedy of the first option is that you've already invested an hour or 2 in the data extract, but you cannot make use of this.

It feels like there's a third option - an option to "Stop bringing in new data - but just finish the data that you currently have", which terminates any input or download tools in their current state, and lets the remainder of the data flush through the full workflow.

Hopefully I'm not alone in this need 🙂
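The "stop reading, but finish what you have" behaviour can be sketched in Python with a cooperative stop flag: the input stage checks the flag and ends early, while the downstream stage simply runs to completion on the rows it received. This is only a conceptual sketch, not a description of the Alteryx engine; `read_rows`, `profile`, and the 1,000-row trigger are all assumptions made for the example.

```python
import itertools
import threading
from typing import Iterable, Iterator


def read_rows(source: Iterable[int], stop_requested: threading.Event) -> Iterator[int]:
    """Yield rows from the source until a stop is requested."""
    for row in source:
        if stop_requested.is_set():
            break  # stop pulling new data, but do not abort downstream work
        yield row


def profile(rows: Iterable[int], stop_requested: threading.Event) -> dict:
    """Downstream stage: summarise whatever rows arrive."""
    count = 0
    total = 0
    for value in rows:
        count += 1
        total += value
        if count == 1000:
            # Stands in for the user clicking "stop bringing in new data".
            stop_requested.set()
    return {"rows": count, "mean": total / count}


stop_requested = threading.Event()
endless_source = itertools.count(1)  # simulates an input that would run for weeks
summary = profile(read_rows(endless_source, stop_requested), stop_requested)
print(summary)
```

The key point is that the input ends cleanly instead of the whole run being cancelled, so the profiling step still produces a result from the partial data.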

The download tool is currently a general purpose tool that is used for many different things; from downloading FTP files; to scraping websites.

However, as a general purpose tool, it cannot serve the specific need of scraping a website without doing a huge amount of work to get there. What makes Alteryx great is the fact that it drops the barrier so that regular folks can do some really powerful analytics, but the web scraping capabilities are not yet there and still require a tremendous amount of technical skill to accomplish.

I'll go through this from top to bottom:

- Split capability: The download tool tries to be too many things to too many people. Break it up into its component parts - one for FTP; one for Web Scraping; etc - with deep speciality. You can still keep the download tool as the super-user version but by creating the specialized tools, we can make this much more user-friendly

- Connection: For enterprise users, where there's a locked down connectivity to the internet - there is no way to scrape web content without using CURL. So we need the ability to connect to websites in a way that does not require curl or complex connectivity setups for users to navigate through web proxy settings.

- Alteryx could auto-detect settings by allowing the user to point to the site within a controlled browse form like Excel does

- Parameters: Many websites explicitly support named parameters (using ? notation) - it would be very useful to allow the user to link to these parameters explicitly without having to do complex string concatenations or %20 scrubbing to get rid of non-URL-friendly characters

- Content: Alteryx presents the user with no native ability to process HTML, so all scrubbing to pull out a specific field has to be done through complex read-through of the underlying source of the website (delivered in "DownloadedData") followed by guessing on patterns on how the site does tables or spans etc, followed by complex regex.

- Instead, we could present the user with a view of the web-page and ask them to select the elements that they want

- This would serve the dual purpose of making this user-friendly for regular folks and abstract away the technicalities; but also would allow the download tool to eliminate all the other bits of the page that are not wanted like scripts; interstitial adverts; images; headers & footers etc.

- Improved post / parse capability: Sometimes the purpose of a URL is to generate a download (like the Google Finance API) - again, would be good to observe the user using the target site to record & interpret what they are looking for and what they get (e.g. the file from google)

- HTML & XML types: why not an explicit type in Alteryx for web content?

- Finally - HTML aware. The browse tools are not currently HTML aware, so all the useful formatting to be able to see what's going on, expand nodes, find patterns etc - all this has to be copied out of Alteryx into Notepad++. Given the ubiquity of HTML parsers, pretty printers and editors, it should be reasonably easy to get a cheap component that can provide this capability
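The "Parameters" point in the list above is easy to illustrate outside Alteryx. The Python sketch below builds a URL from named parameters using the standard library, so the percent-encoding (including %20 for spaces) is handled automatically. The `build_url` helper and the example.com address are placeholders invented for this example.

```python
from urllib.parse import quote, urlencode, urlsplit, urlunsplit


def build_url(base_url: str, params: dict) -> str:
    """Attach named query parameters to a base URL with percent-encoding."""
    scheme, netloc, path, _old_query, fragment = urlsplit(base_url)
    # quote_via=quote encodes spaces as %20 rather than the default "+".
    query = urlencode(params, quote_via=quote)
    return urlunsplit((scheme, netloc, path, query, fragment))


url = build_url("https://example.com/search", {"q": "new york", "page": 2})
print(url)
```

A dedicated web tool could expose the same thing as a simple name/value grid, with each value optionally mapped to an incoming field.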

Access to only MD5 hashes via MD5_ASCII(String) and MD5_UNICODE(String) found under string functions is limiting. Is there a way to access other hashing algorithms, ideally via the crypto algorithms from OpenSSL or the .NET framework?

- https://msdn.microsoft.com/en-us/library/system.security.cryptography.hashalgorithm(v=vs.110).aspx

- https://wiki.openssl.org/index.php/Command_Line_Utilities#Signing_.2F_Digest

Hashing functions are a very useful tool to have. There are many different types of hashes and each one has tradeoffs for different uses. This can range from error checking, privacy shielding, password protection, forensic analysis, message authentication (HMAC) and much more. See: http://stackoverflow.com/questions/800685/which-cryptographic-hash-function-should-i-choose

- For workflows with data containing existing hashes, being able to consistently create hashes from non-hashed data for comparison is useful.

- Hashes are also useful because they are the same outside the Alteryx environment. They can be used to confirm correct operation of a production system or a third party's external process.

Access to only MD5 hashes via MD5_ASCII(String) and MD5_UNICODE(String) found under string functions in the formula tool is a start, but quite limiting.

Further, the ability to use non-cryptographic hashes and checksums would be useful, such as MurmurHash or CRC. https://en.wikipedia.org/wiki/List_of_hash_functions

Having the implementation benefit from hardware acceleration (AES-NI / CUDA) would be a great plus for high volume applications.
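For comparison, this is roughly what access to multiple algorithms looks like in Python's standard library (`hashlib` for cryptographic hashes, `zlib` for CRC-32). It is a sketch of the kind of interface being requested, not an Alteryx feature; `hash_text` and `crc32_text` are hypothetical helper names. The explicit `encoding` argument is there because the same string hashes differently depending on how it is turned into bytes.

```python
import hashlib
import zlib


def hash_text(text: str, algorithm: str = "sha256", encoding: str = "utf-8") -> str:
    """Return the hex digest of `text` using any algorithm hashlib provides."""
    digest = hashlib.new(algorithm)
    digest.update(text.encode(encoding))
    return digest.hexdigest()


def crc32_text(text: str, encoding: str = "utf-8") -> str:
    """Return the CRC-32 checksum of `text` as 8 hex digits."""
    return format(zlib.crc32(text.encode(encoding)) & 0xFFFFFFFF, "08x")


for name in ("md5", "sha1", "sha256", "sha512"):
    print(name, hash_text("abc", name))
print("crc32", crc32_text("123456789"))

# The byte encoding changes the result, so it must match the other system.
print(hash_text("abc", "md5", encoding="utf-16-le"))
```

Because these digests are standardised, a value computed this way can be compared directly with one produced by a database, another ETL tool, or a third party's process.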

For reference, these are some hash algorithms that could be useful in workflows:

SHA-1

SHA-256

Whirlpool

xxHash

MurmurHash

SpookyHash

CityHash

Checksum

CRC-16

CRC-32

CRC-32 MPEG-2

CRC-64

BLAKE-256

BLAKE-512

BLAKE2s

BLAKE2b

ECOH

FSB

GOST

Grøstl

HAS-160

HAVAL

JH

MD2

MD4

MD6

RadioGatún

RIPEMD

RIPEMD-128

RIPEMD-160

RIPEMD-320

SHA-224

SHA-384

SHA-512

SHA-3 (originally known as Keccak)

Skein

Snefru

Spectral Hash

Streebog

SWIFFT

Tiger

I have used the Publish to Tableau Server macro for over a year. It works fine when I want to overwrite the data.

However, the current macros (from Alteryx Gallery and Invisio) won't work with appending the data. Please modify or develop a workable macro for 'Append the data to Tableau Server'. It will save a lot of time in the daily update process.

Note: I am using Alteryx 11 and Tableau 10.1. Thank you very much.

Hi All,

My company has been using Alteryx Designer for several years now but recently started a pilot for Alteryx Server. One of the issues we have found is that users keep trying to publish workflows that have aliased data connections. As our current Gallery Admin, I do not have permission for, nor do I know, the wide range of connections that the users are trying to connect to. We would like to give users the ability to create/publish an aliased connection directly to Gallery. When this happens, it would automatically add that user to the data connection. They would also be given the ability to share the data source just like they can share a workflow.

One of our goals with this pilot is to promote self service, but having users wait on Admins to create the connections slows their process down.

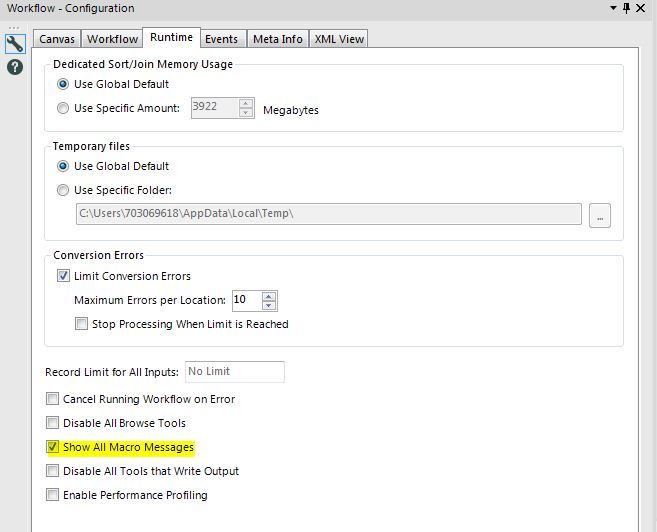

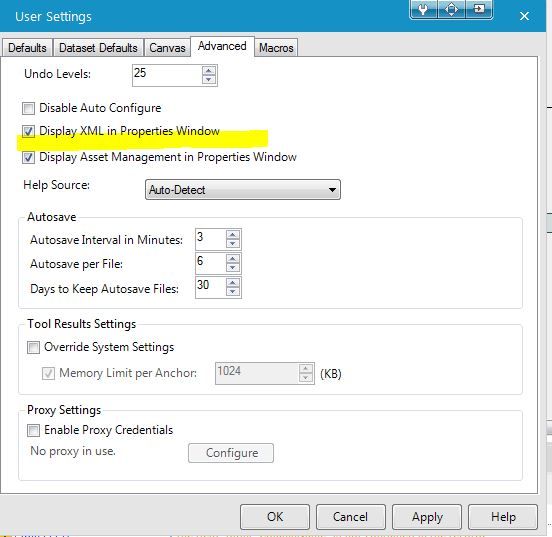

I would love to have a User Setting Default where it allows the "Show All Macro Messages" to be on for all workflows instead of having to turn it on for each workflow.

As a GIS department, we use numerous spatial datasets on a daily basis. Many of these are quite large and we are looking for ways to optimize their performance. Right now, we are forced to use an indexed folder system to increase performance, but we would like to move to Calgary databases. The problem is, that Calgary databases only hold point features which limits the number of our datasets that we can use it with. If we could spatially index line and polygon features as well, that would dramatically increase the usefulness of a Calgary database.

Hi there,

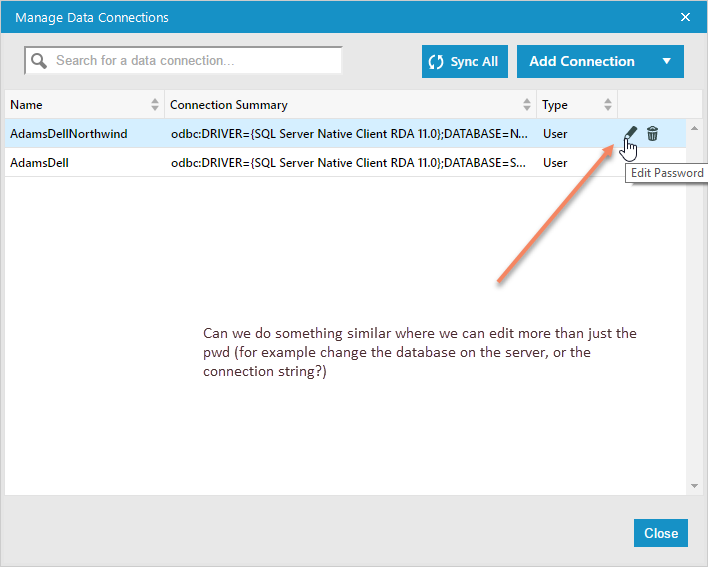

When you use DB connection aliases that are saved in Alteryx, it's currently not easy to edit them when you move a database to a different location.

Can we do something similar to the "Edit Password" function, but which also allows the user to edit the database or server, so that this applies to all workflows using this alias?

When dealing with very large tables (100M rows plus), it's not always practical to bring the entire table back to the designer to profile and understand the data.

It would be very useful if the power of the field summary tool (frequency analysis; evaluating % nulls; min & max values; length of strings; evaluating if the type is appropriate or could be compressed; whether there is whitespace before or after) could be brought to large DB tables without having to bring the whole table back to the client.

Given that each of these profiling tasks can be done as a discrete SQL query; I would think that this would be MASSIVELY faster than doing this client-side; but it would be a bit of a pain to write this tool.
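As a rough sketch of the idea, the Python example below pushes several field-summary style checks into a single SQL query so that only one summary row comes back to the client. It uses an in-memory SQLite table purely as a stand-in for a large database table; `profile_column`, the `customers` table and its data are invented for the example, and the exact SQL functions would vary by database.

```python
import sqlite3


def profile_column(conn: sqlite3.Connection, table: str, column: str) -> dict:
    """Profile one column in the database and return a single summary row."""
    # Identifiers are interpolated directly, so only pass trusted names here.
    sql = f'''
        SELECT
            COUNT(*) AS row_count,
            SUM(CASE WHEN "{column}" IS NULL THEN 1 ELSE 0 END) AS null_count,
            COUNT(DISTINCT "{column}") AS distinct_count,
            MIN("{column}") AS min_value,
            MAX("{column}") AS max_value,
            MAX(LENGTH("{column}")) AS max_length,
            SUM(CASE WHEN "{column}" <> TRIM("{column}") THEN 1 ELSE 0 END) AS padded_count
        FROM "{table}"
    '''
    cursor = conn.execute(sql)
    names = [description[0] for description in cursor.description]
    return dict(zip(names, cursor.fetchone()))


# Tiny in-memory table standing in for a 100M+ row table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (name TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?)",
    [("Ann",), (" Bob ",), (None,), ("Ann",)],
)
profile = profile_column(conn, "customers", "name")
print(profile)
```

Here `padded_count` counts values with leading or trailing whitespace and `null_count / row_count` gives the percentage of nulls. A frequency analysis would be a separate `GROUP BY` query along the same lines.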

If there is interest in this - I'm more than happy to work with the Alteryx team to look at putting together an initial mockup.

Cheers

Sean

Hi there,

When you connect to a SQL server in 11, using the native SQL connection (thank you for adding this, by the way - very very helpful) - the database list is unsorted. This makes it difficult to find the right database on servers containing dozens (or hundreds) of discrete databases.

Could you sort this list alphabetically?

I would like to request that IBM Big Insights become a supported data source. Currently I have been unable to connect Alteryx Designer to Big Insights through any ODBC driver.

Or ability to batch change the connection string from A --> B for all tools using A.

Or ability to set a default "saved connection" for a workflow and if you change it cascade the change to all tools.

Case: you have numerous connections in a workflow to a database. You then either:

a) move your data somewhere else and need to replace all the connections

b) OR have a standard process for working against DEV, Staging, etc. and want to switch the workflow to use a different saved connection
