Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think it could be improved:

> by showing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
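To make the second request concrete, here is a minimal Python sketch of one way to balance a labelled image list outside of Designer, by downsampling every label to the size of the smallest one. The function name, the `(path, label)` layout, and the file names are assumptions made for illustration; this is not how the Image Recognition tool works internally.

```python
import random
from collections import defaultdict


def balanced_sample(items, seed=0):
    """Downsample so that every label keeps the same number of items.

    `items` is a list of (path, label) pairs (a hypothetical layout).
    """
    by_label = defaultdict(list)
    for path, label in items:
        by_label[label].append(path)
    if not by_label:
        return []
    # The smallest class sets the per-label quota.
    quota = min(len(paths) for paths in by_label.values())
    rng = random.Random(seed)  # fixed seed so the split is repeatable
    balanced = []
    for label in sorted(by_label):
        for path in rng.sample(by_label[label], quota):
            balanced.append((path, label))
    return balanced


# Hypothetical file names: three "cat" images, two "dog" images.
images = [
    ("cat1.png", "cat"), ("cat2.png", "cat"), ("cat3.png", "cat"),
    ("dog1.png", "dog"), ("dog2.png", "dog"),
]
balanced = balanced_sample(images)
```

Downsampling discards data from the larger classes; oversampling the smaller classes would be the alternative if every image needs to be kept.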

Question: will users be able to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))

At present, users can create a new field or update an existing one within the formula tool.

When making changes to an additional column, I often have to then add a select tool to rename this (or remove the original column if I make another with the correct name). Therefore, it'd be great if there was an option to rename the output of the formula as part of the configuration. Perhaps a tick box to 'Rename column upon output' along with a text box, where the Data Type selection is.

This is a fairly minor QoL thing but it could definitely trim down the number of tools used in some cases. In terms of referencing the field itself, maybe the formula tool could use [This field] or something descriptive to dynamically reference it, rather than the actual name which will be edited.

As an aside, I'm not sure if it's a technical limitation, but it'd also be brilliant if the field size could be changed for existing columns (within the limitations of the data type), rather than being static.

While the Results window allows sorting and filtering, the configuration is lost every time the user switches to another tool within the same run. It would be good if there was a 'Retain' button so that the user does not have to set this up again each time the tool is switched or the canvas is rerun.

Most organizations have rules that expire passwords every 60-90 days. We have to go into each workflow that has an Email tool, update the password manually, and reschedule the workflow.

If the username and password for the Email tool could come from a field, we could then use a macro to update them.

The Input Data and Text Input Tools are visually distinct, so it's easy to see when a workflow is inputting live (File) or static (Text) data.

The Macro Input tool has the same appearance whether it's inputting a File or Text data, so you have to open the tool configuration to see whether it's inputting live (File) or static (Text) data. It would be great if there was a way to visually distinguish these two cases, perhaps even separating the macro tool into two tools, one for Files and one for Text.

The current Export Workflow user experience is extremely frustrating and it sometimes takes me several attempts to export the workflow with all of the correct assets. Some ideas for improving the UX:

- Allow the width of the window to be expanded or maximized. I often have many assets that start with the same folder structure name and I have to scroll to the right for each one to decide whether to check or uncheck it.

- Have a display option for "Group asset by Type" (e.g., Input, Output, Macro). I typically only package up my workflows with the embedded macros, not the Inputs or Outputs. (This is especially important during development and testing, when interim yxdb's are saved to facilitate QC and trouble-shooting.) I would like an easy way to "Check all Macros" without having to go through the list one-by-one. I may have over 100 assets; with the current UX, it's really hard to get all the right assets checked.

- Add an option to filter the display to see only the assets that have been checked.

- Add a way to copy the asset list (checked and/or unchecked) to the clipboard. This would allow us to confirm that all of the assets needed are included BEFORE EXPORTING.

- Add an option to Select All or Deselect All.

On the SELECT object - add a column "Value if Null". This would work like a COALESCE in SQL. For string fields, an empty string or "" would need to be an available option.
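As a rough illustration of the requested behaviour, the Python sketch below applies a per-field default wherever a value is null, in the spirit of SQL's COALESCE. The `defaults` mapping stands in for the proposed "Value if Null" column and is purely hypothetical, as are the field names.

```python
def value_if_null(value, default):
    """Return `default` when `value` is None, otherwise `value` unchanged."""
    return default if value is None else value


rows = [
    {"name": None, "qty": 3},
    {"name": "bolt", "qty": None},
]

# Hypothetical per-field "Value if Null" settings; note the empty string
# for the string field, as the idea asks for.
defaults = {"name": "", "qty": 0}

cleaned = [
    {field: value_if_null(row[field], defaults[field]) for field in row}
    for row in rows
]
```

Only true nulls are replaced here; an existing empty string or zero is left as it is.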

When numerous formulae exist within a single formula object, being able to "Expand All / Collapse All" would be most welcomed. :-)

Also - the ability to Disable/Enable a single formula in the formula object - also very nice to have.

Debugging could be dramatically simplified if each canvas object had the ability to be disabled/enabled. If disabled, the workflow would still pass through the object, but the object itself would be ignored.

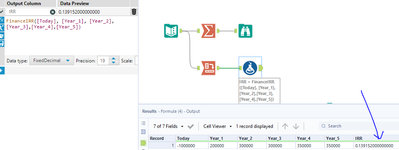

Currently both the Formula and Summarize tools round to 6 d.p. for finance calculations such as IRR. People coming from Excel will be used to a higher precision than this. It would be great to raise the precision to 8 d.p. or more, in line with other platforms.

The Find Replace tool currently does not run row by row: it finds anything from the find column and replaces it with the matching value from the replace column, across all rows. I was under the impression that it worked row by row and designed my workflow on that basis, rather than on whole columns.

A simple fix would be to allow users to group by RecordID, which should also speed up the find / replace tool for larger data sets I would imagine.

What I am going to do in the meantime is use Regex to replace the word out.
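For reference, the row-by-row behaviour described above can be sketched in Python with a regular expression built from each row's own find value. The field names `text`, `find`, and `replace` are assumptions for illustration, and the whole-word match (`\b`) is a design choice of this sketch rather than anything the Find Replace tool does.

```python
import re


def replace_per_row(rows):
    """Apply each row's own find/replace pair to that row's text only."""
    results = []
    for row in rows:
        if not row["find"]:
            # Nothing to look for: keep the text unchanged.
            results.append(row["text"])
            continue
        # re.escape treats the find value as literal text, not as a pattern.
        pattern = re.compile(r"\b" + re.escape(row["find"]) + r"\b")
        replacement = row["replace"]
        # A function replacement avoids backslash interpretation in the value.
        results.append(pattern.sub(lambda match: replacement, row["text"]))
    return results


rows = [
    {"text": "red car, red bike", "find": "red", "replace": "blue"},
    {"text": "red apple", "find": "apple", "replace": "pear"},
]
updated = replace_per_row(rows)
```

In the second row, "red" is left alone even though it appears in the find column of the first row, which is the difference from a whole-column find and replace.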

Thanks!

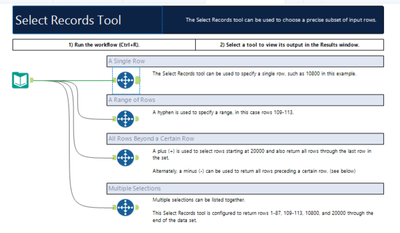

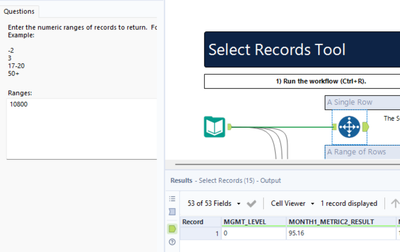

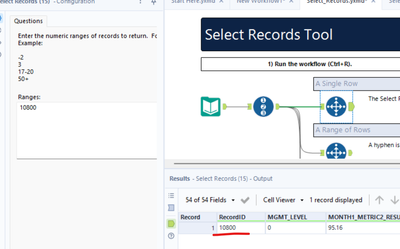

I wanted to understand the purpose of the Select Records tool. The text in the example explains the tool, but if a Record ID tool is added, the result becomes obvious and visibly ties back to the record selection, without having to read the explanation first. At the same time, the user gets to know the Record ID tool.

Without the Record ID Tool

With the Record ID Tool

I was just responding to a post about the Make Columns tool, and I noticed that there is not an example workflow for this tool built into Designer. It is also missing from the Transform category, so I never think of it.

Hello,

It would be beneficial to implement conditional formatting in the Formula tool (similar to Excel), allowing the cell style (e.g. background color or bold text) to change based on a specific rule. Currently this functionality is available in the Table tool, but it would be more convenient to use it in the Formula tool and avoid the Table tool.

Hello,

Please enhance the 'IN' functionality (e.g. in the Filter tool) so that ranges can be used instead of listing each individual value. For example:

instead of [ID] IN (1,2,3,52,53,54,100,101,102), something like [ID] IN (1-3,52-54,100-102).
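To show what the range syntax would expand to, here is a small Python sketch that parses a list such as `1-3,52-54,100-102` into the individual values it stands for. The function name is hypothetical, and the sketch assumes non-negative integers only, since a leading minus sign would be ambiguous with the range separator.

```python
def parse_in_list(spec):
    """Expand a spec like "1-3,52-54" into the set of integers it covers."""
    values = set()
    for part in spec.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            low_text, high_text = part.split("-", 1)
            low, high = int(low_text), int(high_text)
            if low > high:
                raise ValueError(f"range is reversed: {part!r}")
            values.update(range(low, high + 1))  # ranges are inclusive
        else:
            values.add(int(part))
    return values


allowed = parse_in_list("1-3,52-54,100-102")
ids = [1, 4, 53, 99, 102]
matching = [record_id for record_id in ids if record_id in allowed]
```

Here `allowed` holds the same nine values as the long form of the expression, and `matching` keeps only the IDs that fall inside one of the ranges.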

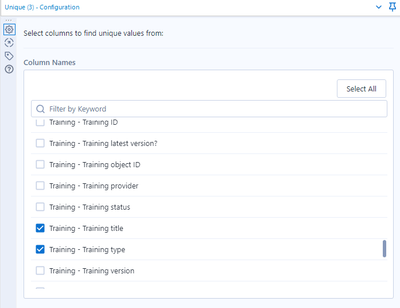

It would be nice if the fields which are selected for the Unique tool can be easily visible. (by way of grouping selected fields etc)

The issue is that if a few out of many fields are selected to be considered for Unique, it is hard to review/check which are the fields that have been selected in the Unique Tool configuration.

Here's an example. It is difficult to see all the fields which have been selected. (There are 7 fields selected in this example.)

It'd be great to have all DCM connections available in the Data connections window.

Also, when "Use Data Connection Manager (DCM)" is ticked, the screen should default to the DCM connection list.

The XML Parse tool has a checkbox to ignore errors and continue. This idea works for all options that allow you to ignore errors. It would be great if XML Parse had 2 outputs, 1 for successful records and another for the errored records. This would make it much easier to identify and update (if necessary) errored records.

In my view this would make it more similar to other tools like Filter or Spatial Match where records that don't fit your criteria follow a different flow.
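The two-output behaviour could look something like the following Python sketch, which uses the standard library's `xml.etree.ElementTree` to route each record to either a "parsed" or an "errored" list. The function name, the output field names, and the sample records are assumptions for illustration only.

```python
import xml.etree.ElementTree as ET


def parse_with_error_output(records):
    """Split XML strings into successfully parsed and errored records."""
    parsed, errored = [], []
    for index, xml_text in enumerate(records):
        try:
            root = ET.fromstring(xml_text)
        except ET.ParseError as exc:
            # Keep the original text and the reason, so the record can be fixed.
            errored.append({"record": index, "xml": xml_text, "error": str(exc)})
        else:
            parsed.append({"record": index, "root_tag": root.tag})
    return parsed, errored


records = [
    "<order><id>1</id></order>",
    "<order><id>2</order>",  # mismatched closing tag
]
parsed, errored = parse_with_error_output(records)
```

Keeping the error message alongside the original text is what makes the second output useful for identifying and correcting the bad records.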

Thanks for considering

I would like to suggest adding a configuration option to the Block Until Done tool that allows the user to prioritize the release of data streams across multiple Block Until Done tools in the same module.

In the example below, the objective is to update multiple sheets in a single Excel workbook. Each sheet is a different data stream that cannot be unioned with the others, so the workaround of filtering a single stream and feeding the results into multiple Block Until Done tools is not possible.

What I would like to be able to do is have a configuration where Block Until Done #2 will not allow the data stream to pass through until Block Until Done #1 is complete, then Block Until Done #3 will not pass the data stream through until Block Until Done #2 is complete, and so forth through all the Block Until Done instances.

In the current Output Data tool, when choosing a bulk loader option, say for Teradata, the tool automatically requests the first column to be the primary index. That is absolutely incorrect, especially on Teradata because of how it might be configured. My Teradata management team tells me that the created table, whether in a temp space or not, becomes very lopsided and doesn't distribute across the "amps" appropriately.

They recommend that instead of that, I should specify "NO PRIMARY INDEX" but that is not an option in the Output Tool.

The Output tool does not allow any database specific tweaks that might actually make things more efficient.

Additionally, when using the Bulk Loader, if the POST SQL uses the table created by the bulk loading, I get an error message that the data load is not yet complete.

It would be very useful if the POST SQL were executed only after the bulk data is actually loaded and complete, not merely cached by Teradata (or any other database engine) and waiting to be committed.

Furthermore, if I wanted either the POST SQL or some such way to return data or status or output, I cannot do so in the current Output Tool.

It would be very helpful if there was a way to allow that.

Today, I am able to take an Excel file from a folder and drag it onto the canvas, which automatically creates an Input Data tool.

I would like to be able to drag an Excel file right from Outlook to do the same!
