Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea? Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by listing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (in order to have an equivalent number of images for each of the labels),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool, it is certainly perfectible, but very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible thanks to image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))

For most of our "Production" runs, we launch our apps with an XML file containing the parameters sent to the app.

We would like to have the path of this file available as an Engine Constant.

- Category Apps

- Desktop Experience

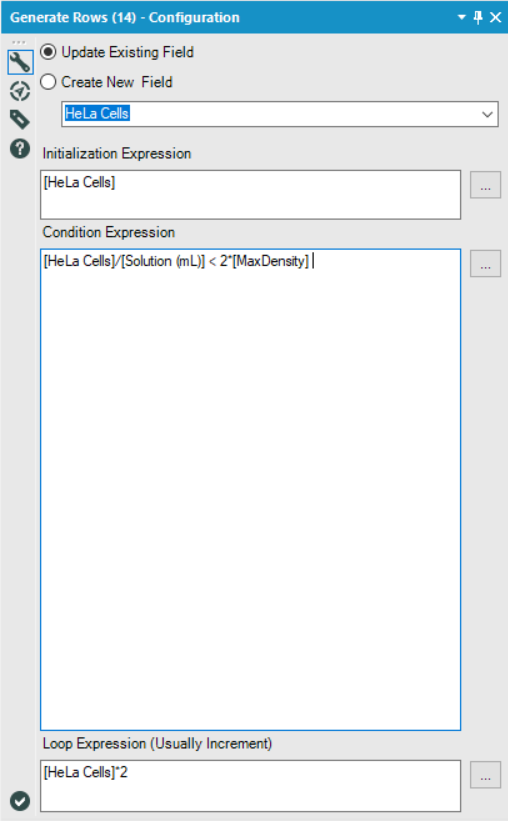

When working on the Weekly Challenge #108, I was trying to design a non-macro solution.

I ended up settling on the Generate Rows tool and was trying to find a way to generate rows until I had reached or exceeded the maximum density. However, I ran into an issue where I'd always have one too few rows, since the final row I was looking for was the one that broke the condition I specified.

In order to get around this, I came up with the following solution:

Essentially, I just set my condition to twice that of the true threshold I was looking for. This worked because I was always doubling the current value in my Loop Expression, and so anything which broke the 'actual' condition I was looking for ([MaxDensity]), would necessarily also break the second condition if doubled again.

However, for many other loop expressions, this sort of solution would not work.

My idea is to include a checkbox which, when selected, would also generate the final row which broke the specified condition.

By adding such a checkbox, it would allow users to continue using the Generate Rows tool as they already do, but reduce the amount of condition engineering that users are required to do in order to get that one extra row they're looking for, and reduce the number of potentially unseen errors in their workflows.
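The proposed checkbox can be sketched outside Alteryx. The Python below is only an illustration of the idea, not how the Generate Rows tool is implemented: `generate_rows`, `include_breaking_row`, and the threshold of 100 are hypothetical names and values chosen for the example.

```python
def generate_rows(initial, condition, step, include_breaking_row=False):
    """Emit values while `condition` holds, like a Generate Rows loop.

    `include_breaking_row` is the hypothetical checkbox: when True, the
    first value that fails the condition is emitted as well.
    """
    rows = []
    value = initial
    while condition(value):
        rows.append(value)
        value = step(value)
    if include_breaking_row:
        rows.append(value)  # the row that broke the condition
    return rows


max_density = 100  # assumed threshold, for illustration only


def double(v):
    return v * 2


# Current behaviour: stops one row short of reaching the threshold.
current = generate_rows(1, lambda v: v < max_density, double)

# Proposed behaviour: also keeps the row that reached or exceeded it.
proposed = generate_rows(1, lambda v: v < max_density, double,
                         include_breaking_row=True)

# The workaround from the post: double the threshold instead.
workaround = generate_rows(1, lambda v: v < 2 * max_density, double)
```

Here `current` ends at 64, while `proposed` and `workaround` both end at 128. The workaround matches only because the loop expression doubles the value; with a different step, such as adding 30, the doubled threshold would generate extra rows, which is the limitation described above.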

- Category Preparation

- Desktop Experience

- Feature Request

- Tool Improvement

Hi,

I am sure that I can't be the only person who would be interested in an output tool that allows categorical fields on both axes. This would allow you to visualise the following example. I would suggest that it be either similar to the heatmap with boxes, or that the colour / size of each entry be determined by a third numerical value, such as 'Confidence' from the table below. There might be ways to extend the idea as well, such as a fourth parameter that puts text or another number in the box, but it would be useful and not too hard, I am sure.

| LHS | RHS | Support | Confidence | Lift | NA |
|---|---|---|---|---|---|
| {Carrots Winter} | {Onion} | 5.01E-02 | 0.707070707 | 1.298568507 | 210 |
| {Onion} | {Carrots Winter} | 5.01E-02 | 9.20E-02 | 1.298568507 | 210 |
| {Carrots} | {Onion} | 4.39E-02 | 0.713178295 | 1.309785378 | 184 |
| {Onion} | {Carrots} | 4.39E-02 | 8.06E-02 | 1.309785378 | 184 |
| {Peas} | {Onion} | 3.20E-02 | 0.428115016 | 0.786253301 | 134 |
| {Onion} | {Peas} | 3.20E-02 | 5.87E-02 | 0.786253301 | 134 |
| {Bean} | {Onion} | 2.20E-02 | 0.372469636 | 0.68405795 | 92 |
| {Carrots Nantaise} | {Onion} | 2.08E-02 | 0.483333333 | 0.88766433 | 87 |

Many thanks in advance for considering this,

Peter

- Category Input Output

- Category Reporting

- Data Connectors

- Desktop Experience

I am parsing retailer promotions and have two input strings:

1. take a further 10%

2. take an additional 10%

I am using the regex parse tool to parse out the discount value, using the following regex:

further|additional (\d+)%

When the input contains examples of both options (i.e., 'further' and 'additional'), the tool only seems to parse the first one encountered.

E.g., if I state the regex string as:

further|additional (\d+)%

It only parses line 1 above

And if I state the regex string as:

additional|further (\d+)%

It only parses line 2
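One possible explanation, offered as an assumption rather than a confirmed diagnosis of the RegEx tool: in Python's `re` module, and in Perl-style regex flavours generally, `|` has the lowest precedence, so `further|additional (\d+)%` reads as "`further`" OR "`additional (\d+)%`" and the capture group belongs to only one branch. The sketch below reproduces that in Python, which may not match the Alteryx engine exactly, and shows a grouped pattern that captures the number for both inputs. The helper `discount` is a hypothetical name.

```python
import re

inputs = ["take a further 10%", "take an additional 10%"]

# Ungrouped: the alternation splits the whole pattern into
#   "further"   OR   "additional (\d+)%"
# so the capture group exists in the second branch only.
ungrouped = re.compile(r"further|additional (\d+)%")

# Grouped: a non-capturing group limits the alternation to the two
# keywords, and the capture group applies to both.
grouped = re.compile(r"(?:further|additional) (\d+)%")


def discount(pattern, text):
    """Return the captured discount value, or None if nothing was captured."""
    match = pattern.search(text)
    return match.group(1) if match else None


ungrouped_results = [discount(ungrouped, s) for s in inputs]
grouped_results = [discount(grouped, s) for s in inputs]
```

In Python, `grouped_results` is `['10', '10']`, while `ungrouped_results` has a captured value for only one of the two strings. If the RegEx tool follows the same precedence rule, wrapping the keywords in `(?:...)` should be enough.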

- Category Parse

- Desktop Experience

Create a standardized Mailbox application that could bolt onto Alteryx Server, to handle incoming attachments from sources like a Service Desk (Service Now for example) and other applications.

Essentially, this covers anything that regularly exports data as emailed attachments. Using a series of predefined user rules and a designated email address, Alteryx could put those attachments into various directories, ready for processing by automated Alteryx workflows.

This would save a huge amount of time, as people currently have to drag and drop files manually. At least, the in-house Alteryx designers here haven't been able to come up with a solution. It would also avoid messy programming around systems like Outlook and having to bend the security rules of those systems. Many, many other applications have this simple feature built into their products, especially service desks. I believe there would be a huge benefit to this very simple bolt-on.

Why do we need yxmd files? Why shouldn't the default be yxmz? The workflow logic is the same. If you don't add any interface tools it will run, and if you want to have an interface you can.

If you start off with an yxmd and then decide to make it an app you now have two files to worry about.

As a habit I no longer save things as yxmd. As soon as I start a new workflow I save it as an yxmz.

Thoughts?

- Category Apps

- Desktop Experience

It would be a huge time saver if you had an option to unselect the fields selected and select the fields not selected in the Select tool.

- Category Preparation

- Desktop Experience

Yes, I know, it's weird to have a situation where a decision tree decides that no branches should be created, but it happened, and caused great confusion, panic, and delay among my students.

v1.1 of the Decision Tree tool does a hard stop and outputs nothing when this happens, not even the successfully created model object, while v1.0 of the tool still creates the model ("O") and the report ("R"), just not the "I" (interactive report). Using v1.0 of the tool, I traced the problem down to this call:

dt = renderTree(the.model, tooltipParams = tooltipParams)

Where `renderTree` is part of the `AlteryxRviz` library.

I dug deeper and printed a traceback.

9: stop("dim(X) must have a positive length")

8: apply(prob, 1, max) at <tmp>#5

7: getConfidence(frame)

6: eval(expr, envir, enclos)

5: eval(substitute(list(...)), `_data`, parent.frame())

4: transform.data.frame(vertices, predicted = attr(fit, "ylevels")[frame$yval],

support = frame$yval2[, "nodeprob"], confidence = getConfidence(frame),

probs = getProb(frame), counts = getCount(frame))

3: transform(vertices, predicted = attr(fit, "ylevels")[frame$yval],

support = frame$yval2[, "nodeprob"], confidence = getConfidence(frame),

probs = getProb(frame), counts = getCount(frame))

2: getVertices(fit, colpal)

1: renderTree(the.model)

The problem is that `getConfidence` pulls `prob` from the `frame` given to it, and in the case of a model with no branches, `prob` is a list. And dim(<a list>) returns NULL. Ergo explosion.

A toy dataset that triggers the error is a sample from the Titanic Kaggle competition (in which my students are competing): predict "Survived" by "Pclass".

- Category Predictive

- Desktop Experience

Dear Team

When a heavy workflow is in the last stage of development, every run still starts from the Input tool. Instead, we could have a checkpoint tool that holds the data as it stood at that point, so that running the workflow starts from the checkpoint rather than from the original input.

This would reduce my development time a lot. Please advise.

Thanks in advance.

Regards,

Gowtham Raja S

- Category Input Output

- Category Preparation

- Data Connectors

- Desktop Experience

The error message is:

Error: Cross Validation (58): Tool #4: Error in tab + laplace : non-numeric argument to binary operator

This is odd, because I see that there is special code that handles naive bayes models. Seems that the model$laplace parameter is _not_ null by the time it hits `update`. I'm not sure yet what line is triggering the error.

- Category Predictive

- Desktop Experience

The CrossValidation tool in Alteryx requires that if a union of models is passed in, then all models to be compared must be induced on the same set of predictors. Why is that necessary -- isn't it only comparing prediction performance for the plots, but doing predictions separately? Tool runs fine when I remove that requirement. Theoretically, model performance can be compared using nested cross-validation to choose a set of predictors in a deeper level, and then to assess the model in an upper level. So I don't immediately see an argument for enforcing this requirement.

This is the code in question:

if (!areIdentical(mvars1, mvars2)){

errorMsg <- paste("Models", modelNames[i] , "and", modelNames[i + 1],

"were created using different predictor variables.")

stopMsg <- "Please ensure all models were created using the same predictors."

}

As an aside, why does the CV tool still require Logistic Regression v1.0 instead of v1.1?

And please please please can we get the Model Comparison tool built in to Alteryx, and upgraded to accept v1.1 logistic regression and other things that don't pass `the.formula`. Essential for teaching predictive analytics using Alteryx.

- Category Predictive

- Desktop Experience

- Tool Improvement

This would allow for a couple of things:

Set fiscal year for datasource to a new default.

Allow for specific filters on the .tde (We use this for row level security with our datasources).

Thanks

- Category Apps

- Desktop Experience

The Multi-Field Binning tool, when set to equal records, will assign any NULL fields to an 'additional' bin

e.g. if there are 10 tiles set then a bin will be created called 11 for the NULL field

However, when this is done, the NULLs are not removed from the equal distribution of records across the remaining bins (1-10). Assuming the NULLs should be ignored (if the rest are numeric), the binning of the remaining items is wrong.

The suggestion is to add a tickbox in the tool to say whether NULL fields should be binned (current setup) or ignored (removed completely before bin allocations are made).
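The difference the tickbox would make can be illustrated with a small Python sketch. This is not the Multi-Field Binning tool's actual algorithm: `equal_record_tiles` and `ignore_nulls` are hypothetical names, and the `ignore_nulls=False` branch is only one possible reading of the behaviour described above (NULLs counted in the total, then placed in an extra bin).

```python
def equal_record_tiles(values, tiles, ignore_nulls):
    """Assign 1-based tile numbers aiming for equal record counts per tile.

    ignore_nulls=True  : NULLs (None) are dropped before tile boundaries
                         are computed and receive no tile (None).
    ignore_nulls=False : NULLs still count towards the total used for the
                         boundaries and are placed in an extra bin, tiles + 1.
    """
    # Indices of the non-NULL records, ordered by value.
    order = sorted(
        (i for i, v in enumerate(values) if v is not None),
        key=lambda i: values[i],
    )
    total = len(order) if ignore_nulls else len(values)
    null_bin = None if ignore_nulls else tiles + 1

    result = [null_bin] * len(values)
    for rank, i in enumerate(order):
        result[i] = rank * tiles // total + 1
    return result


data = [5, None, 1, 3, None, 2, 4, 6]

nulls_ignored = equal_record_tiles(data, tiles=3, ignore_nulls=True)
nulls_counted = equal_record_tiles(data, tiles=3, ignore_nulls=False)
```

With the NULLs ignored, the six numeric records fall two per tile: `[3, None, 1, 2, None, 1, 2, 3]`. With the NULLs counted in the total, the same records are squeezed into tiles 1 and 2 and tile 3 stays empty: `[2, 4, 1, 1, 4, 1, 2, 2]`.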

- Category Preparation

- Desktop Experience

I've run into an issue where I'm using an Input (or Dynamic Input) tool inside a macro (attached) which is being updated via a File Browse tool. I work at a large company with several data sources, so we use a lot of Shared (Gallery) Connections. The issue is that whenever I try to enter any sort of aliased connection (Gallery or otherwise), it reverts to the default connection I have in the Input or Dynamic Input tool. It does not act this way if I use a manually typed connection string.

Initially, I thought this was a bug, so I brought it to Support's attention. They told me that this was the default action of the tool. So I'm suggesting that the default action of the Input and Dynamic Input tools be changed so that they can be overridden by aliased connections with File Browse and Action tools. The simplest way to implement this would probably be to translate the alias before pushing it to the macro.

The chart tool is really nice to create quick graphics efficiently, especially when using a batch macro, but the biggest problem I have with it is the inability to replace the legend icon (the squiggle line) with just a square or circle to represent the color of the line. The squiggly line is confusing and I think the legend would look crisper with a solid square, or circle, or even a customized icon!

Thank You!!!!

- Category Preparation

- Category Reporting

- Desktop Experience

Some of the predictive tools put out a "Score" field when output is run through the scoring tool, and some put out a "Score_1" and/or "Score_0". Since I frequently reuse the same workflow template for different predictive model types, it would be nice if they were consistent so that I wouldn't have to crash the workflow the first time through to get the input field names correct for downstream tools (e.g., Sort). Thank you

- Category Predictive

- Desktop Experience

I have long and large workflows, IMO, that are getting difficult to follow. I'd like the ability to highlight the joins and set specific colors, or at the very least to toggle highlights on and off. I'd also like to be able to move my joins so they are not curving all over the canvas.

- Category Join

- Desktop Experience

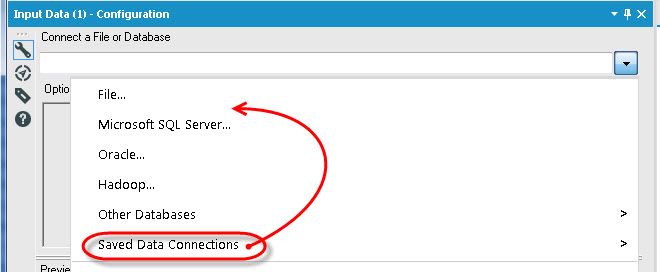

Hi,

In the Input tool, it would be useful to have the Saved Database Connections option higher in the menu, not last. Most users I know use this drop-down frequently, and I find myself always grabbing the Other Databases option instead, as it expands before my mouse gets down to the next one. I would vote to have it directly after File..., so that the top two options are either desktop data or "your" server data. To me, all the other options are one-offs used on a case-by-case basis and don't need to sit above things that are used far more frequently. Just two cents from a long-time user... love the product either way!

Thanks!!

Eli Brooks

Recently, I posted a problem about merging cells in a column that have the same value using the Table tool. I had a hard time finding a solution until @HenrietteH showed me how to do it. What she showed helped me a lot to do what I wanted in my module; however, it would be much easier if this feature were added to the Table tool itself.

Thank you

- Category Reporting

- Desktop Experience
