Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea? Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think it could be improved:

> by showing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (in order to have an equivalent number of images for each of the labels),

> by at least allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).
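As a rough illustration of the last two requests, here is a minimal Python sketch of what they could look like outside the tool. It is not how the Image Recognition tool works internally; the function names `balanced_sample` and `gray_to_rgb` and the `(path, label)` input shape are assumptions made for this example.

```python
import random
from collections import defaultdict


def balanced_sample(items, seed=0):
    """Downsample so that every label keeps the same number of items.

    `items` is a list of (path, label) pairs (a hypothetical input shape).
    """
    by_label = defaultdict(list)
    for path, label in items:
        by_label[label].append(path)
    # The smallest class decides how many items each label keeps.
    n = min(len(paths) for paths in by_label.values())
    rng = random.Random(seed)
    balanced = []
    for label, paths in sorted(by_label.items()):
        for path in rng.sample(paths, n):
            balanced.append((path, label))
    return balanced


def gray_to_rgb(gray):
    """Turn a 2-D grayscale image (rows of ints) into RGB by repeating each value."""
    return [[(v, v, v) for v in row] for row in gray]
```

Downsampling to the smallest class is only one way to balance labels; oversampling the smaller classes would be an alternative.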

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It certainly has room for improvement, but it is very simple to use, and I sincerely think that it will allow a greater number of people to understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))


Yes, I know, it's weird to have a situation where a decision tree decides that no branches should be created, but it happened, and caused great confusion, panic, and delay among my students.

v1.1 of the Decision Tool does a hard stop and outputs nothing when this happens, not even the successfully created model object, while v1.0 of the tool still creates the model ("O") and the report ("R"), just not the "I" (interactive report). Using the v1.0 version of the tool, I traced the problem down to this call:

dt = renderTree(the.model, tooltipParams = tooltipParams)

Where `renderTree` is part of the `AlteryxRviz` library.

I dug deeper and printed a traceback.

    9: stop("dim(X) must have a positive length")
    8: apply(prob, 1, max) at <tmp>#5
    7: getConfidence(frame)
    6: eval(expr, envir, enclos)
    5: eval(substitute(list(...)), `_data`, parent.frame())
    4: transform.data.frame(vertices, predicted = attr(fit, "ylevels")[frame$yval],
           support = frame$yval2[, "nodeprob"], confidence = getConfidence(frame),
           probs = getProb(frame), counts = getCount(frame))
    3: transform(vertices, predicted = attr(fit, "ylevels")[frame$yval],
           support = frame$yval2[, "nodeprob"], confidence = getConfidence(frame),
           probs = getProb(frame), counts = getCount(frame))
    2: getVertices(fit, colpal)
    1: renderTree(the.model)

The problem is that `getConfidence` pulls `prob` from the `frame` given to it, and in the case of a model with no branches, `prob` is a list. And `dim(<a list>)` returns NULL. Ergo explosion.

A toy dataset that triggers the error: a sample from the Titanic Kaggle competition (in which my students are competing). Predict "Survived" from "Pclass".

- Category Predictive
- Desktop Experience

Dear Team

Suppose we have a heavy workflow in the development phase and we are working on its last section. Every time we run the workflow, it starts again from the Input tool. Instead, we could have a checkpoint tool: the data flow would be fixed up to the checkpoint, and running the workflow would start from that checkpoint's output.

This would reduce my development time a lot. Please advise.
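To make the request concrete, here is a minimal Python sketch of the checkpoint idea, not of anything Designer does today. The name `run_from_checkpoint`, the pickle file, and the `upstream`/`downstream` callables are all assumptions made for illustration.

```python
import os
import pickle


def run_from_checkpoint(checkpoint_path, upstream, downstream):
    """Run `downstream` on the upstream data, reusing a saved copy when one exists.

    `upstream` stands in for the expensive early part of a workflow and
    `downstream` for the section still under development (both hypothetical).
    """
    if os.path.exists(checkpoint_path):
        # Checkpoint found: skip the expensive upstream step entirely.
        with open(checkpoint_path, "rb") as f:
            data = pickle.load(f)
    else:
        data = upstream()
        with open(checkpoint_path, "wb") as f:
            pickle.dump(data, f)
    return downstream(data)
```

Deleting the checkpoint file forces the upstream part to run again, which is the kind of explicit control a checkpoint tool would need to offer.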

Thanks in advance.

Regards,

Gowtham Raja S

+91 9787585961

- Category Input Output
- Category Preparation
- Data Connectors
- Desktop Experience

The error message is:

Error: Cross Validation (58): Tool #4: Error in tab + laplace : non-numeric argument to binary operator

This is odd, because I see that there is special code that handles naive Bayes models. It seems that the `model$laplace` parameter is _not_ null by the time it hits `update`. I'm not sure yet which line is triggering the error.

- Category Predictive
- Desktop Experience

The CrossValidation tool in Alteryx requires that if a union of models is passed in, then all models to be compared must be induced on the same set of predictors. Why is that necessary -- isn't it only comparing prediction performance for the plots, but doing predictions separately? The tool runs fine when I remove that requirement. Theoretically, model performance can be compared using nested cross-validation to choose a set of predictors in a deeper level, and then to assess the model in an upper level. So I don't immediately see an argument for enforcing this requirement.

This is the code in question:

    if (!areIdentical(mvars1, mvars2)){
      errorMsg <- paste("Models", modelNames[i], "and", modelNames[i + 1],
                        "were created using different predictor variables.")
      stopMsg <- "Please ensure all models were created using the same predictors."
    }

As an aside, why does the CV tool still require Logistic Regression v1.0 instead of v1.1?

And please please please can we get the Model Comparison tool built in to Alteryx, and upgraded to accept v1.1 logistic regression and other things that don't pass `the.formula`. Essential for teaching predictive analytics using Alteryx.

- Category Predictive
- Desktop Experience
- Tool Improvement

This would allow for a couple of things:

Set fiscal year for datasource to a new default.

Allow for specific filters on the .tde (We use this for row level security with our datasources).

Thanks

- Category Apps
- Desktop Experience

The Multi-Field Binning tool, when set to equal records, assigns any NULL values to an 'additional' bin.

E.g., if 10 tiles are set, then a bin called 11 is created for the NULL values.

However, when this is done, the NULLs are not removed from the equal distribution of bins across the remaining items (1-10). Assuming the NULLs should be ignored (if the rest are numeric), the binning of the remaining items is wrong.

The suggestion is to add a tickbox in the tool to say whether NULL values should be binned (current setup) or ignored (removed completely before the bin allocations are made).
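The requested behaviour can be sketched in a few lines of Python. This is only an illustration of the suggestion, not the tool's actual algorithm; `equal_record_tiles`, the `bin_nulls` flag, and the use of `None` for NULL are assumptions for this example.

```python
def equal_record_tiles(values, n_tiles, bin_nulls=True):
    """Assign each value a tile number 1..n_tiles with roughly equal counts.

    None stands in for NULL. Tiles are always computed over the non-NULL
    values only. If bin_nulls is True, NULLs go to an extra tile
    n_tiles + 1; otherwise they get no tile at all (None).
    """
    # Indices of the non-NULL values, ordered by value.
    order = sorted(
        (i for i, v in enumerate(values) if v is not None),
        key=lambda i: values[i],
    )
    tiles = [None] * len(values)
    count = len(order)
    for rank, i in enumerate(order):
        # Spread ranks 0..count-1 evenly over tiles 1..n_tiles.
        tiles[i] = rank * n_tiles // count + 1
    if bin_nulls:
        for i, v in enumerate(values):
            if v is None:
                tiles[i] = n_tiles + 1
    return tiles


print(equal_record_tiles([5, None, 1, 3, None, 7], 2))
# [2, 3, 1, 1, 3, 2]
print(equal_record_tiles([5, None, 1, 3, None, 7], 2, bin_nulls=False))
# [2, None, 1, 1, None, 2]
```

Either way, the two NULLs no longer take up slots in tiles 1 and 2, so the four numeric values split evenly.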

- Category Preparation
- Desktop Experience

I've run into an issue where I'm using an Input (or Dynamic Input) tool inside a macro (attached) which is being updated via a File Browse tool. I work at a large company with several data sources, so we use a lot of Shared (Gallery) Connections. The issue is that whenever I try to enter any sort of aliased connection (Gallery or otherwise), it reverts to the default connection I have in the Input or Dynamic Input tool. It does not act this way if I use a manually typed connection string.

Initially, I thought this was a bug, so I brought it to Support's attention. They told me that this was the default action of the tool. So I'm suggesting that the default action of the Input and Dynamic Input tools be changed to allow them to be overridden by aliased connections from File Browse and Action tools. The simplest way to implement this would probably be to translate the alias before pushing it to the macro.

(1) The green banner saying that the workflow has finished running should stay until dismissed

(2) The indicator on the tabs showing which workflows had run should be colour coded (still running / completed without errors / completed with errors)

Thanks!

- Desktop Experience
- Enhancement

The Email tool does not send out e-mails after an error has occurred in the workflow. While this is usually a good thing, it would sometimes be helpful to be able to send out e-mails in case of errors as well.

In particular, I want to send out an e-mail with a detailed and formatted custom error message.

Thus, please add a check box "Also send mail in case of errors" which is off by default.

Side note: The Event "Send mail After Run With Errors" does not work for me because it is too inflexible. Just sending out the OutputLog is not helpful because the error message might be hidden after hundreds of rows.

- Category Reporting
- Desktop Experience
- Enhancement

For analysis software, the Results window plays a crucial role.

However, the numbers are not left-aligned, making it difficult to identify a number at first glance.

As in most code editors, a monospace font is recommended; it also helps to judge text length.

Suggested Settings Adjustments:

1. Change of Font Type and Size: Include options for different fonts, including monospace.

2. Alignment: Provide options for left, right, and center alignment.

3. Whitespace: Provide an option to show whitespace.

- Desktop Experience
- Enhancement

I would love to have the option to easily disable a section of the workflow while diverting around the disabled tools.

I know the Detour and Detour End tools exist, but I think this functionality could be improved. My idea would be either/both of the following functions.

Break links between tools. Think of a workflow as a circuit board and the connections between tools as parts of a circuit. With every tool connected and enabled, the circuit is complete. However, if a section of the workflow is temporarily unneeded, it would be great to be able to break the connection between tools and then reconnect further downstream to complete the circuit. My idea would be to have an option on a connection line to break it temporarily (greying out the tools that follow) and to re-enable the flow further downstream. It's similar to what Detour and Detour End do, but without needing additional tools on the canvas.

| Everything enabled | [ tool ] ---- [ tool ] ---- [ tool ] ---- [ tool ] ---- [ tool ] ---- [ tool ] |

| First and last enabled but links to 4 tools in the middle are broken, diverting around them with no other tools needed. | [ tool ] ->( - )<- [ tool ] --/-- [ tool ] --/-- [ tool ] --/-- [ tool ] ->( + )<- [ tool ] |

Alternatively, if you were to select the unneeded tools in the workflow and place them into a container, then disable it, it could skip those disabled tools without breaking the circuit.

[ tool ] ---- [ tool ] ---- [ tool ] ---- [ tool ] ---- [ tool ] ---- [ tool ]

| [ tool ] -> | <- [ tool ] --/-- [ tool ] --/-- [ tool ] --/-- [ tool ] -> | <- [ tool ] |

- Desktop Experience
- Enhancement

Most tools do not change the number of records: Select, Data Cleansing, Record ID, Formula, Auto Field, Multi-Field/Multi-Row Formula, etc. It would be nice to be able to tell Alteryx for which tools to display the Connection Progress, specifically the Record Counts. This would reduce the clutter and allow the Record Counts to display only for the tools that matter to the analyst. Right now they display for all tools, regardless of whether the record count changed. My hope would be to tell Alteryx to display the Record Counts only for tools like Input, Output, Filter, Join, Summarize, Crosstab, Unique, etc., and to ignore all other tools.

- Desktop Experience
- New Request

When a workflow group is created or saved, could it by default always open the tabs in the order they were in when the workflow group was created?

As of now, the workflow tabs open in an unpredictable order, and the user must take great care to switch from tab to tab in the intended order. Sometimes they are in the "correct" order; other times they appear in a different order.

- Desktop Experience
- Enhancement

When running a job in the gallery, a file output has to be chosen every time, even if there is no other option. I propose that under "My Profile >> Workflow Defaults" users be able to choose a preferred default file format for outputs. If it is available then the gallery will automatically choose that, otherwise the user can pick.

- Desktop Experience
- Enhancement
- User Settings

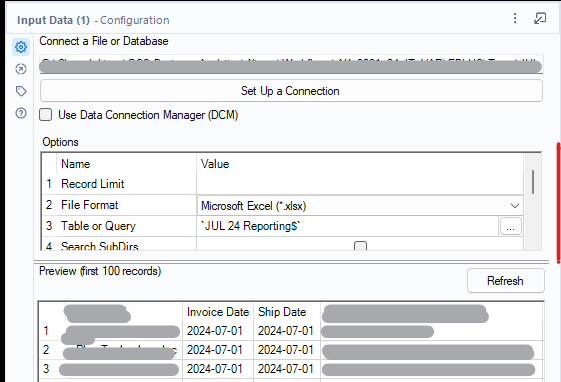

I work with lots of Excel files, and because they don't always have a consistent schema, I'm often changing the configuration.

The default Window frame/window for the "Options" panel in the Input data tool only shows 3-4 rows, plus title (the section in RED in the image below). As I have large screens

The panel size can be resized by dragging the line above the "Preview (first 100 records)". However, once I move from this tool, if I return to the tool, it defaults back to 3-4 rows, plus title.

I would like to be able to set the default size of the Options panel/frame/window.

- This would improve my efficiency when setting up flows

- This would improve debugging - I would be able to see all my parameters at a glance

- Category Input Output
- Category Interface
- Data Connectors
- Desktop Experience

Whenever I start something in Alteryx Designer that takes some time (e.g., opening a workflow) and I want to do something in another application in the meantime (e.g., Explorer), Alteryx Designer repeatedly grabs the Windows focus and brings itself to the front, interrupting my work in the other application. It does this multiple times during a single action (often several times per second), not only when the action has finished, so I have to press Alt-Tab multiple times to bring the other window to the front again.

First, this is annoying: If I purposely select a different tool to be in the front I want to work in that tool and not be disturbed by a different tool that catches the focus back.

Second: This cannot be good for performance. Sending the "I want the focus" signals to Windows also takes time.

Suggestion:

Switch off all requests for focus throughout Alteryx Designer. Instead, the Alteryx entry in the task bar might blink once or twice in green when the background action is completed.

If there are people who like this catching focus thing, then please introduce a setting so that it's possible to switch it off.

- Desktop Experience
- Enhancement

When using "Find and Replace", the content of the search term field ("Find") is cleared when switching between workflow windows. From my perspective, there's no reason for that. Why does Alteryx Designer decide that I don't want to search for the same term in another workflow?

Please change this behaviour so that the contents of "Find", "Search Locations", and "Replace" are preserved when switching between Designer windows.

- Desktop Experience
- Enhancement

I have a sales column in my dataset that includes both a dollar sign and a period (e.g., '$320,000.00'). When I use the Data Cleansing tool and select 'Remove unwanted characters' with the punctuation checkbox, it removes both the dollar sign and the period. However, I only want to remove the dollar sign. It would be great if @Alteryx could allow users to specify which characters they want to remove after selecting the punctuation checkbox. Thanks!
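For comparison, here is a small Python sketch of the requested behaviour, where the caller names exactly which characters to strip. The helper name `remove_chars` is an assumption for this example and is not part of any Alteryx tool.

```python
def remove_chars(text, unwanted):
    """Remove only the characters listed in `unwanted` from `text`."""
    # str.maketrans with a third argument maps those characters to None.
    return text.translate(str.maketrans("", "", unwanted))


print(remove_chars("$320,000.00", "$"))   # 320,000.00
print(remove_chars("$320,000.00", "$,"))  # 320000.00
```

The second call shows why a user-supplied character list is useful: the thousands separator can be dropped as well while the decimal point is kept.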

- Category Preparation
- Desktop Experience
- Enhancement

Hello team,

It would be really nice if an Interface tool could be given a default value that flows into the connected tool. Currently it is always blank, as no data flows in.

There are ways to work around this, such as running the workflow in Open Debug, but then if you want to amend the workflow you need to go back to the original workflow and run it again with Open Debug.

Of course, you can set static data for these fields, but then you must remove it before saving to the Gallery, which might cause future errors if you forget to delete the static data.

So if I added a Date tool, it would be nice to be able to select a date in that tool and have that date reflected in the workflow. This is less of an issue during initial development, as these tools are normally not set up at that stage. However, when you need to upgrade an existing workflow or amend one due to changes, that's where it would be very handy and would save a lot of time.

- Category Interface
- Desktop Experience
- Enhancement
