Alteryx Designer Desktop Ideas

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it by:

- listing the dimensional constraints next to each of the pre-trained models,
- adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),
- at the very least, allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))

Hello,

Here is a proposal for an issue that I frequently face at work.

Problem Statement -

Workflows that are scheduled or run manually on Server frequently fail because the Excel input file is open: another user has it open, someone forgot to close it before leaving the office, or for some other reason.

Proposed Solution -

Give the Input and Dynamic Input tools the ability to read Excel files even when they are open, so that workflows do not fail. This would save a lot of time and remove the need to regularly monitor scheduled workflows.
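Until something like this exists, one possible workaround outside the Input tool (for example in a Python tool or a pre-run script) is to read a temporary copy instead of the shared workbook. The following is only a minimal sketch: it assumes the copy itself is permitted while the workbook is open, which depends on how the other application has locked the file. The helper name `snapshot_copy` and the demo file name are hypothetical.

```python
import shutil
import tempfile
from pathlib import Path


def snapshot_copy(source):
    """Copy `source` into a fresh temporary folder and return the copy's path."""
    source = Path(source)
    temp_dir = Path(tempfile.mkdtemp(prefix="excel_snapshot_"))
    target = temp_dir / source.name
    # copy2 only needs read access to the source. Whether read access is
    # granted while the workbook is open elsewhere is an assumption here.
    shutil.copy2(source, target)
    return target


if __name__ == "__main__":
    # Hypothetical stand-in for a shared workbook.
    demo = Path(tempfile.mkdtemp()) / "report.xlsx"
    demo.write_bytes(b"placeholder bytes")
    copy = snapshot_copy(demo)
    print(copy.name, copy.read_bytes() == demo.read_bytes())
```

The workflow would then point at the returned path rather than at the shared file, so a lock on the original no longer matters once the copy has succeeded.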

- Category Input Output
- Data Connectors
- Enhancement

Whenever I overwrite an Excel sheet with data of the same format just different values (e.g. Q2 data versus Q1 data) all of my Pivot Tables break and I have to manually recreate them even though the schema didn't change. Somehow the Table is being deleted/removed and replaced with a completely different Table which is what causes the Pivot Tables to break. The only way to avoid this is to manually set the Cell Range, but who has time for that? The only solution I have found is to manually copy all values and paste them over the existing data which is very inefficient the more sheets you are working with.

- Category Input Output
- Data Connectors
- New Request

Improve the user experience by enabling search and filtering options in the Browse tool results, just as in the canvas. See attached pics.

- Category Input Output
- Category Preparation
- Desktop Experience
- Enhancement

The management of connections, especially in a collaborative environment, is not cohesive or intuitive.

- When configuring an Input tool for a Server / Gallery connection, hunting for the correct connection in a long list is quite frustrating. There is no search, no sort, and the list of connections is not sorted in any logical order by default.

SUGGEST:

- Sort the list of Server connections alphabetically by default
- Give the ability to search and sort
- Add additional connection metadata
  - Primary Owner (add metadata element to Curator screen & surface in the connection list)
  - Secondary Owner (add metadata element to Curator screen & surface in the connection list)
  - Connection String (surface the server name and login name to the connections list, omitting the password)
- Rethink the concepts of RCM, Gallery Connections and the external file method of storing credentials for In-DB connections.
  - Make one overarching, cohesive method of storing and sharing credentials across the platform.
  - Enable Artisans to create
  - Enable Artisans to share
  - Retire the concept of external files to store credentials, as is used with In-DB connections

- Category Input Output
- Data Connectors
- Enhancement

Apologies if this has been suggested or exists. I often find myself using manual Excel files as a data source. These files frequently use cell formatting elements, such as cell color and text color, to convey important information. However, when these files are imported into Alteryx, this valuable formatting information is unfortunately lost.

To address this, a dedicated input tool that can read Excel files with separate fields for these formatting elements would be very helpful. This would be incredibly beneficial, especially when the data lacks other fields that relate to the coloring. Currently, I manage to achieve this using a Python tool, but integrating this as a built-in feature in Alteryx would undoubtedly be more efficient and user-friendly. This enhancement would not only simplify data preparation but also ensure the preservation of the full context of the original Excel file.
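For readers wondering what the Python-based approach can look like, here is a rough sketch that pulls the fill color of each cell out of an .xlsx file using only the standard library. It is not the code used in the workflow described above. It assumes the sheet is stored as `xl/worksheets/sheet1.xml` (common, but not guaranteed), only reports colors stored as explicit `rgb` values (theme and indexed colors come back as `None`), and the function name `cell_fill_colors` is made up for illustration. In practice a spreadsheet library such as openpyxl is the more usual route.

```python
import zipfile
import xml.etree.ElementTree as ET


def cell_fill_colors(xlsx_file, sheet_part="xl/worksheets/sheet1.xml"):
    """Map cell references (e.g. 'A1') to the ARGB fill color of the cell, or None."""
    with zipfile.ZipFile(xlsx_file) as zf:
        styles = ET.fromstring(zf.read("xl/styles.xml"))
        sheet = ET.fromstring(zf.read(sheet_part))

    # Each <fill> may carry a <patternFill><fgColor rgb="AARRGGBB"/>.
    fills = []
    for fill in styles.iterfind("{*}fills/{*}fill"):
        fg = fill.find("{*}patternFill/{*}fgColor")
        fills.append(fg.get("rgb") if fg is not None else None)

    # A cell's "s" attribute indexes <cellXfs>; each <xf> points at a fill.
    xf_fill_ids = [
        int(xf.get("fillId", "0")) for xf in styles.iterfind("{*}cellXfs/{*}xf")
    ]

    colors = {}
    for cell in sheet.iterfind(".//{*}c"):
        style_index = int(cell.get("s", "0"))
        color = None
        if style_index < len(xf_fill_ids):
            fill_id = xf_fill_ids[style_index]
            if fill_id < len(fills):
                color = fills[fill_id]
        colors[cell.get("r")] = color
    return colors
```

The resulting dictionary can be turned into an extra column next to the cell values, which is the shape of output the proposed input tool would provide natively.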

- Category Input Output
- Data Connectors
- New Request

Hi, is it possible to add sheet names (for spreadsheet files) to the output of the Directory tool?
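As an illustration of the output being asked for, the sketch below lists one row per file and sheet for the .xlsx files in a folder. It relies on the fact that an .xlsx file is a zip archive whose `xl/workbook.xml` part names the sheets. It does not cover .xls or .xlsb files, and the function names are hypothetical.

```python
import zipfile
import xml.etree.ElementTree as ET
from pathlib import Path


def sheet_names(xlsx_file):
    """Return the sheet names declared in the workbook part of an .xlsx file."""
    with zipfile.ZipFile(xlsx_file) as zf:
        workbook = ET.fromstring(zf.read("xl/workbook.xml"))
    # {*} matches the element in any namespace (Python 3.8+).
    return [sheet.get("name") for sheet in workbook.iterfind("{*}sheets/{*}sheet")]


def directory_with_sheets(folder):
    """Return (file name, sheet name) pairs for every .xlsx file in `folder`."""
    rows = []
    for path in sorted(Path(folder).glob("*.xlsx")):
        for name in sheet_names(path):
            rows.append((path.name, name))
    return rows
```

Calling `directory_with_sheets` on a folder gives the file listing with an extra sheet-name column, repeated once per sheet.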

- Category Input Output
- Data Connectors
- Enhancement

Hi all,

I prepare formatted reports for my stakeholders, who want them sent straight to SharePoint. This can be achieved via OneDrive shortcuts on a laptop. However, when the workflow is sent to Server for full automation, the server's C drive is not set up with the appropriate shortcuts, and our admin team does not allow it.

So my request is to have the SharePoint output tool upgraded to push formatted files to SharePoint.

Thank you!

- Category Input Output
- Data Connectors
- Enhancement

Please update the Render tool to allow users to name the Excel sheet for the output. Alteryx currently errors when using the same naming convention that works in the normal Output tool.

- Category Input Output
- Data Connectors
- Enhancement

Hello all,

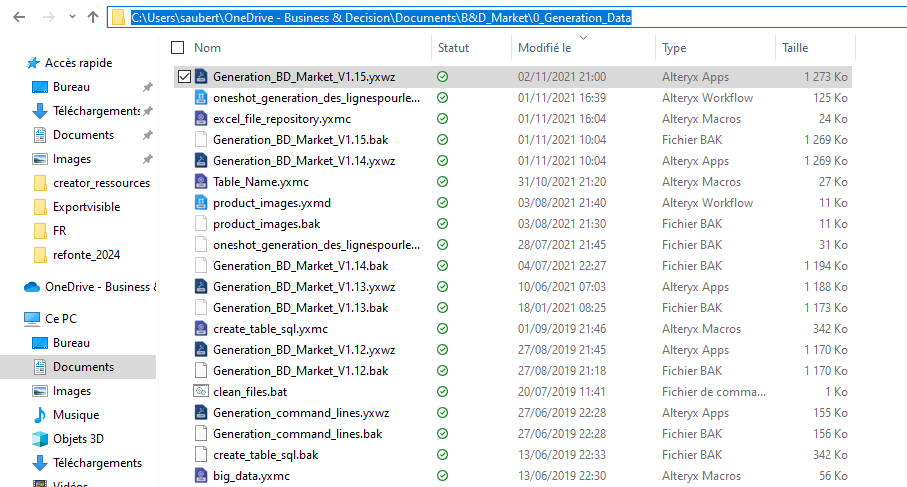

Here is the issue: I have a workflow in my OneDrive folder

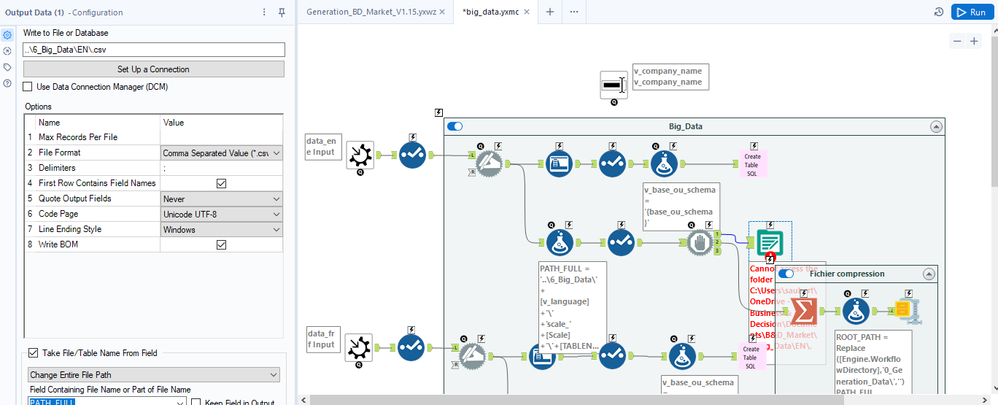

In that workflow, I use a macro that writes a file with a relative path (..\6_Big_Data\EN\.csv).

Strangely, it doesn't work, and the error message refers to a folder that doesn't exist (and that is also not the one I set):

ErrorLink: Output Data (1): https://community.alteryx.com/t5/*/*/ta-p/724327?utm_source=designer&utm_medium=resultsgrid|Cannot access the folder C:\Users\saubert\OneDrive - Business & Decision\Documents\B&D_Market\6_Big_Data\EN\.

I really would like that to work :)
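When debugging this kind of error, it can help to work out which base folder would produce the folder named in the message. The sketch below resolves the relative path against a few candidate base folders using Python's `ntpath` module. The base folders are hypothetical, and which folder Designer actually uses as the base for a macro's relative path is exactly what is unclear here.

```python
import ntpath


def resolve_relative(base_dir, relative_path):
    """Resolve a Windows-style relative path against a base folder."""
    return ntpath.normpath(ntpath.join(base_dir, relative_path))


if __name__ == "__main__":
    relative = r"..\6_Big_Data\EN\.csv"
    # Hypothetical candidates: the workflow's folder and the macro's folder.
    candidates = [
        r"C:\data\project\workflows",
        r"C:\data\project\macros\output",
    ]
    for base in candidates:
        print(base, "->", resolve_relative(base, relative))
```

Comparing each result with the folder in the error message shows which base folder was used.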

Best regards,

Simon

- Category Input Output
- Engine
- Enhancement

Please allow users to disable or ignore conversion errors in SharePoint List Input.

In SharePoint List Input I see the same conversion error about 10 times. Then....

"Conversion Error Limit Reached".

Can you simply show the error once or allow users to choose to ignore the error? (Union Tool allows users to ignore errors).

I am not using that SharePoint column in my workflow. Meanwhile, I have to show my workflow to a third party within the company. It is so annoying to see errors that do not apply to my workflow.

- Category Input Output
- Desktop Experience
- New Request
- User Settings

It would be neat to add a feature to the Output tool to allow grouping by rows, with all the data related to the group column viewable under a drop-down of the selected field.

I've heard that this is possible with Power Pivot, but it would be a nice feature in Alteryx.

Ex. A listing of all customers in a specific city -> Group by the "Neighborhood" column, the output should be a list of all neighborhoods in the city, with an option to drop down on each neighborhood to see its residents and their relevant data.
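To make the requested shape of the output concrete, here is a small sketch of the grouping itself: one entry per neighborhood, each holding the rows that would appear under its drop-down. The sample customers and the helper name `group_rows` are invented for illustration, and this says nothing about how the Output tool would render it.

```python
from collections import defaultdict


def group_rows(rows, key):
    """Group a list of dict rows by the value found under `key`."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row)
    return dict(groups)


if __name__ == "__main__":
    # Invented sample data.
    customers = [
        {"Name": "Ana", "Neighborhood": "Old Town"},
        {"Name": "Ben", "Neighborhood": "Harbor"},
        {"Name": "Cleo", "Neighborhood": "Old Town"},
    ]
    for neighborhood, residents in group_rows(customers, "Neighborhood").items():
        print(neighborhood, [r["Name"] for r in residents])
```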

Thanks!

- Category Input Output
- Data Connectors
- Enhancement

Problem statement -

Currently, we store our Alteryx data in the .yxdb file format. Whenever we want to fetch data, the whole dataset is first loaded into memory, and only then can we apply a Filter tool to get the required subset, which is a complete waste of time and resources.

Solution -

My idea is to introduce a YXDB SQL statement tool that can be used directly in a workflow to get the required dataset from a .yxdb file. I hope this will reduce the overall runtime of the workflow, improve performance, and reduce memory consumption.

- Category Input Output
- Data Connectors

Hello all,

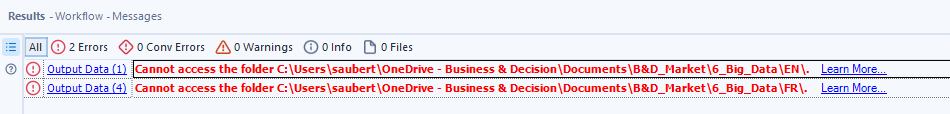

ADBC is a database connection standard (like ODBC or JDBC) but specifically designed for columnar storage (so databases like DuckDB, Clickhouse, MonetDB, Vertica...). This is typically the kind of thing that can make Alteryx way faster.

More info at https://arrow.apache.org/blog/2023/01/05/introducing-arrow-adbc/

Here is a benchmark made by the DuckDB team: 38x improvement

https://duckdb.org/2023/08/04/adbc.html

Best regards,

Simon

- Category In Database
- Category Input Output
- New Request

Writing to XLSB Files using Delete and Append does not behave properly.

Alteryx currently is having an issue with writing to an XLSB file using the Delete and append option with Take the file/table name From field.

Issue:

- Old data gets deleted, and new data is added but on the wrong row.

- New data is added after location where old data was originally.

- This output error for XLSB files only shows when using Take the file/table name From field. Static paths are fine.

Workaround:

Create a Batch macro to simulate the Take the file/table name From field function without actually using it.

Example of Issue:

| Record ID | Original File | ----> | Updated File |
| --- | --- | --- | --- |
| 1 | Old Data | ----> | |
| 2 | Old Data | ----> | |
| 3 | Old Data | ----> | |
| … | Old Data | ----> | |
| 1200 | Old Data | ----> | |
| 1201 | | ----> | New Data |
| 1202 | | ----> | New Data |
| 1203 | | ----> | New Data |
| … | | ----> | New Data |

- Category Input Output
- Enhancement

I am using Alteryx as an ETL Tool and then QlikSense for data visualization.

Alteryx only produces QVX outputs, which are the older QlikView file format. They work with QlikSense but slow the system down, so QlikSense support suggested using QVD outputs.

I want to suggest supporting QVD files, as QlikSense is now more widely used than QlikView and most users are migrating to QlikSense.

It would be more useful and efficient if Alteryx supported the latest file format.

- Category Input Output
- Enhancement

Hello all,

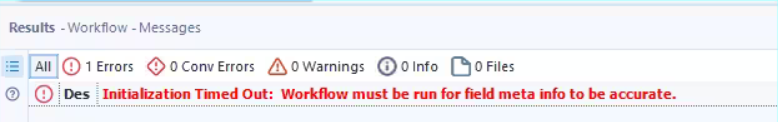

Sometimes, when retrieving your tables' metadata takes too long, you get this message:

Initialization Timed Out: Workflow must be run for field meta info to be accurate.

From what I understand, the timeout value is driven by Alteryx and the source system. However, I have some cases where the long wait is "normal", and that really hurts the user experience.

So, I would like the ability to change the default value in settings.

Best regards,

Simon

- Category In Database
- Category Input Output
- New Request
- User Settings

Hello all,

Apache Doris ( https://doris.apache.org/ ) is a modern data warehouse with a lot of ambition. It's probably the next big thing.

You can read the full doc here https://doris.apache.org/docs/get-starting/what-is-apache-doris but to sum it up, it aims to be THE reference solution for OLAP by claiming even better performance than Clickhouse, DuckDB or MonetDB. Even benchmarks from the Clickhouse team seem to agree.

Best regards,

Simon

- AMP Engine
- Category In Database
- Category Input Output
- New Request

Hello all,

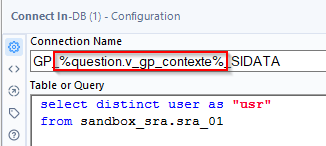

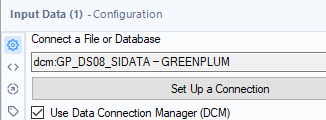

As of today, we use good old in-memory aliases to connect to our data sources in-memory. We have several environments, so we use constants to change the name of the in-memory alias during execution.

To illustrate:

Depending on the environment, the constant « v_gp_contexte » takes different values:

- GP_DS08_SIDATA for dev.
- GP_EE_SIDATA for prod.

Sounds nice, right? But now, we would like to use DCM and the nightmare begins :

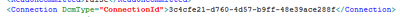

We can't manually change the name, and to settle the question: if we look at the XML of the workflow, we only find an ID, so editing it is useless.

(For information, DCM connections are stored in a SQLite database in C:\Users\{yourname}\AppData\Local\Alteryx.)

So, I would like to use DCM inside the in-memory alias (the in-memory alias is stored and can be edited), just like for In-DB connection aliases.
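For anyone who wants to check what such a SQLite file contains, the sketch below lists its table names through a read-only connection. The database file name and its schema are not given above, so nothing about them is assumed: you pass the path yourself. The helper name `list_tables` is hypothetical, and the connection is opened read-only on purpose so that nothing in the file can be changed.

```python
import sqlite3
from contextlib import closing
from pathlib import Path


def list_tables(db_path):
    """Return the table names of a SQLite file, opened in read-only mode."""
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    with closing(sqlite3.connect(uri, uri=True)) as conn:
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    return [name for (name,) in rows]
```

This only confirms what is stored where. It does not offer a supported way to rename or repoint a DCM connection, which is the point of the request.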

Best regards,

Simon

- Category Input Output
- Enhancement
- User Settings

The ability to output to Amazon WorkDocs via a special Output tool would be very helpful for anyone looking into using WorkDocs for personal or professional purposes. This is similar in functionality to the OneDrive connector.

- Category Input Output
- New Request

Hi all,

At present, Alteryx does not support DSN-free connections to Snowflake using the Bulk Connector. This is a critical functionality for any large company that uses Alteryx - and so I'm hoping that this can be changed in the product in an upcoming release. As a corollary - every DB connection type has to be able to work without DSNs for any medium or large size server instance - so it's worth extending this to check every DB connection type available in Alteryx.

Here are the details:

What is DSN-Free?

In order to be able to run our Alteryx canvasses on a multi-node server - we have to avoid using DSNs - so we generally expand connection strings that look like this:

odbc:DSN=DSNSnowFlakeTest;UID=Username;PWD=__EncPwd1__|||NEWTESTDB.PUBLIC.MYTESTTABLE

to instead have the fully described connection string like this:

odbc:DRIVER={SnowflakeDSIIDriver};UID=Username;pwd=__EncPwd1__;authenticator=Snowflake;WAREHOUSE=compute_wh;SERVER=xnb27844.us-east-1.snowflakecomputing.com;SCHEMA=PUBLIC;DATABASE=NewTestDB;Staging=local;Method=user

For Snowflake BL:

Now - for the Snowflake Bulk Loader the same process does not work and Alteryx gives the classic error below

With DSN:

snowbl:DSN=DSNSnowFlakeTest;UID=Username;pwd=__EncPwd1__;Staging=local;Method=user|||NEWTESTDB.PUBLIC.MYTESTTABLE

Without DSN:

snowbl:driver=SnowflakeDSIIDriver;UID=SeanBAdamsJPMC;pwd=__EncPwd1__;SERVER=xnb27844.us-east-1.snowflakecomputing.com;WAREHOUSE=compute_wh;SCHEMA=PUBLIC;DATABASE=NewTestDB;Staging=local;Method=user|||NEWTESTDB.PUBLIC.MYTESTTABLE
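For teams auditing their canvasses for DSN usage, a small sketch like the one below can split connection strings of the shape shown above into their parts and flag the ones that still rely on a DSN. It is based only on the examples in this post (a prefix, `key=value` pairs separated by `;`, and an optional `|||` table suffix) and is not an official description of the format. Values that themselves contain `;` are not handled, and the function names are hypothetical.

```python
def parse_connection(conn):
    """Split 'prefix:key=value;...|||table' into (prefix, params, table)."""
    body, _, table = conn.partition("|||")
    prefix, _, settings = body.partition(":")
    params = {}
    for item in settings.split(";"):
        if not item:
            continue
        key, _, value = item.partition("=")
        # Keys are upper-cased because the examples mix PWD and pwd.
        params[key.strip().upper()] = value.strip()
    return prefix, params, table or None


def uses_dsn(conn):
    """Return True when the connection string names a DSN."""
    _, params, _ = parse_connection(conn)
    return "DSN" in params


if __name__ == "__main__":
    with_dsn = "odbc:DSN=DSNSnowFlakeTest;UID=Username;PWD=__EncPwd1__|||NEWTESTDB.PUBLIC.MYTESTTABLE"
    print(parse_connection(with_dsn))
    print(uses_dsn(with_dsn))
```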

Many thanks

Sean

- Category Input Output
- Data Connectors
