Alteryx Designer Desktop Ideas


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by showing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help more people understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))


All input tools, such as Input Data and Dynamic Input, would have a new flag, "Skip on fail". With it, the tool would read all of the requested data, none of it, or only part of it, return whatever could be read, and not raise any error in the workflow.

If the 'Skip on fail' flag is false, the system should act as it does now.

If the 'Skip on fail' flag is true, the system should return only the data it accepted or managed to read on the default output, and could have a second output connection for the error log, so we can parse it and act on it, while the workflow still runs.
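To make the requested behaviour concrete, here is a minimal Python sketch of the same idea outside Alteryx. It is not how Designer works internally: the function name `read_with_skip_on_fail`, the CSV input format, and the `FileName`/`Error` columns of the error log are all assumptions made for illustration, and failures are handled per file rather than per row.

```python
import csv
from typing import Dict, Iterable, List, Tuple


def read_with_skip_on_fail(
    paths: Iterable[str], skip_on_fail: bool = True
) -> Tuple[List[Dict[str, str]], List[Dict[str, str]]]:
    """Read several CSV files and return (rows, errors).

    skip_on_fail=False: the first unreadable file raises, like a normal input.
    skip_on_fail=True: unreadable files are recorded in `errors` and skipped,
    so the caller still receives every row that could be read.
    """
    rows: List[Dict[str, str]] = []
    errors: List[Dict[str, str]] = []
    for path in paths:
        try:
            with open(path, newline="", encoding="utf-8") as handle:
                file_rows = list(csv.DictReader(handle))
        except (OSError, UnicodeDecodeError, csv.Error) as exc:
            if not skip_on_fail:
                raise
            # Hypothetical "second output": one error record per failed file.
            errors.append(
                {"FileName": str(path), "Error": f"{type(exc).__name__}: {exc}"}
            )
            continue
        rows.extend(file_rows)
    return rows, errors
```

The first return value plays the role of the default output and the second the role of the error-log output described above.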

- Category Input Output

- New Request

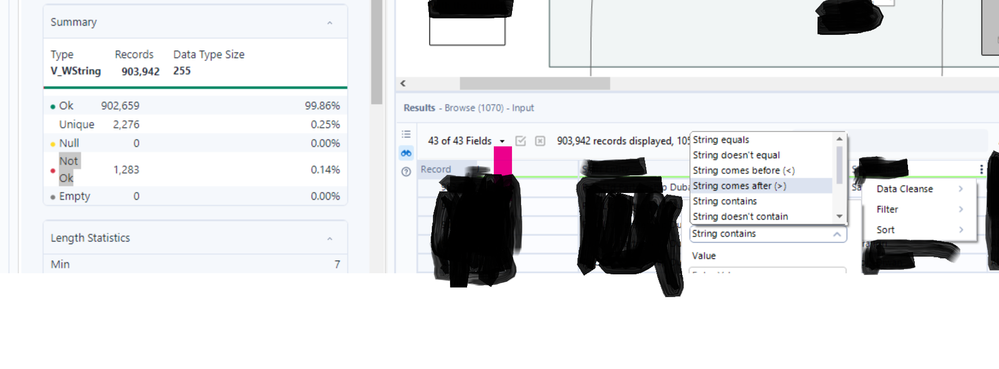

I am a big user of the browse tool and the filter option within the browse tool. In many cases I filter on multiple columns at the same time as I'm sure many users do. I am suggesting the following 2 enhancements to filter functionality in the browse tool:

1. After applying some filters, although I can see the filter icon activate at the top of the tool, it is difficult to know at a glance which columns have filters applied without clicking on every column heading and examining the filter settings. In the event a column is filtered, a filter icon could be provided at the top of the column to easily identify filtered columns, removing the need for users to memorise filtered columns.

2. After applying multiple filters, if a user clicks onto another tool within the workflow or anywhere else on the canvas - even accidentally - all filters will be removed and the user will need to reapply them. In my view it would make more sense to make the filters persistent, or at least give users the option of doing so. Doing so would be a big time saver.

- Category Input Output

- Data Connectors

Have you ever had the business deliver an Excel (EEK!) file to be passed into Alteryx with a different number of header rows (because it looks pretty and is convenient)? Never, you say? Lies!

I would suggest adding an option to the Input Data Tool that would give us the ability to concatenate multiple header rows. This would help enable accurate data profiling for columns when output and eliminate loss from unnecessary conversion errors. Currently, the options allow us to Start Data Input on Line X; however, if the header for a column is spread over multiple rows, the headers have to be entered manually after input, because we can only select the lowest header row to ensure the data is passed accurately. The solution would be to specify the number of rows that contain headers, concatenate them into a single row (ignoring nulls and carriage returns), and then output that as the header.

The current functionality, in a situation where each file has a variable number of header rows, causes forced errors such as a scientific string conversion of a numeric value.
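The concatenation rule described above (take the first N rows, join them per column, ignore nulls and carriage returns) can be sketched in a few lines of Python. This is only an illustration of the rule, not an Alteryx feature: the function name `merge_header_rows`, the space separator, and the `Field_N` fallback for columns with no header text are assumptions.

```python
from typing import List, Optional, Sequence


def merge_header_rows(
    rows: Sequence[Sequence[Optional[object]]], header_rows: int, sep: str = " "
) -> List[str]:
    """Concatenate the first `header_rows` rows, column by column."""
    top = rows[:header_rows]
    width = max((len(row) for row in top), default=0)
    headers: List[str] = []
    for col in range(width):
        parts: List[str] = []
        for row in top:
            cell = row[col] if col < len(row) else None
            if cell is None:
                continue  # ignore nulls
            # split()/join collapses carriage returns, newlines and extra spaces
            text = " ".join(str(cell).split())
            if text:
                parts.append(text)
        # Assumed fallback name when a column has no header text at all.
        headers.append(sep.join(parts) or f"Field_{col + 1}")
    return headers


sheet = [
    ["Sales", None, "Cost"],
    ["Q1\r\n2023", "Region", ""],
    [100, "North", 40],
]
print(merge_header_rows(sheet, header_rows=2))
```

The data rows would then start at row `header_rows`, so their types are no longer polluted by header text.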

- Category Input Output

- Data Connectors

If you cancel a workflow while it's writing to a file, the file creation is not rolled back, so a partial file is left behind.

This is problematic when working with incremental loads that rely on files from earlier runs.
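A common way to get this kind of rollback, shown here as a generic Python sketch rather than anything Alteryx does today, is to write to a temporary file in the same folder and only rename it onto the final name once writing has finished. The function name `write_atomically` and the line-based text format are assumptions for the example.

```python
import os
import tempfile
from typing import Iterable


def write_atomically(path: str, lines: Iterable[str]) -> None:
    """Write `lines` to `path` so that a cancelled write leaves no partial file."""
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file lives in the target folder so the final rename stays on one volume.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            for line in lines:
                handle.write(line + "\n")
        # Only a complete file ever appears under the final name.
        os.replace(tmp_path, path)
    except BaseException:
        # Cancelled (e.g. KeyboardInterrupt) or failed: discard the partial temp file.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
```

With this pattern, an incremental load that looks at files from earlier runs sees either the previous complete file or the new complete file, never a half-written one.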

- Category Input Output

- Enhancement

Embed the "Not OK" filter option in the Browse tool.

- Category Input Output

- Enhancement

- UX

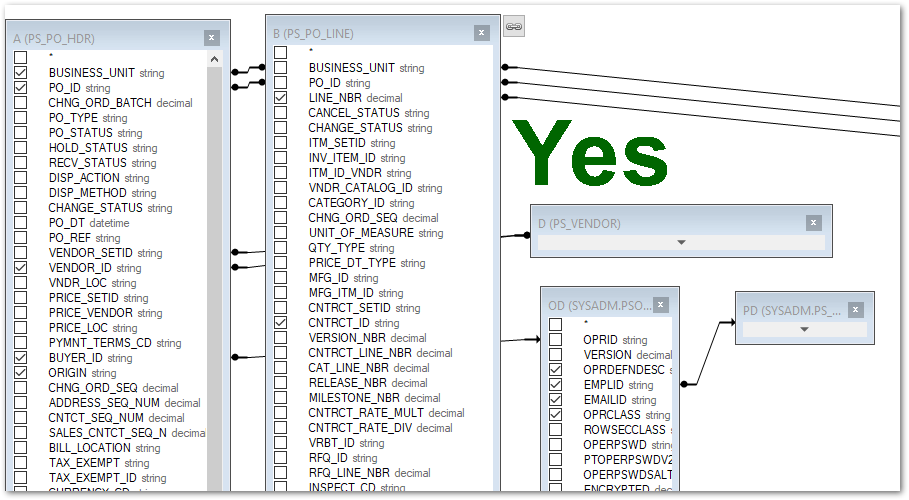

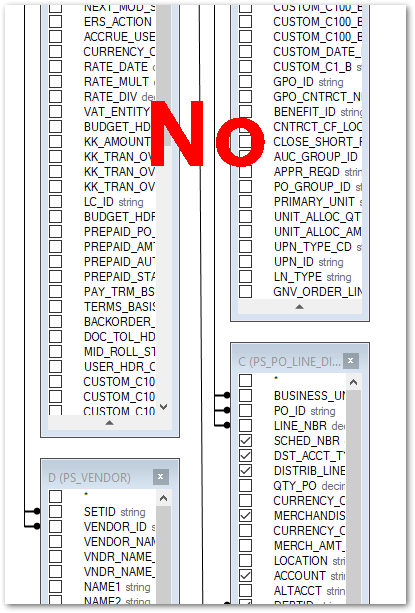

After closing the Table or Query option on an Input Data tool, the table layout in the Visual Query Builder view gets reset to stacking the tables/views on top of each other. It would be great if the layout stayed the way I left it the last time I closed it.

- Category Input Output

- Enhancement

Hi everyone,

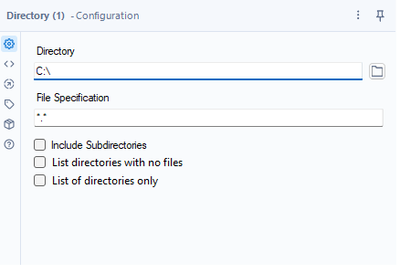

Add two additional features to a directory tool. Something like this:

Use cases:

1. Since it is not possible to use a folder browse on the Gallery, this could help a basic user create a list of possible folders to select from with the help of a drop-down

2. Directory analysis for cleaning purposes - currently, if you want to get a list of the folders with Alteryx, it takes forever for big file servers since Alteryx is mapping all the files

Both are achievable today through regex or a bat script.

Thank you,

Fernando Vizcaino
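For readers who need the folder-only listing today, here is a minimal Python sketch of the second use case. It is a workaround outside the Directory tool, not a description of it; the function name `iter_folders` and its `recursive` parameter are assumptions. It relies on `os.scandir`, which reports whether an entry is a directory without building a record for every file.

```python
import os
from typing import Iterator


def iter_folders(root: str, recursive: bool = True) -> Iterator[str]:
    """Yield sub-folder paths under `root`, never returning file entries."""
    stack = [root]
    while stack:
        current = stack.pop()
        try:
            with os.scandir(current) as entries:
                subdirs = [
                    entry.path
                    for entry in entries
                    if entry.is_dir(follow_symlinks=False)
                ]
        except OSError:
            continue  # unreadable folder (permissions, vanished share): skip it
        for path in subdirs:
            yield path
            if recursive:
                stack.append(path)
```

The resulting list could feed a drop-down of selectable folders (first use case) or a clean-up report (second use case).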

- Category Input Output

- Data Connectors

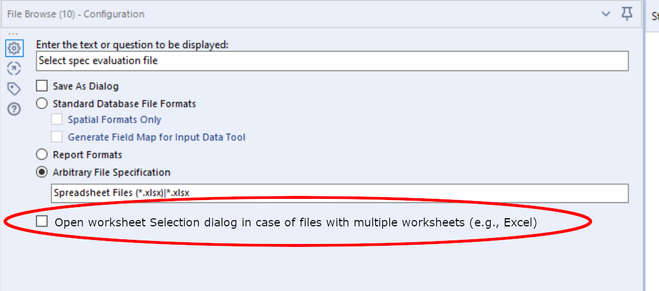

First of all, using File Browse on Excel files behaves inconsistently between running the Analytic App in Designer and in the Gallery:

- In Designer, the user is not asked which Excel worksheet should be selected.

- In the Gallery, the user is always asked which Excel worksheet should be selected.

Depending on the use case, either behaviour can be the right one:

- To load a specific Excel worksheet, the worksheet selection dialog is appropriate.

- When working with the entire Excel file (copying, getting the list of worksheets, etc.), the dialog is not helpful.

Thus, my idea is as follows:

- Add a checkbox to the File Browse tool which determines whether the worksheet selection dialog shall be opened (and the output will be <filename>.<ext>|<worksheet>) or not (and the output will be <filename>.<ext>) in case of Excel file selected.

- Make behaviour consistent in Alteryx Designer and Gallery.

- Category Input Output

- Enhancement

Enhancement request for the option to Encrypt ODBC credentials instead of just hashing them

- Category Input Output

- Enhancement

For companies that have migrated to OneDrive/Teams for data storage, employees need to be able to dynamically input and output data within their workflows in order to schedule a workflow on Alteryx Server and avoid building batch macros.

With many organizations migrating to OneDrive, a Dynamic Input/Output tool for OneDrive and SharePoint is needed.

- The existing Directory and Dynamic Input tools only work with UNC paths and cannot be leveraged for OneDrive or SharePoint.

- The existing OneDrive and SharePoint tools do not have a dynamic input or output component.

- Users have to build workarounds and custom macros for a common problem.

- Users have to map the OneDrive folders to their machine (and server if published to the Gallery)

- This option generates a lot of maintenance, especially on Server, to free up space consumed by the local version when outputting the data.

The enhancement should have the following components:

OneDrive/SharePoint Directory Tool

- Ability to read a single folder, with the option to include or exclude subfolders within OneDrive

- Ability to retrieve Creation Date

- Ability to retrieve Last Modified Date

- Ability to identify file type (e.g. .xlsx)

- Ability to read Author

- Ability to read last modified by

- Ability to generate the specific web path for the files

OneDrive/SharePoint Dynamic Input Tool

- Receive the input from the OneDrive/SharePoint Directory Tool and retrieve the data.

Dynamic OneDrive/SharePoint Output Tool

- Dynamically write the output from the workflow to a specific directory as individual files in the same location

- Dynamically write the output to multiple tabs on the same file within the directory.

- Dynamically write the output to a new folder within the directory

- Category Connectors

- Category Input Output

- Data Connectors

My team always runs into the same issue: when two people run two workflows at the same time and those workflows use the same Excel flat file, they clash with each other.

I would like Alteryx to develop a feature that allows read-only access to an input Excel file. That way, two workflows using the same input file would not clash, which is very useful for running workflows in parallel and would really increase our efficiency.

I know this feature is not easy to achieve; we have discussed it with the Alteryx team before.

I am open to alternative solutions to this problem.

Thanks!

- Category Input Output

- New Request

My team relies heavily on Dremio.

It would be great if the Alteryx team added Dremio as a dedicated data source input for Alteryx. It would make it much easier for us to configure and run things in the future.

Thanks!

- Category Input Output

- Data Connectors

A new type of Browse tool which can dynamically be renamed through a field could be helpful for the cases where Analytic Apps display output results in Browse tabs. It could both help create the name of the Browse tab dynamically and create multiple Browse tabs automatically.

- Category Input Output

- New Request

Being able to specify a name for the FileName field in the Input Tool configuration would be helpful for cases where a field named FileName is already present in the input data and has a different purpose than the newly added FileName field. Instead of having to use Field Info and other tools to rename the last field to something else (e.g. AYX_FileName), this would be an easier approach.

- Category Input Output

- Enhancement

Hello all,

As of now, there are two very distinct kinds of connection:

- in-memory alias

- in-database alias

Every single time I use an in-database alias, I have to create the same one for in-memory, since some operations cannot be performed in-database (such as pre-SQL or interface tools).

What does that mean for us:

- more complex setup operations/training/tests

- inefficient workflows that have to deal with two kinds of alias.

What I propose:

- a single "connection alias" that can be used either in-database or in-memory,

- one place to configure

- in-database or in-memory use being dependent on the tools you use

Best regards,

Simon

- Category In Database

- Category Input Output

- New Request

- User Settings

Users should get an alert that a file is open when using the Input tool. Currently, Alteryx just hangs when attempting to use an open file in an Input tool.

- Category Input Output

- Data Connectors

From what I can tell using ProcMon, presently when using the Directory tool to list files (including subdirectories) the Alteryx Engine runs a single threaded process.

When you're trying to find files by checking recursively in large network paths, this can take hours to run.

It would be great if the tools would split up lists of directories (maybe by getting two or three levels down first) and then run each of those recursive paths in parallel.

While it is possible to do this using a custom Python or cmd->PS command, it would be great if this could just be a native part of the application.
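As an illustration of the custom-Python workaround mentioned above, here is a minimal sketch that splits the root into its first-level sub-folders and walks each one in its own thread. It says nothing about how the Alteryx Engine is implemented; the function names and the `max_workers` default are assumptions, and it splits only one level down rather than the two or three suggested. Threads tend to help here because the time is mostly spent waiting on the network file system, though the actual gain depends on the server.

```python
import os
from concurrent.futures import ThreadPoolExecutor
from typing import List


def _walk_files(root: str) -> List[str]:
    """Recursively collect every file path under one sub-folder."""
    found: List[str] = []
    for dirpath, _dirnames, filenames in os.walk(root):
        found.extend(os.path.join(dirpath, name) for name in filenames)
    return found


def list_files_parallel(root: str, max_workers: int = 8) -> List[str]:
    """List all files under `root`, walking each first-level folder in parallel."""
    files: List[str] = []
    subdirs: List[str] = []
    with os.scandir(root) as entries:
        for entry in entries:
            if entry.is_dir(follow_symlinks=False):
                subdirs.append(entry.path)
            elif entry.is_file():
                files.append(entry.path)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # One recursive walk per first-level folder, run concurrently.
        for found in pool.map(_walk_files, subdirs):
            files.extend(found)
    return files
```

If one first-level folder holds most of the files, the split would need to go deeper to balance the work, which is the reason the idea above suggests descending two or three levels first.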

- Category Input Output

- Data Connectors

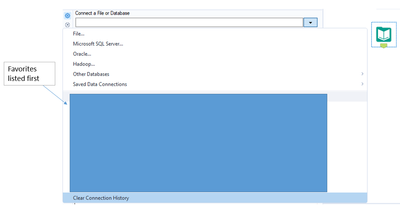

In the Input tool, I rely heavily on the recent connection history list. As soon as a file falls off of this list, it takes me a while to recall where it's saved and navigate to the file I'm wanting to use. It would be great to have a feature that would allow users to set their favorite connections/files so that they remain at the top of the connection history list for easy access.

- Category Input Output

- Data Connectors

I was working on a file where multiple sheets needed to be pulled from one Excel file. I was not sure how to point to a single source and pull multiple sheets from it as required. So I wanted to submit this idea: create a tool that can pull any sheet(s) from a single Input tool as required.

- Category Input Output

- Data Connectors
