Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea? Be sure to review our Idea Submission Guidelines for more information!

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels)

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images)
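
To illustrate the second point (an equal number of images per label), here is a minimal Python sketch. It is not part of the Image Recognition tool; the function name `balance_by_label`, the `(image_path, label)` pair layout, and the sample file names are all assumptions made for the example. It downsamples every label to the size of the smallest one.

```python
import random
from collections import defaultdict


def balance_by_label(samples, seed=0):
    """Downsample so every label keeps the same number of images.

    `samples` is a list of (image_path, label) pairs. This layout is an
    assumption for the sketch, not something the tool exposes.
    """
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append(path)
    if not by_label:
        return []
    # The smallest class sets the size every other class is cut down to.
    target = min(len(paths) for paths in by_label.values())
    rng = random.Random(seed)  # fixed seed so the split is repeatable
    balanced = []
    for label in sorted(by_label):
        for path in rng.sample(by_label[label], target):
            balanced.append((path, label))
    return balanced


# Hypothetical file names: three "cat" images and two "dog" images.
samples = [("cat1.png", "cat"), ("cat2.png", "cat"), ("cat3.png", "cat"),
           ("dog1.png", "dog"), ("dog2.png", "dog")]
balanced = balance_by_label(samples)
```

Downsampling discards data, so oversampling the smaller classes may suit small datasets better; that choice is left open here.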

Question: will you allow users to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))

Would love to see an option to disable a specific Output tool (rather than the global "Disable All Tools that Write Output" option). I'm envisioning the inverse of the Email tool, where there is a checkbox to enable Email... rather, the Output tool could have a check box that would disable that output (and ONLY that output), similar/consistent with the "Disable All Tools" function. A "Disable This Output" check box. The benefits would be a quick way to make sure not to overwrite something in one output (but still getting all the good content in all the other outputs) rather than having to go through the multiple clicks of adding to a container and then disabling the container. Could have benefits for connecting with Action tools/interface toggles as well. It would likely need to contain the same/similar formatting in Designer to indicate it has been disabled, though maybe a slightly different color so you could tell it was disabled differently?

(In a similar vein, I would love to take this opportunity to bring up my favorite idea-that-has-not-been-implemented-yet-that-would-love-your-vote-and-attention: implementing a Warning that outputs are disabled when posting to Gallery...)

Cheers!

NJ

I would like to suggest a Batch Macro Container (in addition to the existing Container) that acts as a Batch Macro within a Workflow and is stored within the Workflow.

I understand that having a batch macro available as a separate tool can be very powerful and reduces redundant work. However, very often Batch Macros are set up for a specific workflow only and are of no use for other workflows. The Creation of a Batch Macro in a container will significantly reduce the time to deploy a batch macro and keeps the Macro folder clean of one-time Batch Macros.

Attached is a picture of how this could look.

Thanks

Manuel

Could we please have a Type field added to the "Select Fields to Cleanse" configuration window for the Data Cleansing Tool? This small feature would save a lot of time (saving the time needed to check the Metadata for every field every time I use the Data Cleansing Tool). Similar functionality to the way the Summarize Tool displays both Field and Type (just one additional field).

Today:

Future Version:

Pardon my sad photoshopping 🙂

Note: I realize the Data Cleansing is a macro and this functionality is not currently available with the "Check Box" interface tool.

Thank you!

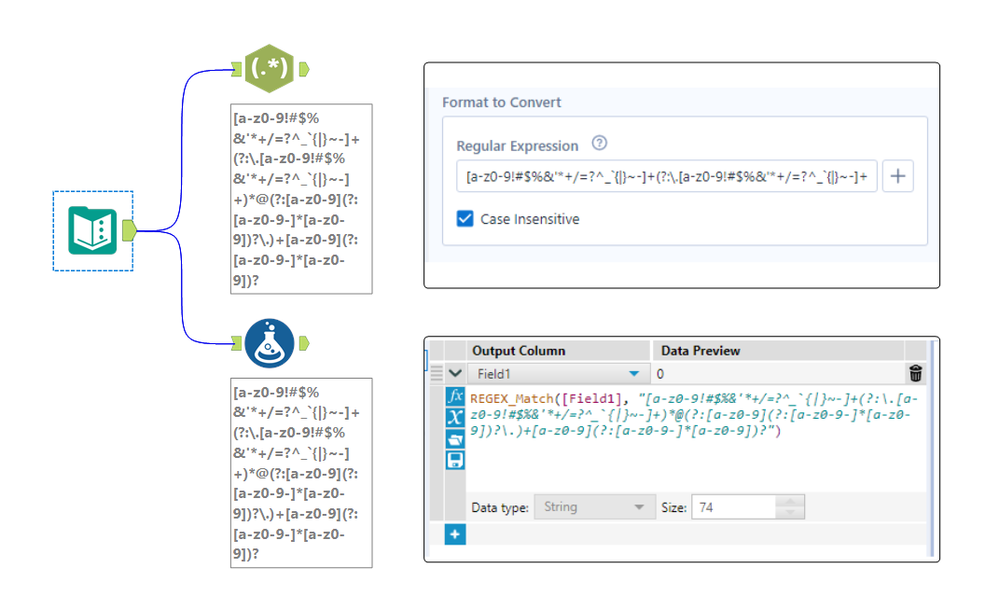

The expression editor in the RegEx tool is only a single line, which makes it really hard to edit long regular expressions. See the attached photo comparing the expression editor in the RegEx tool with the one in the Formula tool for the same expression. Please make the RegEx editor box wrap to multiple lines, add a pop-out expression editor, or do something else so we can see long expressions.

Currently when you add an event to notify you of workflow failure / success - you have to enter the SMTP settings every time. It would be more efficient to set this up as a user setting which can be used for the default across all canvasses that this user creates.

It would be great if there was an output option for excel files where you could overwrite the data in the sheet, but keep the formatting in the sheet. Similar to how the Paste Values option works in Excel. This would allow me to create a template with data validation, conditional formatting, column widths, cell fill colors, etc and set a workflow to run on a schedule and just paste the data into the existing template.

To get around this right now I have to output it to a separate tab and then paste the columns as values over the existing template. This is fine unless I am out of the office and need to bother someone else to do it. I know there have been many times when I wished this was an option, beyond the report I am currently building. I am honestly surprised I couldn't find an idea already submitted about this!

Thanks,

Wes

When developing and/or troubleshooting workflows, I frequently disable the outputs using the checkbox in the Runtime configuration settings to speed up the workflow and prevent sending emails and/or overwriting data in the output sources... however, 9/10 times I forget to turn off this checkbox when I save my workflow back up to the Gallery. This results in countless emails from users to the tune of "I ran the workflow successfully, but there was no output?" 🙂

Would love love love to see some sort of warning notification (similar to the ones that are already shown for data sources etc.) when saving to the Gallery if the "Disable All Tools that Write Output" option is selected in the Runtime settings.

Thank you!!

NJ

Hello,

Tableau has a very useful "split" function that lets you split a string on a delimiter and specify which of the resulting pieces you want:

https://onlinehelp.tableau.com/current/pro/desktop/en-us/functions_functions_string.htm

Qlik has the same function, subfield : https://help.qlik.com/en-US/sense/February2019/Subsystems/Hub/Content/Sense_Hub/Scripting/StringFunc...

I think this is quite useful and a very standard feature.
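
As a rough illustration of the requested behavior, here is a minimal Python sketch. The name `split_part` is hypothetical and is not an existing Alteryx function. The 1-based token number, counting from the right for negative numbers, and returning an empty string when the token does not exist are all assumptions of this sketch.

```python
def split_part(text, delimiter, token_number):
    """Return the Nth token of `text` split on `delimiter` (1-based).

    A negative `token_number` counts from the right. Out-of-range
    requests return an empty string, a choice made for this sketch.
    """
    if not delimiter or token_number == 0:
        return ""
    tokens = text.split(delimiter)
    # Convert the 1-based position to a Python index; negatives map directly.
    index = token_number - 1 if token_number > 0 else token_number
    if -len(tokens) <= index < len(tokens):
        return tokens[index]
    return ""


second = split_part("a-b-c-d", "-", 2)    # second token from the left
last = split_part("a-b-c-d", "-", -1)     # first token from the right
missing = split_part("a-b", "-", 5)       # no fifth token
```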

Best regards,

Simon

Idea:

The idea is a tool for encrypting/decrypting a column, with multiple encryption options.

Both one-way and two-way encryption should be possible.

Rationale:

Clients need to encrypt customers' personal identification data before sharing it with third parties such as consultants and analytics service providers.

When insights are provided back the data owner needs to quickly decrypt the ID field and get results or decide actions.
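
The round trip described above can be sketched in Python under some loud assumptions. This is not two-way encryption: it uses a one-way keyed hash (HMAC-SHA256 from the standard library) and a lookup table that stays with the data owner, who uses it to map tokens back to IDs. The function names, the key, and the sample IDs are all hypothetical.

```python
import hashlib
import hmac


def pseudonymize(value, secret_key):
    """One-way keyed hash of an ID (HMAC-SHA256), returned as hex."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def build_lookup(ids, secret_key):
    """Token -> original ID map. It never leaves the data owner."""
    return {pseudonymize(i, secret_key): i for i in ids}


secret_key = b"example-key-kept-by-the-data-owner"  # hypothetical key
customer_ids = ["TR-1001", "TR-1002"]               # hypothetical IDs

lookup = build_lookup(customer_ids, secret_key)
shared = [pseudonymize(i, secret_key) for i in customer_ids]  # sent to the third party
restored = [lookup[token] for token in shared]                # done by the data owner
```

A true two-way option would need a symmetric cipher from a vetted cryptography library rather than a hash plus a lookup table.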

Clients:

This is an especially important case for banks, non-bank financial institutions, and telecom companies in EU countries and in countries with similarly strict rules (Turkey, for example).

Best

Every time we create a file output - you first have to check if the folder exists - and if not then create it.

Currently it's quite onerous to do a directory create - especially with all the error trapping to make this production safe - and everyone is reinventing the wheel in their own companies.

Given how common this need is, could we add a tool that lets you check for the existence of a directory and attempt to create it (including nested directories, with useful status / error descriptions to act upon)?
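
For comparison, the check-then-create logic with a status message can be sketched in a few lines of Python. The function name `ensure_directory` and its `(ok, status)` return shape are assumptions made for this example, not an existing Alteryx feature.

```python
import os
import tempfile


def ensure_directory(path):
    """Create `path` (including nested parents) if it does not exist.

    Returns (ok, status) so a caller can branch on the result
    instead of handling exceptions itself.
    """
    if os.path.isdir(path):
        return True, "already exists"
    if os.path.exists(path):
        return False, "path exists but is not a directory"
    try:
        os.makedirs(path, exist_ok=True)
    except OSError as exc:
        return False, f"could not create directory: {exc}"
    return True, "created"


# Demonstration inside a throwaway folder; the nested path is hypothetical.
with tempfile.TemporaryDirectory() as root:
    target = os.path.join(root, "reports", "2024", "q1")
    first = ensure_directory(target)
    second = ensure_directory(target)
```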

The Source field of the field metadata is very useful, but has some problems.

- It is repetitious. A long connection string repeated for many fields from the same source can bloat the size of the workflow above 10 MB, and when removed is around 0.5 MB.

- It exposes sensitive information about a company's infrastructure, such as server names, ports, user ids, and proprietary data structures.

I first started paying attention when we found a user's password in the metadata because they had passed it as a string to the Dynamic Input Tool (separate Idea submitted for that - LINK). Then when I had to share an App with the Alteryx Support team for support with an issue, I thought to check the metadata, and I noticed that the file was too big and was exposing information that I would not normally share with another company.

I'm not sure how you want to handle this, but here are some thoughts:

- Default the Source field to 'off' and provide users the option to turn it 'on' in the workflow/app settings.

- Provide a mechanism to strip the 'Source' field at time of saving or exporting the workflow.

- If nothing else, provide education to users on the implications of including this information in the file.
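
The "strip at export" option could look roughly like the Python sketch below. It assumes the field metadata is stored as `<Field>` elements carrying a `source` attribute; check a saved workflow file before relying on that. The function name and the sample XML are made up for the example.

```python
import xml.etree.ElementTree as ET


def strip_field_sources(workflow_xml):
    """Remove the `source` attribute from every <Field> element.

    Assumes field metadata looks like <Field ... source="..."/>.
    Returns the cleaned XML text and the number of attributes removed.
    """
    root = ET.fromstring(workflow_xml)
    removed = 0
    for field in root.iter("Field"):
        if field.attrib.pop("source", None) is not None:
            removed += 1
    return ET.tostring(root, encoding="unicode"), removed


# Hypothetical metadata fragment with one connection string in it.
sample = (
    '<RecordInfo>'
    '<Field name="id" type="Int32" source="odbc:DSN=example;UID=user" />'
    '<Field name="name" type="V_String" />'
    '</RecordInfo>'
)
cleaned, removed = strip_field_sources(sample)
```

Run this on a copy of the file, since re-serializing XML this way can change formatting and drop comments.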

Thanks for listening!

Cameron

During the design phase, we experiment and create tables with Alteryx.

Sometimes, after this phase or after a mistake, we need to drop those tables.

We know that it's possible to write a DROP TABLE statement in Pre-SQL or Post-SQL, but that requires SQL skills and can only be done when you are writing to a table.

It would be great if we could drop a table directly in the Query Builder of the Input tool by right-clicking the table in the discovery tree.

Extension: it would also be great to have the same thing in the HDFS browser.

I feel like I must be missing something, but saw a similar suggestion for TDE outputs, so maybe this really doesn't currently exist. We sometimes add descriptions to fields we create, and some inputs come with descriptions, but we can't seem to get them into the final database using the Output tool. Can there be a checkbox to persist the metadata along with the data when writing to a database?

We now have the ability to output to an ESRI File Geodatabase, which is great, but it only allows you to output it to the WGS84 coordinate system. I would like to have the same functionality to export it to other projections or coordinate systems similar to the ESRI Shapefile or ESRI Personal Geodatabase output tools (we specifically need NAD83 but I'm sure others would like other options as well).

It would be great if the "fields from connected tool" option pulled fresh data at runtime when used in the gallery and pulling data from non-interface tools. The external source option doesn't have many settings (i.e. I can just point to one file), whereas the possibilities would be endless if I could use the full suite of tools to create a data set, at runtime, to pass to the list box/dropdown.

When converting data types while In-DB, it would be really helpful if I could change the data type with the "Select In-DB" tool in a similar manner to the "Select" tool. Currently, we are having to use the "Formula In-DB" tool in order to create a "Cast" Statement.

As a designer, I need to output data only when no data quality errors are encountered within a workflow. I suppose that I wouldn't want to see any errors, but if I am writing multiple output files and errors are encountered during the output processes (e.g. #3 of 4 fails), then I'm kind of out of luck. So let's focus on data quality. If Nulls are encountered in "Actual" data or unjoined records are found or dates are out of range, you name the issue, I don't want to output any data to specific output tools. Work-arounds exist. I can output to a staging file and conditionally schedule or use a conditional runner macro to output to the production data. But what I really want to do is to stop an output tool from receiving any data to output.

Today I handle this by counting error records that would be caught by a TEST tool and appending the count of these bad records to the data that would go to output(s). I filter for IsNull([Count]) and only when 0 ERRORS are found by the test tool, can data be output. Otherwise null records are received by the output tool and it quietly makes no changes.

My ask is to configure an output tool to be disabled if ERRORs exist. That means that the LAST thing to happen in the execution of a workflow will be the output processes. They will all be blocking tools and can't happen until there are no tools left to run except for the outputs (configured as blocked). Maybe this is a big ask.
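
The requested behavior (run every check first, write only if nothing failed) can be sketched in Python as follows. The function names, the single null check on "Actual", and the use of plain callables as stand-ins for output tools are all assumptions made for this illustration.

```python
def find_errors(records):
    """Return a list of (row_number, reason) for rows failing the checks."""
    errors = []
    for row_number, record in enumerate(records, start=1):
        if record.get("Actual") is None:
            errors.append((row_number, "Actual is null"))
    return errors


def write_if_clean(records, writers):
    """Run every check first, then call the writers only if nothing failed."""
    errors = find_errors(records)
    if errors:
        return False, errors  # no writer has been called at this point
    for write in writers:
        write(records)
    return True, []


written = []  # stands in for an output destination
clean = [{"Actual": 10}, {"Actual": 12}]
dirty = [{"Actual": 10}, {"Actual": None}]
ok_clean, _ = write_if_clean(clean, [written.append])
ok_dirty, problems = write_if_clean(dirty, [written.append])
```

Because the writers run only after all checks pass, a failed check leaves every output untouched, which is the all-or-nothing behavior the idea asks for.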

There would be great usefulness in having event triggers in 2 different places:

- Similar to Informatica - it would be useful to have event triggers for workflow - specifically "trigger when file arrives" or "trigger when value exceeds X"

- It would be also useful to have an event trigger component with an input so that we can use semaphore type flags to control sequencing in complex sets of flows. For example:

- When the ETL is done - mark the "Completed" flag as true

- The reporting job is already running, waiting for the "Completed" flag to be set before it proceeds
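
The semaphore-style sequencing in the example above can be approximated today by polling for a flag file. Here is a minimal Python sketch of that pattern; the function name, the flag file name, and the timeout values are assumptions made for the example, and polling is only a stand-in for a real event trigger.

```python
import os
import tempfile
import time


def wait_for_flag(flag_path, timeout_seconds, poll_seconds=0.05):
    """Poll until `flag_path` exists or the timeout passes."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        if os.path.exists(flag_path):
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_seconds)


with tempfile.TemporaryDirectory() as folder:
    flag = os.path.join(folder, "etl_completed.flag")  # hypothetical flag file
    before = wait_for_flag(flag, timeout_seconds=0.1)  # ETL has not finished yet
    open(flag, "w").close()                            # ETL marks "Completed"
    after = wait_for_flag(flag, timeout_seconds=0.1)   # reporting job may start
```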

Overall, it would be useful for Alteryx to have event-driven triggers.

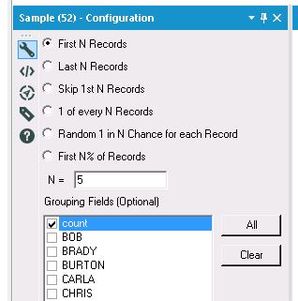

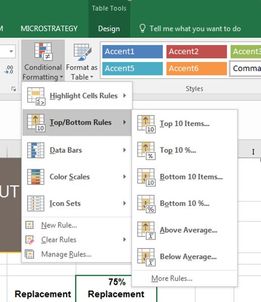

Would love to see a tool that allows you to find the Top N or Bottom N% etc. using a single tool, rather than the current common practices of using 2-3 tools to accomplish this simple task. It's possible some/all of this functionality could be added by simply expanding the current Sample tool to include more options, or at least mirroring the configuration of the Sample Tool in the creation of a new "Top/Bottom Tool."

For example, let's say I wanted to find the top 5 student grades, and then compare all scores to those top 5 grades. I would currently need to do something along the lines of Sort descending (and/or Summarize Tool, if grouping is needed) + Sample Tool (First N Records) + Join the results back to the data. That's anywhere from 3-4 tools to accomplish a simple task that could potentially be done with 1-2.

I'm envisioning this working somewhat like the Top/Bottom rules in Excel Conditional Formatting (see below), and similar to some of the existing options in the Sample Tool (also see below). For example, rather than only being able to select the First N Records in the Sample Tool, I could indicate that I want to select the Top N Records, or the Bottom N% Records. This would prevent the additional step of having to group/sort your data before using the Sample Tool, especially in cases where you're then having to put your records back into their original order rather than leaving them in their grouped/sorted state. You'd still want to have the option of choosing grouping fields if desired. You would also need to have a drop-down field to indicate which field to apply the "Top/Bottom rules" to.

A list of potential "Top/Bottom" options that I believe would be great additions include:

- Top N

- Bottom N

- Top N %

- Bottom N %

- Above Average

- Below Average

- Within a Percentile Range (i.e. "Between 20-30%")

- Skip Top N

- Skip Bottom N
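
To make the first few options concrete, here is a minimal Python sketch of "Top N", "Bottom N", and "Top N %" that returns the selected records in their original order, so no re-sorting is needed afterwards. The function names and the grade data are hypothetical. Breaking ties by original position and rounding the percentage down are choices made for this sketch.

```python
def top_n(records, field, n, bottom=False):
    """Return the n records with the largest (or smallest) `field` values,
    kept in their original order. Ties go to the earlier record."""
    if n <= 0:
        return []
    # Rank row positions by value; sorted() is stable, so ties keep input order.
    ranked = sorted(range(len(records)),
                    key=lambda i: records[i][field],
                    reverse=not bottom)
    keep = set(ranked[:n])
    return [record for i, record in enumerate(records) if i in keep]


def top_percent(records, field, percent, bottom=False):
    """Same selection, with n taken as a percentage of the row count (rounded down)."""
    n = int(len(records) * percent / 100)
    return top_n(records, field, n, bottom=bottom)


grades = [
    {"student": "A", "grade": 72}, {"student": "B", "grade": 95},
    {"student": "C", "grade": 88}, {"student": "D", "grade": 60},
    {"student": "E", "grade": 91}, {"student": "F", "grade": 79},
]
top3 = [r["student"] for r in top_n(grades, "grade", 3)]
bottom2 = [r["student"] for r in top_n(grades, "grade", 2, bottom=True)]
top_half = [r["student"] for r in top_percent(grades, "grade", 50)]
```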

The value added with just the options above would be huge in helping to streamline workflows and reduce unnecessary tools on the canvas.

Hi to all,

I have seen one or two posts requesting the ability to total up rows and/or columns of numbers. This idea goes further and also requests the ability to subtotal data by a field and produce an overall total.

This could be an extension to existing tools such as 'Summarise' and 'Cross Tab' or could be a stand alone tool. Desired output of using a tool like this would produce something like this:

This would be incredibly useful for building reports within Alteryx as well as for analysing the data, and it would cut down the number of tools currently required to produce this. I have seen a third-party tool that does some of this, but this idea adds the ability to subtotal.
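
As a rough picture of the requested output, the Python sketch below adds a subtotal row after each group and a grand total at the end. The function name, the "Subtotal" and "Total" labels, and the sample data are assumptions made for the illustration.

```python
from itertools import groupby


def with_subtotals(rows, group_field, value_field):
    """Return rows with a subtotal row after each group and a grand total last.

    Rows are sorted by `group_field` first, because groupby only merges
    adjacent rows.
    """
    output = []
    grand_total = 0
    ordered = sorted(rows, key=lambda r: r[group_field])
    for key, group in groupby(ordered, key=lambda r: r[group_field]):
        subtotal = 0
        for row in group:
            output.append(dict(row))
            subtotal += row[value_field]
        output.append({group_field: f"{key} Subtotal", value_field: subtotal})
        grand_total += subtotal
    output.append({group_field: "Total", value_field: grand_total})
    return output


# Hypothetical sales data with two regions.
sales = [
    {"Region": "North", "Sales": 100},
    {"Region": "South", "Sales": 50},
    {"Region": "North", "Sales": 25},
]
report = with_subtotals(sales, "Region", "Sales")
```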

thanks - Roger
