Alteryx Designer Desktop Ideas

Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by listing the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))

I notice that at least my output files are tied up ("being used by another program") after the workflow is closed. I have to actually close out of Alteryx to release the file. The file should be released as soon as the workflow using it is done running or, failing that, as soon as the workflow is closed, rather than requiring Alteryx to be closed completely.

...or is this just my issue?

- Category Input Output

- Data Connectors

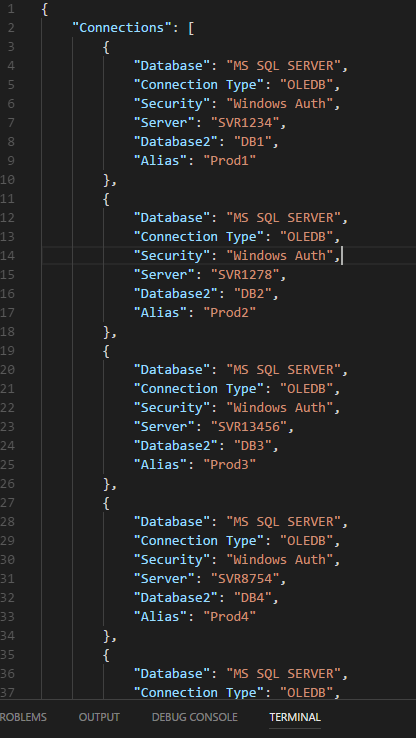

Right now, as far as I know, you need to add each DB connection manually. This works, but it is quite time-consuming when trying to run tasks against a cluster of prod databases. It would be awesome to pass a JSON config file (example below) to the Alteryx Engine and have Alteryx create those connections upon parsing the file. This would save tons of time and let teams share a central config file with consistent aliases across their clusters, ensuring their app connections point to the same DBs across workflows. It would also make onboarding a breeze for new developers on the team.
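To make the request concrete, here is a minimal sketch of what a shared connection config could look like and how a team might validate it before use. The field names (`alias`, `driver`, `host`, `port`, `database`) and the host names are hypothetical; nothing here is an existing Alteryx file format or API.

```python
import json

# Hypothetical shared config: field names are illustrative, not an Alteryx format.
EXAMPLE_CONFIG = """
{
  "connections": [
    {"alias": "prod-db-01", "driver": "postgresql",
     "host": "db01.example.com", "port": 5432, "database": "sales"},
    {"alias": "prod-db-02", "driver": "postgresql",
     "host": "db02.example.com", "port": 5432, "database": "sales"}
  ]
}
"""

REQUIRED_KEYS = {"alias", "driver", "host", "port", "database"}


def load_connections(text):
    """Parse the config text and return the connections keyed by alias."""
    connections = {}
    for entry in json.loads(text)["connections"]:
        missing = REQUIRED_KEYS - set(entry)
        if missing:
            raise ValueError(f"connection entry is missing keys: {sorted(missing)}")
        if entry["alias"] in connections:
            raise ValueError(f"duplicate alias: {entry['alias']}")
        connections[entry["alias"]] = entry
    return connections


connections = load_connections(EXAMPLE_CONFIG)
print(sorted(connections))
```

Keying by alias is what would let every workflow refer to the same database by the same name, which is the consistency benefit described above.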

- Category In Database

- Data Connectors

Sometimes, when I am working with new data sources, it would be nice to have a dockable pane that would allow me to view the schema of all of my connected data sources. That way I could rename fields and change data types as needed without having to jump from one select tool to the other to see how the schemas compare.

- Category Connectors

- Category Data Investigation

- Category In Database

- Data Connectors

My organization requires users to change their Active Directory passwords every so often. That's fine. It does create an issue with changing passwords on all workflows that contain a Tableau Server publish, but that's not the real issue here.

What's more annoying is that once that password is changed, the data source name and project are lost. When updating multiple data sources across workflows (or within the same workflow), this becomes quite cumbersome.

I know there are limitations on the partner side, as this is technically a Tableau-controlled thing, but is there anything that can be done here?

- Category Connectors

- Data Connectors

Hello All,

I received from an AWS adviser the following message:

_____________________________________________

Skip Compression Analysis During COPY

Checks for COPY operations delayed by automatic compression analysis.

Rebuilding uncompressed tables with column encoding would improve the performance of 2,781 recent COPY operations.

This analysis checks for COPY operations delayed by automatic compression analysis. COPY performs a compression analysis phase when loading to empty tables without column compression encodings. You can optimize your table definitions to permanently skip this phase without any negative impacts.

Observation

Between 2018-10-29 00:00:00 UTC and 2018-11-01 23:33:23 UTC, COPY automatically triggered compression analysis an average of 698 times per day. This impacted 44.7% of all COPY operations during that period, causing an average daily overhead of 2.1 hours. In the worst case, this delayed one COPY by as much as 27.5 minutes.

Recommendation

Implement either of the following two options to improve COPY responsiveness by skipping the compression analysis phase:

- Use the column ENCODE parameter when creating any tables that will be loaded using COPY.

- Disable compression altogether by supplying the COMPUPDATE OFF parameter in the COPY command.

The optimal solution is to use column encoding during table creation since it also maintains the benefit of storing compressed data on disk. Execute the following SQL command as a superuser in order to identify the recent COPY operations that triggered automatic compression analysis:

    WITH xids AS (
        SELECT xid FROM stl_query WHERE userid>1 AND aborted=0
        AND querytxt = 'analyze compression phase 1' GROUP BY xid)
    SELECT query, starttime, complyze_sec, copy_sec, copy_sql
    FROM (SELECT query, xid, DATE_TRUNC('s',starttime) starttime,
          SUBSTRING(querytxt,1,60) copy_sql,
          ROUND(DATEDIFF(ms,starttime,endtime)::numeric / 1000.0, 2) copy_sec
          FROM stl_query q JOIN xids USING (xid)
          WHERE querytxt NOT LIKE 'COPY ANALYZE %'
          AND (querytxt ILIKE 'copy %from%' OR querytxt ILIKE '% copy %from%')) a
    LEFT JOIN (SELECT xid,
          ROUND(SUM(DATEDIFF(ms,starttime,endtime))::NUMERIC / 1000.0,2) complyze_sec
          FROM stl_query q JOIN xids USING (xid)
          WHERE (querytxt LIKE 'COPY ANALYZE %'
          OR querytxt LIKE 'analyze compression phase %') GROUP BY xid ) b USING (xid)
    WHERE complyze_sec IS NOT NULL ORDER BY copy_sql, starttime;

Estimate the expected lifetime size of the table being loaded for each of the COPY commands identified by the SQL command. If you are confident that the table will remain under 10,000 rows, disable compression altogether with the COMPUPDATE OFF parameter. Otherwise, create the table with explicit compression prior to loading with COPY.

_____________________________________________

When I ran the suggested query to check the COPY commands executed, I realized they all came from the Redshift bulk output from Alteryx.

Is there any way to implement this "Skip Compression Analysis During COPY" in Alteryx to maximize performance as suggested by AWS?
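For reference, the two options in the AWS message could be sketched as the statements below. This only illustrates the Redshift syntax involved; how Alteryx's bulk loader builds its COPY command is not shown in this post, and the table, bucket, role, and column names are placeholders.

```python
def copy_without_compression_analysis(table, s3_path, iam_role):
    """Build a Redshift COPY that skips automatic compression analysis."""
    # Values are interpolated directly, so they must come from a trusted source.
    return (
        f"COPY {table}\n"
        f"FROM '{s3_path}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        "COMPUPDATE OFF;"
    )


def create_table_with_encoding(table, columns):
    """Build a CREATE TABLE with an explicit ENCODE on every column.

    columns is a list of (name, sql_type, encoding) tuples.
    """
    column_sql = ",\n".join(
        f"  {name} {sql_type} ENCODE {encoding}" for name, sql_type, encoding in columns
    )
    return f"CREATE TABLE {table} (\n{column_sql}\n);"


# Placeholder names, for illustration only.
print(create_table_with_encoding(
    "staging.orders",
    [("order_id", "BIGINT", "az64"), ("customer", "VARCHAR(64)", "zstd")],
))
print(copy_without_compression_analysis(
    "staging.orders",
    "s3://example-bucket/orders/",
    "arn:aws:iam::123456789012:role/example-role",
))
```

Per the AWS text above, the explicit-encoding route is the preferred one because the data stays compressed on disk; `COMPUPDATE OFF` is suggested only for tables expected to stay small.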

Thank you in advance,

Gabriel

- Category Input Output

- Data Connectors

There should be a macro that can be used as a read (input) macro for In-DB tools.

Similarly, there should be a write macro for In-DB tools.

- Category In Database

- Data Connectors

Good afternoon,

I work with a large group of individuals, close to 30,000, and a lot of our files are run as .dif/.kat files, which are used for import into certain applications and software that pertain to our work. We were wondering whether this has been brought up before and what the possibility might be.

- Category Input Output

- Data Connectors

Yeah, so when you have 15 workflows for some folks and you've actually decided to publish to a test database first, and now you have to publish to a production database it is a *total hassle*, especially if you are using custom field mappings. Basically you have to go remap N times where N == your number of new outputs.

Maybe there is a safety / sanity check reason for this, but man, it would be so nice to be able to copy an output, change the alias to a new destination, and just have things sing along. BRB - gotta go change 15 workflow destination mappings.

- API SDK

- Category Developer

- Category Input Output

- Data Connectors

There should be an option not to overwrite existing values in the database with null values when using the Output Data tool with these options:

- File Format = ODBC Database (odbc:)

- Output Options = Update;Insert if new

This applies to MS SQL Server databases in my case, but it might affect other destinations as well.
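The requested behavior can be sketched in plain SQL with `COALESCE`, which keeps the stored value whenever the incoming value is NULL. The example below uses an in-memory SQLite database purely as a stand-in (it is an assumption of this sketch, not how the Output Data tool works), and the `customers` table is hypothetical; `COALESCE` is also available in SQL Server.

```python
import sqlite3


def update_or_insert_keep_existing(conn, row):
    """Update a row by id, keeping the stored value wherever the incoming value is NULL."""
    cur = conn.execute(
        "UPDATE customers "
        "SET name = COALESCE(?, name), email = COALESCE(?, email) "
        "WHERE id = ?",
        (row["name"], row["email"], row["id"]),
    )
    if cur.rowcount == 0:  # no existing row matched: insert instead
        conn.execute(
            "INSERT INTO customers (id, name, email) VALUES (?, ?, ?)",
            (row["id"], row["name"], row["email"]),
        )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, email TEXT)")
conn.execute("INSERT INTO customers VALUES (1, 'Ada', 'ada@example.com')")

# The incoming email is NULL, so the stored email must survive the update.
update_or_insert_keep_existing(conn, {"id": 1, "name": "Ada L.", "email": None})
# A new id is inserted as-is.
update_or_insert_keep_existing(conn, {"id": 2, "name": "Grace", "email": None})

rows = conn.execute("SELECT id, name, email FROM customers ORDER BY id").fetchall()
print(rows)
```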

- Category Input Output

- Data Connectors

When outputting files, it is usually beneficial for characters that would cause trouble with formatting/syntax to be properly escaped. However, there are situations where suppressing this behavior is desirable.

Of particular importance for such a feature is the outputting of JSON files. Currently, if a file is output as JSON, quotation marks that occur within a field are always escaped, regardless of whether this conforms to the JSON standard. There are a variety of workarounds for this, including pre-formatting all fields to look like JSON and then outputting as a \0-delimited CSV, but in many cases there is no need to escape any characters when outputting JSON.

A simple toggle (as was created for suppressing the BOM in CSVs) to disable character escaping would make the creation of JSON objects simpler and reduce the number of workarounds required to output proper JSON.
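One reading of this request is that a field already holding JSON text should be written as a nested object rather than as an escaped string. The sketch below shows the difference using Python's standard `json` module; it is an illustration of the two outputs, not a description of how Alteryx serializes JSON, and the `record` contents are made up.

```python
import json

# A record whose "payload" field already contains JSON text.
record = {"id": 7, "payload": '{"color": "red", "sizes": [1, 2]}'}

# Serialized as-is, the payload stays a string, so its quotes must be escaped.
as_string = json.dumps(record)

# Parsed first, the payload becomes a nested object and needs no escaping.
nested = dict(record, payload=json.loads(record["payload"]))
as_object = json.dumps(nested)

print(as_string)
print(as_object)
```

Both outputs are valid JSON; they simply describe different data (a string versus an object), which is why a toggle would be useful.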

- Category Input Output

- Data Connectors

Please add a tool to edit arbitrary cells in a table and update the source after editing, similar to "Edit Top 200 rows" in SQL. That would be very helpful.

- Category Data Investigation

- Category Input Output

- Data Connectors

- Desktop Experience

On the output data component, when outputting to a database, there are Pre & Post Create SQL statement switches. This allows for the execution of a SQL statement in the DB but only after a create table action. To use this functionality you must have populated a table using the 'create new table' or 'overwrite' option, which is no good if you're using the Update or Append options. Change these switches to Pre & Post SQL Statements as per the Input Data component. This will allow you to execute SQL statements in the DB either before or after the population action. Can be really handy if you need to execute database stored procedures for example.

Please note: You can already work around this by branching from your data stream and creating or overwriting a dummy table, then use the pre or post create switch for your SQL statement. This is a bit messy and can leave dummy tables in the DB, which is a bit scruffy.
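The requested behavior, SQL that runs before and after an append regardless of whether a table was created, can be sketched as follows. SQLite is used only as a stand-in and has no stored procedures, so plain statements take their place; the `sales` and `load_log` tables and the function name are hypothetical.

```python
import sqlite3


def append_with_pre_post(conn, rows, pre_sql=None, post_sql=None):
    """Append rows to an existing table, running optional SQL before and after."""
    with conn:  # commit on success, roll back if any step fails
        if pre_sql:
            conn.execute(pre_sql)
        conn.executemany("INSERT INTO sales (region, amount) VALUES (?, ?)", rows)
        if post_sql:
            conn.execute(post_sql)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.execute("CREATE TABLE load_log (note TEXT)")
conn.execute("INSERT INTO sales VALUES ('stale', 0)")

append_with_pre_post(
    conn,
    [("north", 10.0), ("south", 5.5)],
    pre_sql="DELETE FROM sales WHERE region = 'stale'",
    post_sql="INSERT INTO load_log VALUES ('append finished')",
)

sales = conn.execute("SELECT region, amount FROM sales ORDER BY region").fetchall()
print(sales)
```

No dummy table is needed here, which is the cleanup problem the workaround above runs into.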

- Category Input Output

- Data Connectors

Add extra capabilities in the Output Tool so that the following can be accomplished without the need for Post SQL processing:

- Create a new SQL View

- Assign a Unique Index key to a table

Having these capabilities directly available in the tools would greatly increase the usability and reduce the workload required to build routines and databases.
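For context, these are the kinds of statements that currently have to be run as Post SQL. The sketch uses an in-memory SQLite database as a stand-in for the target database, and the `orders` table, index, and view names are hypothetical; exact DDL syntax varies by database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id INTEGER, customer TEXT, total REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(1, "acme", 120.0), (2, "acme", 80.0), (3, "zenith", 45.0)],
)

# Assign a unique index key to the table.
conn.execute("CREATE UNIQUE INDEX ux_orders_order_id ON orders (order_id)")

# Create a new view on top of the loaded table.
conn.execute(
    "CREATE VIEW customer_totals AS "
    "SELECT customer, SUM(total) AS total FROM orders GROUP BY customer"
)

totals = conn.execute(
    "SELECT customer, total FROM customer_totals ORDER BY customer"
).fetchall()
print(totals)
```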

- Category Input Output

- Data Connectors

At the moment, the Salesforce connector does not support view objects like AccountUserTerritory2View. It should be extended to support those objects to enable more efficient and, above all, complete data extraction.

- Category Connectors

- Data Connectors

Hi,

I am sure that I can't be the only person who would be interested in an output tool that allows categorical fields on both axes. This would allow you to visualise the following example. I would suggest that it either be similar to the heatmap with boxes, or that the colour / size of each entry be determined by a third numerical value, such as 'Confidence' from the table below. There might be ways to extend the idea as well, such as a fourth parameter that puts text or another number in the box, but it would be useful and, I am sure, not too hard.

| LHS | RHS | Support | Confidence | Lift | NA |
|---|---|---|---|---|---|
| {Carrots Winter} | {Onion} | 5.01E-02 | 0.707070707 | 1.298568507 | 210 |
| {Onion} | {Carrots Winter} | 5.01E-02 | 9.20E-02 | 1.298568507 | 210 |
| {Carrots} | {Onion} | 4.39E-02 | 0.713178295 | 1.309785378 | 184 |
| {Onion} | {Carrots} | 4.39E-02 | 8.06E-02 | 1.309785378 | 184 |
| {Peas} | {Onion} | 3.20E-02 | 0.428115016 | 0.786253301 | 134 |
| {Onion} | {Peas} | 3.20E-02 | 5.87E-02 | 0.786253301 | 134 |
| {Bean} | {Onion} | 2.20E-02 | 0.372469636 | 0.68405795 | 92 |
| {Carrots Nantaise} | {Onion} | 2.08E-02 | 0.483333333 | 0.88766433 | 87 |

Many thanks in advance for considering this,

Peter

- Category Input Output

- Category Reporting

- Data Connectors

- Desktop Experience

Hi,

Is there an easy way in Alteryx to rename a file once I have processed it? I would like the file name to be FileName.csv.Date.Time (for example, FileName.txt.20180424.055230).
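Outside of Alteryx, the naming scheme described above can be sketched in a few lines, for example in a script run after the workflow finishes. The function name is hypothetical, and this is not a built-in Alteryx feature.

```python
from datetime import datetime
from pathlib import Path


def rename_with_timestamp(path, now=None):
    """Rename path to '<name>.<YYYYMMDD>.<HHMMSS>' and return the new path."""
    path = Path(path)
    stamp = (now or datetime.now()).strftime("%Y%m%d.%H%M%S")
    target = path.with_name(f"{path.name}.{stamp}")
    return path.rename(target)
```

Passing `now` explicitly makes the result reproducible; leaving it out uses the current local time.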

Thanks.

- Category Input Output

- Data Connectors

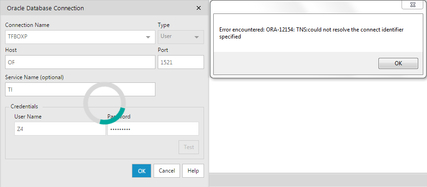

I have encountered a problem with the Oracle Direct Connection tool. I have the correct host, port, service name, user name and password (the same configuration works with Toad), but Alteryx still complains that the service name is not found in tnsnames.ora (ORA-12154). The reason to use Direct Connection is that I do not have admin rights on my computer to edit the file, so it seems this kind of problem can only be resolved by asking our IT service to edit tnsnames.ora for me.

- Category In Database

- Data Connectors

I would like to be able to limit the data being read from the source based on volume, such as 10 GB or 5 GB. This would help with POCs, where we can process a portion of the dataset rather than the entire dataset. It would have many other use cases as well.
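As a rough illustration of a volume-based limit, the sketch below reads whole lines from a file until a byte budget is reached. It assumes a line-oriented source such as CSV; the function name is hypothetical and this is not an existing Alteryx option.

```python
def read_lines_up_to(path, max_bytes):
    """Return complete lines from path whose total size stays within max_bytes."""
    lines, used = [], 0
    with open(path, "rb") as fh:
        for line in fh:
            if used + len(line) > max_bytes:
                break  # the next record would exceed the budget
            lines.append(line)
            used += len(line)
    return lines
```

Stopping at a line boundary keeps the last record intact, so the sample can still be parsed.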

- Category Input Output

- Data Connectors

Dear Team

Suppose we have a heavy workflow in the development phase and are working on its last section. Every time we run the workflow, it starts from the Input tool. Instead, we could have a checkpoint tool that fixes the data flow up to the checkpoint, so that running the workflow starts from that checkpoint.

This would reduce my development time a lot. Please advise.
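The checkpoint idea can be sketched generically as caching an intermediate result on disk and reusing it on later runs. This is a plain Python illustration under that assumption, not an Alteryx tool; `checkpoint` and its arguments are hypothetical names.

```python
import pickle
from pathlib import Path


def checkpoint(path, compute):
    """Return the cached result at path if present; otherwise compute, cache, and return it."""
    path = Path(path)
    if path.exists():
        with path.open("rb") as fh:
            return pickle.load(fh)
    result = compute()
    with path.open("wb") as fh:
        pickle.dump(result, fh)
    return result
```

Deleting the cache file forces the upstream steps to run again, which is the manual equivalent of clearing a checkpoint.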

Thanks in advance.

Regards,

Gowtham Raja S

- Category Input Output

- Category Preparation

- Data Connectors

- Desktop Experience
