Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening!

Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints in front of each of the pre-trained models,

> by adding a true tool to divide the training data correctly (in order to have an equivalent number of images for each of the labels),

> at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me that it requires RGB images).

Question: will you allow the user to choose between CPU and GPU usage in the future?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think that it will help a greater number of people understand the many use cases made possible by image recognition.

Thank you again

Kévin VANCAPPEL (France ;-))


The Download tool is currently a general-purpose tool that is used for many different things, from downloading FTP files to scraping websites.

However, as a general purpose tool, it cannot serve the specific need of scraping a website without doing a huge amount of work to get there. What makes Alteryx great is the fact that it drops the barrier so that regular folks can do some really powerful analytics, but the web scraping capabilities are not yet there and still require a tremendous amount of technical skill to accomplish.

I'll go through this from top to bottom:

- Split capability: The download tool tries to be too many things to too many people. Break it up into its component parts - one for FTP; one for Web Scraping; etc - with deep speciality. You can still keep the download tool as the super-user version but by creating the specialized tools, we can make this much more user-friendly

- Connection: For enterprise users with locked-down internet connectivity, there is no way to scrape web content without using curl. So we need the ability to connect to websites in a way that does not require curl or complex connectivity setups in which users have to navigate web proxy settings.

- Alteryx could auto-detect settings by allowing the user to point to the site within a controlled browse form like Excel does

- Parameters: Many websites explicitly support named parameters (using ? notation) - it would be very useful to allow the user to link to these parameters explicitly without having to do complex string concatenations or %20 scrubbing to get rid of non-URL-friendly characters

- Content: Alteryx presents the user with no native ability to process HTML, so all scrubbing to pull out a specific field has to be done through complex read-through of the underlying source of the website (delivered in "DownloadedData") followed by guessing on patterns on how the site does tables or spans etc, followed by complex regex.

- Instead, we could present the user with a view of the web-page and ask them to select the elements that they want

- This would serve the dual purpose of making this user-friendly for regular folks and abstract away the technicalities; but also would allow the download tool to eliminate all the other bits of the page that are not wanted like scripts; interstitial adverts; images; headers & footers etc.

- Improved post / parse capability: Sometimes the purpose of a URL is to generate a download (like the Google Finance API) - again, would be good to observe the user using the target site to record & interpret what they are looking for and what they get (e.g. the file from google)

- HTML & XML types: why not an explicit type in Alteryx for web content?

- Finally - HTML aware. The browse tools are not currently HTML aware, so all the useful formatting needed to see what's going on, expand nodes, find patterns etc. is unavailable - the content has to be copied out of Alteryx into Notepad++. Given the ubiquity of HTML parsers, pretty printers and editors, it should be reasonably easy to get a cheap component that can provide this capability
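To illustrate the Parameters point above, here is a minimal Python sketch of attaching named parameters to a URL without hand-written concatenation or %20 scrubbing. It only shows the general technique using the standard library; the `build_url` helper, the example.com address, and the parameter names are hypothetical and say nothing about how the Download tool works internally.

```python
from urllib.parse import quote, urlencode, urlsplit, urlunsplit


def build_url(base_url, params):
    """Attach named query parameters to base_url, percent-encoding each value."""
    scheme, netloc, path, _old_query, fragment = urlsplit(base_url)
    # quote_via=quote encodes a space as %20 (the default would use '+').
    query = urlencode(params, quote_via=quote)
    return urlunsplit((scheme, netloc, path, query, fragment))


# Hypothetical site and parameter names, for illustration only.
url = build_url("https://example.com/search", {"q": "alteryx designer", "page": 2})
print(url)  # https://example.com/search?q=alteryx%20designer&page=2
```

The user supplies plain names and values; the encoding of spaces, ampersands and similar characters is handled in one place.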

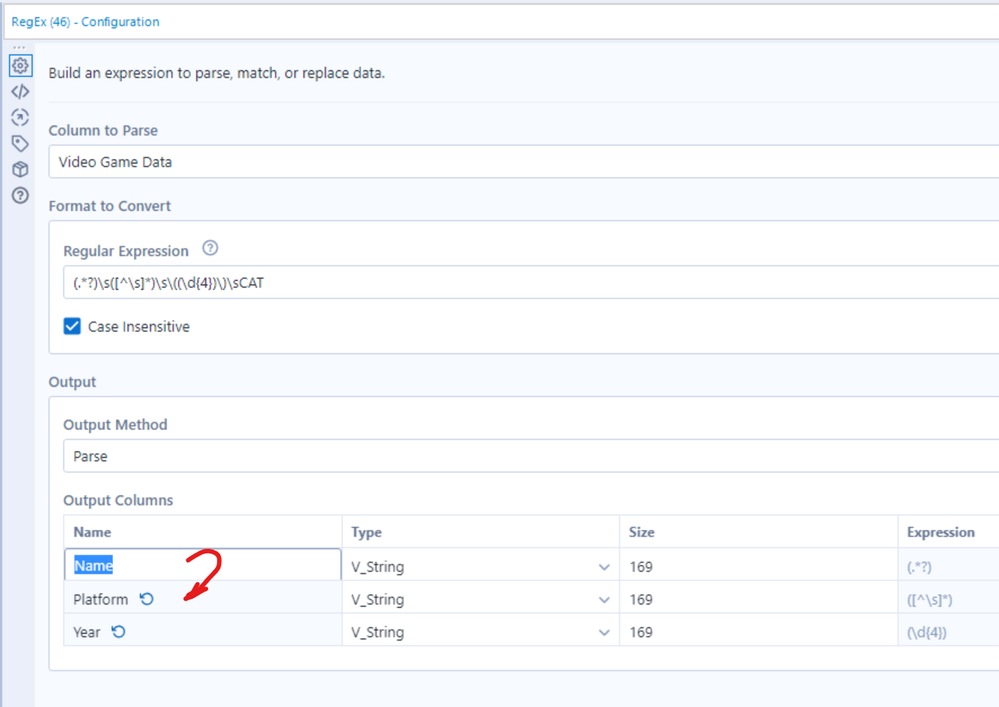
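As a rough illustration of the Content point in this idea, the sketch below uses Python's standard `html.parser` module to pull table cells out of a page instead of applying regex to the raw source. The sample markup and the `extract_rows` helper are made up for the example; real pages are messier, and this is not a description of any existing Alteryx feature.

```python
from html.parser import HTMLParser


class TableCellExtractor(HTMLParser):
    """Collect the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Text outside a table cell (scripts, headers, adverts) is ignored.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None and self._row is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None
            self._cell = None


def extract_rows(html_text):
    """Return a list of rows, each a list of cell strings."""
    parser = TableCellExtractor()
    parser.feed(html_text)
    parser.close()
    return parser.rows


# Made-up stand-in for the text that arrives in "DownloadedData".
downloaded_data = (
    "<html><script>var x = 1;</script><body><table>"
    "<tr><th>Ticker</th><th>Price</th></tr>"
    "<tr><td>ABC</td><td>12.5</td></tr>"
    "</table></body></html>"
)
print(extract_rows(downloaded_data))
```

Because the parser tracks element boundaries, script content and other unwanted parts of the page drop out without any pattern guessing.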

When entering a number of column names in the RegEx parse mode - please can you allow either Enter or down-arrow to move down to the next cell (standard windows convention)?

Currently Enter just exits the edit mode, and down-arrow does nothing.

cc: @Hollingsworth

It can be daunting to find the tool that is currently being processed by the engine in workflows that contain hundreds of tools with many ins, outs, and branches. During runtime, I want to be shown the tool that is running on the canvas. This functionality should be in the form of a button or something to direct focus to that area. It should not be the default.

My team uses a shared macro repository (say F:\AlteryxMacros), and we recently ran into an issue with the default save location for macros. While we save most macros to our repository, there are times when folks save their macros elsewhere (let's say C:\MyAwesomeWorkflow). The issue we've encountered is that if you go to file >> save as with a macro, it will ALWAYS default to the macro repository, even when my macro is currently saved elsewhere (C:\MyAwesomeWorkflow). Speaking for a friend, people have accidentally saved things to the macro repository. Or, they waste time navigating from the macro repository to their current folder.

If a macro is saved somewhere, please change the file >> save as to default to the current folder. Thanks!

The option to open Hyper files in 2019.4 is great! For some of our use cases it would be even better if we were able to directly open Hyper files that have been published to Tableau Server.

It should be possible to achieve this with the Tableau REST API method Download Data Source, which returns a Tableau Packaged Data Source (.tdsx). That file would then need to be treated as a Zip file in order to navigate to the contained Hyper file.
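The second half of that idea (getting from a .tdsx to the Hyper file inside it) could look roughly like the Python sketch below. It assumes the .tdsx has already been downloaded and can be opened as a Zip archive; the download call itself is not shown, and the helper names and the internal path used in the demo archive are hypothetical.

```python
import io
import zipfile


def list_hyper_members(tdsx_source):
    """Return the names of .hyper entries inside a .tdsx (a path or file-like object)."""
    with zipfile.ZipFile(tdsx_source) as archive:
        return [name for name in archive.namelist() if name.lower().endswith(".hyper")]


def extract_hyper(tdsx_source, member, target_dir):
    """Extract one .hyper entry into target_dir and return the extracted file's path."""
    with zipfile.ZipFile(tdsx_source) as archive:
        return archive.extract(member, path=target_dir)


# Build a small in-memory stand-in for a downloaded .tdsx (made-up contents).
demo_tdsx = io.BytesIO()
with zipfile.ZipFile(demo_tdsx, "w") as archive:
    archive.writestr("sales.tds", "<datasource/>")
    archive.writestr("Data/Extracts/sales.hyper", b"not a real hyper file")

print(list_hyper_members(demo_tdsx))  # ['Data/Extracts/sales.hyper']
```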

It would be helpful to be able to embed a macro within my workflows so in the end I have one single file.

Similar to how Excel becomes a macro enabled file, it would be great if the actual macro could be contained in the workflow. As it stands now, the macro that I insert into a workflow is similar to a linked cell in MS Excel that points to another file. If the macro is moved the workflow becomes broken. I often work on a larger workflow that I save locally while developing. Once it's complete, I then save the workflow to a network drive and have to delete the macros and reinsert these. It also makes it challenging if I were to send a workflow to someone else... I will have to give them instructions on which macros to insert and where. Similar to a container, they could be minimized so to speak to their normal icon, and then expanded/opened if any edits were needed....then collapsed when done.

Thanks for the consideration.

It would be great if we could further customize the email output in the Events section of the Workflow Configuration. Currently we can output the number of errors, warnings, etc. and the entire output log. It would be great if we could send only the error messages in an email instead of the whole output log (similar to the output of a workflow run with errors in the Alteryx Gallery). The customization in the Email Tool is great, but this isn't helpful when a scheduled workflow fails. I found this related thread on the discussion forum: https://community.alteryx.com/t5/Alteryx-Designer-Discussions/Customize-Events-Error-Message/td-p/42... Thanks!

There's often a need to do a cascade of filters which would normally be handled in a programming language by a Case or a Switch statement.

For example:

- if it's a cat then go left, otherwise go right

- if it's a dog then go left, otherwise carry on right

- if it's a fish then go left, otherwise carry on right

- otherwise do xxxx

This could be handled more elegantly by a conditional split tool that allowed you to specify multiple conditions like a case statement, and which then generated multiple output nodes; with the last one for any leftovers.
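For comparison, this is how such a case-style split might be sketched in Python: each record goes to the output of the first condition it matches, and anything left over goes to a final output. The `conditional_split` function, the output names and the sample records are illustrative assumptions, not an existing Alteryx tool.

```python
from collections import defaultdict


def conditional_split(records, cases, default="other"):
    """Route each record to the first matching case; unmatched records go to default."""
    outputs = defaultdict(list)
    for record in records:
        for name, predicate in cases:
            if predicate(record):
                outputs[name].append(record)
                break
        else:
            # No case matched: this is the "leftovers" output.
            outputs[default].append(record)
    return dict(outputs)


cases = [
    ("cat", lambda r: r["animal"] == "cat"),
    ("dog", lambda r: r["animal"] == "dog"),
    ("fish", lambda r: r["animal"] == "fish"),
]
records = [{"animal": "dog"}, {"animal": "cat"}, {"animal": "newt"}, {"animal": "dog"}]
for output_name, rows in conditional_split(records, cases).items():
    print(output_name, len(rows))
```

One ordered list of conditions replaces the chain of separate filters, which is the behaviour the proposed tool would expose as multiple output nodes.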

When a workflow has many steps, we need to use containers to better represent the business flow. A container can collapse many steps into one that represents a single business flow.

But when we open a collapsed tool container, the workflow canvas does not resize to make space for it, so the container overlaps existing tools.

It would be better for the workflow canvas to resize when we expand, collapse, or resize tool containers.

We need some way (unless one exists that I am unaware of - beyond disabling all but the Container I want to run) to fire off containers in a particular order. Run Container "Step1" then Run Container "Step2" and so on.

In some of our larger workflows it's sometimes tedious to run the whole workflow just to see some data after adding something at the beginning. Running it and stopping it as soon as the tool gets a green border is sometimes an option.

It would be convenient to have an option in the context menu to run a workflow only until a specific tool.

In effect, only this specific tool has an output visible for inspection and only the streams necessary for this tool have been run - everything else is ignored and I'm fine to not see data for the other tools.

This would speed up the development of small parts of a larger workflow and make it much more convenient.

Regards

Christopher

PS: Yes, I can put everything else in a container and deactivate it. But a straightforward way without turning containers on and off would be preferable in my opinion. (I think KNIME has something similar.)
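The "only the streams necessary for this tool" part of this request amounts to finding everything upstream of the selected tool. A minimal Python sketch of that idea follows, assuming the workflow can be described as a list of (source tool, destination tool) connections; the tool names and the `upstream_tools` helper are hypothetical.

```python
def upstream_tools(connections, target):
    """Return target plus every tool whose output feeds it, directly or indirectly."""
    parents = {}
    for source, dest in connections:
        parents.setdefault(dest, set()).add(source)

    needed, stack = set(), [target]
    while stack:
        tool = stack.pop()
        if tool not in needed:
            needed.add(tool)
            stack.extend(parents.get(tool, ()))
    return needed


# Hypothetical workflow: two inputs feed a join; a side branch feeds a browse.
connections = [
    ("Input", "Filter"),
    ("Filter", "Join"),
    ("Lookup", "Join"),
    ("Join", "Output"),
    ("Filter", "Browse"),
]
print(sorted(upstream_tools(connections, "Join")))  # ['Filter', 'Input', 'Join', 'Lookup']
```

Everything outside the returned set ("Output" and "Browse" here) could be skipped when running up to the chosen tool.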

Make the Container Caption Font Size Adjustable

I find it helpful to see the entire workflow at once. It would be very helpful for the container caption font size to be adjustable. For example, I am documenting a workflow with many containers and tools. The containers represent segments of my workflow. When I am looking at or printing the entire workflow, the container heading is too small to be read. If the font size were adjustable, it could be increased to be readable and still fit easily into the length of the container.

Thanks to zuojing80 and tcroberts for their comments on 9/10/2018.

@AdamR_AYX did a talk this year at Inspire EU about testing Alteryx Canvasses - and it seems that there is a lot we can do here to improve the product:

https://www.youtube.com/watch?v=7eN7_XQByPQ&t=1706s

One of the biggest and most impactful changes would be support for detailed unit testing for a canvas - this could work much like it does in Visual Studio:

Proposal:

In order to fully test a workflow - you need 3 things:

- Ability to replace the inputs with test data

- Ability to inspect any exceptions or errors thrown by the canvas

- Ability to compare the results to expectation

To do this:

- Create a second tab behind a canvas which is a Testing view of the canvas which allows you to define tests. Each test contains values for one or more of the inputs; expected exceptions / errors; and expected outputs

- Alteryx then needs to run each of these tests one by one - and for each test:

- Replace the data inputs with the defined test input.

- Check for, and trap errors generated by Alteryx

- Compare the output

- Generate a test score (pass or fail against each test case)

This would allow:

- Each workflow / canvas to carry its own test cases

- Automated regression testing overnight for every tool and canvas

Example:

For this canvas - there are 2 inputs; and one output.

Each test case would define:

- Test rows to push into input 1

- Test rows to push into input 2

- any errors we're expecting

- The expected output of the browse tool

This would make Alteryx SUPER robust and allow people to really test every canvas in an incredibly tight way!
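To make the proposal concrete, here is a small Python sketch of the same structure: each test case carries replacement inputs, an expected error (if any) and an expected output, and a runner scores each case as pass or fail. The workflow is stood in for by an ordinary function; `WorkflowTest`, `run_tests` and `join_workflow` are invented for illustration and do not correspond to any Alteryx API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class WorkflowTest:
    name: str
    inputs: dict                              # input name -> list of test rows
    expected_output: list = field(default_factory=list)
    expected_error: Optional[type] = None     # exception type we expect, if any


def run_tests(workflow, tests):
    """Run each test one by one and return {test name: True for pass, False for fail}."""
    results = {}
    for test in tests:
        try:
            actual = workflow(**test.inputs)  # replace the inputs with test data
        except Exception as exc:              # trap errors raised by the workflow
            results[test.name] = (
                test.expected_error is not None
                and isinstance(exc, test.expected_error)
            )
        else:                                 # compare the output to expectation
            results[test.name] = (
                test.expected_error is None and actual == test.expected_output
            )
    return results


def join_workflow(input1, input2):
    """Hypothetical stand-in for a canvas with two inputs and one output."""
    lookup = {row["id"]: row["region"] for row in input2}
    return [{**row, "region": lookup[row["id"]]} for row in input1]


tests = [
    WorkflowTest(
        name="matching ids",
        inputs={"input1": [{"id": 1}], "input2": [{"id": 1, "region": "EU"}]},
        expected_output=[{"id": 1, "region": "EU"}],
    ),
    WorkflowTest(
        name="missing id raises",
        inputs={"input1": [{"id": 2}], "input2": []},
        expected_error=KeyError,
    ),
]
print(run_tests(join_workflow, tests))
```

Because the test cases are plain data, they could travel with the workflow and be rerun unattended, which is the regression-testing benefit described above.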

Love the functionality to create filters on the Calgary database, but it would be nice to be able to select the columns you want returned. There are times when you only want a couple of columns, but the input tool returns all columns, creating a larger dataset than required. You can add a select right after the input, but this is after the entire dataset has been loaded into memory. Combining the two would make the Calgary input tool behave more like a database than a standard "dumb" input source.

It would be a handy feature if it were possible to choose a data type for an input tool to read the data in as. For example, if a dataset has multiple fields with different data types, it would be handy to be able to make the Input Tool read and output them all as a string, if needed. This would also make a handy tool, a sort of blanket data conversion to convert all fields to the specified type.

A default file path in the "File Browser" interface app would be a nice-to-have feature, similar to what we have in the Numeric, Text, etc. interface apps.

The format of these is always:

For Excel, create a summary sheet and set as the first tab, then create detailed sheets as additional tabs in the same .xlsx file.

The summary sheet always has the same fields, but the fields may reference different detail tabs day to day.

After the output, I can manually open Excel and change the field to a formula that references the other tabs (hyperlink function).

It would be great if I could just type the hyperlink formula in Alteryx and have that embedded into the Excel output.

The same goes for PDFs, except I would reference other pages (or if using PDF portfolio I would reference other PDFs in the same portfolio).
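For the Excel case, the formula text itself is easy to generate; a rough Python sketch is below. It assumes Excel's `HYPERLINK` function with a `#'Sheet'!Cell` target for links inside the same workbook. The `sheet_link_formula` helper and the sheet names are hypothetical, and whether a given output writer stores the string as a live formula rather than plain text is outside the scope of the sketch.

```python
def sheet_link_formula(sheet_name, label, cell="A1"):
    """Build an Excel HYPERLINK formula that jumps to a cell on another sheet."""
    quoted_sheet = sheet_name.replace("'", "''")  # apostrophes in sheet names are doubled
    safe_label = label.replace('"', '""')         # double quotes in formula strings are doubled
    return f'=HYPERLINK("#\'{quoted_sheet}\'!{cell}", "{safe_label}")'


# Made-up detail tab names that change from day to day.
for tab in ["Detail 2024-01-05", "Bob's Accounts"]:
    print(sheet_link_formula(tab, f"Go to {tab}"))
```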

Both Input and Output tools should have the ability to read or write any file type from/into standard compression types (ZIP and GZIP). This would be helpful when managing large files.
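As a point of reference, reading and writing a compressed file directly is a small amount of code in a general-purpose language. The Python sketch below round-trips a CSV through GZIP using only the standard library; the file name, helper names and sample rows are made up, and ZIP archives could be handled along the same lines with the `zipfile` module.

```python
import csv
import gzip
import os
import tempfile


def write_csv_gz(path, rows, fieldnames):
    """Write rows (dicts) as a GZIP-compressed CSV file."""
    with gzip.open(path, "wt", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)


def read_csv_gz(path):
    """Read a GZIP-compressed CSV file without unpacking it to disk first."""
    with gzip.open(path, "rt", newline="", encoding="utf-8") as handle:
        return list(csv.DictReader(handle))


# Round trip through a temporary file; all values come back as strings.
with tempfile.TemporaryDirectory() as folder:
    target = os.path.join(folder, "orders.csv.gz")
    write_csv_gz(target, [{"id": "1", "amount": "9.50"}], ["id", "amount"])
    print(read_csv_gz(target))
```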

At present - to identify the dependencies of your workflow - you have to go to "Advanced Settings" to find this critical capability.

(see @MattB 's great post here: https://community.alteryx.com/t5/Alteryx-Knowledge-Base/Workflow-Dependencies/ta-p/49696 )

Could we instead move this to the workflow properties on the left hand side - this would be a more logical place to keep this info.

CC: @rijuthav; @jithinmony; @HengHe; @RajK; @ydmuley; @revathi; @Deeksha; @MPistone; @Ari_Fuller; @Arianna_Fuller; @JoshKushner; @samN; @avinashbonu; @Sunder_Sriram; @Rahul_Thakur; @Rahul_Sing

It would be really nice if we could save our own custom color palette when coloring tool containers and comments.

I use colors to define the purpose of my tool containers and it would be much easier if I could select a labeled, reusable color.
