Alteryx Designer Desktop Ideas

Share your Designer Desktop product ideas - we're listening! Submitting an Idea?

Be sure to review our Idea Submission Guidelines for more information!


Featured Ideas

Hello,

After using the new "Image Recognition Tool" for a few days, I think you could improve it:

> by adding the dimensional constraints next to each of the pre-trained models,

> by adding a proper tool to split the training data correctly (so that each label has an equivalent number of images),

> and at the very least, by allowing the tool to use black & white images (I wanted to test it on MNIST, but the tool tells me it requires RGB images).

Question: will you, in the future, allow the user to choose between CPU and GPU usage?

In any case, thank you again for this new tool. It can certainly be improved, but it is very simple to use, and I sincerely think it will help many more people understand the many use cases that image recognition makes possible.

Thank you again

Kévin VANCAPPEL (France ;-))


Would it be possible to change the default setting for writing to a .tde output to "overwrite file" rather than "create new file"? Writing to a .yxdb automatically overwrites the old file, but for some reason we have to make that change manually when writing to a .tde output. I can't tell you how many times I've run a module and had it error out at the end because it couldn't create a new file, since the module had already been run once before!

Thanks!

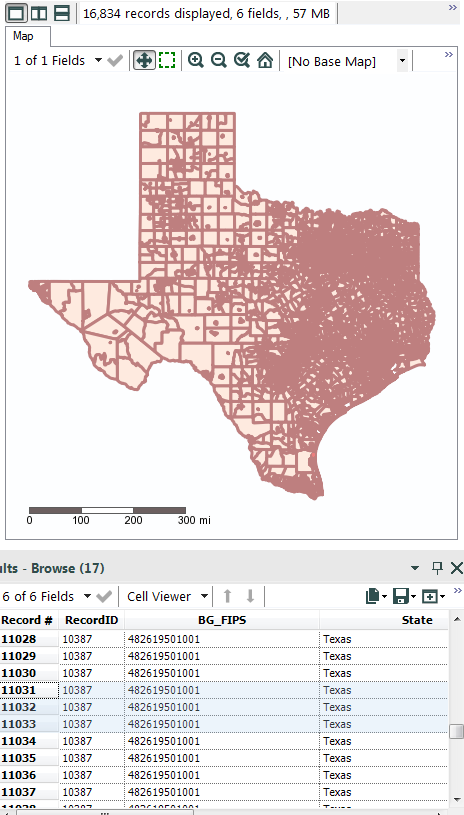

When viewing spatial data in the browse tool, the colors that show a selected feature from a non-selected one are too similar. If you are zoomed out and have lots of small features, it's nearly impossible to tell which spatial feature you have selected.

It would be great to give users the option to specify the border and/or fill color for selected features, which would really help them stand out. A custom color option would also be nice, so we can choose a color that is consistent with other GIS software we may use.

As an example, I attached a picture where I have 3 records selected, but it takes some scanning to find where they are on the "map".

Thanks

As I understand it, SFTP support is planned for the next release (10.5). Are there plans to support PKI-based authentication as well?

This would be handy, as lots of companies move files around with third parties (and sometimes internally), and being able to automate these processes would be very helpful. Also, some company policies prohibit relying on username/password authentication alone.

Anybody else have this requirement? Comments?

Very confusing.

DateTimeFormat

- Format string - %y is a 2-digit year, %Y is a 4-digit year. How about yy or yyyy? Much easier to remember, and consistent with other tools like Excel.

DateTimeDiff

- Format string - 'year', but in the function above the year is referenced as %y? Too easy to mix this up.

Also, the documentation is limited. Give each function its own page, plus an overview page that discusses date handling.
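To make the two conventions concrete, here is a minimal Python sketch of a hypothetical translator from the yy/yyyy-style tokens the post asks for into the %y/%Y specifiers described above. The token table and the name `excel_to_strftime` are illustrative assumptions (MM is read as month, dd as day). This is not an existing Alteryx feature.

```python
from datetime import datetime

# Hypothetical token table: yy/yyyy-style tokens -> strftime-style specifiers.
# Longer tokens come first so "yyyy" is matched before "yy".
TOKENS = [
    ("yyyy", "%Y"),  # 4-digit year
    ("yy", "%y"),    # 2-digit year
    ("MM", "%m"),    # 2-digit month
    ("dd", "%d"),    # 2-digit day
    ("%", "%%"),     # escape a literal percent sign
]


def excel_to_strftime(fmt: str) -> str:
    """Translate a pattern such as 'yyyy-MM-dd' into '%Y-%m-%d'."""
    out = []
    i = 0
    while i < len(fmt):
        for token, spec in TOKENS:
            if fmt.startswith(token, i):
                out.append(spec)
                i += len(token)
                break
        else:
            # Not a token: copy the character through unchanged.
            out.append(fmt[i])
            i += 1
    return "".join(out)


print(datetime(2016, 3, 7).strftime(excel_to_strftime("yyyy-MM-dd")))  # 2016-03-07
```

Matching the longest token first ensures that yyyy is never read as two yy tokens.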

The Field Summary tool is a very useful addition for quickly creating data dictionaries and analysing data sets. However, it ignores Boolean data types and raises a strange conversion error, 'DATETIMEDIFF1: "" is not a valid DateTime', with no indication that it doesn't handle Boolean fields. (I'm guessing this error relates to the Boolean fields, as there's no other indication of an issue and the actual DateTime fields pass through the tool without problems.)

Using the Field Summary tool can therefore give a misleading picture of the contents of files with many fields, because it silently ignores fields of any data type it doesn't handle.

The only way to get a view of all fields in the table is the Field Info tool, which is also very useful. However, it should not be necessary to 'left join' (in the SQL sense) Field Info and Field Summary to get a reliable overview of the file being analysed.

Therefore can the Field Summary tool be altered to at least acknowledge the existence of all data types in the file?
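The 'left join' workaround described above can be sketched in Python as follows. The field list and the shape of the summary dictionary are hypothetical stand-ins for the Field Info and Field Summary outputs, not their actual schemas. The idea is that every field is kept, and any field the summary skipped is flagged.

```python
from typing import Dict, List, Tuple


def left_join_fields(field_info: List[Tuple[str, str]],
                     field_summary: Dict[str, dict]) -> List[dict]:
    """Keep every (name, type) pair from field_info and attach summary stats
    where the summary has them; fields with no stats are flagged."""
    rows = []
    for name, dtype in field_info:
        stats = field_summary.get(name)
        rows.append({
            "name": name,
            "type": dtype,
            "summarized": stats is not None,  # False = skipped by the summary
            "stats": stats or {},
        })
    return rows


# Hypothetical inputs: the Boolean field is absent from the summary.
info = [("CustomerID", "Int32"), ("Active", "Bool"), ("Joined", "DateTime")]
summary = {"CustomerID": {"unique": 3}, "Joined": {"min": "2016-01-01"}}
skipped = [r["name"] for r in left_join_fields(info, summary) if not r["summarized"]]
print(skipped)  # ['Active']
```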

I have run into an issue where the progress indicator does not show the correct number of records after certain steps in my workflow. It was explained to me that this is because only a certain amount of data is "cached", so the count is based on that. If I add a Browse tool, I can see the data properly.

For my team and me, this is a real inconvenience. We have grown to rely on the counts that appear after each tool. The point of "show progress" is that I don't have to insert a Browse tool after every step, which takes up less space on my computer. I would like to see the actual numbers again. I don't see why this changed in the first place.

Under Options/Restore Defaults, it would be nice if the canvas could be reset (I sometimes lose windows) while leaving the favorites intact.

Thanks!

Susan

I have a very large geospatial point dataset (~950GB). When I do a spatial match of this dataset against a small polygon, the entire point dataset has to be read into the tool before the geospatial query can be performed. I suspect the query could be sped up significantly if the geospatial data were indexed (referenced) to a grid (or multiple grids). The query could then first identify the general area of overlap and extract the data for just that area before performing the precise geoquery. I believe Oracle used (uses) this method of storing and referencing geospatial data.
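The grid idea can be sketched as a two-phase query. First, bucket the points into fixed-size cells once. Then, for a given polygon, visit only the cells under its bounding box and run an exact point-in-polygon test on those candidates. This is a minimal in-memory Python illustration that assumes a uniform grid and hypothetical names (`build_grid`, `query`). Production spatial databases typically use more elaborate structures such as R-trees, and this sketch says nothing about how Alteryx's spatial tools work internally.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Grid = Dict[Tuple[int, int], List[Point]]


def build_grid(points: List[Point], cell: float) -> Grid:
    """Bucket each point into the grid cell that contains it (done once)."""
    grid: Grid = defaultdict(list)
    for x, y in points:
        grid[(int(x // cell), int(y // cell))].append((x, y))
    return grid


def point_in_polygon(pt: Point, poly: List[Point]) -> bool:
    """Ray casting: toggle on each polygon edge crossed by a ray going right."""
    x, y = pt
    inside = False
    for i in range(len(poly)):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % len(poly)]
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def query(grid: Grid, cell: float, poly: List[Point]) -> List[Point]:
    """Coarse filter: cells under the polygon's bounding box; then exact test."""
    xs = [p[0] for p in poly]
    ys = [p[1] for p in poly]
    hits = []
    for cx in range(int(min(xs) // cell), int(max(xs) // cell) + 1):
        for cy in range(int(min(ys) // cell), int(max(ys) // cell) + 1):
            for pt in grid.get((cx, cy), []):
                if point_in_polygon(pt, poly):
                    hits.append(pt)
    return hits


points = [(0.5, 0.5), (1.5, 1.5), (5.0, 5.0), (9.5, 9.5)]
grid = build_grid(points, cell=1.0)
square = [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0)]
print(sorted(query(grid, 1.0, square)))  # [(0.5, 0.5), (1.5, 1.5)]
```

Only the points in the cells touched by the polygon's bounding box are ever examined. The far-away points are skipped without being tested.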

I see mentions of VR, but has anyone talked about touch screen capabilities for Alteryx? It would make even the tough projects more fun!

I think the scheduler should include another frequency option: every other week. Let's say I want a workflow to run every other Monday. There is currently no simple way to do this. There are some workarounds, but they are not ideal and involve additional manual work, which defeats the purpose of having a scheduler.
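The "every other Monday" rule is simple date arithmetic once an anchor date (the first run) is fixed: a date qualifies if it falls a multiple of 14 days after the anchor. Here is a minimal Python sketch using a hypothetical helper, `every_other_week`. It is not a feature of the Alteryx scheduler.

```python
from datetime import date, timedelta
from typing import List


def every_other_week(anchor: date, start: date, count: int) -> List[date]:
    """Return `count` run dates on or after `start` that fall a whole
    number of two-week periods after `anchor` (the first scheduled run)."""
    start = max(start, anchor)
    days_since = (start - anchor).days
    # Round up to the next multiple of 14 days (ceiling division).
    offset = -(-days_since // 14) * 14
    first = anchor + timedelta(days=offset)
    return [first + timedelta(weeks=2 * i) for i in range(count)]


runs = every_other_week(date(2024, 1, 1), date(2024, 1, 10), 3)
print([d.isoformat() for d in runs])  # ['2024-01-15', '2024-01-29', '2024-02-12']
```

Because every run is anchored to a Monday (2024-01-01), all generated dates are also Mondays.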

Hello all,

It would be great if there were an option to specify a SQL statement, or to delete based on a condition, in the Write In-DB tool. Currently we have to delete all records, even when we only want to delete and append a subset of records. If it allowed at least a "WHERE" clause, it would be very useful. I have a long post going on about this requirement at http://community.alteryx.com/t5/Data-Preparation-Blending/Is-there-a-way-to-do-a-delete-statement-in... .

Regards,

Jeeva.
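The requested behavior is to delete only the rows that match a condition and then append replacements. The sketch below shows roughly how that looks in plain SQL, using Python's built-in sqlite3 module as a stand-in database. The `sales` table, its columns and the `replace_subset` helper are hypothetical, and the sketch does not reflect how the Write In-DB tool is implemented.

```python
import sqlite3
from typing import List, Tuple


def replace_subset(conn: sqlite3.Connection, region: str,
                   rows: List[Tuple[str, int]]) -> None:
    """Delete only the rows matching a WHERE condition, then append new ones.
    Both statements run in one transaction: committed together on success,
    rolled back together on error."""
    with conn:
        conn.execute("DELETE FROM sales WHERE region = ?", (region,))
        conn.executemany("INSERT INTO sales (region, amount) VALUES (?, ?)", rows)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
conn.executemany("INSERT INTO sales VALUES (?, ?)", [("East", 10), ("West", 20)])
conn.commit()

replace_subset(conn, "East", [("East", 11), ("East", 12)])
print(sorted(conn.execute("SELECT region, amount FROM sales").fetchall()))
# [('East', 11), ('East', 12), ('West', 20)]
```

Wrapping the delete and the append in a single transaction means other readers never see the subset missing between the two steps.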

I recently began working with chained analytic apps. One thing I wanted to do was take the values selected by the end user at each stage of the app and pass them further down the chain. I was able to do this by writing the selected values to Alteryx databases and then using drop-downs to pull the data into subsequent apps. However, I was wondering whether there is a better way of accomplishing this. One reason is that, with my approach, I wind up with several additional drop-downs in my interface, which I really don't want. If there's a way around this, I'd love to hear it. Alternatively, if Alteryx could support something like this in the future, I think it would be really helpful.

The "idea" here is to allow any configuration setting of any tool used in a macro to be exposed, if desired, to the outside world. That way, when the macro is used in a parent workflow, the embedded tool's configuration setting is directly available to the parent workflow.

A benefit here would be the ability for users to more easily build custom tools based on the existing tools: e.g. send all inputs and outputs through a validation phase of arbitrary complexity, while leaving the "integration layer" of the encapsulated tool untouched.

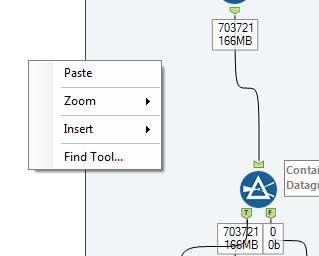

When building workflows, it would be nice to have "Save Workflow" and "Run Workflow" added to the right click menu when in the canvas.

Add to Right Click menu:

Save Workflow

Run Workflow

I would love the R tool editor to work like a standard text field. It might be better explained with this scenario. Pretend the character text below is a script you've written, with the function at the top. Say you'd like to move the function closer to the script, then look at the weird output. This editor pastes text as if we were pasting images.

My use case is that I like to break my code into mini functions that I work on in the R console with sample data. Once a function works, I paste it into Alteryx and experiment with it on a small sample, then a larger one. Having to juggle my overall R code in Notepad++, my function, and the console is a bit of a nuisance, especially since I usually have to go back and forth between multiple functions. I am not asking for a full-blown editor (I like Notepad++ for that), just a text input that works conventionally.

SampleFunction <- function(x)

{

print(x)

}

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

When you paste the function (or any other text) into the middle, look at this funky output:

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

SampleFunction <- function(x)abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

{abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

print(x)abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

}abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz

abcdefghijklmnopqrstuvwxyz abcdefghijklmnopqrstuvwxyz
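The funky output looks like what a rectangular (column-mode) paste produces, as opposed to a conventional stream paste. We don't know how the R tool editor is implemented. This minimal Python sketch, with hypothetical `stream_paste` and `block_paste` helpers operating on a list of lines, only illustrates the difference between the two behaviors.

```python
from typing import List


def stream_paste(lines: List[str], row: int, col: int, clip: List[str]) -> List[str]:
    """Conventional paste: splice the clipboard in as a stream of text,
    so pasted lines push the following lines down."""
    if not clip:
        return list(lines)
    before, after = lines[row][:col], lines[row][col:]
    if len(clip) == 1:
        return lines[:row] + [before + clip[0] + after] + lines[row + 1:]
    middle = [before + clip[0]] + clip[1:-1] + [clip[-1] + after]
    return lines[:row] + middle + lines[row + 1:]


def block_paste(lines: List[str], row: int, col: int, clip: List[str]) -> List[str]:
    """Rectangular paste: each clipboard line is inserted into a successive
    existing line at the same column, gluing it onto that line's text."""
    out = list(lines)
    for i, piece in enumerate(clip):
        r = row + i
        if r < len(out):
            out[r] = out[r][:col] + piece + out[r][col:]
        else:
            out.append(" " * col + piece)  # pad new lines out to the column
    return out


text = ["abc"] * 4
print(block_paste(text, 1, 0, ["f(x)", "{", "}"]))
# ['abc', 'f(x)abc', '{abc', '}abc']
print(stream_paste(text, 1, 0, ["f(x)", "{", "}", ""]))
# ['abc', 'f(x)', '{', '}', 'abc', 'abc', 'abc']
```

In `stream_paste`, the trailing empty string stands for a final newline on the clipboard. The `block_paste` result shows the same pattern as the output in the post.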

The option to "Allow Shared Write Access" is only available under CSV and HDFS CSV in the Data Input tool. It would be very helpful to have this feature also included for Excel files.

Improve the Hive connector and add support for writing data.

Regards,

Cristian.

Hive

| Property | Value |
|---|---|
| Type of Support | Read-only |
| Supported Versions | 0.7.1 and later |
| Client Versions | -- |
| Connection Type | ODBC |
| Driver Details | The ODBC driver can be downloaded here. Read-only support to Hive Server 1 and Hive Server 2 is available. |

I would like to suggest considering a new SDK based on the Lua language.

Why Lua?

- It is open source (MIT license)
- It is portable
- It is fast
- It is powerful and simple
- Lua has been used to extend programs written in C, C++, Java, C#, Smalltalk, Fortran, Ada, and Erlang

Sometimes we have workflows that fail, and it is a pain to reschedule them. I wish there were a button in the bottom right corner of each workflow's results, just like Output.

It would be really useful to have a Join function that updated an existing file (not a database, but a flat or yxdb file).

The rough SAS equivalents are the UPDATE and MODIFY statements:

http://support.sas.com/documentation/cdl/en/basess/58133/HTML/default/viewer.htm#a001329151.htm

The goal would be to have a join function that would allow you to update a master dataset's missing variables from a transaction database and, optionally, to overwrite values on the master data set with current ones, without duplicating records, based on a common key.

The use case is you have an original file, new information comes in and you want to fill in the data that was originally missing without overwriting the original data (if there is data on the transaction file for that variable). In this case only missing data is changed.

Or, as a separate use case, you had original data that has since been updated, and you do want to overwrite it. In this case any variable with new values is updated, and variables without new values are left unchanged.

Why this is needed: if you don't have an Oracle-type database, this task is difficult to do inside Alteryx, and information changes over time. Customers buy new products, customers update their profiles, or you have a file that is missing some data and want to merge it with a file that has better data for the missing values but worse data for existing values (because it is from a different time period, e.g. older). In theory you could do this with "IF IsNull() THEN replace" statements, but you'd have to build one for each variable and maintain a long data flow to capture the correct updates. For now, it is much faster to do this in SAS and import the updated file back into Alteryx.
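The two requested merge modes can be sketched in Python on lists of dictionaries that share a common key field. The name `update_master`, the `overwrite` flag and the treatment of `None` as "missing" are assumptions made for illustration. The sketch is not meant to reproduce SAS UPDATE/MODIFY semantics exactly; for example, it ignores transaction keys that have no master record.

```python
from typing import Dict, List, Optional

Record = Dict[str, Optional[object]]


def update_master(master: List[Record], transactions: List[Record],
                  key: str, overwrite: bool = False) -> List[Record]:
    """Merge transaction values into master records by a common key.

    overwrite=False: only fill fields that are missing (None) in master.
    overwrite=True: any non-missing transaction value replaces the master value.
    Master records are copied, never duplicated; unmatched keys are ignored.
    """
    # Collapse transactions per key, keeping only non-missing values
    # (later transactions for the same key win).
    by_key: Dict[object, Record] = {}
    for t in transactions:
        by_key.setdefault(t[key], {}).update(
            {k: v for k, v in t.items() if v is not None})
    result = []
    for rec in master:
        merged = dict(rec)
        for field, value in by_key.get(rec[key], {}).items():
            if field == key:
                continue
            if overwrite or merged.get(field) is None:
                merged[field] = value
        result.append(merged)
    return result


master = [{"id": 1, "email": None, "phone": "555"},
          {"id": 2, "email": "a@x", "phone": None}]
tx = [{"id": 1, "email": "new@x", "phone": "999"},
      {"id": 2, "email": "b@x", "phone": None}]
print(update_master(master, tx, "id"))                  # fill missing only
print(update_master(master, tx, "id", overwrite=True))  # overwrite with current
```

The first call fills only the empty email on record 1. The second call also replaces record 1's phone and record 2's email. In both cases, record 2's phone stays missing because the transaction file has no value for it.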
