Data Science

Machine learning & data science for beginners and experts alike.- Community

- :

- Community

- :

- Learn

- :

- Blogs

- :

- Data Science

- :

- Data-Science Design Patterns: Cost-Sensitive Lear...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

We have already seen that predictive models usually involve several optimization problems, including variable selection, transformation selection, and fitting. We can also search over the space of possible induction algorithms or, more generally, the space of possible models, to find the most effective induction algorithm or model. This post however assumes that we have fixed model form, except for specifying the fitness function and then fitting the model (choosing optimal induction-algorithm parameters). We focus here on one aspect of specifying an optimal fitness function.

The phrase ‘optimal fitness function’ sounds like an oxymoron. We use a fitness function to optimize the induction algorithm’s parameter values. In what sense can we optimize the fitness function before using it to optimize something else?

The Fundamental Pattern of Data Science

To answer this question we must consider the overall goal of a predictive-modeling exercise. With few exceptions the overall goal is to minimize some real-world cost. (Remember that maximizing some function f(x) is the same as minimizing –f(x), so whether we speak of minimizing a cost or maximizing a benefit, we’re saying the same thing.) For example:

- A clinical-medicine model predicting a procedure’s outcomes may seek to minimize the procedure’s rate of loss of life.

- A pricing and revenue optimization model of a retail product’s price elasticity of demand may seek to maximize revenue per time period.

- An industrial-engineering model of a manufacturing process may seek to minimize defects per time period.

Prediction in such contexts is merely a means to an optimization end. This is the fundamental design pattern of data science: every advanced-analytics problem is ultimately an empirical optimization problem.

Much of the work in the disciplines of operations research, industrial engineering, and management science amounts to modeling optimization problems. In this post we’ll consider an elementary pattern for turning a predictive model into an optimization model, and its application to a common problem type.

Cost-Sensitive Learning

Most extant data-science models use a fitness function that has some unitless subset of the real numbers as its range. The function’s optimal value has no empirical interpretation. It’s just the lowest value the fitting algorithm could find. The fitness function is designed to penalize specific kinds of formal errors (such as large residuals and large numbers of model features), and the values of the fitted induction algorithm’s parameters reflect these formal concerns.

Cost-Sensitive Regression

In cost-sensitive regression we modify the fitness function so it weighs each residual by its cost. For example, suppose an inventory-management model predicts demand for a given good at a given retail outlet in a given time period. The retail outlet can only receive one shipment of the good at the start of the period. If demand exceeds supply, the retail outlet loses sales revenue. (This is the cost of underestimating demand.) If supply exceeds demand, the retail outlet must dispose of excess supply at some cost. (This is the cost of overestimating demand.) We could then choose the parameters of the model’s induction algorithm in a way that minimized the retail good’s expected cost at the end of the time period, over some training set of historical data.

Cost-Sensitive Classification

In cost-sensitive classification we assign a cost to each type of classification. For the remainder of this post we’ll focus on binary classification as an example. A binary-classification model has four possible outcomes for each class assignment (retrodiction or prediction), often represented by the model’s confusion matrix. The cost of each type of assignment may be distinct:

|

Assigned Class |

Actual Class |

|

|

negative |

positive |

|

|

negative |

c(true negative) |

c(false positive) |

|

positive |

c(false negative) |

c(true positive) |

Table 1: Costs of Misclassification

We incorporate these costs into the classification model’s fitness function, and the model’s fitting algorithm minimizes the total cost of class assignments over the training data. Assuming the distribution of classes in the training data is consistent with the distribution of classes in the model’s sample space, the model assigns class labels in a way that minimizes each prediction’s expected cost.

The Class-Imbalance Problem

The class-imbalance problem occurs when there is substantial imbalance in the distribution of values (termed class labels) in a supervised-classification problem’s dependent variable. We focus here on the common case of imbalanced binary classification, in which the positive (minority) class occurs far less frequently than the negative (majority) class. Common examples include fraud detection, in which the positive class is the set of fraudulent transactions; customer churn, in which the positive class is the set of customers that stop being customers in a given short time period; and medical diagnostics, in which the positive class is the set of patients having a given illness.

Applying a cost-insensitive classification algorithm to an instance of the class-imbalance problem leads to the accuracy paradox. For example, suppose a certain type of cancer occurs in 1% of patients undergoing CT scans designed to detect the cancer. A classification algorithm could achieve 99% accuracy by assigning all patients to the negative class. We would not consider such a model useful.

Example 1: Cost-Sensitive Classification with rpart()

Cost-sensitive classification is a design pattern for the class-imbalance problem. One way to achieve cost-sensitive binary classification in R is to use the rpart (decision tree) algorithm. This algorithm is built into Alteryx’s Decision Tree tool, but unfortunately that tool does not yet expose the loss (cost) matrix of the rpart() function. (It exposes case weighting, which is another approach to imbalanced classification. We recommend cost-sensitive classification as the more direct route to honoring the fundamental design pattern.)

This example shows you how to do cost-sensitive classification with rpart() in R, taking the caret package’s GermanCredit dataset as an example. The dataset has n = 1,000 and p = 61. We predict the binary column GermanCredit$Class, which has 300 ‘Bad’ values and 700 ‘Good’ values, making it mildly imbalanced. Let’s assume a false negative costs $100, while a false positive costs $5, with these costs adjusted so that the costs (and benefits) of correct classifications are zero:

library(caret)

library(rpart)

data(GermanCredit, package = "caret")

# > str(GermanCredit)

# 'data.frame': 1000 obs. of 62 variables

# > table(GermanCredit$Class)

# Bad Good

# 300 700

false_positive_cost <- 5

false_negative_cost <- 100

rpart_model <- rpart(

Class ~ .,

data = GermanCredit,

method = "class",

parms = list(

split = "information",

loss = matrix(

# Each entry is L(actual, predicted).

# L must have zero diagonals.

c(

0, false_negative_cost, # L(Bad, Good)

false_positive_cost, 0 # L(Good, Bad)

), # default losses are 0/1

byrow = TRUE,

nrow = 2

)

),

control = rpart.control(

usesurrogate = 0,

maxsurrogate = 0

)

)

plotcp(rpart_model)

printcp(rpart_model)

# Let’s use cp = 0.011.

rpart_model <- prune(

rpart_model,

cp = 0.011

)

rpart_prediction_v <- predict(

object = rpart_model,

newdata = GermanCredit,

type = "class"

)

rpart_prediction_v <- as.numeric(rpart_prediction_v)

actual_v <- as.numeric(GermanCredit$Class)

match_count <- sum(rpart_prediction_v == actual_v)

true_positive_count <- sum(

rpart_prediction_v * actual_v == 1

)

true_negative_count <- sum(

rpart_prediction_v * actual_v == 4

)

false_positive_count <- sum(rpart_prediction_v < actual_v)

false_negative_count <- sum(rpart_prediction_v > actual_v)

total_cost <-

false_positive_cost * false_positive_count +

false_negative_cost * false_negative_count

expected_cost <- total_cost / nrow(GermanCredit)

# > match_count

# [1] 547

# > true_positive_count

# [1] 0

# > true_negative_count

# [1] 1

# > false_positive_count

# [1] 453

# > false_negative_count

# [1] 0

# > expected_cost

# [1] 2.265

Figure 1: Cost-Sensitive rpart() Model

We see that the (adjusted) expected cost of a classification is about $2.27, or 2.27% of the cost of a false negative, even though the accuracy (match rate) is only about 55%. If we had used the default loss matrix (which has unity losses off the diagonal) to train the model (and chosen an appropriate cp), the expected cost would have been $15.18, nearly seven times higher!

In this example we embedded the cost matrix directly in the model’s fitness function. When a classifier produces classification probabilities, we can instead tune the decision-threshold probability to minimize expected cost, rather than to optimize a purely formal criterion. Our next example illustrates this wrapper method.

Example 2: Cost-Sensitive Classification with Logistic Regression in Alteryx

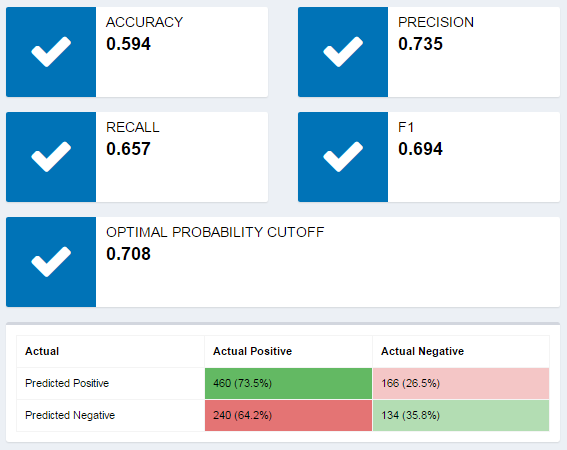

Let’s repeat the GermanCredit analysis using the new regularized, cross-validated version of the Alteryx Logistic Regression tool, which is based on R’s cv.glmnet() in the glmnet package. The logistic-regression model yields class probabilities, and the new Logistic Regression tool’s interactive report displays a probability threshold (0.708) that is optimal in the sense of maximizing the sum of the classifier’s sensitivity and specificity, two common formal measures of classification model quality. (This is a common formal criterion for selecting the probability threshold.)

Figure 2: Logistic-Regression Workflow

Figure 3: Interactive Report

We continue the analysis by replicating the model in R, but tuning the classification probability threshold (a model hyperparameter) using grid search—in this case, exhaustive search over a discretization of the set [0, 1] of possible threshold-probability values to minimize the expected cost of a classification.

library(caret)

library(glmnet)

data(GermanCredit, package = "caret")

false_positive_cost <- 5

false_negative_cost <- 100

x <- GermanCredit

x$Class <- NULL

x <- as.matrix(x)

y <- as.numeric(GermanCredit$Class)

cv.glmnet_model <- cv.glmnet(

x = x,

y = y,

family = "binomial"

)

cv.glmnet_prediction_v <- predict(

cv.glmnet_model,

newx = x,

s = "lambda.1se",

type = "response"

)

result_df <- data.frame(

threshold = seq(from = 0.00, to = 1.0, by = 0.01),

expected_cost = rep(0, 101)

)

i <- 0

for(threshold in seq(from = 0.00, to = 1.0, by = 0.01)){

i <- i + 1

prediction_v <- 1 + as.numeric(cv.glmnet_prediction_v >= threshold)

match_count <- sum(prediction_v == actual_v)

true_positive_count <- sum(

prediction_v * actual_v == 1

)

true_negative_count <- sum(

prediction_v * actual_v == 4

)

false_positive_count <- sum(prediction_v < actual_v)

false_negative_count <- sum(prediction_v > actual_v)

total_cost <-

false_positive_cost * false_positive_count +

false_negative_cost * false_negative_count

expected_cost <- total_cost / nrow(GermanCredit)

result_df$expected_cost[i] <- expected_cost

}

result_df[which(result_df$expected_cost == min(result_df$expected_cost)), ]

# threshold expected_cost

# 91 0.9 3.025

> result_df[which(result_df$threshold == 0.71), ]

threshold expected_cost

72 0.71 7.25

Figure 4: Probability-Threshold Grid Search

The grid search determines that we minimize expected cost by setting the decision threshold at 0.90. At this threshold, the expected cost per class assignment is $3.025. In contrast, if we were to use the value chosen by the sensitivity + specificity threshold of 0.71, the expected cost would be $7.25, more than double the minimum.

Conclusion

You’ve now seen embedded and wrapper approaches to cost-sensitive classification for an imbalanced binary-classification problem. Both methods produced comparable results, achieving a substantial cost reduction by fitting model parameters (or a hyperparameter) to minimize directly the expected cost of a class assignment, rather than minimizing a purely formal fitness function. These examples illustrate the fundamental design pattern of data science, namely: every advanced-analytics problem is an empirical optimization problem.

From now on, when you approach a new modeling problem, you should always start by asking yourself, what empirical optimization problem do I face? If you can, build a model that explicitly optimizes the target empirical outcome, rather than merely optimizing a model’s formal goodness of fit. That way you’ll deliver as much business value as you can, while making your model directly relevant to stakeholder concerns.

Next time we'll look at alternative patterns for the same problem, when we lack estimates for the various (mis)classification costs.

For More Information

The question of how to select an optimal cutoff threshold in binary classification has received a great deal of attention in the research literature. See for example Monica Lopez-Raton et/al, “OptimalCutpoints: An R Package for Selecting Optimal Cutpoints in Diagno....

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.