Alteryx Architectures - Introduction

Alteryx Architectures - Starter Architectures

Alteryx Architectures - SAML SSO Authentication

Alteryx Architectures - Workload Management

Alteryx Architectures - Resiliency and High Availability

Alteryx Architectures - Alteryx Server Demo Environment (you are here)

Welcome to the next installment in the Alteryx Architectures blog series. In this edition, we’ll look at the architecture of the Alteryx Server Demo environment and discuss some of the key reasons we chose it. As mentioned in previous blogs, we recommend working through a sizing exercise and review with your sales representative to ensure your Alteryx Server environment is set up to fit your specific needs.

Introduction

When deploying Alteryx Server, there are many things to consider that can impact the architecture of the environment. These include, among others, 1) the types of workloads being processed and their importance to the business, 2) the availability requirements for the application, 3) the types of failures or disasters you want to protect against, 4) the major data sources that will be used in Alteryx workflows, and 5) whether to host the application on-premises, in the cloud, or in a hybrid deployment, which may also be dictated by the types and locations of the data sources or tools Alteryx will interface with.

All these factors can have an impact on the deployment model and overall architecture of an Alteryx Server environment. For example, a highly available environment would look different from a partially fault tolerant environment. For additional information on resilient and highly available Alteryx Server deployments, please refer to the Resiliency and High Availability article in the Alteryx Server Architecture blog series.

When we set out to architect an environment for our pre-sales teams to use for demonstrating Alteryx Server, we considered some of these same questions to guide us on the deployment model and architecture of the demo environment. To get started we will talk about what the environment is and what it is used for, look at the architecture of the environment, and then dive into some of the reasons we chose this architecture.

What is the Alteryx Server Demo Environment?

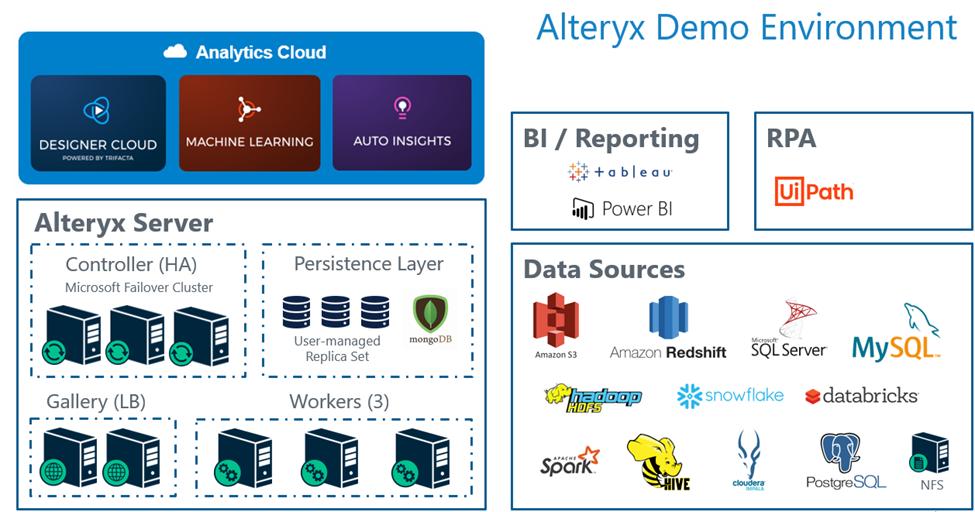

The demo environment is used by our sales teams to demonstrate Alteryx features and functionality to current and prospective customers. The environment consists of various data sources, visualization tools, and Alteryx products which enable our teams to give live demonstrations of Alteryx in action. This includes the ability to demonstrate Designer, Server, Designer Cloud, Machine Learning, Auto Insights, Connect, and Promote, as well as several supported data sources and visualization tools. The image above is a representation of what’s available in the environment. Not all data sources and visualization tools are available for use in all the Alteryx products.

This article will focus on the Alteryx Server environment which we architected to handle several hundred Gallery users and to process as many as 300 jobs (workflow executions) per hour with an average execution time of 60 seconds.

Alteryx Server – Demo Environment Architecture

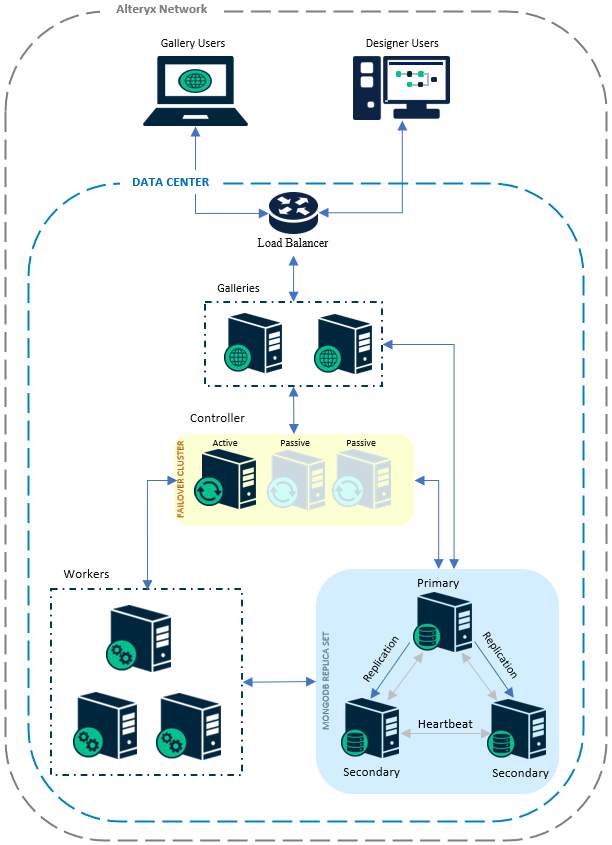

As shown in the architecture diagram above, we went with a multi-node deployment running two load-balanced Alteryx Gallery nodes, three Controller nodes in a high-availability configuration, three worker nodes, and a three-node MongoDB replica set. All the nodes were deployed in a single data center, with the nodes for each component spread across multiple hosts in a virtualized environment. There are a few key things to call out with this architecture:

For the Gallery component, the load balancer distributes incoming requests between the two nodes. If one of the Gallery nodes fails, all traffic is automatically directed to the remaining active Gallery node.

For the Controller, we deployed three nodes to maintain availability of the application. What’s unique about the Controller is that only a single instance can be actively running in an Alteryx Server environment at any time. To automate recovery when the active Controller fails, we use Microsoft Failover Clustering to manage the failover process. You can read more about this configuration in the help documentation on High-Availability Best Practices.
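
To make the Gallery failover behavior described above concrete, here is a minimal sketch of a load balancer that skips an unhealthy node. It is only a toy model: the article does not say which balancing algorithm or product is used, so round-robin, the class names, and the node names `gallery-1` and `gallery-2` are all assumptions made for illustration.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class GalleryNode:
    name: str             # hypothetical node name
    healthy: bool = True  # would normally be set by a health check


class RoundRobinBalancer:
    """Toy round-robin balancer that skips nodes marked unhealthy."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self._ring = cycle(self.nodes)

    def route(self):
        # Try each node at most once per request.
        for _ in range(len(self.nodes)):
            node = next(self._ring)
            if node.healthy:
                return node.name
        raise RuntimeError("no healthy Gallery node available")


gallery_1 = GalleryNode("gallery-1")
gallery_2 = GalleryNode("gallery-2")
balancer = RoundRobinBalancer([gallery_1, gallery_2])

before_failure = [balancer.route() for _ in range(4)]
gallery_1.healthy = False  # simulate the failure of one Gallery node
after_failure = [balancer.route() for _ in range(4)]

print("before failure:", before_failure)
print("after failure: ", after_failure)
```

Before the simulated failure, requests alternate between the two nodes; afterwards every request lands on the remaining healthy node, which is the behavior the load balancer provides for the Gallery tier.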

Strategy

Now that we’ve seen what the environment looks like, let’s dive into a few of the key considerations that guided us on the architecture.

The first major consideration was the availability of the environment. While we wanted the environment to always be available for product demonstrations, we knew we would not be processing mission-critical workloads required to keep the business running. Based on this, we did not see a need to architect a highly available environment that spans multiple data centers. For environments running mission-critical jobs, we would recommend a highly available environment that spans multiple data centers that are physically isolated from each other.

We did, however, want to protect against general failures such as a rack, host, or power failure. To protect against these types of failures, each of the Alteryx Server components was deployed across a minimum of two hosts within our virtual environment. This makes the environment highly available within the data center, which satisfied our needs.
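
One way to sanity-check this kind of placement rule is to model which host each node runs on and confirm that no single host failure removes every node of a component. The sketch below does that. The node counts follow the architecture described earlier, but the host names, node names, and the exact node-to-host mapping are hypothetical and are not taken from the actual demo environment.

```python
from collections import defaultdict

# Hypothetical placement: node name -> (component, virtualization host).
placement = {
    "gallery-1": ("Gallery", "host-a"),
    "gallery-2": ("Gallery", "host-b"),
    "controller-1": ("Controller", "host-a"),
    "controller-2": ("Controller", "host-b"),
    "controller-3": ("Controller", "host-c"),
    "worker-1": ("Worker", "host-a"),
    "worker-2": ("Worker", "host-b"),
    "worker-3": ("Worker", "host-c"),
    "mongo-1": ("MongoDB", "host-a"),
    "mongo-2": ("MongoDB", "host-b"),
    "mongo-3": ("MongoDB", "host-c"),
}


def hosts_by_component(placement):
    """Map each component to the set of hosts its nodes run on."""
    hosts = defaultdict(set)
    for component, host in placement.values():
        hosts[component].add(host)
    return dict(hosts)


def components_lost_if_host_fails(placement, failed_host):
    """Return the components left with no running node after one host fails."""
    survivors = defaultdict(int)
    for component, host in placement.values():
        survivors[component] += int(host != failed_host)
    return sorted(c for c, count in survivors.items() if count == 0)


spread = hosts_by_component(placement)
all_hosts = sorted({host for _, host in placement.values()})

for component, hosts in spread.items():
    print(f"{component}: {len(hosts)} hosts")
for host in all_hosts:
    print(f"{host} fails -> components lost: {components_lost_if_host_fails(placement, host)}")
```

With this mapping every component spans at least two hosts, so losing any one host leaves at least one node of each component running. The check says nothing about capacity after a failure, only about whether a component disappears entirely.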

A second major consideration was the location of the systems we would be connecting to for demonstrations. Because most of the data sources and tools we would interface with are hosted on-premises, we did not need a hybrid deployment with nodes both on-premises and in the cloud, and we decided to deploy the entire environment on-premises. Having all the nodes on-premises maximizes performance and minimizes latency when reading from and writing to these databases and tools.

While we would also be connecting to some cloud data sources, these systems all support In-DB processing. By using In-DB processing, latency would not be a significant issue when connecting to these cloud-based systems as most processing would be offloaded to the cloud database.

The third major consideration for us was the processing capacity of the environment. As the environment would be used for product demonstrations we did not want users to experience delays when a job execution was initiated. We wanted to size the environment to handle up to 300 hourly workflow executions with an average execution time of approximately 60 seconds.

Using these parameters to size the environment and to minimize the potential that a job would queue, we deployed three 8-core worker nodes each configured to run 4 jobs simultaneously. This allows the environment to process a total of 12 jobs simultaneously with an estimated probability that less than 2% of incoming jobs would queue for an estimated average of less than 1 second. These estimates were generated using queueing theory to appropriately size the environment. You can refer to Part 1 and Part 2 of the Tackling Queued Jobs With Queueing Theory series for additional information.
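
As a rough illustration of the kind of calculation involved, the sketch below applies the standard Erlang C formula for an M/M/c queue to the numbers above: 300 jobs per hour, a 60-second average execution time, and 12 simultaneous job slots. This is an assumption about the model, not a reproduction of the original analysis: M/M/c assumes Poisson arrivals and exponentially distributed execution times, and the function names are made up for this example.

```python
from math import factorial


def erlang_c(arrival_rate, service_rate, servers):
    """Probability that an arriving job has to queue in an M/M/c system."""
    offered_load = arrival_rate / service_rate  # average number of jobs in service
    utilization = offered_load / servers
    if utilization >= 1:
        return 1.0  # demand meets or exceeds capacity, so every job eventually queues
    waiting_term = offered_load**servers / factorial(servers) / (1 - utilization)
    busy_terms = sum(offered_load**k / factorial(k) for k in range(servers))
    return waiting_term / (busy_terms + waiting_term)


def mean_queue_wait(arrival_rate, service_rate, servers):
    """Average queue time over all arriving jobs, in the time unit of the rates."""
    spare_capacity = servers * service_rate - arrival_rate
    if spare_capacity <= 0:
        return float("inf")
    return erlang_c(arrival_rate, service_rate, servers) / spare_capacity


jobs_per_second = 300 / 3600     # 300 workflow executions per hour
completions_per_second = 1 / 60  # 60-second average execution time per slot
slots = 3 * 4                    # three worker nodes, four simultaneous jobs each

p_queue = erlang_c(jobs_per_second, completions_per_second, slots)
wait_seconds = mean_queue_wait(jobs_per_second, completions_per_second, slots)

print(f"P(job queues) = {p_queue:.2%}")
print(f"mean queue wait over all jobs = {wait_seconds:.3f} s")
```

Under these assumptions the offered load is 5 concurrent jobs on average against 12 slots, the queueing probability comes out at roughly 0.6%, and the mean wait averaged over all arriving jobs is a small fraction of a second. Both are inside the thresholds quoted above, although a different arrival or execution-time model would give different figures.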

While these were not all the considerations used when architecting the demo environment, these are three of the major factors that guided us on the final architecture. There are many things to consider when architecting an Alteryx Server environment and we encourage you to work with your sales representative when sizing and architecting your environment.

Choosing a Setup Type

When working with your sales representative to choose a deployment model that meets the needs of your business, these are a few of the questions you may want to consider.

- What data sources and/or BI tools will Alteryx read and write data to? Are these systems hosted on-premises, in the cloud, or are they distributed between on-premises and the cloud?

- What would the impact to the business be if the environment were to experience a failure and go offline? How long is it acceptable for the Alteryx Server environment to be offline in the event of a failure, or is it acceptable for the environment to be offline at all?

- Should your Alteryx Server environment be able to survive one or more failures? If so, which component(s) do you want to ensure will survive a failure? Do you want to protect against equipment failures and/or failures of an entire data center?

- How many jobs will be running during peak hours and, on average, approximately how long do the jobs take to run?

Summary

In this blog we’ve introduced you to the architecture of the Alteryx Server environment used by our pre-sales team to demonstrate Alteryx Server capabilities and functionality. There are many factors that influence the number of nodes and the architecture that might work best for you. If you need help deciding on an environment configuration that best suits your organization, reach out to your sales representative, who can connect you with the right resources to design an Alteryx Server environment for your business’s needs.