Support Vector Machine (SVM) is a popular supervised learning algorithm. It has been shown to perform well in a variety of settings and is often considered one of the best “out of the box” classifiers [1]. Although SVM is best known for classification, it can also be extended to regression (support vector regression, SVR) and outlier detection (one-class SVM). Some research suggests that SVR is relatively robust to outliers.

Because of its popularity and overall good performance, we introduced the SVM tool in the Alteryx 9.1 release almost a year ago. Recently I have been working on adding new features to this tool, and here's a quick summary of what's new:

- We have added support for regression problems, alongside the classification problems supported since the original release.

- We added a “Machine tunes parameters” option: if you are not sure which parameters to choose, Alteryx will run 10-fold cross-validation and choose the best parameters for you.
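To make the “Machine tunes parameters” option less of a black box, here is a minimal Python sketch of what a 10-fold cross-validated parameter search generally does. It is not Alteryx's implementation: `tune_by_cv`, `k_fold_indices`, and `knn_train` are hypothetical names, and a tiny k-nearest-neighbour model stands in for an SVM (the tuned parameter is the neighbour count rather than cost) so that the sketch runs with the Python standard library alone.

```python
import random
from statistics import mean


def k_fold_indices(n, k, seed=0):
    """Shuffle row indices 0..n-1 and deal them into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def tune_by_cv(candidates, rows, train, k=10, seed=0):
    """Hypothetical helper: return (best_param, scores), where scores maps each
    candidate to its mean held-out accuracy over k folds.

    rows  -- list of (features, label) pairs
    train -- callback train(param, train_rows) returning predict(features)
    """
    # The same folds are reused for every candidate, so scores are comparable.
    folds = k_fold_indices(len(rows), k, seed)
    scores = {}
    for param in candidates:
        fold_acc = []
        for held_out in folds:
            held = set(held_out)
            # Train on the other k-1 folds ...
            train_rows = [r for j, r in enumerate(rows) if j not in held]
            predict = train(param, train_rows)
            # ... and measure accuracy on the held-out fold.
            correct = sum(predict(rows[j][0]) == rows[j][1] for j in held_out)
            fold_acc.append(correct / len(held_out))
        scores[param] = mean(fold_acc)
    # Highest average accuracy wins; ties go to the earliest candidate.
    best = max(candidates, key=lambda p: scores[p])
    return best, scores


def knn_train(n_neighbors, train_rows):
    """Stand-in model (not an SVM): majority vote of the nearest 1-D neighbours."""
    def predict(x):
        nearest = sorted(train_rows, key=lambda r: abs(r[0] - x))[:n_neighbors]
        votes = [label for _, label in nearest]
        return max(set(votes), key=votes.count)
    return predict


# Toy data: one numeric feature, label 1 when the feature exceeds 5.
rows = [(i / 4, int(i / 4 > 5)) for i in range(40)]
best, scores = tune_by_cv([1, 3, 5, 7], rows, knn_train)
print("best:", best, "scores:", scores)
```

Swapping in a real SVM trainer for `knn_train` and a list of cost values for `[1, 3, 5, 7]` would leave the loop itself unchanged.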

Before going any further, let's explain what SVM can do for you. Suppose you are a medical doctor who needs to diagnose whether a new patient's breast tumor is malignant or benign. You have historical data on previous patients, such as each patient's age and ethnicity and the size, smoothness, and compactness of the tumor, along with the past diagnosis: malignant or benign. Since the diagnosis can take only a few possible values (in this case two, malignant or benign), we call this type of problem a "classification problem".

Now suppose you are a real estate agent who needs to decide the listing price for a house. You also have some housing data, such as the district the house is located in, the crime rate in that district, and the number of rooms and square footage of the house. Since the outcome here is a number instead of a class, we call this type of problem a "regression problem". The SVM tool can handle either case.

Choosing model parameters for SVM can be tricky. Although Alteryx makes it much easier to experiment by trial and error, it is still beneficial to understand some basics of the SVM parameters:

- Maximal margin – the oversimplified but fundamental motivation behind SVM.

- Kernels – what are they, and why do they make SVM powerful?

- Cost – how is it related to the margin width, and why should you care about it?

Maximal margin

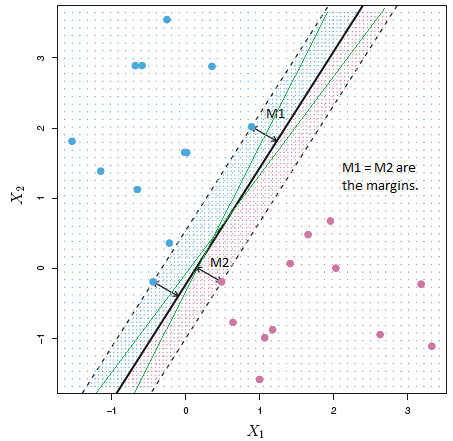

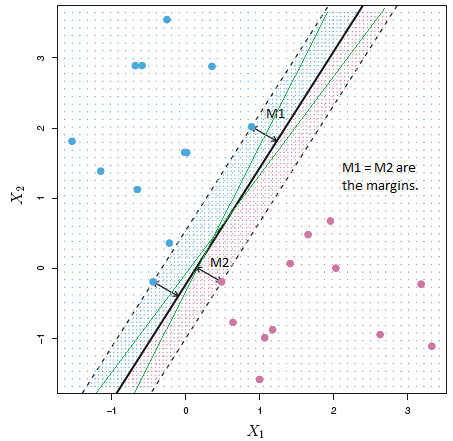

If two classes of data can be separated by a hyperplane (which, with two predictor fields, is a straight line), there can be infinitely many such hyperplanes (e.g. the green lines in the plots above). SVM chooses the hyperplane that maximizes the margin, that is, the hyperplane that is farthest from the closest points of either class. These closest points from both classes (the points on the dotted lines) are called the support vectors. The advantage is that the resulting model (the black straight line) does not favor either class and leaves as much room as possible on both sides of the boundary, so it is expected to generalize better to new data.
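The idea of “the hyperplane farthest from the closest points” can be checked numerically. The Python sketch below uses made-up toy points, computes the geometric margin of a few hand-picked candidate lines, and picks the largest. A real SVM solver optimizes over all possible hyperplanes, so comparing a fixed list of candidates is only an illustration; `margin` and `support_vectors` are hypothetical helper names.

```python
import math


def margin(w, b, points):
    """Geometric margin of the line w[0]*x1 + w[1]*x2 + b = 0: the smallest
    signed distance y * (w . x + b) / ||w|| over all labelled points.
    A negative value means some point lies on the wrong side."""
    norm = math.hypot(w[0], w[1])
    return min(y * (w[0] * x1 + w[1] * x2 + b) / norm for (x1, x2), y in points)


def support_vectors(w, b, points):
    """Points whose distance to the line equals the margin."""
    m = margin(w, b, points)
    norm = math.hypot(w[0], w[1])
    return [x for x, y in points
            if math.isclose(y * (w[0] * x[0] + w[1] * x[1] + b) / norm, m)]


# Toy data: ((x1, x2), label) with labels +1 / -1.
points = [((2, 0), 1), ((3, 1), 1), ((3, -1), 1),
          ((0, 0), -1), ((-1, 1), -1), ((-1, -1), -1)]

# Hand-picked separating lines, each given as (w, b).
candidates = {
    "x1 = 1": ((1.0, 0.0), -1.0),
    "x1 = 0.5": ((1.0, 0.0), -0.5),
    "tilted": ((1.0, 0.5), -1.0),
}

for name, (w, b) in candidates.items():
    print(name, round(margin(w, b, points), 3))

best = max(candidates, key=lambda n: margin(*candidates[n], points))
print("widest margin:", best, support_vectors(*candidates[best], points))
```

All three lines separate the classes, but the vertical line x1 = 1 has the widest margin (1.0), and its support vectors are the two points (2, 0) and (0, 0) that sit exactly on the margin.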

Kernels

One of the strengths of SVM is that it can classify data that are not linearly separable by mapping the data to a higher-dimensional space, where they may become linearly separable.

The kernel itself is not the mapping function; it computes the “inner product” of the mapped feature vectors in that implicit high-dimensional space, without ever computing the mapping explicitly. It is essentially a computational trick (often called the “kernel trick”). You can safely ignore the details of how kernels are derived and used in the computation and still use the SVM tool effectively.
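A small numeric check of the “inner product” description, assuming the degree-2 polynomial kernel K(x, z) = (x · z)² on 2-D points: its implicit mapping sends (x1, x2) to (x1², √2·x1·x2, x2²), and the kernel returns the same inner product without building those 3-D vectors. The names `phi` and `poly2_kernel` are illustrative only.

```python
import math


def dot(u, v):
    """Ordinary inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def phi(x):
    """Explicit degree-2 feature map: 2-D point -> 3-D feature vector."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)


def poly2_kernel(x, z):
    """Kernel trick: equals dot(phi(x), phi(z)) but is computed in 2-D."""
    return dot(x, z) ** 2


x, z = (1.0, 2.0), (3.0, -1.0)
print(dot(phi(x), phi(z)))   # map explicitly, then take the inner product
print(poly2_kernel(x, z))    # kernel only, no mapping
```

Both lines print the same value (up to floating-point rounding). For kernels such as the radial kernel, the implicit space is infinite-dimensional, so the explicit route is not even available; only the kernel computation is.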

Four kernels are provided in Alteryx SVM tool:

- Linear kernel: Usually works well only for linearly separable data.

- Polynomial kernel: Suited to data that can be separated by a polynomial boundary.

- Radial kernel: Also known as the radial basis function (RBF) kernel or Gaussian kernel; it can map data to an infinite-dimensional space and is good for non-linearly separable data. This is the default kernel.

- Sigmoid kernel: Originates from neural networks; usually does not perform better than the radial kernel.
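For reference, here are the four kernels written out as plain Python functions. The formulas follow the common LIBSVM-style parameterization with `gamma`, `coef0`, and `degree`; whether the Alteryx tool exposes exactly these parameter names, and the default values used below, are assumptions for illustration.

```python
import math


def dot(u, v):
    """Ordinary inner product u . v."""
    return sum(a * b for a, b in zip(u, v))


def linear(u, v):
    """Linear kernel: u . v"""
    return dot(u, v)


def polynomial(u, v, gamma=1.0, coef0=0.0, degree=3):
    """Polynomial kernel: (gamma * u . v + coef0) ** degree (assumed defaults)."""
    return (gamma * dot(u, v) + coef0) ** degree


def radial(u, v, gamma=1.0):
    """Radial (RBF / Gaussian) kernel: exp(-gamma * ||u - v||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-gamma * sq_dist)


def sigmoid(u, v, gamma=1.0, coef0=0.0):
    """Sigmoid kernel: tanh(gamma * u . v + coef0)."""
    return math.tanh(gamma * dot(u, v) + coef0)


u, v = (1.0, 2.0), (2.0, 0.0)
print(linear(u, v))
print(polynomial(u, v, gamma=1.0, coef0=1.0, degree=2))
print(radial(u, v, gamma=0.5), radial(u, u, gamma=0.5))
print(sigmoid(u, v, gamma=0.5))
```

The radial kernel equals 1 for identical points and decays towards 0 as points move apart, which is one way to see it as a similarity measure.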

Cost

The cost parameter is also called the soft-margin constant. It must be a number greater than 0, and it controls how much misclassification is tolerated in the training set. Generally, the larger the cost, the fewer training points are misclassified and the narrower the margin tends to be; a smaller cost allows a wider margin at the price of more training errors. As the cost approaches infinity, the model allows no misclassification in the training set and becomes a hard-margin SVM. Since a model that makes no errors on the training set is susceptible to overfitting, the cost should not be set too large. For best results, use the “Machine tunes parameters” option in the Alteryx SVM tool to specify a range of cost values you wish to test. The tool will run 10-fold cross-validation on the training set and pick the best value among the specified candidates.
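The trade-off controlled by cost can be made concrete with the standard soft-margin objective, ½‖w‖² + C·Σ max(0, 1 − yᵢ(w·xᵢ + b)). The first term rewards a wide margin (the margin is 1/‖w‖), and the second charges C for every point that falls inside the margin or on the wrong side. The Python sketch below only evaluates this objective for two hand-picked hyperplanes on made-up 1-D data with one outlier; it does not train an SVM, and `objective`, `wide`, and `narrow` are hypothetical names.

```python
def objective(w, b, points, C):
    """Soft-margin SVM primal objective for a fixed hyperplane (w, b):
    0.5 * ||w||^2 + C * sum of hinge losses max(0, 1 - y * (w . x + b))."""
    reg = 0.5 * sum(wi * wi for wi in w)
    hinge = sum(max(0.0, 1 - y * (sum(wi * xi for wi, xi in zip(w, x)) + b))
                for x, y in points)
    return reg + C * hinge


# Toy 1-D data: ((feature,), label). The last point is an outlier that sits
# close to the -1 class but is labelled +1.
points = [((0.0,), -1), ((1.0,), -1), ((4.0,), 1), ((5.0,), 1), ((1.5,), 1)]

wide = ((2 / 3,), -5 / 3)   # margin 1/|w| = 1.5, misclassifies the outlier
narrow = ((4.0,), -5.0)     # fits every point, but margin only 0.25

for C in (1.0, 10.0):
    print("C =", C,
          "wide:", round(objective(*wide, points, C), 3),
          "narrow:", round(objective(*narrow, points, C), 3))
```

With C = 1 the wide hyperplane has the lower objective even though it misclassifies the outlier; with C = 10 the narrow hyperplane that fits every training point wins. A larger cost buys fewer training errors at the expense of a narrower margin.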

For more discussion on how to set parameters, see [2].

I hope this post has helped you get started with the SVM tool. Feel free to give us your feedback.

[1] X. Wu, V. Kumar, J. R. Quinlan, J. Ghosh, Q. Yang, et al., Top 10 algorithms in data mining, London: Springer-Verlag, 2008.

[2] A. Ben-Hur and J. Weston, A User's Guide to Support Vector Machines, Humana Press, 2010.