There are two main types of model error when making an estimate: bias and variance. Understanding both types of error, and how they relate to one another, is fundamental to understanding model overfitting, underfitting, and complexity.

Various sources of error can lead to bias and variance in a model. Understanding these sources of error helps us improve the data fitting process, resulting in more accurate models.

Bias

Most models make assumptions about the functional relationships between variables, which allows the model to estimate a target variable. Not all models make the same assumptions, which is why a data scientist or analyst needs to determine the best possible assumptions for a given data set.

Bias is the difference between a model’s average estimated value and the “true” value for a variable. Bias can be thought of as error caused by incorrect assumptions in the learning algorithm. Bias can also be introduced through the training data, if the training data is not representative of the population it was drawn from. In the fields of science and engineering, the corresponding concept is accuracy: low bias means high accuracy.

Bias can cause an algorithm to incorrectly identify or miss important relationships, which in turn can cause underfitting. Underfitting describes when a model is unable to capture the "true" underlying pattern of the data set (i.e., the model fits the data poorly).

Variance

Variance can be described as the error caused by sensitivity to small fluctuations in the training data set, or how much an estimate for a given data point will change if a different training data set is used. High variance can cause an algorithm to base estimates on the random noise found in a training data set, as opposed to the true relationship between variables. In the fields of science and engineering, the corresponding concept is precision: low variance means high precision.

Some variance is expected when training a model with different subsets of data. However, the hope is that the machine learning algorithm will be able to distinguish between noise and the true relationship between variables. Small training data sets often lead to high variance models. A model with low variance will be relatively stable when the training data is altered (e.g., if you add or remove a point of training data).

High variance is associated with overfitting, where a model performs well on its training data set but generalizes poorly to unseen observations. Overfitting happens when a model captures and describes the random noise in the training data set in addition to the underlying pattern in the data.

Underfitting and Overfitting

Underfitting and overfitting are very important concepts in machine learning and statistics, particularly because the data used to train a model is often not the data the model will be applied to in production.

Underfitting will cause poor predictions because the fundamental relationship assumed by the model does not match how the data behaves. No matter how many observations you gather, the algorithm won’t be able to model the true shape of the data (e.g., a linear regression on an exponential data set). As mentioned in the previous section, underfitting is caused by high bias.

Underfitting

Solutions for underfitting might include using a more complex model or a model that better fits your data, providing better predictor variables (feature engineering), or reducing the constraints applied to the model (e.g., weaker regularization).
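The linear-regression-on-exponential-data case can be sketched in a few lines. This is a minimal illustration, not a recipe: it assumes noise-free data `y = exp(x)` on an evenly spaced grid over `[0, 3]`, and the helper names (`fit_line`, `mse`, `line_mse_on_exponential`) are made up for the example. It fits a straight line with 20 and with 2,000 points, then fits a line to `log(y)` instead, which is one way of choosing a model whose assumptions match the data.

```python
import math

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x, using the closed-form solution."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def mse(ys, preds):
    """Mean squared error between targets and predictions."""
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)

def line_mse_on_exponential(n):
    # Assumed data: noise-free y = exp(x) on n evenly spaced points in [0, 3].
    xs = [3 * i / (n - 1) for i in range(n)]
    ys = [math.exp(x) for x in xs]
    a, b = fit_line(xs, ys)
    return mse(ys, [a + b * x for x in xs])

# Straight line fit directly to the exponential data, small and large samples.
mse_small = line_mse_on_exponential(20)
mse_large = line_mse_on_exponential(2000)

# Model that matches the data: fit a line to log(y), then map back with exp.
xs = [3 * i / 19 for i in range(20)]
ys = [math.exp(x) for x in xs]
a, b = fit_line(xs, [math.log(y) for y in ys])
mse_log = mse(ys, [math.exp(a + b * x) for x in xs])

print(f"straight line, n=20:    MSE = {mse_small:.3f}")
print(f"straight line, n=2000:  MSE = {mse_large:.3f}")
print(f"line on log(y), n=20:   MSE = {mse_log:.3g}")
```

The straight line's error stays well above zero even with 100 times more data, because the error comes from the model's assumption rather than from a shortage of observations. The log-transformed fit drives the error to essentially zero on this idealized data.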

Overfitting will cause poor predictions because the model is overmatching the training data (in some extreme cases, memorizing the training data), and not making any inductive leaps about the true relationship. Overfitting is associated with high variance, and therefore the models produced in an overfitting scenario will differ wildly depending on what training data is used. Overfit models fit their training data very well (sometimes perfectly), but fail to generalize to new data sets.

Overfitting

Solutions for overfitting include simplifying your model (e.g., selecting a model with fewer parameters or reducing the number of attributes), gathering more training data, or reducing noise in the training data (e.g., finding and correcting errors, removing outliers). Hooray for data investigation and pre-processing!
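To make memorization concrete, here is a small sketch using a k-nearest-neighbors regressor, chosen only because it is easy to write from scratch. The data-generating process (`y = sin(2*pi*x)` plus Gaussian noise), the sample sizes, and the helper names are all assumptions for illustration. With `k=1` the model simply copies the nearest training target, so it reproduces the training set exactly. Averaging over `k=9` neighbors is one simple way of making the model less flexible.

```python
import math
import random

def knn_predict(train, x, k):
    """Average the targets of the k training points nearest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, data, k):
    """Mean squared error of a k-NN model fit on `train`, evaluated on `data`."""
    return sum((y - knn_predict(train, x, k)) ** 2 for x, y in data) / len(data)

def sample(rng, n, noise=0.3):
    # Assumed data-generating process: y = sin(2*pi*x) + Gaussian noise.
    data = []
    for _ in range(n):
        x = rng.random()
        data.append((x, math.sin(2 * math.pi * x) + rng.gauss(0, noise)))
    return data

rng = random.Random(0)
train = sample(rng, 200)
test = sample(rng, 2000)

# k=1 memorizes the training data; k=9 smooths over neighboring points.
train_mse_k1, test_mse_k1 = mse(train, train, 1), mse(train, test, 1)
train_mse_k9, test_mse_k9 = mse(train, train, 9), mse(train, test, 9)

print(f"k=1: train MSE = {train_mse_k1:.3f}, test MSE = {test_mse_k1:.3f}")
print(f"k=9: train MSE = {train_mse_k9:.3f}, test MSE = {test_mse_k9:.3f}")
```

The `k=1` model has zero training error because each training point is its own nearest neighbor, yet it does worse on the unseen test set than the smoother `k=9` model, which is the signature of overfitting.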

An ideal model will exist in the happy place between overfitting and underfitting, where the “true” relationship between the data is captured, but the random noise of the data set is not.

Happy place

Finding this happy place involves finding a balance between bias and variance.

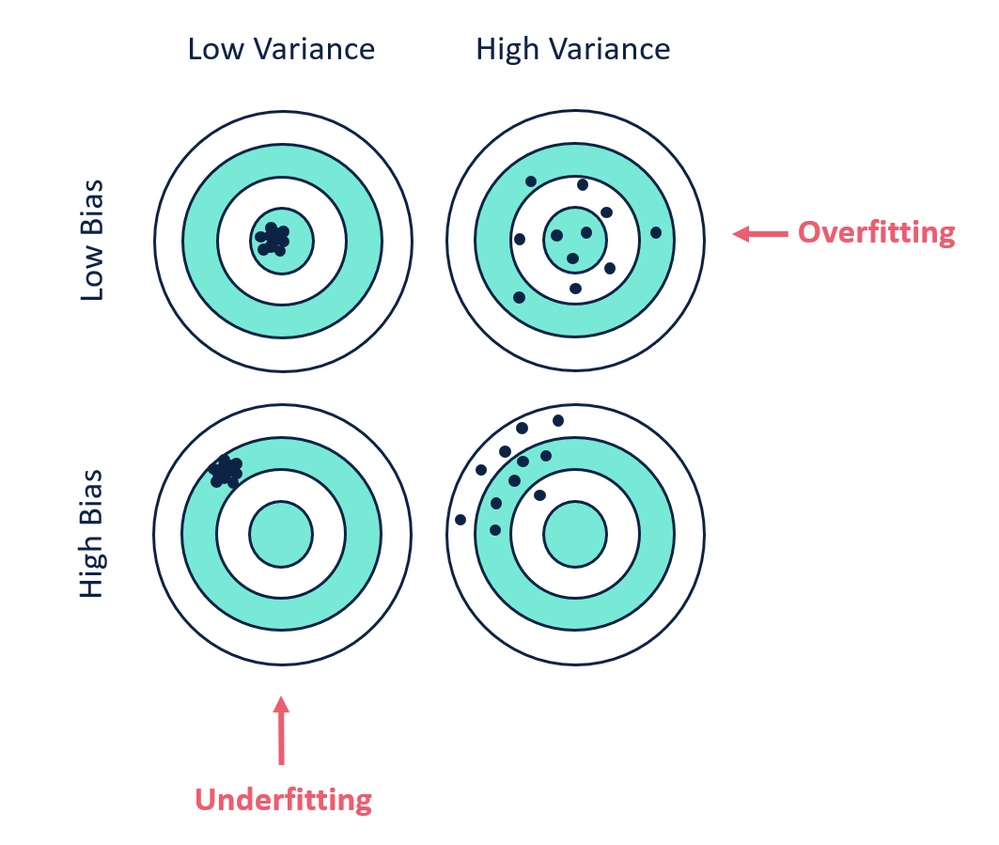

Bias and Variance: The Target Illustration

This figure illustrates bias and variance as shots at a target. Each point on the target represents a different iteration of a model, fit for the same problem with a different training data set.

Models with low variance tend to be less complex, with a simple underlying structure. They also tend to be more robust (stable) to different training data (i.e., consistent, but potentially inaccurate). Models that fall in this category generally include parametric algorithms, such as linear regression. Depending on the data, algorithms with low variance may not be complex or flexible enough to learn the true pattern of a data set, resulting in underfitting.

Models with low bias tend to be more complex, with a more flexible underlying structure. The higher level of flexibility can allow for more complex relationships between data, but can also cause overfitting because the model is free to memorize the training data instead of generalizing a pattern found in the data. Models with low bias also tend to be less stable between training data sets. Non-parametric models (e.g., decision trees) typically have low bias and high variance.
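The target picture can be reproduced numerically by refitting a model on many different training sets and looking at where its predictions land for one fixed input. The sketch below is illustrative only: the true function, the noise level, the query point `x0`, and the two toy models (a constant "mean" predictor as the rigid model, a 1-nearest-neighbor predictor as the flexible one) are assumptions rather than anything prescribed by the article.

```python
import math
import random

def true_f(x):
    # Assumed "true" relationship between x and y.
    return math.sin(2 * math.pi * x)

def sample(rng, n, noise=0.3):
    """Draw a fresh training set: y = true_f(x) + Gaussian noise."""
    xs = [rng.random() for _ in range(n)]
    return [(x, true_f(x) + rng.gauss(0, noise)) for x in xs]

def mean_model(train, x):
    # Rigid model: ignores x and always predicts the average training target.
    return sum(y for _, y in train) / len(train)

def one_nn_model(train, x):
    # Flexible model: copies the target of the single nearest training point.
    return min(train, key=lambda p: abs(p[0] - x))[1]

def bias_and_variance(model, x0, trials=500, n=30, seed=0):
    """Estimate squared bias and variance of model predictions at x0."""
    rng = random.Random(seed)
    preds = [model(sample(rng, n), x0) for _ in range(trials)]
    avg = sum(preds) / trials
    variance = sum((p - avg) ** 2 for p in preds) / trials
    return (avg - true_f(x0)) ** 2, variance

x0 = 0.25  # query point where the true value is 1.0
bias2_mean, var_mean = bias_and_variance(mean_model, x0)
bias2_nn, var_nn = bias_and_variance(one_nn_model, x0)

print(f"mean model: bias^2 = {bias2_mean:.3f}, variance = {var_mean:.3f}")
print(f"1-NN model: bias^2 = {bias2_nn:.3f}, variance = {var_nn:.3f}")
```

Each prediction plays the role of one shot at the target. The rigid model's shots cluster tightly but far from the bullseye (high bias, low variance), while the flexible model's shots are centered on the bullseye but scattered (low bias, high variance).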

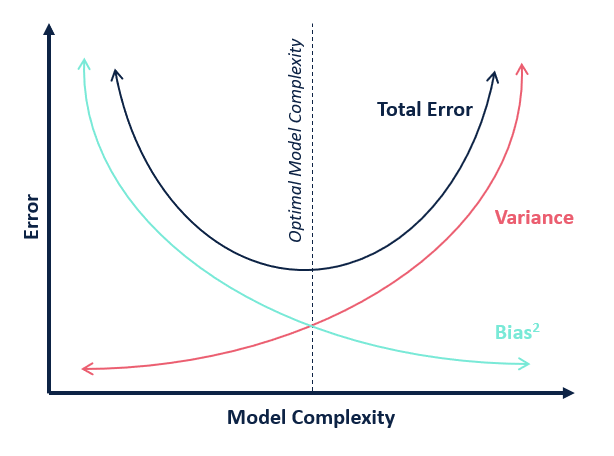

The Bias-Variance Tradeoff

The bias-variance tradeoff is a property of supervised machine learning models, where decreasing bias tends to increase variance, and decreasing variance tends to increase bias.

Often, the goal in relation to bias and variance is to find the balance between the two that minimizes overall (total) error.
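For squared-error loss, "overall error" has a standard decomposition. The notation here is an assumption introduced for illustration: the data follow $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\mathrm{Var}(\varepsilon) = \sigma^2$, $\hat{f}$ is the model fit on a random training set, and expectations are taken over training sets and noise.

```latex
\mathbb{E}\left[\big(y - \hat{f}(x)\big)^2\right]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\left[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

The noise term does not depend on the model, so minimizing total error comes down to trading the first two terms against each other.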

Unfortunately, there is no formula that gives this balance point directly, because the true relationship and noise level are unknown in practice. Instead, you will need to measure error empirically (preferably on an unseen validation data set) and adjust your model’s complexity until you find the iteration that minimizes overall error. Never forget that model building is an iterative process. You are looking for the most parsimonious model possible (the simplest model with the highest possible explanatory power). Also, remember that you have many model types to choose from, and there is no reason to prefer one over the other prior to knowing what your data looks like. Selecting a model that makes assumptions that match your data is key.
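The iterative search described above might look like the following sketch. It uses a from-scratch k-nearest-neighbors regressor as a stand-in model, where the number of neighbors `k` is the complexity knob (small `k` is flexible, large `k` is rigid). The synthetic data, the candidate values of `k`, and all helper names are assumptions for illustration.

```python
import math
import random

def knn_predict(train, x, k):
    """Average the targets of the k training points nearest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, data, k):
    """Mean squared error of a k-NN model fit on `train`, evaluated on `data`."""
    return sum((y - knn_predict(train, x, k)) ** 2 for x, y in data) / len(data)

def sample(rng, n, noise=0.3):
    # Assumed data-generating process: y = sin(2*pi*x) + Gaussian noise.
    data = []
    for _ in range(n):
        x = rng.random()
        data.append((x, math.sin(2 * math.pi * x) + rng.gauss(0, noise)))
    return data

rng = random.Random(1)
train = sample(rng, 200)
valid = sample(rng, 500)  # held-out data, never used for fitting

# Sweep from most flexible (k=1) to most rigid (k=200, a constant prediction).
candidate_ks = [1, 3, 5, 9, 15, 25, 51, 101, 200]
valid_mse = {k: mse(train, valid, k) for k in candidate_ks}
best_k = min(valid_mse, key=valid_mse.get)

for k in candidate_ks:
    marker = "  <- lowest validation error" if k == best_k else ""
    print(f"k={k:>3}: validation MSE = {valid_mse[k]:.3f}{marker}")
```

Validation error is high at both extremes and lowest somewhere in between. In a real project you would also keep a separate test set for the final estimate, since the validation set has been used to pick `k`.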

Additional Reading

Understanding the Bias-Variance Tradeoff from Scott Fortmann-Roe provides a comprehensive explanation of the bias-variance tradeoff and walks through an applied example on modeling voter registration.

A Gentle Introduction to the Bias-Variance Trade-Off in Machine Learning from Machine Learning Mastery is a nice overview of the concepts of bias and variance in the context of overfitting and underfitting.

WTF is the Bias-Variance Tradeoff? from Elite Data Science includes a snazzy infographic.