Recently, I provided the opening keynote for the Great Lakes Data & Analytics Summit where we adapted the experience to encourage both in-person and live virtual participation because there's no social distancing when it comes to analytics.

While my talk focused on the importance of becoming an insight-driven organization, the questions I received after sparked a number of conversations. Here, I take a deeper dive into that Q&A, covering everything from bad data to buzzwords and more, because together we solve.

Q: How do you handle bad data? Do you worry that you can draw incorrect conclusions from it?

A: I don’t necessarily believe there is such a thing as “bad” data. But it is true that data sources frequently have erroneous and/or missing data. I have met very few real-world data sets that were perfectly clean. That said, most data can still be used effectively, as it is good enough to provide value, insight, and a solution to a problem.

Let’s start with an example of how data can mislead people — or, more accurately, how people’s biases can cause them to use data in ways that might provide the wrong answer.

We’ve had moments throughout history in which biased historical data has allowed people to draw the wrong conclusions. Let’s take a famous story about Abraham Wald from World War II. The legend goes that to make planes safer and more likely to survive battle, a team of researchers evaluated every plane that came back from missions with bullet holes to assess where to place more armor.

This is a great data science problem. Adding more armor to the wrong places makes a plane heavier, slower, and more likely to be shot down; adding armor to the right places makes the plane more likely to survive battle. It is an optimization problem with meaningful consequences, exactly the kind of stuff that data scientists love to work on!

“Survivorship Bias.” The damaged portions of returning planes show locations where they can sustain damage and still return home; those hit in other places do not survive. The data in the table is hypothetical.

Normalizing the number of bullets by square footage, the team concluded that the best place to add armor would be where they saw the highest number of bullets per square foot, which in this case was the fuselage.

As the legend goes, when they reviewed this data with Wald, he responded that the armor doesn’t go where the bullet holes are; it goes where they aren’t (the engine).

Why did he come to this conclusion? He kept asking questions about the data. Where are all the missing holes, the ones that aren’t in the data set? Why weren’t the holes spaced evenly all over the airplane? Wald’s conclusion was that the planes with holes on the engines weren’t coming back, whereas planes with holes in the fuselage were making it back. Therefore, adding armor to the engine would be more important.

This method was used throughout World War II as well as the Korean and Vietnam Wars. It’s a great example of how the initial analysis would have been fatally incorrect had it not been examined more carefully.
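To make Wald’s reasoning concrete, here is a minimal simulation sketch. Everything in it is an assumption made up for illustration: the section names, the areas, the per-hit chance of losing the plane (`p_lethal`), and the number of hits per plane. Hits land in proportion to area, so every section is hit equally often per square foot; only the returning planes show a skewed pattern.

```python
import random

# Hypothetical section areas (square feet) and the assumed chance that a
# single hit to that section brings the plane down. None of these numbers
# come from historical records.
SECTIONS = {
    "engine":   {"area": 35,  "p_lethal": 0.50},
    "fuselage": {"area": 90,  "p_lethal": 0.05},
    "wings":    {"area": 150, "p_lethal": 0.15},
}


def simulate(n_planes=10000, hits_per_plane=5, seed=0):
    """Return (holes per sq ft on all planes, holes per sq ft on survivors)."""
    rng = random.Random(seed)
    names = list(SECTIONS)
    areas = [SECTIONS[name]["area"] for name in names]
    all_holes = dict.fromkeys(names, 0)
    survivor_holes = dict.fromkeys(names, 0)

    for _ in range(n_planes):
        # Hits are spread in proportion to area: no section is "targeted".
        hits = rng.choices(names, weights=areas, k=hits_per_plane)
        # The plane returns only if every hit turns out to be non-lethal.
        survived = all(rng.random() >= SECTIONS[h]["p_lethal"] for h in hits)
        for h in hits:
            all_holes[h] += 1
            if survived:
                survivor_holes[h] += 1

    def per_square_foot(holes):
        return {name: holes[name] / SECTIONS[name]["area"] for name in names}

    return per_square_foot(all_holes), per_square_foot(survivor_holes)


all_density, survivor_density = simulate()
naive_choice = max(survivor_density, key=survivor_density.get)  # most holes seen
wald_choice = min(survivor_density, key=survivor_density.get)   # fewest holes seen
print("Holes per sq ft, all planes:     ", all_density)
print("Holes per sq ft, returning only: ", survivor_density)
print("Naive pick:", naive_choice, "| Wald's pick:", wald_choice)
```

Under these assumptions the full fleet is hit almost uniformly, yet the returning planes show the fewest holes per square foot exactly where hits are most dangerous. The analyst only ever sees the second set of numbers, which is why the missing holes matter.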

There are many other types of cognitive biases beyond this one (which is called “survivorship bias”), and each can affect an outcome. It’s important to consider these as you’re looking at data to ensure you get the best possible result. And keep in mind, the data wasn’t “bad”; it just wasn’t used in the right way.

Image source: https://www.businessinsider.com/cognitive-biases-that-affect-decisions-2015-8

We’ve got to be careful to avoid biases and being blindsided by our assumptions and the conclusions we make with data, and never forget to include clever humans (like Wald) as part of the process. Avoiding cognitive bias starts with being aware it exists, and then actively combatting it. The internet is full of tips, including checking your ego, not making decisions under time pressure, and avoiding multitasking.

But one of the most powerful tools in your arsenal is computer augmentation, aka using data science tools that free people to think deeper about the analysis and create better answers.

Q: Are the machines going to take over? Do you see a day coming when data science will be performed without much human intervention or is that pie in the sky?

A: The narrative that computers will “take over” is certainly one that plays well in the movie theaters and has been in the news for decades. However, most people who are experts in this space do not share the same worries. While there are many repetitive tasks that machines are quite good at, I’m not concerned that computers will replace the data scientists. To give you some perspective, let’s start with areas where computers excel and how this relates to people.

Let’s say I hand you all the invoices that came in today and need them added together to understand our total amount due. A computer is great at the mundane task of adding these numbers together. This frees me up to do higher-order functions, like talk to customers and think about what new products I might want to design and sell.

There are many tasks humans do that would be hard to envision a computer fully taking over autonomously:

- Creating a strategy for a business

- Designing a new product

- Assessing risk when a new set of circumstances arises

Think about some of the most advanced computerized tasks today, like teaching a computer to drive a vehicle. Again, I would argue that driving a car is a mundane task for humans, even boring to most, and if we were asked to drive a car every day for eight hours, we would struggle to stay interested. Yet teaching a computer to do this task is one of the most challenging efforts underway in computer and data science today.

Computers can help augment our skills and amplify what we do, but at this point, I don’t see Artificial Intelligence (AI) taking over most of these types of tasks. Data science, it turns out, is a very creative and complex field, and in this profession specifically, I see many examples of amplification with AI techniques but very few cases in which the process is completely taken over by the machines.

The most significant challenge for most data scientists is in the first step of the process: problem formulation. In this step, we are trying to understand the business problem, the objective, the unintended consequences that could arise, and a methodological approach that might be used. This is certainly not a domain in which most computers can help. That said, data cleansing, finding correlations between values, and monitoring a model that is in production are all areas in which computers can help augment the process.

There have been many others who have weighed in on this debate, with Elon Musk and Mark Zuckerberg famously taking different views on the discussion. We’ll see where this one ends up, but for now, I’m squarely with Mark. What are your thoughts?

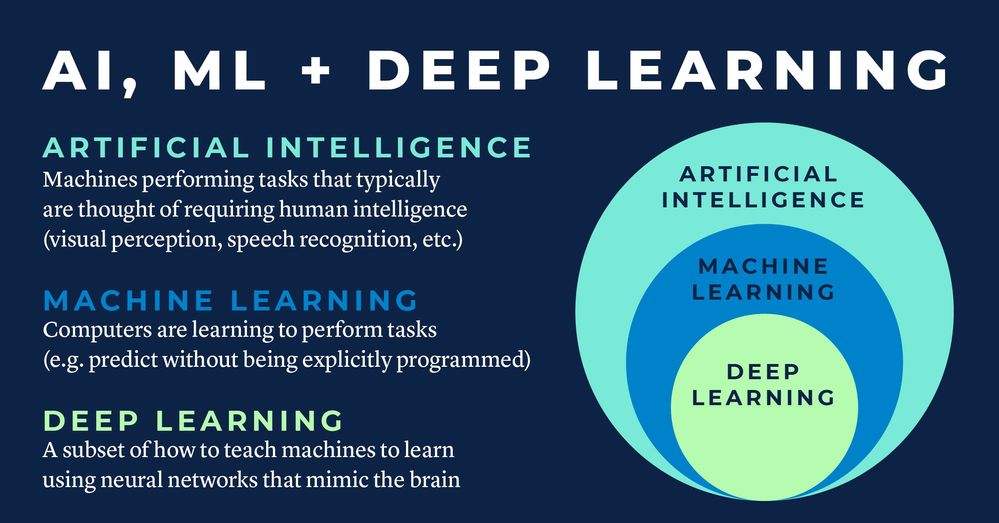

Q: We hear a lot of buzzwords like Artificial Intelligence (AI) and Machine Learning (ML) being thrown around by executives who want to jump into AI/ML and skip the analytics maturity curve. What is your definition of AI and ML?

A: It worries me when people ask this question. The reality is that the focus of our work should be on achieving amazing outcomes. Does it really matter whether you solved the problem using AI or ML? Does it matter how big or small the data is? I would suggest that if you solved the problem well with very simple math, we should all celebrate that more than a poor solution built with AI and ML. It’s all about the outcomes. What are you asking the data to solve? What is the challenge? We need to refocus our thought process on the outcomes we’re looking to achieve.

As far as definitions go, you could ask different people and likely get varied responses as AI and ML continue to evolve. For AI, it’s about computers acting like humans. As the name implies, the Artificial (the machine) is acting Intelligent. For example, many would say that tasks like natural language processing, understanding the meaning of spoken words, would be a skill reserved for humans. When machines do this task, it is considered artificial intelligence. The problem is that over time, what we believe is reserved for humans has changed, and so have opinions about what counts as AI.

ML is more of a sub-category in which we have machines that learn from data and predict an outcome — like a regression analysis in which you have the data, you perform some math, and can predict a future value — or perform a what-if scenario. There is a subset of the overall ML field called Deep Learning in which we use techniques that mimic the human brain, with neural networks, to predict an outcome.
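The regression case described above (have the data, perform some math, predict a future value or run a what-if) can be sketched in a few lines. This is a minimal illustration using ordinary least squares on one input; the `semesters` and `applications` values are invented for the example, and `fit_line` and `predict` are hypothetical helper names.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x, slope, intercept):
    """Apply the fitted line to a new input value."""
    return intercept + slope * x


# Made-up history: applications received in each of six past semesters.
semesters = [1, 2, 3, 4, 5, 6]
applications = [1020, 1075, 1110, 1180, 1215, 1290]

slope, intercept = fit_line(semesters, applications)

# "Predict a future value": what might semester 7 look like?
print("Forecast for semester 7:", round(predict(7, slope, intercept)))

# "What-if scenario": evaluate the same line at any input you care about.
print("What-if for semester 10:", round(predict(10, slope, intercept)))
```

The math here is deliberately simple, and whether it is good enough depends entirely on the problem being solved.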

But when people ask this question, I am more concerned with why they are asking it — as it shouldn’t matter if I performed AI or ML. Instead, the question should be, can you solve my problem? Do you really care if I did it with addition or multiplication? Would that really make a difference in the value of what we did?

Q: One of the challenges we struggle with is poor data. Are you seeing data management strategies updated to improve integrity?

A: There will always be messy data, as there are very few examples of perfect data sets in large-scale production systems today. But I believe that many messy data sets can still be highly useful. And it’s this second part that is where we should be focusing nearly all our time and effort.

Let me first rewind to a problem I’ve seen many teams experience in the early days of the analytic journey. Teams became very focused on their data; they thought of it as the “new oil” and believed that if they could just clean it all up and “refine” it, then like magic, value would come out of the process. Unfortunately, there are a few issues with this. The first is that people tend not to want to expend energy doing a task unless they know it will deliver value for them. Asking people to go clean data when they aren’t connecting it with an outcome is just not a sustainable endeavor. Instead, if we intend to solve a problem and we find that cleaning the data can help, then we may be more likely to work on cleaning the data. A second, related issue is that simply cleaning data doesn’t provide any ROI by itself, so all of this effort doesn’t directly yield an outcome.

Here’s what I would not recommend:

- Don’t form a committee to determine how you’ll clean up your data. You will be at it forever, and cleaning data is not what delivers ROI.

- Don’t focus on how much data you can put into your data lake. The size of the data is not what matters; it’s the size of the outcome (the ROI). If you can solve a huge problem with a small amount of data, that’s great.

- Don’t form teams to force other teams to collectively agree on the same definition for key terms in your business. There is a reason why legal, accounting, and marketing have different definitions of who a customer is. Gaining alignment is not going to save your business any money, and you will likely burn countless hours in futility. [Example shown below]

So what should we do?

- Start solving real problems. Don’t wait for “perfect data”; use what you have and, if it’s good enough, roll it out. Drive ROI and positive outcomes as the key imperative. The first way data gets cleaned is almost always by making it visible and useful. As people start to see available data and use it, they’ll understand the value of providing better data into the system.

- If people can’t see or use the data, there’s no good reason for them to invest effort into cleaning it up. Make sure the right people can access the data and see it. Having data without being able to give it to the people that can use it is a sure way to eliminate ROI.

Clean data clearly provides value in an enterprise, but prioritizing where to focus efforts and ensuring there is a natural feedback loop to those who create the data is key to successfully improving your data pipeline. By focusing on business solutions while making the data issues visible, there are natural incentives that will ultimately drive better data quality.

Q: How can we accelerate data democratization in higher education?

A: What a great place for analytics and democratization: the ultimate knowledge industry, higher education. There are so many places where analytics plays a role. I’ll provide a top 10 list here, but I could easily have generated a top 100 list and would be happy to do so if anyone is interested! I also see analytics, and specifically Alteryx, being used in K-12, with many of the same drivers.

- Marketing: In any business, understanding your customers, their patterns, and how your competition is affecting your pipeline is critical to optimizing your results. We see use cases provide incredible insight into where to recruit new students, how to increase the number of applicants, and how to improve the strength of your candidate pool. Once you’ve identified target geographies, A/B testing of marketing materials and cost-effectiveness analysis of marketing programs can drive significant efficiencies throughout your operations.

- Hiring: Analytic methods can be applied to the HR process to automatically strip personally identifiable information (PII), as well as gender, race, and other information that could bias the hiring process. Whether leveraging models to perform initial screenings of candidates in this non-biased way or ensuring pay is set blind to these characteristics, analytics can improve the overall hiring processes. Have you analyzed your data to ensure you’re eliminating any gender pay gaps? Have you put in place processes to ensure you remain compliant?

- Admissions: Analytics also can be used to help select students that will most likely have the best outcomes at your institution. Do you use data-driven techniques in your process? Have you analyzed your most successful and diverse graduates and created lookalike models that find new applicants?

- Fundraising: Alumni contributions are a significant source of revenue for many institutions. By combining the numerous data sources within a university, it’s possible to significantly increase the level of participation in these programs. Have you combined your graduation data with the athletic department data, theater program data, and the myriad of other data sources at your institution? Do you know how combining these additional data sources could provide a better picture of not only who to market to, but how to engage in the right conversations that will result in a successful campaign?

- Payroll, Tax, Accounting: Institutions have the same issues every business has when it comes to payroll, tax, and accounting. These are areas in which analytics are quickly becoming a staple, and Alteryx is the proven Platform in all these areas. With 100% of the top strategic consulting firms using Alteryx and over 65% of the top finance and insurance companies leveraging the Platform, it’s an obvious win for these departments with savings in time, more accurate analysis, better insights and fewer mistakes.

- Scheduling: Whether it’s adjusting staffing of a cafeteria, solving the classroom scheduling challenges that come each semester, or analyzing the best dates to hold events, analytics can help.

- Brand Monitoring and Product Development: Institutions deliver an important service to their constituents, and knowing how they’re performing and where there are gaps is key to building and maintaining a strong brand. The use of sentiment analysis and monitoring both general public opinion as well as student feedback can inform what courses and programs to offer and identify key issues that are critical to student success. What changes are students wanting to see? What will drive a more significant improvement: adding a new program of study or a new food offering at your dining hall? Are you performing the analytics that can help quantify these answers?

- Research: Whether working to solve medical challenges or studying themes and patterns in social media, Alteryx provides a broad Platform that is usable by professionals in every discipline.

- Sport Analytics: How do the top sports teams get a competitive advantage? Analytics. Whether performing on-field analysis to determine team strategies or performing individual assessments to help players identify key areas to develop, analytics has become a critical element for sports teams at nearly every level.

- In the Classroom: Alteryx is committed to helping schools educate our future data scientists. Through our Alteryx for Good program, schools can obtain free licenses of Alteryx for teaching purposes to help students in every discipline learn to use data science quickly and easily.
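As one small illustration of the Hiring item above, stripping identifying fields before a screening step can be sketched as follows. The field names in `SENSITIVE_FIELDS`, the sample records, and the `blind_record` helper are all hypothetical; a real applicant-tracking export will look different.

```python
# Hypothetical field names for PII and other attributes that could bias a
# reviewer. Adjust to match whatever your own data actually contains.
SENSITIVE_FIELDS = frozenset(
    {"name", "email", "phone", "address", "gender", "race", "date_of_birth"}
)


def blind_record(record, sensitive_fields=SENSITIVE_FIELDS):
    """Return a copy of the record with the sensitive fields removed."""
    return {key: value for key, value in record.items()
            if key not in sensitive_fields}


# Invented sample records for illustration only.
candidates = [
    {"candidate_id": 101, "name": "Candidate A", "gender": "F",
     "email": "a@example.com", "years_experience": 7, "skills_score": 88},
    {"candidate_id": 102, "name": "Candidate B", "gender": "M",
     "email": "b@example.com", "years_experience": 4, "skills_score": 91},
]

blinded = [blind_record(candidate) for candidate in candidates]
for row in blinded:
    print(row)
```

Keeping a neutral `candidate_id` lets the blinded records be matched back to the originals after screening. Dropping columns is only a first step, since remaining fields can still act as proxies for the ones removed.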

If you are interested in additional use cases, you can see more detail here.

Q: If we democratize data, how do we prevent negative outcomes from happening?

A: This is one of the most common questions I hear from IT organizations. How are we going to prevent people from creating “bad analytics”? How are we going to govern and control this? Or more bluntly, I’ve heard some say, “We can’t allow people outside IT or the data science team to perform analytics; they might make a mistake.” And while I totally understand where the question is coming from, I think frequently we miss the reality of what is happening.

Are we afraid of people all over our business using scientific methods to solve problems? Are we really worried about giving businesses better technology to perform math?

Governance is important, and good governance is like good government, an enabler to achieve your goals. Implementing successful governance programs is all about focusing on how to enable users to perform analytics using best practices and helping users achieve better outcomes.

The great news is that yes, new technologies are providing even better ways to govern and help people avoid many of the pitfalls they ran into before. That said, much of this takes work and is not as simple as buying a technology solution. Your organization will need to put processes in place to make it all happen and invest human capital to make it work seamlessly. But with a few key actions and modern analytics, we have seen many companies put great governance processes in place and have incredible people flourish across their businesses delivering amazing outcomes and ROI.

Here are a few of the best practices you’ll want to implement as part of a governance approach:

- Form a Center of Excellence (COE): Whether you have a dedicated Data Science team under the direction of a CDAO or a federated model of experts, it is important that there is a team driving the analytic process within your company. This team needs to help democratize analytics throughout the company and work with each line of business to drive success. It will be difficult to drive governance without some sort of central team to own the process.

- Design Review/Coffee Hours: Have a forum for people to review their analytic work. Whether in more formal Design Review style forums or with Coffee Hours during which people can casually talk to experts and peers, analytics is a team sport. Providing a way to review your work with others is a sure way to increase the quality of the results. Scheduling a review for analytics that are published to a server creates an opportunity for enablement and improves quality.

- Celebrate (and track) Success (ROI): It’s important to understand what’s working and the successes that are occurring in your organization. While you shouldn’t attempt to measure every analytic endeavor’s ROI (just as you don’t try to measure every HR project or Finance Analysis in your business today), you should look for successes that occur with analytics. Sharing and showcasing these examples will provide education to others on what they can do, will encourage an acceleration of similar results, and will allow management to connect their investment in this space to the outcomes.

Q: So how are new technologies like Alteryx changing the game for those of us that are using spreadsheets today?

A: First, you can deploy solutions to a server as well as a desktop, rather than to a desktop only. This allows visibility and an ability to support solutions that is very different from a desktop-only solution like most spreadsheets.

Second, solutions like Alteryx provide self-documentation of a process — so the need to create desktop procedures is reduced, as it’s automatic. This makes processes more sustainable and understandable, allowing very direct reviews of the “code.”

Third, with Alteryx, each process step is made transparent where inputs and outputs are seen by default. This allows full traceability.

Fourth, Alteryx creates repeatable processes that can be run on a schedule without human intervention. This automation reduces the risk of a copy-and-paste blunder. (Search for “largest spreadsheet blunders” and you will see quite a few multi-million and even multi-billion dollar problems.)

Fifth, using solutions like Alteryx Connect, you can monitor lineage and provide guidance to users on which workflows and data sets are of high quality, which are certified, and who to go to for help.

All of these are great examples of how Alteryx helps companies improve their control and quality of analytic outcomes. No technology can eliminate human error. The real question in my mind is whether you’re making it better than it was before.

But again, if we go back to the original question and my original angst, a lot of this comes back to the question of who do we trust to use math? Is it really any better to have Accounting ask IT to build a solution? And when IT builds it, is it right? Who checks it to ensure it’s working as expected — and signs off? Wait, it’s Accounting? If that’s the case, why do we think IT or a data science group is going to know better than Accounting if an accounting process, forecast, or model is accurate? While having IT and data science professionals available to provide support makes a ton of sense, telling Accounting that we aren’t going to allow you to use a more modern solution because we’re concerned you will make a mistake seems completely wrong.

Knowledge industries are comprised of incredibly knowledgeable workers. Data science thrives where we provide these domain experts with the tools and education needed to succeed. Is your organization providing your most valuable assets, your people, with the best tools available and the training and support to leverage the modern capabilities? Do you have a Center of Enablement made up of data scientists that can help take them on this journey? If you do, you are likely well on your way towards digital transformation.