Hello clever people,

I've built a macro that goes to a 3rd-party website and fetches a considerable amount of data (we're talking millions of rows, dozens of columns). It is very stable and has delivered great value to a lot of people. Users enter their API key, the model ID and the table ID, then click the Run button.

It is, however, slow: it takes about 9-10 minutes to fetch 1M rows, and it's not uncommon for me to pull as many as 10M rows. The data changes frequently, so I need to refresh the whole dataset every time.

The 3rd party has a page limit of 10K rows and 10 MB, and they can't change that. However, I recently learnt two new pieces of information:

1) The 3rd party has developed a new API endpoint.

2) The 3rd party can issue multiple API keys for service accounts.
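To put numbers on "faster", here is a rough back-of-the-envelope sketch using only the figures above. It assumes the 10K-row limit (not the 10 MB limit) decides the page count, that run time scales linearly with pages, and that each extra API key adds throughput independently of the others. None of that is confirmed by the 3rd party. The per-page time is an effective average derived from the current wall time, not the latency of a single request.

```python
import math

PAGE_ROW_LIMIT = 10_000          # 3rd-party cap: 10K rows per page
SECONDS_PER_1M_ROWS = 9.5 * 60   # midpoint of the quoted 9-10 minutes

def estimate(total_rows: int, n_keys: int = 1) -> tuple[int, float]:
    """Return (pages, best-case minutes) if pages are split evenly across keys.

    Assumes the row limit (not the 10 MB limit) decides the page count, and
    that each API key adds throughput independently of the others.
    """
    pages = math.ceil(total_rows / PAGE_ROW_LIMIT)
    # Effective seconds per page, averaged from the current 1M-row run.
    seconds_per_page = SECONDS_PER_1M_ROWS / (1_000_000 / PAGE_ROW_LIMIT)
    # The busiest key decides the wall time.
    minutes = math.ceil(pages / n_keys) * seconds_per_page / 60
    return pages, minutes

for rows, keys in [(1_000_000, 1), (10_000_000, 1), (10_000_000, 4)]:
    pages, minutes = estimate(rows, keys)
    print(f"{rows:>10,} rows, {keys} key(s): {pages} pages, ~{minutes:.1f} min")
```

Under these assumptions, 10M rows is about 1,000 pages and roughly 95 minutes on one key, so the 2x target means at least two keys that are genuinely throttled separately.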

So, with that new info, can I finally make my macro faster?

Here is how the new version of the endpoint works.

This new endpoint returns a unique page key given the target page size, table ID and model ID. So if we have a table with 15,000 rows and set the page size to 5,000, we can fetch the data using these three URLs:

https://api.blablabla/pageSize=5000&key=[###]

https://api.blablabla/pageSize=5000&key=[###1]

https://api.blablabla/pageSize=5000&key=[###C]

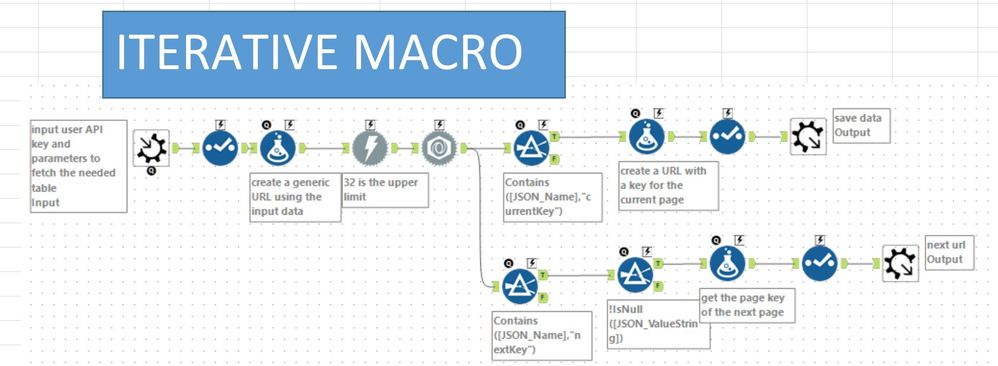

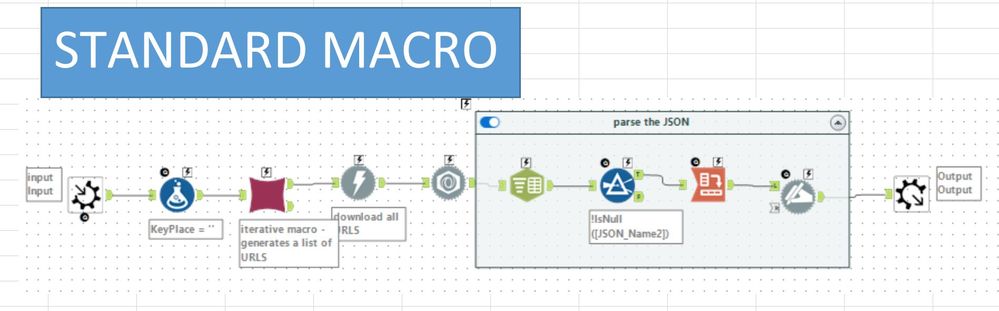
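A minimal sketch of the page arithmetic and URL layout from the example above. `BASE_URL` is the placeholder host exactly as written in the post (the real host and path are elided), and `KEY_A`/`KEY_B`/`KEY_C` are hypothetical stand-ins for the `[###]` page keys the endpoint actually returns.

```python
import math

BASE_URL = "https://api.blablabla/"   # placeholder host from the post; real path elided

def pages_needed(total_rows: int, page_size: int) -> int:
    """Number of pages (and therefore page keys) a table needs."""
    return math.ceil(total_rows / page_size)

def page_urls(page_keys: list[str], page_size: int) -> list[str]:
    """One URL per page key, following the query layout in the examples."""
    return [f"{BASE_URL}pageSize={page_size}&key={key}" for key in page_keys]

keys = ["KEY_A", "KEY_B", "KEY_C"]    # hypothetical page keys
print(pages_needed(15_000, 5_000))
for url in page_urls(keys, 5_000):
    print(url)
```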

My macro is an iterative macro within a standard macro. The iterative macro fetches all the URLs needed to get the data from the target dataset. The standard macro takes those URLs, feeds them into a Download tool, then parses out the JSON.
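The two stages can be sketched outside Alteryx to see which one dominates the run time. This is a hypothetical model, not the actual macro: it assumes, as the iterative design suggests, that stage 1 gets each page's URL from the previous request (the post does not confirm this), and `next_url` and `fetch` are made-up stand-ins for the real HTTP calls. If most of the time goes into stage 1, adding connections to the Download tool in stage 2 cannot help much, because stage 1 is sequential.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional

def collect_page_urls(first_url: str, next_url: Callable[[str], Optional[str]]) -> list[str]:
    """Stage 1, like the iterative macro: one request per page, in sequence.

    `next_url` is hypothetical: it returns the URL of the following page,
    or None after the last page.
    """
    urls = []
    url: Optional[str] = first_url
    while url is not None:
        urls.append(url)
        url = next_url(url)
    return urls

def download_pages(urls: list[str], fetch: Callable[[str], str], connections: int = 32) -> list[str]:
    """Stage 2, like the standard macro's Download tool: up to `connections` requests at once."""
    with ThreadPoolExecutor(max_workers=connections) as pool:
        return list(pool.map(fetch, urls))  # results keep the order of `urls`

def timed(label: str, func, *args):
    """Run func(*args), print how long it took, and return its result."""
    start = time.perf_counter()
    result = func(*args)
    print(f"{label}: {time.perf_counter() - start:.2f} s")
    return result

# Demo with fakes: a 3-page table where every "request" sleeps 0.1 s.
pages = ["page-1", "page-2", "page-3"]

def fake_next(url: str) -> Optional[str]:
    time.sleep(0.1)
    i = pages.index(url)
    return pages[i + 1] if i + 1 < len(pages) else None

def fake_fetch(url: str) -> str:
    time.sleep(0.1)
    return f"json for {url}"

urls = timed("stage 1 (collect URLs)", collect_page_urls, pages[0], fake_next)
data = timed("stage 2 (download)", download_pages, urls, fake_fetch)
```

In the demo, stage 1 takes about three request round-trips while stage 2 takes about one, which is the pattern to look for in the real workflow's per-tool timings.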

I'm looking for creative ideas on how to make this whole thing faster, given that I can use several different API keys. I want a substantial speed improvement (at least 2x).
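One way to use several API keys is to spread the pages round-robin across them, with a separate concurrency limit per key. This is a sketch under a big assumption: that the 3rd party throttles per key, so extra service-account keys add real throughput. The post only says multiple keys can be issued. `fetch(url, api_key)` is a hypothetical caller-supplied function; how the key is actually sent (header, query parameter) is not specified here. In the workflow, the rough equivalent would presumably be tagging each URL row with one of the keys before the Download tool.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import cycle
from typing import Callable, TypeVar

T = TypeVar("T")

def assign_keys(urls: list[str], api_keys: list[str]) -> list[tuple[str, str]]:
    """Round-robin: page i is fetched with api_keys[i % len(api_keys)]."""
    return list(zip(urls, cycle(api_keys)))

def download_with_keys(
    urls: list[str],
    api_keys: list[str],
    fetch: Callable[[str, str], T],
    workers_per_key: int = 8,
) -> list[T]:
    """Fetch every URL, spreading pages evenly across several API keys.

    Each distinct key gets its own thread pool, so no key ever has more than
    `workers_per_key` requests in flight. Results come back in the same
    order as `urls`.
    """
    pools = {key: ThreadPoolExecutor(max_workers=workers_per_key) for key in api_keys}
    try:
        futures = [pools[key].submit(fetch, url, key) for url, key in assign_keys(urls, api_keys)]
        return [future.result() for future in futures]
    finally:
        for pool in pools.values():
            pool.shutdown()

# Demo with a fake fetch that just reports which key served which page.
page_list = [f"page-{i}" for i in range(6)]
service_keys = ["KEY_1", "KEY_2", "KEY_3"]   # hypothetical service-account keys
results = download_with_keys(page_list, service_keys, lambda url, key: (url, key))
for url, key in results:
    print(url, "->", key)
```

Per-key pools are used instead of one shared pool so that a key's in-flight limit is strict rather than approximately balanced.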

What I have tried so far (neither made any difference):

1) In the standard macro, tiling the output of the iterative macro and running the downloads in parallel

2) Increasing the number of connections in the Download tool (it cannot go above 32)

Many thanks in advance to this wonderful community for the help!