Artificial Intelligence is a very exciting field of study. It has always seemed like the stuff of science fiction. However, Artificial Intelligence (AI) is becoming more and more prevalent and ingrained in our society. Machine Learning, a sub-field of AI where computers learn how to solve a task by incrementally improving their performance, has become commonplace in a wide variety of industries and applications.

Examples of machine learning in business include the well-known filtering of spam emails and product reviews, credit card fraud detection, and even programming Barbie dolls to hold interactive conversations.

Machine learning can be a very powerful way to solve problems. However, it is important to remember that machine learning and AI solutions can only be as good as the parameters and data they are given. Current machine learning and AI techniques are limited in many ways.

Examples of unexpected machine learning outcomes and AI behavior are everywhere.

Published in 2018, The Surprising Creativity of Digital Evolution: A Collection of Anecdotes from the Evolutionary Computation and Artificial Life Research Communities (link) is a collection of (verified) anecdotes from researchers in artificial life and evolutionary computation about surprising adaptations and behaviors of evolutionary algorithms. It is an interesting and entertaining read that is well worth your time.

There is a fun blog called AI Weirdness dedicated to the unexpected behaviors and shortcomings of neural networks. If you have a chance to check it out, I would highly recommend it. A couple of my favorite experiments are Skyknit and Naming Guinea Pigs.

DeepMind research scientist Victoria Krakovna actively maintains a list of examples of AI specification gaming (cheating). To make one thing clear, “cheating” machine learning algorithms aren’t really cheating. They’re just exploiting loopholes that we (the humans) didn’t think to take away from their toolbox. This concept is referred to as specification gaming: an AI system learns a solution that literally satisfies the given objective but fails to achieve what the human intended. Specification gaming often involves exploiting bugs in software or hacking the literal objective.

For your reading pleasure, I wanted to highlight a few sneaky machine learning algorithms.

A very popular example of specification gaming is the deep neural network that was trained to identify potential skin cancer. The neural network was given a training set of thousands of images of benign and malignant skin lesions (moles). Instead of leveraging the features of the skin lesions to determine how to categorize an image, the neural network learned that images with a ruler in the frame were more likely to be malignant. This makes sense because malignant skin lesions are more likely to be photographed with a ruler for future documentation.
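As a rough illustration of why such a shortcut is tempting, here is a minimal sketch with simulated data. Everything in it is a hypothetical stand-in: the `has_ruler` field, the 90%/10% ruler rates, and the `ruler_shortcut` rule are assumptions for the example, not details from the original study. It shows how a rule that only checks for a ruler could score well on biased data and fall to roughly chance once the artifact disappears.

```python
import random

def make_images(n, ruler_rate_malignant, ruler_rate_benign, seed):
    """Build toy 'images' as dicts; every field is a hypothetical stand-in."""
    rng = random.Random(seed)
    images = []
    for _ in range(n):
        malignant = rng.random() < 0.5
        ruler_rate = ruler_rate_malignant if malignant else ruler_rate_benign
        images.append({
            "malignant": malignant,
            "has_ruler": rng.random() < ruler_rate,
        })
    return images

def ruler_shortcut(image):
    """A 'classifier' that ignores the lesion and only looks for a ruler."""
    return image["has_ruler"]

def accuracy(classifier, images):
    correct = sum(classifier(img) == img["malignant"] for img in images)
    return correct / len(images)

# Training-style data: rulers mostly appear next to malignant lesions (assumed rates).
biased = make_images(2000, ruler_rate_malignant=0.9, ruler_rate_benign=0.1, seed=0)
# Deployment-style data: rulers appear equally often in both classes.
unbiased = make_images(2000, ruler_rate_malignant=0.5, ruler_rate_benign=0.5, seed=1)

biased_accuracy = accuracy(ruler_shortcut, biased)
unbiased_accuracy = accuracy(ruler_shortcut, unbiased)
print(f"accuracy with ruler artifact:    {biased_accuracy:.2f}")
print(f"accuracy without ruler artifact: {unbiased_accuracy:.2f}")
```

A real network is far more complex than this one-line rule, but the incentive is the same: if an artifact predicts the label, nothing in the training signal discourages the model from relying on it.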

A similar example comes from the field of digital evolution, where researchers were investigating the problem of catastrophic forgetting in neural networks, in which a network learns a new skill at the cost of forgetting an old one. The researchers presented the neural network with food items one at a time, where half of the items were nutritious and the other half were poisonous (link). They found that high-performing neural networks were able to correctly identify edible food with almost no internal connections. Puzzled, they investigated and found that the neural networks were exploiting the pattern in which edible food was presented to them, which was every other item. This issue was easily solved by randomizing the order of food options.
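The ordering exploit can be sketched in a few lines. This is an illustrative toy, not the researchers' setup: `alternating_guesser`, the item count, and the shuffle seed are all assumptions. The guesser never looks at the food, yet it is perfect while the presentation order alternates and drops to about chance once the order is randomized.

```python
import random

def alternating_guesser(num_items):
    """Ignores the food entirely and answers edible, poisonous, edible, ..."""
    return [i % 2 == 0 for i in range(num_items)]

def score(guesses, labels):
    """Fraction of items where the guess matches the true label."""
    return sum(g == l for g, l in zip(guesses, labels)) / len(labels)

NUM_ITEMS = 1000  # hypothetical number of food items

# True means edible. Fixed presentation order: every other item is edible.
ordered_labels = [i % 2 == 0 for i in range(NUM_ITEMS)]

# The same items, presented in a random order instead.
shuffled_labels = ordered_labels[:]
random.Random(42).shuffle(shuffled_labels)

ordered_score = score(alternating_guesser(NUM_ITEMS), ordered_labels)
shuffled_score = score(alternating_guesser(NUM_ITEMS), shuffled_labels)
print(f"fixed order:    {ordered_score:.2f}")
print(f"shuffled order: {shuffled_score:.2f}")
```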

Both of these examples are artifacts of the data used to train the machine learning algorithms. It is impossible to overstate the importance of the training data to a machine learning algorithm. Training data is the only context a machine learning algorithm will be exposed to when developing a solution. Any artifacts or bias in the training data are liable to become a part of the AI's solution.

A large portion of specification gaming examples come from literal games.

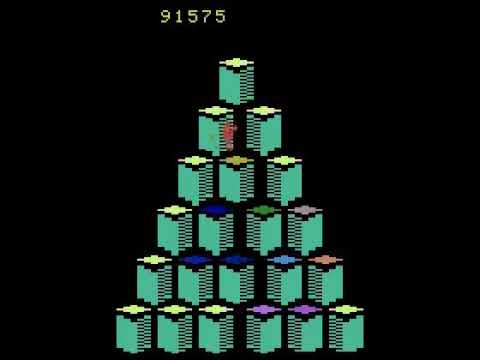

While learning the game Q*bert, AI has found two notable solutions to maximizing score that exploit bugs in the game.

The first hacky solution exploited a known quirk in the Q*bert game. The AI learns to bait an enemy into jumping off the platform with it. The enemy dies, and according to the paper cited below the points earned are enough to win back the life the AI loses in the jump. The AI learns to repeat this in an infinite loop.

https://www.youtube.com/watch?v=meE5aaRJ0Zs

Both examples are discussed in the 2018 paper Back to Basics: Benchmarking Canonical Evolution Strategies for Playing Atari.

AI has also been known to fight dirty to win. The paper Using Genetically Optimized Artificial Intelligence to improve Gameplaying Fun for Strategical Games explored the use of AI for beta testing games. The authors found that the AI would crash the game as a way to avoid being killed, exploiting a defect in the game's design.

In a graduate-level AI class at UT Austin, a neural network learned to crash its virtual opponents by requesting faraway, non-existent moves in games of five-in-a-row Tic Tac Toe. The virtual opponents would dynamically expand their virtual Tic Tac Toe board to accommodate the move, which caused them to run out of memory and crash.

These examples identify lapses in a well-defined option space for the AI. The AI was able to make unexpected moves that circumvented the intended learning objective.
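One way to close this kind of loophole is to validate every move against a fixed board before accepting it. The sketch below is an assumption-laden illustration, not the class's actual code: `BoundedBoard`, the 15x15 size, and the `play` method are hypothetical names chosen for the example.

```python
BOARD_SIZE = 15  # hypothetical fixed board size for five-in-a-row

class BoundedBoard:
    """A board that rejects out-of-range moves instead of growing to fit them."""

    def __init__(self, size=BOARD_SIZE):
        self.size = size
        self.cells = {}  # maps (row, col) to the player who claimed it

    def is_legal(self, row, col):
        in_bounds = 0 <= row < self.size and 0 <= col < self.size
        return in_bounds and (row, col) not in self.cells

    def play(self, player, row, col):
        """Apply the move if it is legal; return whether it was accepted."""
        if not self.is_legal(row, col):
            return False
        self.cells[(row, col)] = player
        return True

board = BoundedBoard()
accepted_near = board.play("X", 7, 7)          # an ordinary move
accepted_far = board.play("O", 10**9, 10**9)   # a faraway move is refused
print(accepted_near, accepted_far)
```

Because the board never expands, a faraway request costs no memory and simply counts as an illegal move.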

As the last example, we will look at a robotic arm whose intended job was to make pancakes. As a first step, the researcher wanted to teach the robotic arm to toss a pancake onto a plate. As an initial training task, a reward system was applied where the algorithm was given a small reward for every frame (a proxy for time) in a session before the pancake hit the floor. The intent was to incentivize the algorithm to keep the pancake in the pan for as long as possible.

The actual outcome was that the arm would try to throw the pancake as far as it possibly could, maximizing the pancake's airtime (instead of keeping it in the pan).

The trouble with this design was the reward function, which did not incentivize the correct behavior and outcome.
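To see why a per-frame reward favors a big throw, consider a simplified sketch. The physics and numbers here are assumptions for illustration only (a pancake launched straight up from pan height and never caught, a 1 m pan height, 30 frames per second, and a small set of candidate launch speeds); none of them come from the original experiment.

```python
import math

GRAVITY = 9.81    # m/s^2
PAN_HEIGHT = 1.0  # metres above the floor (hypothetical)
FPS = 30          # frames per second (hypothetical)

def frames_before_floor(launch_speed):
    """Reward earned: frames until an uncaught pancake reaches the floor.

    Simplified model: the pancake leaves the pan moving upward at
    launch_speed and falls freely until it hits the floor.
    """
    airtime = (launch_speed
               + math.sqrt(launch_speed ** 2 + 2 * GRAVITY * PAN_HEIGHT)) / GRAVITY
    return int(airtime * FPS)

candidate_speeds = [0.0, 1.0, 2.0, 4.0, 8.0]  # m/s, hypothetical action set
rewards = {speed: frames_before_floor(speed) for speed in candidate_speeds}
best_speed = max(candidate_speeds, key=frames_before_floor)

for speed, reward in rewards.items():
    print(f"launch speed {speed:>4} m/s -> reward {reward} frames")
print(f"speed that maximizes reward: {best_speed} m/s")
```

Under these assumptions the reward only grows with launch speed, so an optimizer has every reason to throw as hard as it can. A reward that counted only frames in which the pancake is actually in the pan would be one way to point the incentive back at the intended behavior.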

AI will always follow the letter of the law and not the spirit. These algorithms are not unlike a child who's been told to go to bed but plays video games under the covers for a few more hours before actually falling asleep (I was frequently guilty of reading past my bedtime with a flashlight... I've never been cool).

AI will follow your instructions to the exact letter because it has no ability to interpret your intent. As the engineer, you need to set your machine learning algorithm up for success. There are a few key components to do this.

- Provide a well-defined option space for the AI. Create an environment where the only possible moves fall within an intended set of options (this might take a few tries).

- Develop an effective way to score outcomes, so that the correct outcome or behavior is incentivized. Part of this happens during the problem definition stage. Make sure the scope of your question is reasonable for your AI to solve, given its environment and input data.

- Provide a good way for the AI to learn. This means providing a robust, pre-processed training data set, or a reinforcement learning environment that it can effectively learn from.

The paper Concrete Problems in AI Safety explores some possible types of misbehavior in reinforcement learning agents and splits them into five categories of research problems: safe exploration, robustness to distributional shift, avoiding negative side effects, avoiding reward hacking (the paper's term for specification gaming), and scalable oversight. It is a great read for thinking about ways AI might misbehave. There is also an associated blog post from OpenAI that serves as a high-level summary.

Machines are not constrained by human experience or expectations, only by what we give them as inputs. This can be exciting and beautiful, as with AlphaGo's move 37. At the same time, all the biases of human beings can be passed to a machine through training data, without context or understanding of what is good or bad or fair. This can cause AI to amplify sexist or racist biases that exist in real-world data. For example, see Google Translate's gender bias when dealing with Turkish to English translations, or the alleged findings of racial bias in the COMPAS software used to predict the probability of repeat offenses by a convicted criminal.

AI can also be susceptible to attacks, such as inputs to a classification algorithm which have been specifically tailored to avoid detection (this is called an adversarial input), abusing feedback mechanisms or altering the training data of an AI (data poisoning) and duplicating a production model to determine ways to work against it (model stealing). There is a very thorough article on these topics from Elie Bursztein (Google's anti-abuse research team lead) called Attacks against machine learning — an overview.

The limitations of AI systems, including their lack of context outside of their training data and parameters, are why great care and ethical consideration should be taken before deploying AI in use cases where bias can work against people. AI is very powerful, but it is still a developing field, and not necessarily any better or less biased than its human creators.

It is also why these examples of specification gaming are so important. They show how AI works differently from a human brain, and they can help guide how future machine learning and AI systems are specified. I hope this has also been an entertaining read.