Data Science

Machine learning & data science for beginners and experts alike.

- Introducing: The Azure Machine Learning Training a...

Last December, Microsoft announced the general availability of its Azure Machine Learning service. An exciting component of this service is the automated machine learning functionality, which intelligently tries multiple algorithms and hyperparameter settings for any provided input data set, then selects and returns the best-performing model. It does this using an artificial intelligence technique, matrix factorization, instead of the more traditional brute-force methods.

Today, we are very excited to announce the public preview of two Alteryx Designer tools that connect to Azure Machine Learning automated ML (Machine Learning), allowing you to easily leverage the power of automated machine learning from inside Designer.

With the Azure Machine Learning Training tool, you can send data directly from Designer to be trained by Azure Machine Learning automated ML. Using the Azure Machine Learning Scoring tool, you can use the model you trained for predictions on new data.

Both of these tools are available to download now through the Alteryx Gallery. They are a part of the Predictive District. You do not need to have the R-based Predictive tools installed to use these tools.

To demonstrate how these tools can be leveraged for your own use cases, I thought it would be fun to experiment with a Microsoft-published data set on identifying machines that are likely to be hit with malware. This data set was published as a part of a Kaggle competition hosted earlier this year. The bad news is that the competition ended about a month ago, but the good news is, that means we have benchmarks to compare our results against.

Data Preparation

The most important step of any good data science project is data preparation and data exploration. Having a good understanding of the data set you are working with enables you to make sure the data you provide to the model is clean and accurate, and to create more effective features (hooray for feature engineering!). A model can only ever be as good as the data you feed into it, so it is important to spend some time here.

The great news is that Azure ML performs pre-processing and featurization automatically, including missing value imputation and encoding of categorical fields (for example, one-hot encoding). To get a lay of the land, I am still going to take a look at our data in Alteryx and remove some features manually.
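To make those two steps concrete, here is a minimal sketch of missing value imputation and one-hot encoding in plain Python. It is only an illustration of the general techniques, not the featurization code Azure ML actually runs; the function names and the sample values (`ram_gb`, `platform`) are made up for this example.

```python
from collections import Counter


def impute_missing(values):
    """Fill None entries: mean for numeric columns, most frequent value otherwise."""
    present = [v for v in values if v is not None]
    if not present:
        return list(values)  # nothing to learn a fill value from
    if all(isinstance(v, (int, float)) for v in present):
        fill = sum(present) / len(present)
    else:
        fill = Counter(present).most_common(1)[0][0]
    return [fill if v is None else v for v in values]


def one_hot(values):
    """Return (categories, rows); each row is a list of 0/1 indicators."""
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


# Hypothetical columns, for illustration only.
ram_gb = impute_missing([4.0, None, 8.0])
platform = impute_missing(["windows10", None, "windows10", "windows8"])
categories, encoded = one_hot(platform)
```

Each distinct category becomes its own 0/1 column, which is why fields with very many distinct values are a poor fit for this kind of encoding.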

Reading in the raw data downloaded from Kaggle, the first thing we need to note is that this data set is on the large end (8,921,483 records and 84 columns – a total of 6.9 GB of data).

For this baseline run, I am just going to take a quick look at the data and remove any features that are unlikely to be useful for predicting the target variable. This will help reduce the overall size of the data being passed to the Azure Machine Learning service.

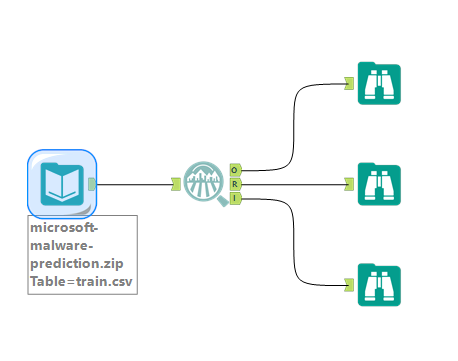

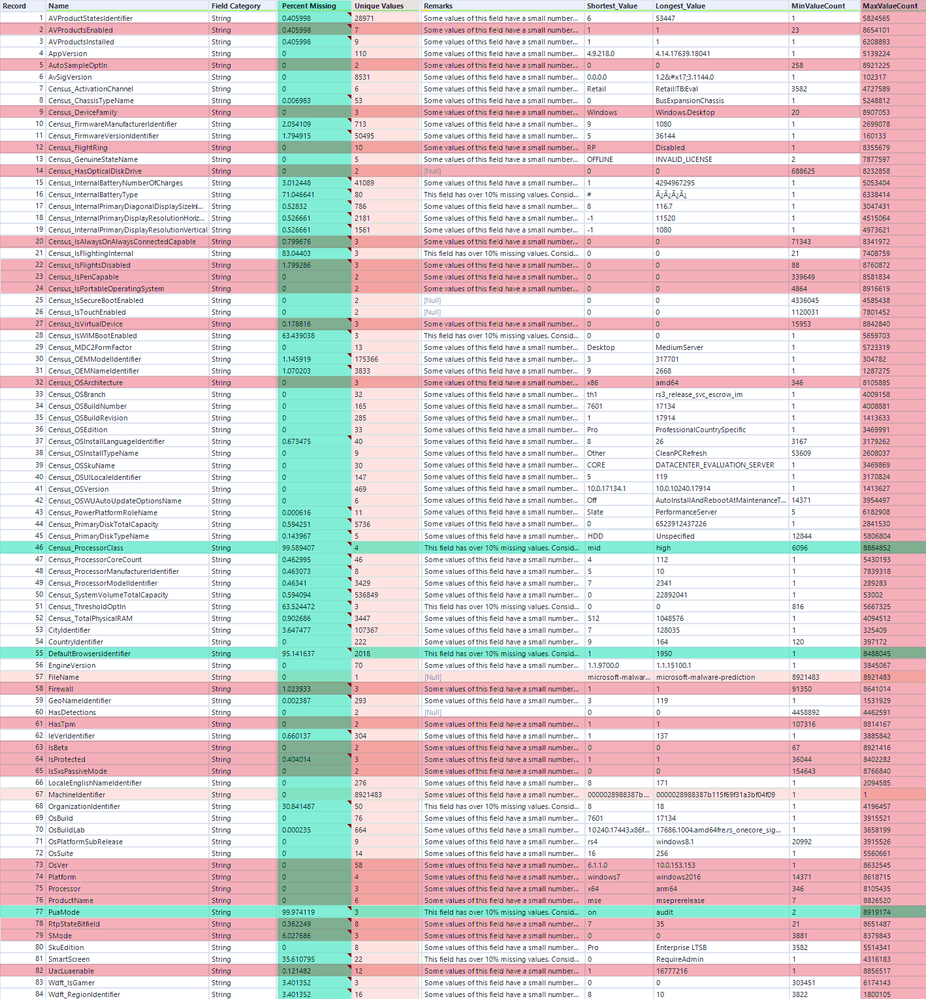

One of the first things I like to do when working with a new data set in Alteryx destined for machine learning is to plug it into a Field Summary tool.

In the O output of the Field Summary tool, we can see that there are a few fields with more than 95% missing values, as well as fields where more than 95% of the observations have the same value. Because these fields are so skewed, they aren’t going to be particularly meaningful. Using a Select tool, I am going to remove any fields that have more than 95% nulls (highlighted in AYX Blue Razz (light blue)), fields where more than 95% of records have the same value (highlighted in AYX Hot Sauce (dark pink)), and fields that have a constant value (highlighted in AYX Cotton Candy (light pink)).
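For readers who want to see the screening rule outside of Alteryx, the sketch below applies the same 95% thresholds to a list of records in plain Python. It is an approximation of the idea, not what the Field Summary or Select tools do internally; `fields_to_drop` and the toy field names are hypothetical.

```python
from collections import Counter


def fields_to_drop(rows, threshold=0.95):
    """Return field names that are mostly null or dominated by a single value."""
    drop = []
    n = len(rows)
    for field in rows[0]:
        column = [row[field] for row in rows]
        null_share = sum(v is None for v in column) / n
        non_null = [v for v in column if v is not None]
        # Share of all records taken up by the single most common value.
        top_share = Counter(non_null).most_common(1)[0][1] / n if non_null else 0.0
        if null_share > threshold or top_share > threshold:
            drop.append(field)
    return drop


# Toy data: 100 records with made-up field names.
rows = [
    {
        "mostly_null": None if i >= 3 else i,   # 97% null
        "constant": 1,                          # a single value throughout
        "skewed": "a" if i < 96 else "b",       # 96% one value
        "useful": i % 2,                        # balanced, so it is kept
    }
    for i in range(100)
]
dropped = fields_to_drop(rows)
```

A constant field is simply the extreme case of the "same value" rule, so one threshold check covers both.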

We can also see that there is a nice, nearly 50/50 split of values in the target variable [HasDetections]. Excellent.

Removing these fields has reduced the data set from 84 to 63 fields, and from 6.9 GB of data to 5.2 GB. This is still pretty large, and working with a dataset this size to establish a baseline is going to be slow and somewhat inefficient. To deal with this, I am going to randomly sample the data down to 1 in 20 observations for each target variable value with a Sample tool. I’m also going to add on a Create Samples tool to divide the remaining records into train and test data sets. I’ll hold on to the test data set to run it through the Azure Machine Learning Scoring tool a little later.
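As a rough illustration of this step, the sketch below takes a 1-in-20 random sample within each target value and then splits the result into train and test sets. It is not how the Sample or Create Samples tools are implemented, and the 80/20 split is an assumption made for the example; the actual percentages I used are not stated here.

```python
import random


def stratified_sample(rows, target, fraction, seed=0):
    """Randomly keep `fraction` of the rows within each value of `target`."""
    rng = random.Random(seed)
    groups = {}
    for row in rows:
        groups.setdefault(row[target], []).append(row)
    sample = []
    for group in groups.values():
        sample.extend(rng.sample(group, round(len(group) * fraction)))
    return sample


def train_test_split(rows, test_share=0.2, seed=0):
    """Shuffle the rows and cut them into (train, test). The share is an assumption."""
    rng = random.Random(seed)
    shuffled = rows[:]
    rng.shuffle(shuffled)
    cut = round(len(shuffled) * (1 - test_share))
    return shuffled[:cut], shuffled[cut:]


# Toy data with a 50/50 target, mirroring the balance seen in [HasDetections].
rows = [{"id": i, "HasDetections": i % 2} for i in range(2000)]
sampled = stratified_sample(rows, "HasDetections", fraction=1 / 20)
train, test = train_test_split(sampled)
```

Sampling within each target value keeps the class balance of the full data set in the smaller sample.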

Removing these features, as well as down-sampling, still leaves nulls as well as fields with high cardinality, which can be ineffective in machine learning models. For this first pass, I am going to leave this preprocessing to the Azure Machine Learning service, which will impute nulls and drop fields with high cardinality. I can always come back and perform additional cleansing and processing in Alteryx to have a little more control, including some custom feature engineering - model building and refinement is an iterative process after all 😊.

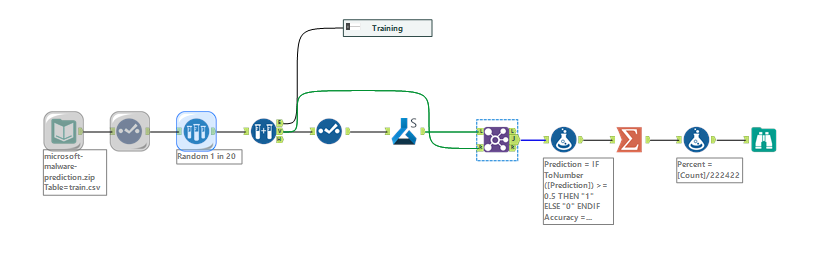

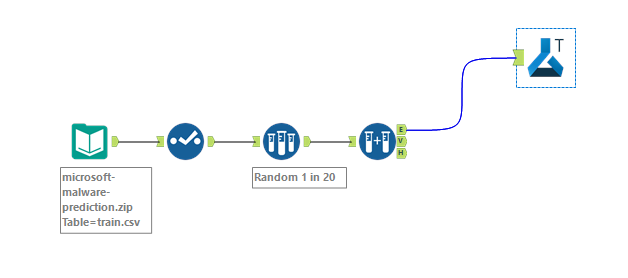

Now I can connect the Azure Machine Learning Training tool to my data stream. Here is what the workflow looks like at this point:

The Azure Machine Learning Training Tool

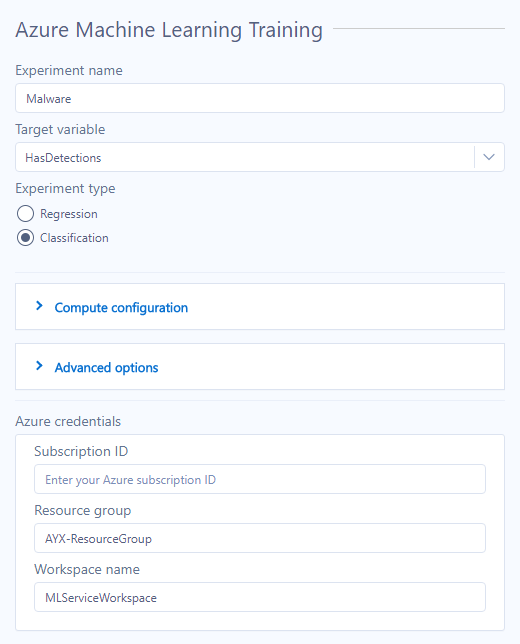

With the first pass at preprocessing set up as an Alteryx workflow, I can connect an Azure ML Training tool to my data stream and configure the tool in the configuration window. To keep things streamlined, I am going to breeze through the configuration of the tool, but if you’d like to know more please check out the help documentation.

In the Configuration window for the Azure Machine Learning Training tool, we first need to name our experiment. The experiment name can be anything; I think for this project, “Malware” has a nice ring to it.

In the Target variable option, I’ll select the variable I’m interested in estimating from a drop-down menu. In this example, it is [HasDetections].

Next, I need to specify what type of model I am trying to build: either a classification experiment or a regression experiment. This option determines which set of algorithms Azure automated ML will try applying to the data, and it should depend on the target variable. If the target variable is made up of continuous values, then it’s a regression experiment. In my case, the target variable is made up of categorical values ([HasDetections]: Yes (1) or No (0)), so I’ll select classification.
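If you are unsure which option applies, the rule of thumb above can be written as a small check. This is a hypothetical heuristic for illustration only; `suggest_task` and its `max_classes` cutoff are my own assumptions and are not part of the tool or of Azure ML.

```python
def suggest_task(target_values, max_classes=20):
    """Guess the experiment type from the target column (rough heuristic)."""
    distinct = set(target_values)
    numeric = all(
        isinstance(v, (int, float)) and not isinstance(v, bool) for v in distinct
    )
    # Many distinct numeric values suggest a continuous target.
    if numeric and len(distinct) > max_classes:
        return "regression"
    return "classification"


binary_target = [0, 1, 1, 0, 1]                      # like [HasDetections]
continuous_target = [i * 0.5 for i in range(100)]    # made-up continuous values
```

A numeric target with only a handful of distinct values, such as a 0/1 flag, is still a classification problem.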

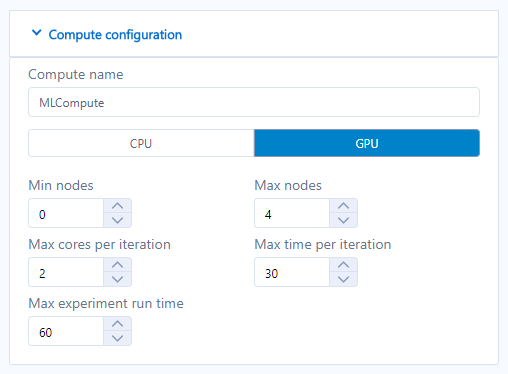

The next configuration option, Compute configuration, expands into a larger menu. The Azure Machine Learning Training tool sends data from Alteryx on our local machines to a remote compute resource. This is where we can define the compute resource that is used.

This can all be left as default values, which is what I am going to do! A new compute resource will be created if the one specified does not already exist. Note that if you set the Min Nodes argument to a value greater than zero, the Azure Machine Learning service will create a cluster with the minimum number of nodes specified and will keep those nodes running even after the compute resource is no longer needed.

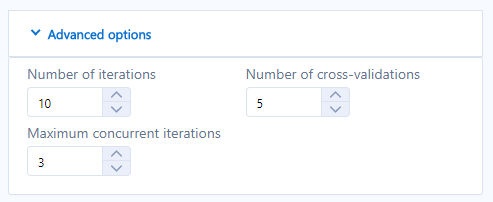

Advanced Options is another expanding menu. I’m going to leave these values as default as well.

Finally, I need to provide my Azure account information, so the tool knows what to connect to; this includes a Subscription ID, Resource Group, and Workspace Name.

Now we can hit the run button and see what happens!

Checking the Experiment in Azure Portal

The Azure Machine Learning Training tool will give you incremental updates as it pushes data up to the service, prepares the data, and starts running models.

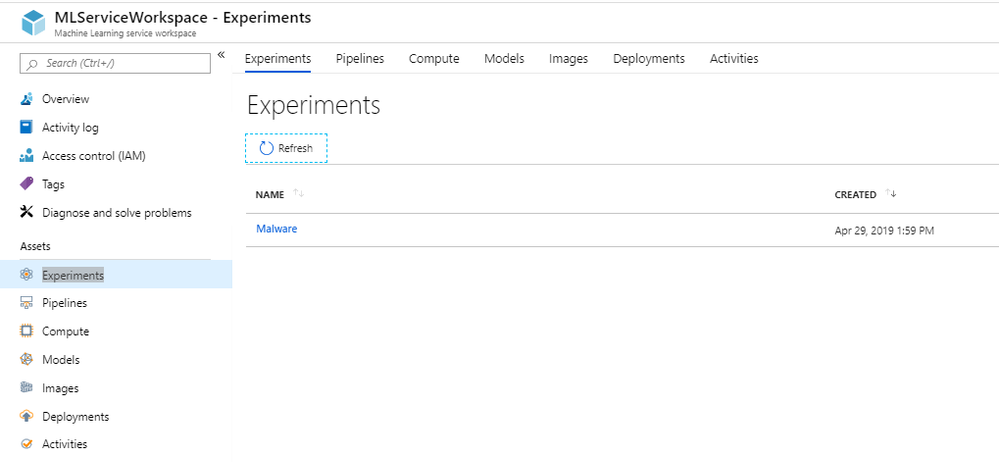

As soon as you see the text Experiment Created: in your results window, you will be able to access the experiment in the Azure portal, in your specified workspace under Experiments.

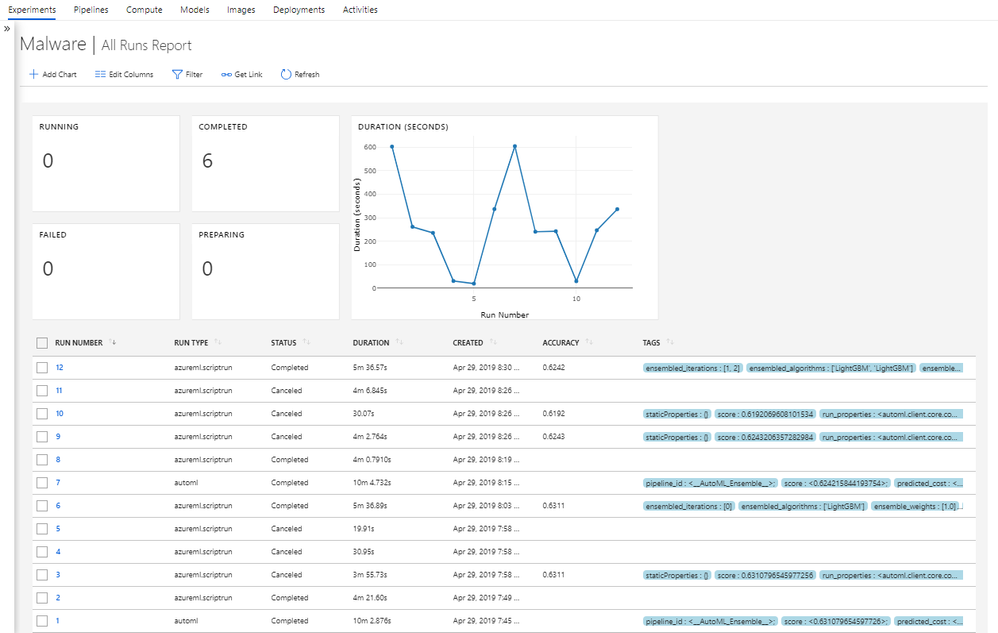

Opening up the experiment by clicking on the name will show you a dashboard of your runs within the experiment.

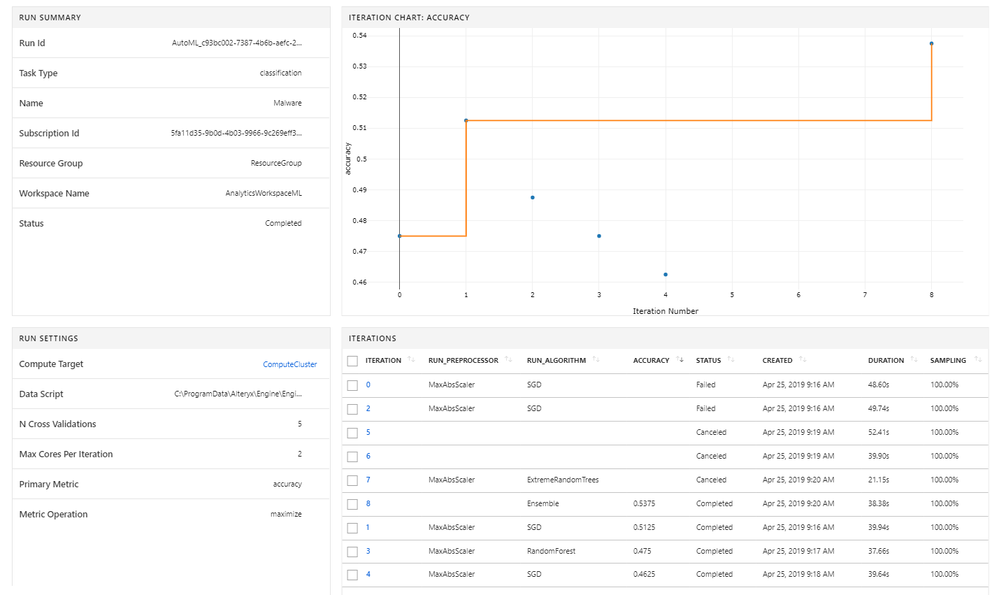

Clicking on the Run Number will open a dashboard detailing the iterations of algorithms that were included in a given run.

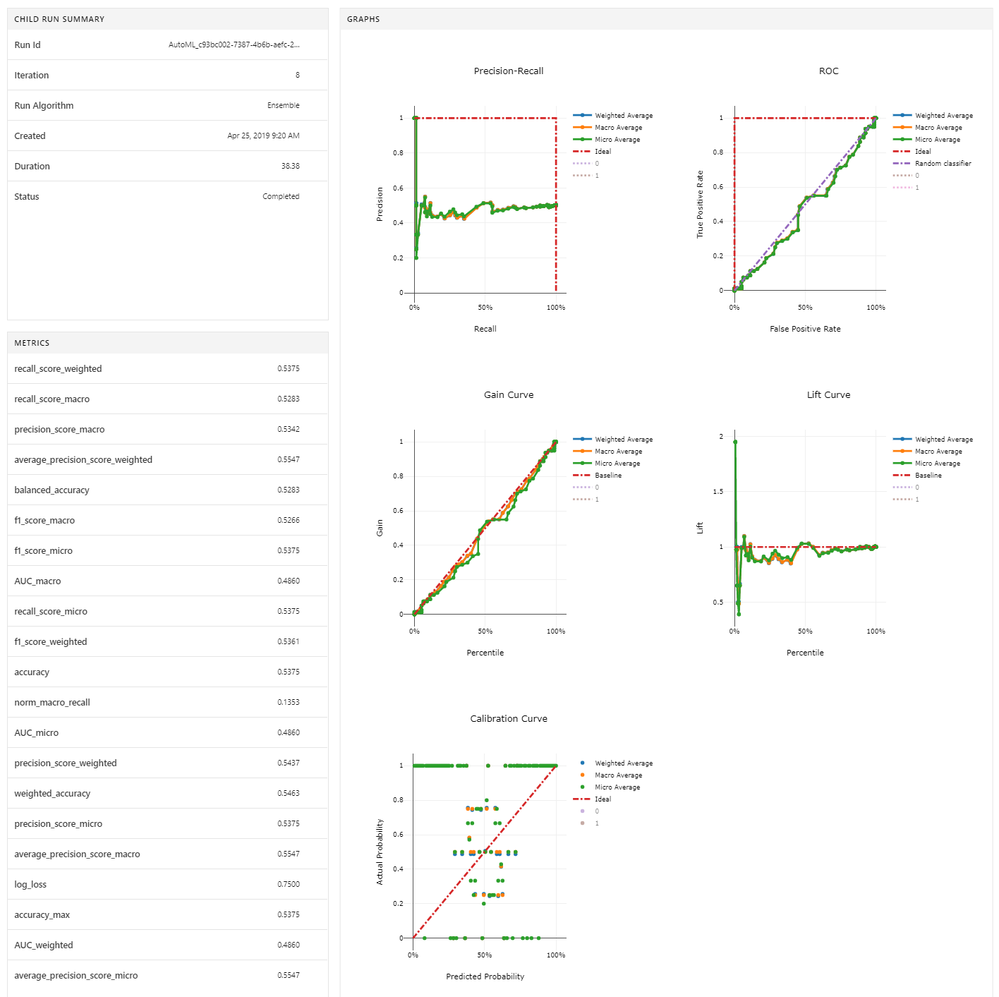

In this dashboard, you can see the accuracy of different iterations based on cross-validation. Clicking on the iteration number in the bottom-right table will open even more information, including a plethora of performance metrics for that specific model.

We are rich with model metrics to assess the models trained by the Azure Machine Learning service! With these metrics, you can keep iterating in an experiment until a model is trained to your satisfaction.

At this point, I’m ready to start feeding the model some new observations to see how it performs.

The Azure Machine Learning Scoring tool

With the model trained and living in my Azure workspace, I can send new, unseen test records to the experiment and the service will return estimated values for the target variable [HasDetections] using the best model in the experiment. The best model in an experiment is defined by the primary metric: accuracy for classification and R² for regression.
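For reference, the regression metric mentioned above, R², compares a model's squared errors with those of always predicting the mean. The sketch below uses the standard textbook definition; it is not taken from the Azure ML service, and the function name is my own.

```python
def r_squared(actual, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    if ss_tot == 0:
        raise ValueError("R² is undefined when the actual values are constant")
    return 1 - ss_res / ss_tot


perfect = r_squared([1, 2, 3], [1, 2, 3])      # predictions match exactly
mean_only = r_squared([1, 2, 3], [2, 2, 2])    # always predicting the mean
```

A perfect model scores 1, and a model that does no better than the mean scores 0.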

It’s important that the data I feed into the Azure Machine Learning Scoring tool has the same predictor fields as the data I used to train the Malware experiment. That is the job of the second Select tool in the workflow, which removes the target variable [HasDetections].

The configuration of the Azure Machine Learning Scoring tool is really straightforward – just give it the directions to find your experiment, and it is good to go. The Azure Machine Learning Scoring tool actually downloads the best model from the Azure Machine Learning service and runs the scoring locally on your machine, so no need to worry about remote computational resources.

I’ll add a few tools (a Join tool, a couple of Formula tools, and a Summarize tool) to calculate the accuracy of the model on the held-out test data set.

Now we let it run!

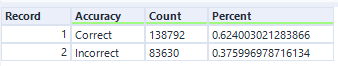

With a quick calculation, we see that the model classified 62% of records correctly and 38% incorrectly.
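The accuracy calculation itself is simple: join the predictions back to the known values and count the matches. Here is a minimal sketch of that logic in plain Python, standing in for the Join, Formula, and Summarize tools; the record IDs and labels are toy values, not results from this experiment.

```python
def accuracy(actual_by_id, predicted_by_id):
    """Join on record ID and return the share of predictions that match."""
    shared = actual_by_id.keys() & predicted_by_id.keys()
    if not shared:
        raise ValueError("no records in common to compare")
    correct = sum(actual_by_id[k] == predicted_by_id[k] for k in shared)
    return correct / len(shared)


# Toy values for illustration only.
actual = {"a": 1, "b": 0, "c": 1, "d": 0}
predicted = {"a": 1, "b": 1, "c": 1, "d": 0}
score = accuracy(actual, predicted)
```

Joining on an ID first guards against the two streams being in different orders.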

Considering the top leader in the Kaggle competition got 67% of the test data set correct after months of meticulous feature engineering and hyperparameter voodoo, I think this is a great start. At this stage, I can explore these results further to see where the model is missing things, go back and perform more targeted data preparation and feature engineering to see if it improves performance, or I can just go for it and try running the model on the Kaggle test data set, submit the results and see where it performs against other people’s hard work and tears.

Machine learning is an iterative process and still requires some expertise and understanding, but these tools enable users to rapidly test many different algorithms and tune hyperparameters without much intervention, which can be very efficient and powerful for beginners and experts alike. The Azure Machine Learning tools are a fantastic way to do rapid prototyping and then develop full, robust models ready for deployment.

We are very excited to have this included in the Alteryx toolbox – and even more excited to hear how our users put it to work. When you have something neat and exciting to share, please don’t hesitate to comment on this blog or to reach out to us at community@alteryx.com to write a blog of your own! Please note that these tools are currently in Beta and not supported for production use - check back on the tools in the fall for an update!

A geographer by training and a data geek at heart, Sydney joined the Alteryx team as a Customer Support Engineer in 2017. She strongly believes that data and knowledge are most valuable when they can be clearly communicated and understood. She currently manages a team of data scientists that bring new innovations to the Alteryx Platform.
