Alteryx Designer Desktop Discussions

Find answers, ask questions, and share expertise about Alteryx Designer Desktop and Intelligence Suite.- Community

- :

- Community

- :

- Participate

- :

- Discussions

- :

- Designer Desktop

- :

- Using the Runner Macro / Chaining Workflows / Cach...

Using the Runner Macro / Chaining Workflows / Caching Data How-To

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

In my conversations with people at Inspire, I hear a couple of topics come up frequently: How can I cache data that's already been processed, and how can I schedule workflows to run consecutively?

My answer to both of those questions is the Runner macro.

Yes, there is the cache data tool - but I actually have to do something with that. I have to change the settings from input to output.

Using the method I'm describing here, that caching is automatic.

The first step is to plan out what you want to do with your data, where you want to cache it, how you want to process it. Then you divide those steps into logical sections, and make workflows that only address one section at a time.

In the example I've attached, all I'm doing in workflow 1 is creating a .yxdb from my data. That's always a good place to start, because if you have, for instance, a .csv file coming in, Alteryx will process that same data exponentially faster than if it remains a .csv. Yes, there is some processing time to do the conversion, but everything else will run faster after that.

Then, you can make as many other workflows off that yxdb as you like. Maybe in one workflow you do all your joins, and in another you create all your calculations.

The scenario where splitting up my workflows makes the most sense is when I have multiple disparate data sources. I might have to do a lot of processing to get all that data to be homogeneous. But I need to perform different kinds of processing on each of those data sets. So after I convert each data source to a yxdb, I'll make a workflow to process data source 1, another to process data source 2, etc.

Finally, I'll make a workflow that re-unites all my other workflows.

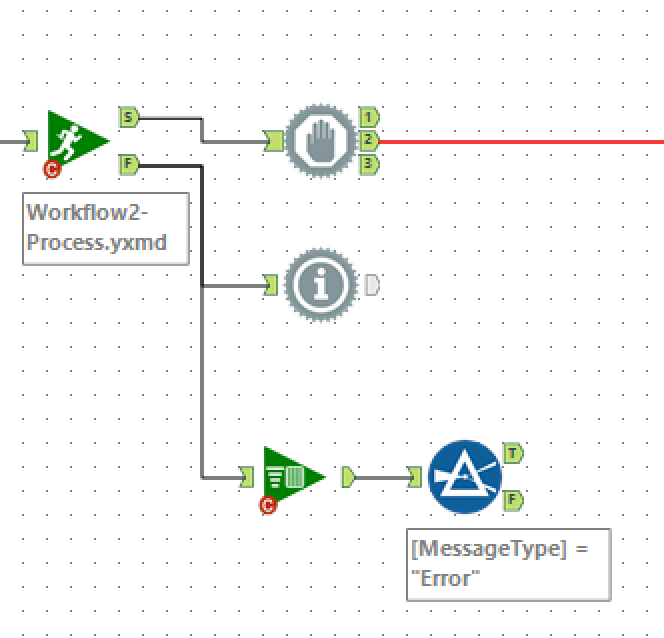

The magic touch is to then create one last master workflow to run all the others, and this is where the Runner macro comes in. I add a Runner tool for each of my underlying workflows, separating them with Block Until Done tools.

Then, when I'm ready to process my data, I only need to run one workflow - the master workflow with the Runner tools - and everything else is automatic.

It makes it a LOT easier to fix things if they break (and we all know they do sometimes), and I don't have to sit and toy with the cache tool. I can hit run and walk away or do something else.

If you haven't tried this method, I encourage you to experiment. If you have any questions, I'm always here on the forums, so feel free to reply or ping me directly.

Happy analyzing!

http://community.alteryx.com/t5/user/viewprofilepage/user-id/2328

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Great use case.

I've just used this same approach for a CRS regulatory reporting project where client data was dotted about with 5 eventual outputs all including some elements from the customer record.

Address information for example was tricky with joint accounts, individual accounts, multiple source systems etc, requiring a decision tree depending on data completeness.

Considerations for this approach ;

1. The workflow and data folders get more cluttered.

2. Someone who doesn't understand might not know to run the controlling workflow.

3. Leave room in your numbering based naming convention for new steps, I ended up with 001_<workflowname> !

4. Name the intermediate yxdb the same as the workflow.

5. Drop/create an Excel output in \ExcelCopies folder with the same name and input as the yxdb

#5 saved me lots of time explaining my "workings" to non-Alteryx co-workers (poor souls!) who could investigate the data lineage if an output was queried.

Finally, think that the Message Expression needs to be "Check Underlying Workflow", quotes are missing at the moment ?

Thanks

dan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

And yes, you're correct about the missing quotes. I would also change the messages to specifically name the underlying workflow.

It also helps if the underlying workflows and the master workflow are set to stop running on error in the configuration.

http://community.alteryx.com/t5/user/viewprofilepage/user-id/2328

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

I put Tests on the Error outputs with checking for 0 recs, but that's only because I hadn't investigated messages before =)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

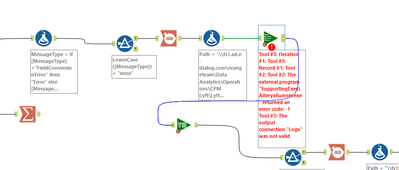

Great post! I love these macros. I also always use these in combination with the log parser tools. This means you can see all the error messages in the actual 'Run All' workflow.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

That's smart, hadn't thought of that, good productionising !

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Followup question: do you feel the block until done is needed when using the conditional runner? Is success / fail only determined when the underlying job is complete?

I'm using these tools extensively including grabbing the output with log parser, appending timestamps, filtering for the underlying job name, and logging the info into my database so we have run history including timings between the underlying jobs.

But I hadn't thought about block until done being required. Obviously it wouldn't hurt, but does it actually make a difference here?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

As I recall, the runner tools were running simultaneously without the block until done, and that was making me nervous. Granted, I started doing things this way a LONG time ago, and things might have changed.

Perhaps others who have experimented more can chime in here.

http://community.alteryx.com/t5/user/viewprofilepage/user-id/2328

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Hello @andre347 ! A quick check.

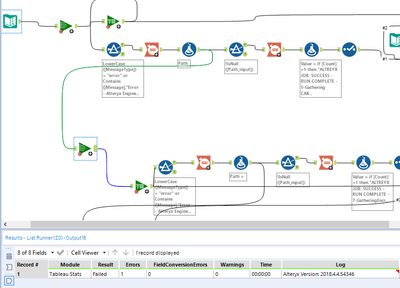

Happened to think in similar methods as you and building a bigger process. Apparently when I hit the rock, looks like you have already tried it out here. Have you faced issues where you happen to pass nulls to the list runner and the Macro don't fail, but run the macro assuming it got the path and passes out?

Heads up: I don't use conditional list runner as it fails to process if I have even a filed conversion error, which happens to be in most of my WFs due to incoming data at times. Can't rule out.

Logic:

Am trying to loop a conditional run based on the messages from prior WFs. So the PATH formula in the first stream send in the path to the second List runner only if few conditions are satisfied, if not I want to stop the process. So, I send a dummy file path ideally a NULL to second list runner so I want to error out. Also I collect logs to make some conditional run processing for a larger process.

ASK: When I run this logic on a new workbook it works as expected with the error, but when I save the WF and run it the control passes to the next list runner and process the consecutive pipes ahead of it.

Is there a specific reason for the macro to store the directory and behave like this? Any thought would be of great help!

Test:

Expected outcome.

Actual:

Post saving , differed outcome.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

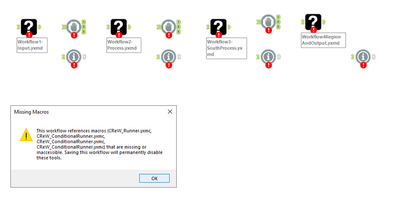

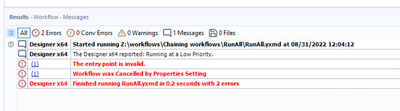

@ThizViz, thank you for the post!

I was not able to run the examples attached. Tried running the RunAll workflow first, but got error message importing (attached below). Then i thought maybe i need to run the sub workflows first to generate the inputs for the RunAll workflow. That did not help either. Am I missing something?

Thank you

-

Academy

6 -

ADAPT

2 -

Adobe

204 -

Advent of Code

3 -

Alias Manager

78 -

Alteryx Copilot

26 -

Alteryx Designer

7 -

Alteryx Editions

95 -

Alteryx Practice

20 -

Amazon S3

149 -

AMP Engine

252 -

Announcement

1 -

API

1,208 -

App Builder

116 -

Apps

1,360 -

Assets | Wealth Management

1 -

Basic Creator

15 -

Batch Macro

1,559 -

Behavior Analysis

246 -

Best Practices

2,695 -

Bug

719 -

Bugs & Issues

1 -

Calgary

67 -

CASS

53 -

Chained App

268 -

Common Use Cases

3,825 -

Community

26 -

Computer Vision

86 -

Connectors

1,426 -

Conversation Starter

3 -

COVID-19

1 -

Custom Formula Function

1 -

Custom Tools

1,938 -

Data

1 -

Data Challenge

10 -

Data Investigation

3,487 -

Data Science

3 -

Database Connection

2,220 -

Datasets

5,222 -

Date Time

3,227 -

Demographic Analysis

186 -

Designer Cloud

742 -

Developer

4,372 -

Developer Tools

3,530 -

Documentation

527 -

Download

1,037 -

Dynamic Processing

2,939 -

Email

928 -

Engine

145 -

Enterprise (Edition)

1 -

Error Message

2,258 -

Events

198 -

Expression

1,868 -

Financial Services

1 -

Full Creator

2 -

Fun

2 -

Fuzzy Match

712 -

Gallery

666 -

GenAI Tools

3 -

General

2 -

Google Analytics

155 -

Help

4,708 -

In Database

966 -

Input

4,293 -

Installation

361 -

Interface Tools

1,901 -

Iterative Macro

1,094 -

Join

1,958 -

Licensing

252 -

Location Optimizer

60 -

Machine Learning

260 -

Macros

2,864 -

Marketo

12 -

Marketplace

23 -

MongoDB

82 -

Off-Topic

5 -

Optimization

751 -

Output

5,255 -

Parse

2,327 -

Power BI

228 -

Predictive Analysis

937 -

Preparation

5,169 -

Prescriptive Analytics

206 -

Professional (Edition)

4 -

Publish

257 -

Python

855 -

Qlik

39 -

Question

1 -

Questions

2 -

R Tool

476 -

Regex

2,339 -

Reporting

2,434 -

Resource

1 -

Run Command

575 -

Salesforce

277 -

Scheduler

411 -

Search Feedback

3 -

Server

630 -

Settings

935 -

Setup & Configuration

3 -

Sharepoint

627 -

Spatial Analysis

599 -

Starter (Edition)

1 -

Tableau

512 -

Tax & Audit

1 -

Text Mining

468 -

Thursday Thought

4 -

Time Series

431 -

Tips and Tricks

4,187 -

Topic of Interest

1,126 -

Transformation

3,730 -

Twitter

23 -

Udacity

84 -

Updates

1 -

Viewer

3 -

Workflow

9,980

- « Previous

- Next »