Alteryx Designer Desktop Discussions

In Database unions/joins - performance bottleneck

Hi, I'm using the In-Database tools and running into a performance issue. Has anyone experienced something similar?

This isn't the exact scenario, but shows a close enough example.

There are 2 tables in an Amazon AWS Redshift server:

ProductRecords

--------------------

personid | product type | startdate | enddate

ActionRecords

--------------------

personid | action type | action date

I need to find out what product type was active at the time of action records.

So I need to join the actions to the product records, by personid and also ensure that action date falls between ProductRecords.startdate and ProductRecords.enddate.

If there is no corresponding Product record for the action, I still want to keep the action record.
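For reference, the result described above corresponds to a left join with the date range placed in the join condition. Below is a minimal sketch in Python, using an in-memory SQLite database as a stand-in for Redshift. The sample rows and the space-free column names (`producttype`, `actiontype`, `actiondate`) are assumptions made for illustration, not data from the real tables.

```python
import sqlite3

# In-memory SQLite stands in for Redshift; the rows are made-up samples.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE ProductRecords (personid INTEGER, producttype TEXT, startdate TEXT, enddate TEXT);
CREATE TABLE ActionRecords (personid INTEGER, actiontype TEXT, actiondate TEXT);
INSERT INTO ProductRecords VALUES (1, 'gold',   '2015-01-01', '2015-06-30');
INSERT INTO ProductRecords VALUES (2, 'silver', '2015-01-01', '2015-03-31');
INSERT INTO ActionRecords VALUES (1, 'call',  '2015-02-15');  -- inside person 1's product range
INSERT INTO ActionRecords VALUES (2, 'email', '2015-05-01');  -- person 2 has a product, but not on this date
INSERT INTO ActionRecords VALUES (3, 'visit', '2015-02-01');  -- person 3 has no product at all
""")

# Left join with the date check inside ON, so unmatched actions are kept
# with a NULL product type instead of being filtered out.
rows = conn.execute("""
SELECT a.personid, a.actiontype, a.actiondate, p.producttype
FROM ActionRecords AS a
LEFT JOIN ProductRecords AS p
  ON p.personid = a.personid
 AND a.actiondate BETWEEN p.startdate AND p.enddate
ORDER BY a.personid
""").fetchall()

for row in rows:
    print(row)
```

All three sample actions come back: the first with its product type, the other two with a NULL product type.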

Alteryx doesn't yet allow conditional joins, so I do the following:

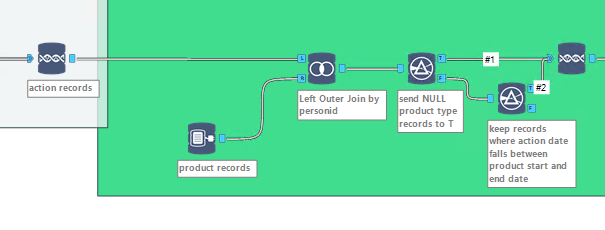

1. Use the In-DB Join tool (left outer) to join the two tables by personid, with the action records on the left so an action is still kept if there is no product record for its personid.

2. Use a Filter tool on the results from step 1 to send the records with a NULL product type to the T output.

3. Send the records from the F output in step 2 to another Filter tool, so only records where the action date falls between startdate and enddate are kept.

4. Union the T output from step 2 with the results of the filter in step 3.

It works fast up until the union in step 4.

Even using a small subset of data (fewer than 10,000 rows), it takes at least 5 minutes for the Union tool to show as complete. It must be the way the nested SQL statements are being created in the background?
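The four steps above can be written out by hand as nested SQL to check what they return. This is only a sketch: it is not the SQL that Alteryx actually generates, it runs on in-memory SQLite rather than Redshift, and the sample rows and space-free column names are assumptions.

```python
import sqlite3

# In-memory SQLite stands in for Redshift; the rows are made-up samples.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE ProductRecords (personid INTEGER, producttype TEXT, startdate TEXT, enddate TEXT);
CREATE TABLE ActionRecords (personid INTEGER, actiontype TEXT, actiondate TEXT);
INSERT INTO ProductRecords VALUES (1, 'gold',   '2015-01-01', '2015-06-30');
INSERT INTO ProductRecords VALUES (2, 'silver', '2015-01-01', '2015-03-31');
INSERT INTO ActionRecords VALUES (1, 'call',  '2015-02-15');  -- product active on this date
INSERT INTO ActionRecords VALUES (2, 'email', '2015-05-01');  -- product exists, but not on this date
INSERT INTO ActionRecords VALUES (3, 'visit', '2015-02-01');  -- no product for this person
""")

# Step 1: left outer join by personid only.
joined = """
SELECT a.personid AS personid, a.actiontype AS actiontype, a.actiondate AS actiondate,
       p.producttype AS producttype, p.startdate AS startdate, p.enddate AS enddate
FROM ActionRecords AS a
LEFT JOIN ProductRecords AS p ON p.personid = a.personid
"""

# Steps 2-4: split on NULL product type, date-filter the rest, union both branches.
query = f"""
SELECT personid, actiontype, producttype
FROM ({joined})
WHERE producttype IS NULL                       -- step 2: T output
UNION ALL
SELECT personid, actiontype, producttype
FROM ({joined})
WHERE producttype IS NOT NULL                   -- step 2: F output
  AND actiondate BETWEEN startdate AND enddate  -- step 3: date range filter
ORDER BY personid
"""

rows = conn.execute(query).fetchall()
for row in rows:
    print(row)
```

With these sample rows only persons 1 and 3 are returned. The action for person 2, which joined to a product row and then failed the date filter, does not appear in the output.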

I've also tried doing something similar by using multiple joins and came across same bottleneck.

I'm also getting performance bottlenecks when using In-DB unions later on in the workflow.

Any ideas? It would be great if it was possible to see what SQL statements are being generated in the background which could assist in figuring out where the problem is.

Correction: the above would lose records if an action record was joined to a product record but was then discarded for failing the date check in the filter tool.

I tried doing it this way, but got the same performance issue on the second join tool:

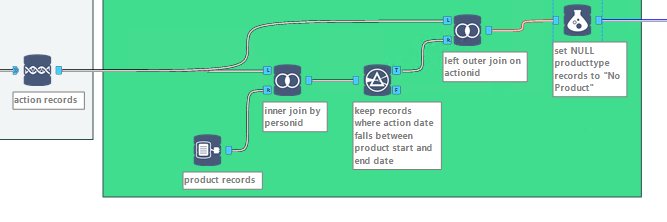

1. Use the In-DB Join tool (inner) to join the two tables by personid.

2. Use a Filter tool on the results from step 1, so only records where the action date falls between startdate and enddate are kept.

3. Use the In-DB Join tool (left outer) to join by actionid, with the original list of action records on the left, so actions that couldn't be joined to a product by personid + date range are still kept.
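The three steps above can be sketched as one nested query: an inner join plus date filter to find the matches, then a left outer join from the full action list back to those matches by actionid. As before, this is a hand-written illustration on in-memory SQLite, not the SQL Alteryx generates. The sample rows, the `actionid` values, and the space-free column names are assumptions.

```python
import sqlite3

# In-memory SQLite stands in for Redshift; the rows are made-up samples.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE ProductRecords (personid INTEGER, producttype TEXT, startdate TEXT, enddate TEXT);
CREATE TABLE ActionRecords (actionid INTEGER, personid INTEGER, actiondate TEXT);
INSERT INTO ProductRecords VALUES (1, 'gold',   '2015-01-01', '2015-06-30');
INSERT INTO ProductRecords VALUES (2, 'silver', '2015-01-01', '2015-03-31');
INSERT INTO ActionRecords VALUES (101, 1, '2015-02-15');  -- product active on this date
INSERT INTO ActionRecords VALUES (102, 2, '2015-05-01');  -- product exists, but not on this date
INSERT INTO ActionRecords VALUES (103, 3, '2015-02-01');  -- no product for this person
""")

rows = conn.execute("""
SELECT a.actionid, a.personid, a.actiondate, m.producttype
FROM ActionRecords AS a
LEFT JOIN (
    -- Steps 1 and 2: inner join by personid, keep only rows inside the date range.
    SELECT a2.actionid AS actionid, p.producttype AS producttype
    FROM ActionRecords AS a2
    INNER JOIN ProductRecords AS p ON p.personid = a2.personid
    WHERE a2.actiondate BETWEEN p.startdate AND p.enddate
) AS m
  ON m.actionid = a.actionid   -- step 3: join back to every original action
ORDER BY a.actionid
""").fetchall()

for row in rows:
    print(row)
```

Here all three sample actions are kept, including action 102, whose person has a product that was not active on the action date.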

update:

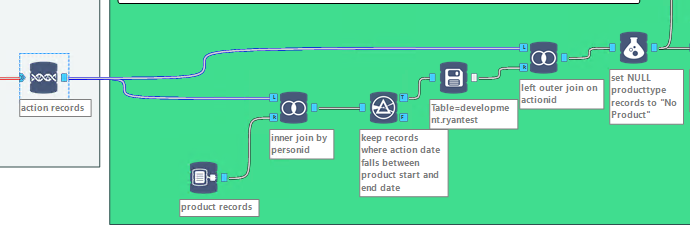

Firslty pushing the results of the filter tool, to a "Write In DB" tool prior to the second join solves the performance bottleneck issue on the second join

This isn't ideal though, because when the workflow is to be run normally, there will be hundreds of millions of rows going to it.

The solution ended up being to get the settings of our Redshift user changed so that the first schema in the priority order was one where we have CREATE TABLE access.

This was required because pushing data to a temp table using the "create temp table" option in the "Write Data InDB" tool actually creates a permanent table in Redshift. This has been emailed to Alteryx client services.

Thanks to Kane Glendenning for pointing us in the right direction: the In-DB tool uses the Redshift bulk loader for write actions, which appears to require CREATE TABLE access on the schema (access we didn't have on the schema that used to be first priority for our user).

Also, info on how to see the SQL generated in the background: http://community.alteryx.com/t5/Data-Preparation-Blending/View-SQL-Generated-by-In-DB-Tools/m-p/5682...
