Alteryx Designer Desktop Discussions

Error transferring data: Failure when receiving data from the peer

Hello Community,

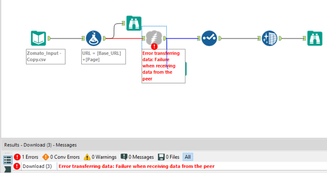

I had built a couple of workflows to scrape a few websites.

All of these worked, and I was able to extract the data I intended to.

A few months later, when I try to run these workflows (without any modification), I get the error below:

Error: Download (3): Error transferring data: Failure when receiving data from the peer

All the workflows are now throwing the same error at the download tool.

Could you please point me to what I should look for to fix this issue?

I have attached one of the workflows and below is the error screenshot.

Thanks and Regards,

Chaithanya

- Labels: Connectors, Download

Hi @raochaithanya,

Do you have an example URL and page, please? Unfortunately, your workflow doesn't include the CSV file, so it is not possible to test it.

Does it work better if you tick "Encode URL Text" in the Download tool?
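For context, URL encoding replaces characters that are not allowed in a URL (such as spaces) with percent escapes. The snippet below is only an illustrative Python sketch of that idea using the standard library. It is an assumption that the "Encode URL Text" option behaves in a similar way, and both the helper name `encode_url_text` and the example URL are made up for illustration.

```python
from urllib.parse import quote


def encode_url_text(url):
    """Percent-encode unsafe characters while keeping URL delimiters intact."""
    # Characters listed in `safe` are left untouched so the URL structure
    # (scheme, path separators, query delimiters) survives the encoding.
    return quote(url, safe=":/?=&")


if __name__ == "__main__":
    # Hypothetical URL containing a space, used only for illustration.
    print(encode_url_text("https://example.com/search?q=tampa bay"))
```

Note that a URL which is already percent-encoded would be encoded a second time by this sketch (`%` becomes `%25`), so encoding only helps when the raw URL contains unsafe characters.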

Thanks,

Paul Noirel

Customer Support Engineer

Hello Community,

Any suggestions or pointers to fix it?

Thanks in advance.

Best Regards,

Chaithanya

Hi @raochaithanya,

I have played a bit with your workflow. Something seems to prevent the Download tool from loading the web page.

I then tried the following, in case the Run Command tool could be a better option in this particular case:

Test 1 - Curl (version 7.55.1)

    C:\>curl https://www.zomato.com/tampa-bay/restaurants?page=1
    curl: (56) Send failure: Connection was reset

=> It didn't work. This is consistent, as the Download tool uses libcurl in the background.

Test 2 - PowerShell (tested with PowerShell 5.0, but it should work with version 3.0 or later)

    PS C:\temp> invoke-webrequest -Uri "https://www.zomato.com/tampa-bay/restaurants?page=1"
    invoke-webrequest : The underlying connection was closed: An unexpected error occurred on a receive.
    At line:1 char:1
    + invoke-webrequest -Uri "https://www.zomato.com/tampa-bay/restaurants? ...
    + ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~
    + CategoryInfo : InvalidOperation: (System.Net.HttpWebRequest:HttpWebRequest) [Invoke-WebRequest], WebException
    + FullyQualifiedErrorId : WebCmdletWebResponseException,Microsoft.PowerShell.Commands.InvokeWebRequestCommand

Test 3 - Python

    C:\>python
    Python 3.6.2 |Anaconda, Inc.| (default, Sep 19 2017, 08:03:39) [MSC v.1900 64 bit (AMD64)] on win32
    Type "help", "copyright", "credits" or "license" for more information.
    >>> from urllib.request import urlopen
    >>> html = urlopen("https://www.zomato.com/tampa-bay/restaurants?page=1")
    Traceback (most recent call last):
    File "<stdin>", line 1, in <module>
    [...]
    v = self._sslobj.read(len, buffer)
    TimeoutError: [WinError 10060] A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond
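To tell these failure modes apart from a script rather than by reading each error by hand, a minimal Python sketch along the following lines could be used. It was not part of the tests above: the helper names `classify_error` and `probe` and the label strings are hypothetical, and the sketch only reports what kind of failure occurred, not why the server behaves that way.

```python
import socket
import urllib.error
import urllib.request


def classify_error(exc):
    """Map an exception raised while fetching a URL to a coarse failure label."""
    # HTTPError is a subclass of URLError, so it has to be checked first.
    if isinstance(exc, urllib.error.HTTPError):
        return f"http_error_{exc.code}"
    # URLError usually wraps the underlying socket-level cause in .reason.
    if isinstance(exc, urllib.error.URLError):
        if isinstance(exc.reason, BaseException):
            return classify_error(exc.reason)
        return "url_error"
    if isinstance(exc, ConnectionResetError):
        return "connection_reset"
    if isinstance(exc, ConnectionRefusedError):
        return "connection_refused"
    # socket.timeout is a separate class on older Python 3 versions.
    if isinstance(exc, (socket.timeout, TimeoutError)):
        return "timeout"
    return "other"


def probe(url, timeout=10.0):
    """Try to open `url` once and return 'ok_<status>' or a failure label."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return f"ok_{response.status}"
    except Exception as exc:  # broad on purpose: this is a diagnostic helper
        return classify_error(exc)
```

Calling `probe(...)` on a page from a workflow that still works and on the failing page should show whether the failure is specific to one site (a reset or timeout on one URL only) or affects every request from the machine.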

I am sorry, but something about the website seems to interfere with traditional methods of web scraping, or even plain access.

I have not tried the Windows version of wget, but I am afraid I would obtain similar results.

You mentioned that you had managed to download the page in the past. Maybe they have updated the website or the servers in a way that is causing issues.

If I come up with a solution, I will post it here.

Kind regards,

Paul Noirel

Customer Support Engineer

Hi Paul,

Thanks for the detailed tests.

OK, I had a hunch that was the case as well, as I have other similar workflows built in the same fashion that still extract the data.

Thanks again!

Best Regards,

Chaithanya

Is this solved?

If not, are you behind a firewall, or do you work in an organization that has a proxy set up in your LAN connection?

Patrick - That is the case for me! Do you have a solution?

It could be this:

https://community.alteryx.com/t5/Data-Sources/Download-Fail-Proxy-Authentication-issue/m-p/764#M2

Or you may have to just disable the proxy.

If the proxy address is in your machine's connection settings you should be able to just disable it.

To do that:

Internet Explorer --> Tools (or the gear icon) --> Internet Options --> Connections --> LAN Settings --> Clear all settings and uncheck the boxes --> Click OK --> Click Apply, then OK. Now, try again.
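Before changing any settings, it may help to check whether a proxy is actually being picked up on the machine. The following is a minimal Python sketch using only the standard library; the helper names `describe_proxies` and `build_direct_opener` are hypothetical. `urllib.request.getproxies()` reports the proxy settings Python detects (environment variables, or the system settings on Windows), and passing an empty dictionary to `ProxyHandler` builds an opener that bypasses them for a single test request.

```python
import urllib.request


def describe_proxies(proxies):
    """Render a scheme -> proxy mapping as a short human-readable string."""
    if not proxies:
        return "no proxy configured"
    return ", ".join(
        f"{scheme} -> {address}" for scheme, address in sorted(proxies.items())
    )


def build_direct_opener():
    """Return an opener that ignores any auto-detected proxy settings."""
    # An empty mapping tells ProxyHandler not to use any proxy.
    return urllib.request.build_opener(urllib.request.ProxyHandler({}))


if __name__ == "__main__":
    # Show what Python detects on this machine; no request is made here.
    print(describe_proxies(urllib.request.getproxies()))
```

If a proxy is listed, `build_direct_opener().open(url, timeout=10)` can be compared with a normal `urllib.request.urlopen(url, timeout=10)` call to see whether the proxy is what causes the failure.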

Hi Patrick,

No, I am not using any proxy or any firewall service.

As I mentioned, I had developed 4-5 workflows, all of which used to work.

Now, only one of them fails with the above error and, as @PaulN mentioned in his post, I think it might be because the website is now somehow blocking the read requests.

Thanks,

Chaithanya
