General Discussions

Discuss any topics that are not product-specific here.

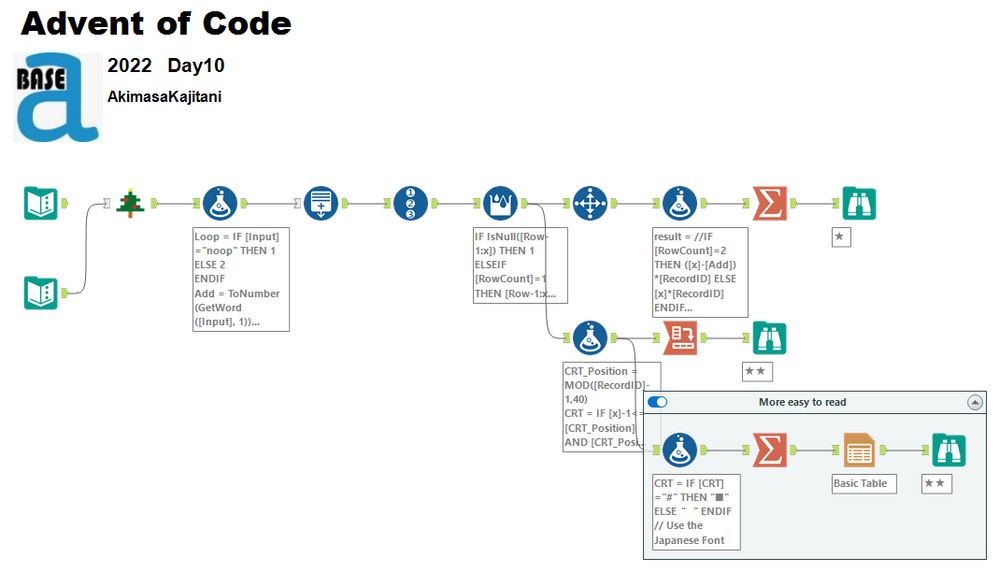

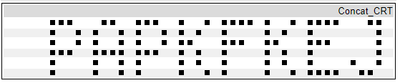

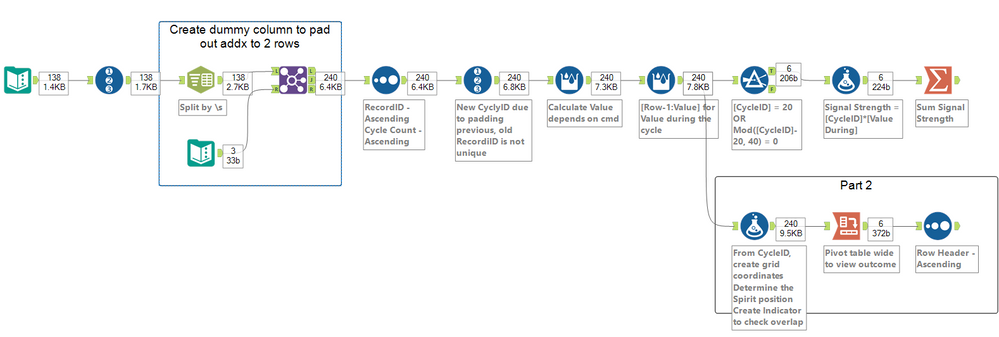

Advent of Code 2022 Day 10 (BaseA Style)

The hardest part was reading part two of the question.

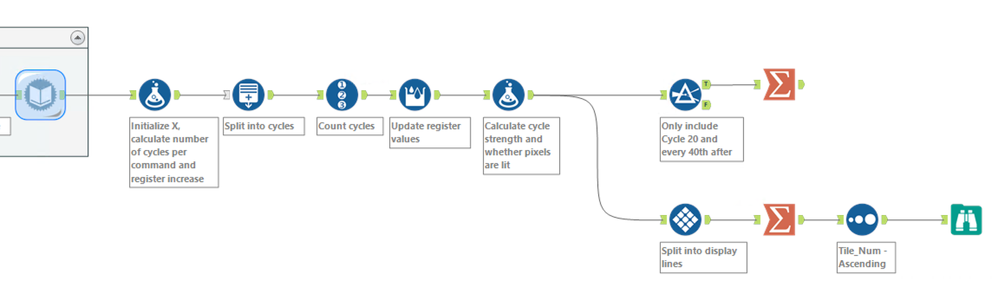

A technical and complex description for a comparatively straightforward challenge. This one is solvable with only core-level tools.

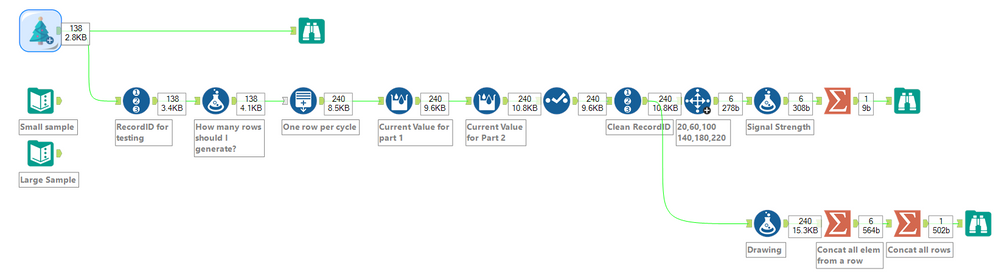

I created a GIF of my solution for part 2.

On first read I thought it was an easy question, but for some reason it took me much longer than expected. My solution is similar to the others, nothing too exciting.

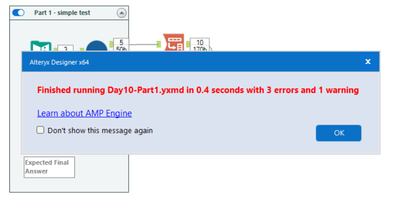

Surprisingly, no macros were needed today; the hardest part was understanding part 2 🙄

This is not a solution post. It's about how to think about building software that is reliable, stable, and super quick to deploy with zero UAT; in other words, Test Driven Development.

So - we'll do this together on day 10's challenge.

Build Test Data

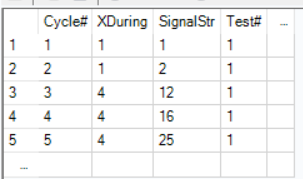

First, start by capturing some test data that reflects our current understanding of the problem.

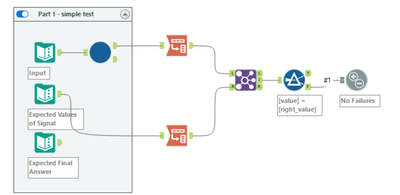

Build a failing test:

Now you want to build a failing test. This seems like a silly idea - why build a failing test? Well - what you want is to know whether or not your test process is actually working, not just telling you what you want to hear :-)

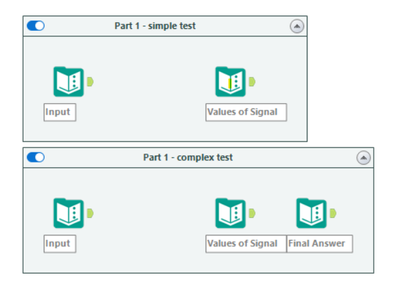

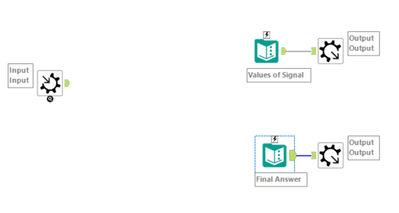

So - we take this test data, and put it through an empty shell of code, and then build the piece that checks the results.

At this stage, our solution is an empty shell - so all of our tests should fail.

Build an empty shell solution that gives the wrong answer

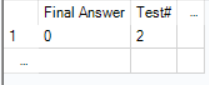

Now build the tester.

Run it to make sure you get a failure.
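To make the Red step concrete outside Alteryx, here is a minimal Python sketch. It assumes the Day 10 rules from the puzzle text (X starts at 1; `noop` takes one cycle; `addx V` takes two cycles and changes X only after the second). The names `register_values` and `run_tests` are hypothetical, the first test case is the puzzle's small example, and the second case is made up for illustration. Because the solution is still an empty shell, every test should fail.

```python
# Test data: (program, expected value of X during each cycle).
# The first case is the small example from the Day 10 puzzle text;
# the second is a hypothetical extra case written for this sketch.
TEST_CASES = [
    (["noop", "addx 3", "addx -5"], [1, 1, 1, 4, 4]),
    (["addx 2", "noop"], [1, 1, 3]),
]


def register_values(program):
    """Empty shell: deliberately returns the wrong answer for now."""
    return []


def run_tests(solution):
    """Run every test case and return the ones that failed."""
    failures = []
    for program, expected in TEST_CASES:
        actual = solution(program)
        if actual != expected:
            failures.append((program, expected, actual))
    return failures


failures = run_tests(register_values)
print(f"{len(failures)} of {len(TEST_CASES)} tests failed")  # Red: 2 of 2
```

Seeing both tests fail proves the tester can actually say "no", which is the whole point of starting Red.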

Where are we now?

If you have done this right, you now have:

- Good test data that reflects the solution we WANT

- A good way of testing whether our software does this right

- An empty shell solution that does not work properly

The last 2 steps:

Red->Green: Now that you are confident you can immediately tell whether what you built is working, you can build your solution iteratively and quickly, testing as you go. Build until your test cases pass, and then stop.
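A minimal Python sketch of the Green step, assuming the Day 10 rules (X starts at 1; `noop` takes one cycle; `addx V` takes two cycles and changes X only after the second). `register_values` and the second test case are hypothetical; the first case is the puzzle's small example. The loop is written the plain, straightforward way: just enough to turn the tests green, and no more.

```python
TEST_CASES = [
    (["noop", "addx 3", "addx -5"], [1, 1, 1, 4, 4]),  # puzzle's small example
    (["addx 2", "noop"], [1, 1, 3]),                    # hypothetical extra case
]


def register_values(program):
    """Return the value of X *during* each cycle (index 0 is cycle 1)."""
    x = 1
    values = []
    for line in program:
        parts = line.split()
        if parts[0] == "noop":
            values.append(x)           # one cycle, X unchanged
        elif parts[0] == "addx":
            values.append(x)           # first cycle of addx
            values.append(x)           # second cycle, X still unchanged
            x += int(parts[1])         # X changes only after both cycles
        else:
            raise ValueError(f"unknown instruction: {line!r}")
    return values


all_green = all(register_values(p) == expected for p, expected in TEST_CASES)
print("Green" if all_green else "Red")  # prints "Green"
```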

Refactor: Finally, when you're done, you look around at other people's solutions and say "Aaah, that was clever. I wish I'd done it differently." I also often find that when I've finally got a green solution, I hear myself say "Oh, that's what they meant in the requirement", so I only really understand the problem once I've solved it in a messy way. Now that you've solved it once and have test cases, you can confidently refactor (clean up / restructure) it into code you would be proud to show others and know is stable and well built. And because you have test cases, you can do this iteratively and know at any point whether your code still works. In other words, this doesn't have to be a big bang: you can clean up in small sprints with complete confidence.
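A hypothetical Python illustration of refactoring with a safety net. A first, straightforward loop (`register_values_v1`) is restructured into a generator driven by a small table of cycle counts (`register_values_v2`). Both names and the `CYCLES` table are assumptions for this sketch, which assumes the Day 10 rules (X starts at 1; `noop` takes one cycle; `addx V` takes two and changes X afterwards). The point is that the same test cases confirm the new structure still gives the same answers.

```python
from typing import Iterator, List

TEST_CASES = [
    (["noop", "addx 3", "addx -5"], [1, 1, 1, 4, 4]),  # puzzle's small example
    (["addx 2", "noop"], [1, 1, 3]),                    # hypothetical extra case
]


def register_values_v1(program: List[str]) -> List[int]:
    """The first, 'messy' version that got the tests green."""
    x, values = 1, []
    for line in program:
        parts = line.split()
        if parts[0] == "noop":
            values.append(x)
        else:  # assumes the only other instruction is addx
            values += [x, x]
            x += int(parts[1])
    return values


# Refactor: the number of cycles per instruction lives in one place.
CYCLES = {"noop": 1, "addx": 2}


def x_during_cycles(program: List[str]) -> Iterator[int]:
    """Yield X during each cycle, one cycle at a time."""
    x = 1
    for line in program:
        op, *args = line.split()
        for _ in range(CYCLES[op]):  # an unknown op raises KeyError
            yield x
        if op == "addx":
            x += int(args[0])


def register_values_v2(program: List[str]) -> List[int]:
    return list(x_during_cycles(program))


# The same tests guard the refactor: both versions must pass.
for solution in (register_values_v1, register_values_v2):
    assert all(solution(p) == expected for p, expected in TEST_CASES), solution.__name__
print("Refactor still green")
```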

Why go to all this trouble?

Several reasons, but in my mind the three major ones are:

- At any time, you know whether your solution is ready for production: there's no guesswork or human UAT. You could even schedule the tests to run automatically every time you change anything, like Google does with their test cases

- If you change HOW you build the solution, you can still be confident that it produces the required outcome. This is super important, because we often build something so complex and so hard to test that we're afraid to update it, clean it up, or do it better when we have better ideas (this cleanup is called "Refactoring")

- If you discover a new scenario that your solution didn't handle, you can just add another test case, and you'll never have to worry about that particular defect again (i.e. regression testing)
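A hypothetical Python sketch of a regression test, using the part 2 rule. It assumes the puzzle's 40-pixel-wide CRT draws column `(cycle - 1) % 40` during each 1-based cycle, and that a pixel is lit when the 3-pixel sprite centred on X covers that column. Suppose an earlier version used `cycle % 40` by mistake: the first three cases all pass for that buggy version, so a case pinning the last column of a row is added once the defect is found, and it stays in the suite for good. The function names and test values are made up for illustration.

```python
def pixel_lit(cycle: int, x: int) -> bool:
    """Part 2 rule: during `cycle` (1-based) the CRT draws column (cycle - 1) % 40,
    and the pixel is lit if the 3-wide sprite centred on X covers that column."""
    column = (cycle - 1) % 40
    return abs(column - x) <= 1


def pixel_lit_buggy(cycle: int, x: int) -> bool:
    """A hypothetical earlier version with an off-by-one in the column."""
    column = cycle % 40
    return abs(column - x) <= 1


# ((cycle, X), expected). The last case was added after the off-by-one was
# found; it now guards against that defect coming back.
PIXEL_CASES = [
    ((1, 1), True),    # column 0, sprite covers columns 0..2
    ((4, 1), False),   # column 3, just outside the sprite
    ((41, 1), True),   # first pixel of row 2 wraps back to column 0
    ((40, 1), False),  # regression case: column 39, far from the sprite
]


def failing_cases(solution):
    """Return the (cycle, X) inputs whose result differs from the expected one."""
    return [args for args, expected in PIXEL_CASES if solution(*args) != expected]


print(failing_cases(pixel_lit))        # []
print(failing_cases(pixel_lit_buggy))  # [(40, 1)]
```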

Summary:

This is the essence of Test Driven Development, also known as Red Green Refactor:

- Red: Build some failing test cases and an empty shell solution

- Green: Build software / solution until the test cases pass

- Refactor: Based on what you now understand about the problem, and ideas from others, you can change the way the solution is built and still be confident that it gives the right output

It is slower for development in competitive situations like this, but for building software or data flows that need to work reliably in your day job and in production over time (as requirements change and evolve), this is one of the best work habits to instill in your team.

I struggled a lot with part 2, as my English wasn't good enough for it. I used DeepL to translate the task and gave it a second shot later. As on the last couple of days, I'm sharing a "not cleaned up" version: it is annotated, but nothing was removed.

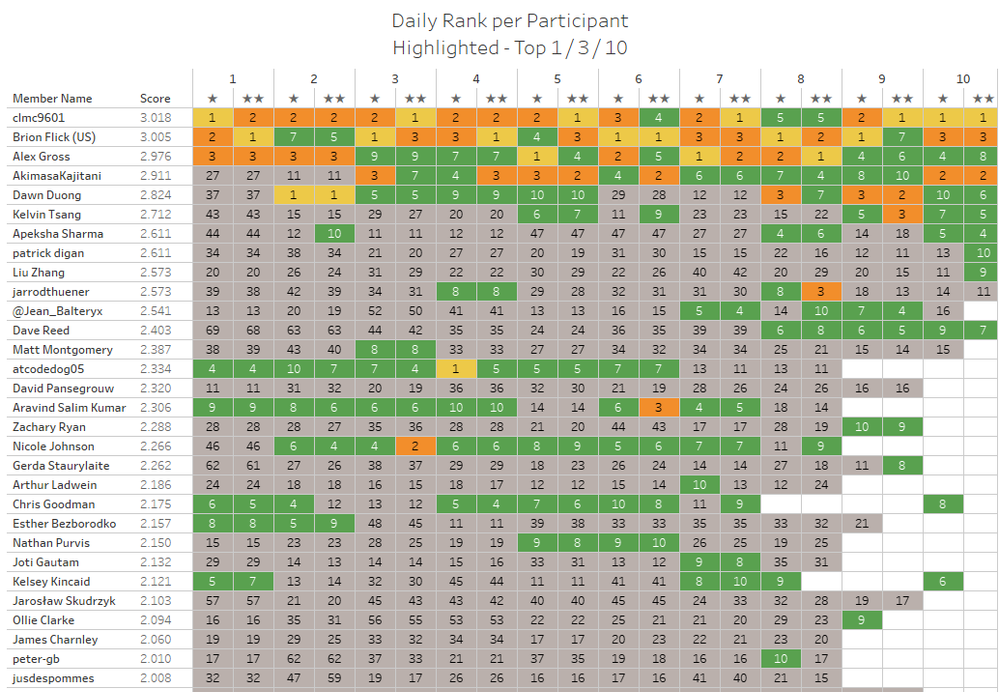

Today I tried another type of chart in Tableau on the AoC leaderboard dataset: a highlighted map of the daily ranks per part :-)
