Last weekend was the climax of nearly two months' work as part of my Alteryx for Good project. This work has encompassed huge amounts of data preparation (particularly using open data that was recently released by the UK’s Department for Environment, Food and Rural Affairs, or DEFRA), spatial analytics and advanced analytics. All this hard work culminated in a 12-hour focussed data dive to show the charities involved the ‘art of the possible’ in terms of using such amazing data sources in combination with analytics.

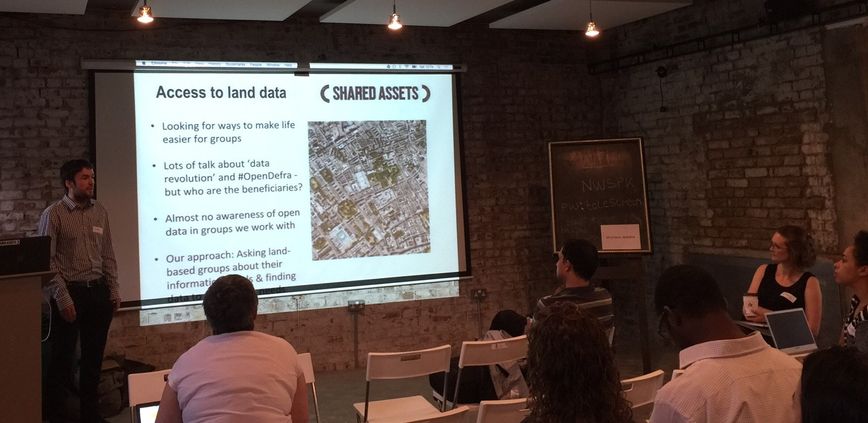

First, a bit of background – the Ecological Land Cooperative (ELC) is a small charity that aims to address the lack of affordable sites for ecological land-based livelihoods in England. Their core focus is the creation of small clusters of residential smallholdings for food production: protecting the environment and reducing greenhouse gas emissions by reducing fossil fuel usage. Shared Assets is a think-tank that offers practical advice to charities, landowners and communities who want to manage land as a sustainable and productive asset. The Shared Assets team were particularly interested in taking the initial learnings from the ELC data dive and applying them more widely across the UK.

Finally, DataKind are a charity that bring together data scientists to solve data-related problems for charities in focussed ‘data dives’, helping those charities make the most of the data assets that are available to them.

The Brief

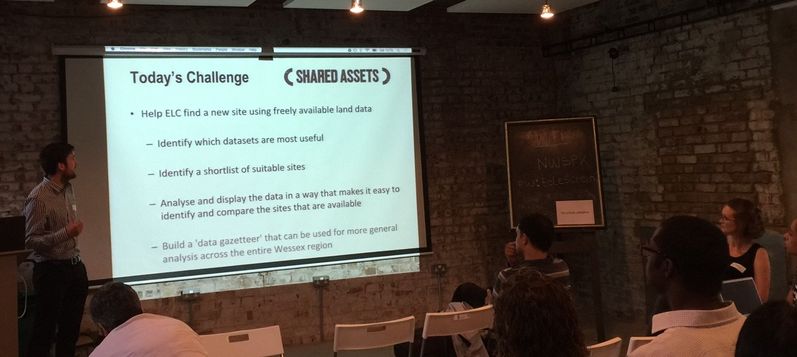

ELC recently purchased its second plot of land in England in order to set up a smallholding community. This process was extremely manual, time-consuming and expensive with many false leads and sites being discovered to be unsuitable only at a late stage in the purchase process. ELC were looking to use the data dive to see if it was possible to optimize this cycle so that automatic filtering could be applied to pre-select suitable locations for further investigation.

Shared Assets had been working closely with DEFRA on the release of over 8,000 data sets related to the environment. By combining these with other open data sources (such as Ordnance Survey), could the data dive quickly identify any proverbial needles in the data haystack that would really add value to the site-selection process?

The Preparation

Over the six weeks leading up to the data dive itself, the three data ambassadors assigned to lead the programme (including myself) worked on evaluating the most likely candidates from the extensive catalogue of open data available from DEFRA. Most of these sources weren’t too relevant (annual expenditure on stationery for a DEFRA office in Dorset?!) but using Alteryx we found some real nuggets, including:

- Detailed soil data for chemistry (pH values, indicating how acidic or alkaline the soil was, nutrients present in the topsoil, etc.) and physical characteristics (rock, environment types and the presence of flowing water under the earth in aquifers).

- Climate data (rain, snow, frost and wind)

- Agricultural land classification (hugely important for the farmland types)

- Information on Sites of Special Scientific Interest

- Locations of nearby landfill sites

- Flood warning zones

- Noise zones (especially near roads or rail lines)

- Contour lines for height above sea level

We also found data on land plots currently for sale and historical price-paid data.

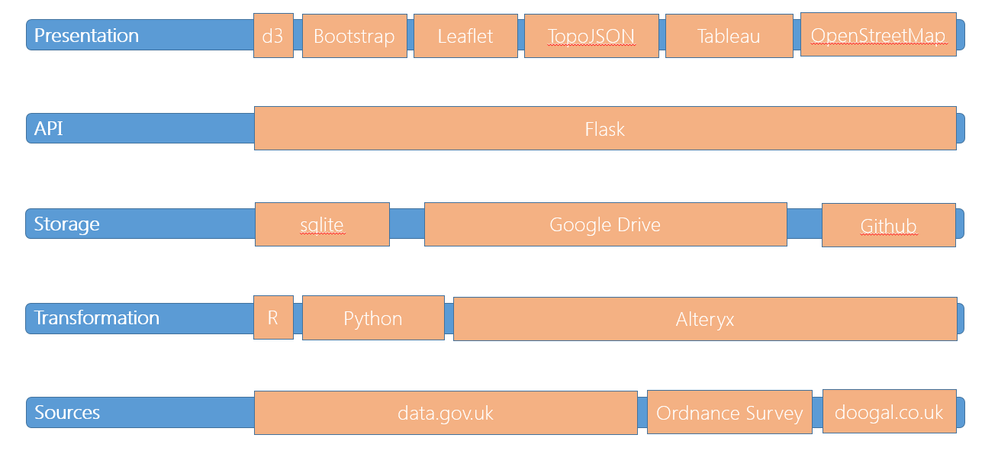

What made the data preparation challenging for the traditional data scientists in the team (using R, Python, etc.) was the spatial aspect of the data. Most of the open data was provided in ESRI SHP format, which was incredibly easy to load into Alteryx, and with tools like Spatial Match, Spatial Process and Spatial Info the data readily gave up its secrets. In contrast, for R and Python this data required much more coaxing, often through the use of many intermediate modules such as OGR.

A great blog post (http://blog.landregistry.gov.uk/inspire-index-polygons/) helped identify a data source for each unique land parcel in the UK, which acted as the lowest level of granularity in our work. This was a really large dataset, so some pre-processing (focussing on the Wessex area of south west England, and only retrieving land parcels of between 10 and 30 acres) was needed to keep the processing to a reasonable level on the day.
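
For readers who want to see what the size filter amounts to, here is a minimal sketch in plain Python. It is not the Alteryx workflow we actually used: the function names and the example parcels are made up, and it assumes each parcel is a simple polygon whose coordinates are already in metres on a projected grid (not latitude/longitude).

```python
# Hypothetical sketch: keep only land parcels between 10 and 30 acres.
# Assumes each parcel is a simple polygon ring with coordinates in metres
# on a projected grid, not latitude/longitude degrees.

SQUARE_METRES_PER_ACRE = 4046.8564224  # international acre


def polygon_area_m2(ring):
    """Return the area of a simple polygon using the shoelace formula."""
    total = 0.0
    n = len(ring)
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]  # wrap around to close the ring
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def filter_parcels_by_acres(parcels, min_acres=10.0, max_acres=30.0):
    """Return (parcel_id, acres) pairs whose area falls within the range."""
    kept = []
    for parcel_id, ring in parcels.items():
        acres = polygon_area_m2(ring) / SQUARE_METRES_PER_ACRE
        if min_acres <= acres <= max_acres:
            kept.append((parcel_id, round(acres, 2)))
    return kept


# Made-up rectangular parcels (side lengths in metres).
example_parcels = {
    "small": [(0, 0), (100, 0), (100, 100), (0, 100)],    # 1 hectare
    "medium": [(0, 0), (300, 0), (300, 200), (0, 200)],   # 6 hectares
    "large": [(0, 0), (1000, 0), (1000, 500), (0, 500)],  # 50 hectares
}
selected = filter_parcels_by_acres(example_parcels)
```

Only the 6-hectare parcel (just under 15 acres) survives the filter here. Real parcel geometries with holes or multiple parts would need a proper geometry library rather than this simple formula.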

After a considerable period of geospatial data munging, we produced feature data sets for all these sources in SHP, KML, GeoJSON, CSV and relational database (SQLite) formats so that everyone had the chance to work with the data in the format that best suited their individual skills on the day of the data dive itself. Alteryx was instrumental in producing clean, usable datasets with the spatial overlap between complex polygons already pre-computed so that the data scientists could simply access the information they needed.
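
To give a flavour of the "one dataset, many formats" idea, the sketch below writes the same handful of records out as GeoJSON, CSV and SQLite using only the Python standard library. The field names (`parcel_id`, `soil_ph`, `flood_zone`) and the values are invented for illustration, and the real exports were produced in Alteryx with full polygon geometries rather than points.

```python
import csv
import io
import json
import sqlite3

# Made-up feature records; the field names are illustrative assumptions.
features = [
    {"parcel_id": "A1", "soil_ph": 6.5, "flood_zone": 0, "lon": -2.36, "lat": 51.38},
    {"parcel_id": "B2", "soil_ph": 5.1, "flood_zone": 1, "lon": -2.60, "lat": 51.45},
]
ATTRIBUTES = ["parcel_id", "soil_ph", "flood_zone"]


def to_geojson(rows):
    """Serialise rows as a GeoJSON FeatureCollection of points."""
    return json.dumps({
        "type": "FeatureCollection",
        "features": [
            {
                "type": "Feature",
                # GeoJSON coordinate order is longitude, then latitude.
                "geometry": {"type": "Point", "coordinates": [r["lon"], r["lat"]]},
                "properties": {key: r[key] for key in ATTRIBUTES},
            }
            for r in rows
        ],
    })


def to_csv(rows):
    """Serialise rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=ATTRIBUTES + ["lon", "lat"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_sqlite(rows, connection):
    """Load rows into a 'parcels' table on the given SQLite connection."""
    connection.execute(
        "CREATE TABLE parcels (parcel_id TEXT PRIMARY KEY, soil_ph REAL, "
        "flood_zone INTEGER, lon REAL, lat REAL)"
    )
    connection.executemany(
        "INSERT INTO parcels VALUES (:parcel_id, :soil_ph, :flood_zone, :lon, :lat)",
        rows,
    )
    connection.commit()
```

Calling `to_sqlite(features, sqlite3.connect(":memory:"))` gives a throwaway database for experimenting; pointing the connection at a file instead would produce something that can be handed around to a team.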

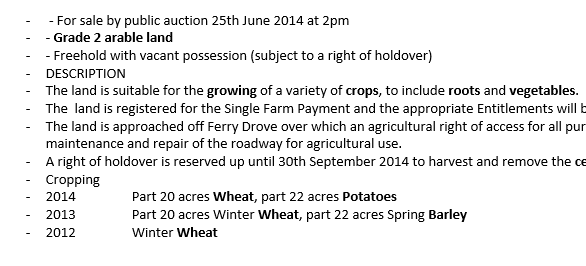

Another interesting use of Alteryx was in the initial analysis of the textual descriptions of the land currently for sale. By building a simple connector in Alteryx to communicate with an online text analytics service (http://monkeylearn.com/), it was almost trivial to extract a series of n-gram features for each plot of farmland for sale. Using an initial sample of around 400 farm properties in the UK, we trained a simple classifier on the n-gram features; it was then easy to use this model to provide a relevance score for each future farm site that was evaluated.

Quickly, it became clear that there were two real types of farmland on offer: genuine land for sale, and country-houses. The former often mentioned the grade of land and the types of crops grown, the latter mentioned details about the house itself (e.g. ‘farmhouse kitchen’, ‘bedrooms’), nearby amenities (‘yachting’, ‘local pub’) or commuting distance back to London. Thus a noisy dataset of available properties was easily filtered to retain only those plots that contained real land opportunities.
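
The idea can be illustrated without any external service. The sketch below is a stand-in, not what MonkeyLearn does internally: it extracts word unigrams and bigrams and trains a tiny naive Bayes classifier from scratch. The four training descriptions are invented, and the labels `land` and `house` are my own shorthand for the two types described above.

```python
import math
import re
from collections import Counter


def ngrams(text, max_n=2):
    """Return lower-cased word n-grams, from unigrams up to max_n-grams."""
    words = re.findall(r"[a-z']+", text.lower())
    grams = []
    for n in range(1, max_n + 1):
        for i in range(len(words) - n + 1):
            grams.append(" ".join(words[i:i + n]))
    return grams


def train(labelled_texts):
    """Count n-grams per label for a multinomial naive Bayes model."""
    counts = {}
    docs = Counter()
    for text, label in labelled_texts:
        counts.setdefault(label, Counter()).update(ngrams(text))
        docs[label] += 1
    vocabulary = set()
    for label_counts in counts.values():
        vocabulary.update(label_counts)
    return {"counts": counts, "docs": docs, "vocabulary": vocabulary}


def log_score(model, text, label):
    """Log prior plus add-one-smoothed log likelihood of text under label."""
    counts = model["counts"][label]
    total = sum(counts.values()) + len(model["vocabulary"])
    score = math.log(model["docs"][label] / sum(model["docs"].values()))
    for gram in ngrams(text):
        if gram in model["vocabulary"]:  # ignore n-grams never seen in training
            score += math.log((counts[gram] + 1) / total)
    return score


def classify(model, text):
    """Return the label with the highest score for this description."""
    return max(model["counts"], key=lambda label: log_score(model, text, label))


# Invented training examples in the spirit of the two listing types.
model = train([
    ("Grade 2 arable land with wheat and barley crops", "land"),
    ("Pasture land, grade 3, suitable for grazing and vegetable crops", "land"),
    ("Farmhouse kitchen, five bedrooms and a local pub nearby", "house"),
    ("Country house with bedrooms, yachting and easy commuting to London", "house"),
])
```

With this toy model, `classify(model, "Grade 3 land with crops")` comes out as `land`, while a description dwelling on bedrooms and a farmhouse kitchen comes out as `house`. Four examples are obviously far too few for real use; our actual sample was around 400 listings.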

The Event

On the day, the teams split into three groups – one for each data ambassador. One team decided to analyse the historical pricing and available farmland for sale data. A second decided to review the geochemical and climate data for the region. Both these groups were taking a ‘top-down’ approach in terms of starting with a defined set of properties for sale and then evaluating these sites for suitability – pruning the sites available until only a few desirable plots remained. These plots could then be reviewed individually (thus saving the charity a large amount of time by removing unsuitable sites).

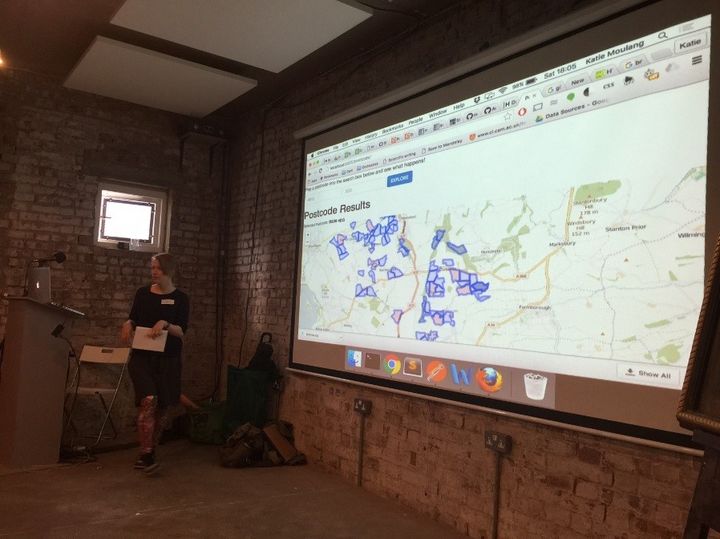

The final team (led by myself) worked on an alternative ‘bottom-up’ approach – taking the vast repository of open data that we’d collected and making it all available for faceted querying in a simple web application (DataKind is quite specific in that it doesn’t like to showcase or implicitly recommend any software vendors, so Alteryx remained quietly competent in the background at this point!)

The application used a simple architecture of sqlite/python (Flask) for the backend and HTML/Javascript/Bootstrap/d3/Leaflet for the front-end. Leaflet, in particular, was (in hindsight) a great choice as it produces OpenStreetMap maps that are very easy to customise.

My team also made the decision that it would be vital for the application to serve both humans (via a browser) and other analytical tools (e.g. Tableau) by providing the underlying data through a JSON API. We created a large number (20+) of endpoints for the API that served up the data at the postcode level and even lower at the individual land parcel level.
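
As a rough idea of what sits behind one of those endpoints, here is a framework-free sketch of the query-to-JSON step. The table layout, column names, postcodes and the `parcels_for_postcode` function are all made up for illustration; in the real application a Flask route handler would call something like this and return the string as the response body.

```python
import json
import sqlite3


def open_demo_database():
    """Create an in-memory database with a few made-up parcel rows."""
    connection = sqlite3.connect(":memory:")
    connection.row_factory = sqlite3.Row  # lets rows be converted to dicts
    connection.execute(
        "CREATE TABLE parcels (parcel_id TEXT, postcode TEXT, "
        "acres REAL, land_grade INTEGER)"
    )
    connection.executemany(
        "INSERT INTO parcels VALUES (?, ?, ?, ?)",
        [
            ("P001", "BA1 1AA", 12.5, 2),
            ("P002", "BA1 1AA", 27.0, 3),
            ("P003", "BS1 4DJ", 18.2, 1),
        ],
    )
    return connection


def parcels_for_postcode(connection, postcode):
    """Return the JSON body an endpoint might send for one postcode."""
    rows = connection.execute(
        "SELECT parcel_id, acres, land_grade FROM parcels "
        "WHERE postcode = ? ORDER BY parcel_id",
        (postcode,),  # parameterised to avoid SQL injection
    ).fetchall()
    return json.dumps({"postcode": postcode, "parcels": [dict(row) for row in rows]})
```

Keeping the database query separate from the web framework like this also makes it easy to reuse the same function for both the browser front-end and external tools.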

DataKind kept us fed and watered during the day (bagels for breakfast, pies for lunch, pizza for dinner…more carbs than anyone needs!) and we regularly came together as a whole group to present back our interim findings and decide next steps.

The Outcome

Each attendee was encouraged to talk about their findings, even if that meant showing little or no progress (the effort was applauded nonetheless). The historical price analysis revealed some underlying relationships between time of year and sale price as well as correlations between land quality and price. The text analytics work was extended and again produced a useful classifier to help segment the data for ongoing analysis. The soil analysis team managed to find several outcomes that challenged preconceptions held by the charities themselves (which was fascinating), and this has led to some actions on their part to start incorporating this additional intelligence into their general approach.

Finally, the bottom-up data platform delivered its initial visualisations with a nice, extensible approach for adding new data in the future (the charities have budget for a web developer resource that can be brought in to productionise and extend this).

Personal Thoughts

It’s been fascinating watching self-styled ‘data scientists’ in action: both as fellow data ambassadors and participants. I’ve been surprised by how rigid a definition of ‘data science’ is applied by some of these practitioners: almost a withering sigh is given if you say that you’re using something other than RStudio or Python. Yet, on the day the team members with the greatest level of success actually used Tableau, QGIS, Qlik and…yes Alteryx(!) to get their analytical pipeline complete (especially as the Python team needed to spend an hour or more ensuring that the various python libraries were all talking together correctly).

I guess my biggest takeaway from the event was that data science requires the combination of mental tools from so many disciplines: statistics, mathematics, computer science, engineering, geography, etc. as well as a good dose of inspiration, curiosity (luck?) and flexibility.

The team members who looked over my shoulder while I was running around on the event day were genuinely astonished that a tool like Alteryx could enable all these disciplines to come together through such a simple and easy-to-use environment.

I’m proud to have achieved something worthwhile. I’m proud that Alteryx supported me through Alteryx for Good and I’m proud to be an advocate of such amazing technology to solve real problems!