Engine Works

Under the hood of Alteryx: tips, tricks and how-tos.- Community

- :

- Community

- :

- Learn

- :

- Blogs

- :

- Engine Works

- :

- Guide to Building a Simple Application for Collect...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

Download the workflow below as a 7z-archive (use 7zip to open the archive).

Before running the tool, make sure to obtain your own API key from newsapi.org and to insert it into the macro workflow!

Introduction

For whatever reason, you might want to (or need to) collect, store and process unstructured text items with associated metadata. Examples could be to set up a library for research purposes or to build a tool to watch the public discourse for relevant conversation pieces. This guide will showcase a just-the-basics approach to build one possible implementation of such a tool in Alteryx (there are surely many alternative ways to do it differently or better).

To reduce the overall complexity, it makes sense to split the complete workflow into smaller components. It is then possible to explain separately what goes into a component, how the component works and what comes out of the component. A component may further contain smaller modules that can also be explained separately (apologies if this conflicts with your definition of "component" and "module").

The guide describes an actual application which was built as a proof of concept (and was then somewhat simplified for use as a tutorial). Alteryx beginners to intermediate users should be able to follow along. The structure of the guide is inspired by the great series "Guide to Creating Your Own Connector" and the presentation "Natural Language Processing with Alteryx, Spacy, and Tensorflow" by Alteryx's own JP Kabler.

Before we start

The first step is to decide which kind of item will be collected. Many kinds of items are possible, including:

- News articles

- Blog articles

- Generic websites

- Data rows from any table or database

- Social media comments such as tweets

- Text and metadata from PDF documents

- …

There are also several ways to obtain such items, including:

- Manually collected and put into a "hot folder" as individual files

- Crawled or scraped directly from websites (please mind the potential legal pitfalls of crawling and scraping yourself)

- Obtained from a provider with a searchable collection via an API

- Via a direct database connection

- …

In theory, an ideal application should be able to collect, store and process any item with an unstructured text corpus (for example a news article's headline, text, and/or summary) and associated structured metadata (date published, the author's name, etc.). However, the scope of this guide will be limited to news articles obtained through a keyword search from a provider via an API.

Before starting the implementation, decide on a provider that you want to use. This Quora question provides a good and relatively current overview of news article providers. I decided to go with News API, as their documentation is very straight forward, they seem to have a good selection of sources, and they offer a free service tier with 250 requests per every 6 hours.

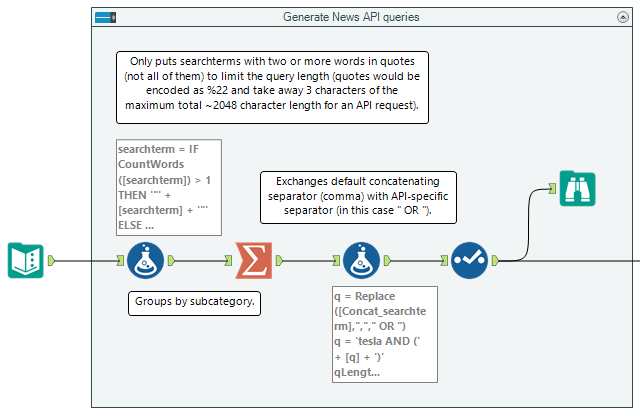

Generating query strings

Into the component goes a list of search terms. Out comes a query string suited to the API.

To search the News API collection of news articles, the API expects a query string ("q") that consists of individual or logically combined search terms. The list of search terms that make up the query string can become fairly complex depending on what you are searching for. The following is an example of how I structure my search term lists (and also the content of the Text Input tool in the above workflow):

|

searchterm |

searchterm |

searchtermRoot |

searchtermTerm |

searchterm |

|

|

1 |

good |

very good |

adjective |

positive |

positive |

|

2 |

good |

very good |

adjective |

positive |

positively |

|

3 |

good |

very good |

adjective |

positive |

positives |

|

4 |

good |

good |

adjective |

fine |

fine |

|

5 |

good |

good |

adjective |

ok |

ok |

|

6 |

bad |

very bad |

noun |

catastrophe |

catastrophe |

|

7 |

bad |

very bad |

noun |

catastrophe |

catastrophes |

|

8 |

bad |

very bad |

noun |

catastrophe |

catastrophic |

|

9 |

bad |

bad |

verb |

do bad |

do bad |

|

10 |

bad |

bad |

verb |

do bad |

did bad |

|

11 |

bad |

bad |

verb |

do bad |

does bad |

|

12 |

bad |

bad |

verb |

do bad |

doing bad |

|

13 |

bad |

bad |

verb |

do bad |

bad doings |

|

14 |

bad |

bad |

verb |

do bad |

bad doing |

|

15 |

bad |

bad |

adjective |

negative |

negative |

|

16 |

bad |

bad |

adjective |

negative |

negatively |

|

17 |

bad |

bad |

adjective |

negative |

negatives |

|

18 |

bad |

bad |

adjective |

negative |

negativity |

There are ways to optimize this (for example by using wildcards to cover all the derivatives of a root search term such as "catastroph*" or by using search operators such as "term1 within 3 words of term2"), however whether one can use them depends on the API.

The amount of search terms you can pass to an API in one request is also limited. And one can't really search for all of the search terms separately. Searching separately would mean hundreds or thousands of requests with multiple pages each. To avoid this, the search terms can be organized into categories and potentially subcategories. The search terms in each category are then concatenated into one query string via a Summarize tool. In my production workflows, I organized the subcategories so that they contain a maximum of 100 search terms each. In general, you should avoid query strings with more than 1500 characters (a maximum of 2048 characters in total for the whole API request including the URL and all parameters seems to be common).

The character limitation is also the reason why I'm only putting search terms with more than one word into parentheses:

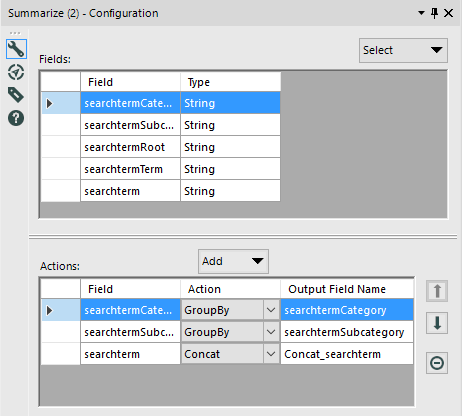

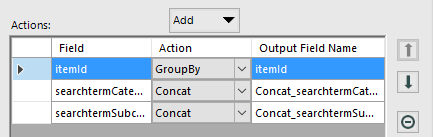

The following is the setup of the Summarize tool:

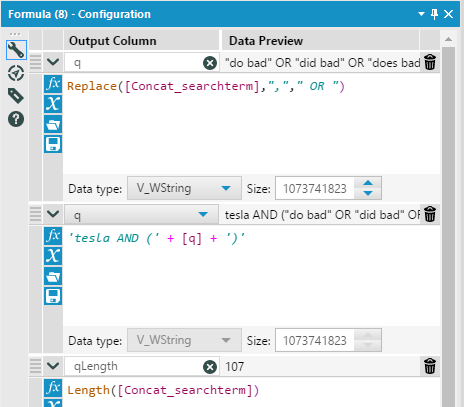

In addition, "tesla AND" was added via a formula tool so as to limit the number of results for the tutorial by specifying an additional search condition:

The search terms in the table above with the logic of the Summarize and Formula tools results in the following concatenated queries:

|

searchtermCategory |

searchterm |

Q |

qLength |

|

bad |

bad |

tesla AND ("do bad" OR "did bad" OR "does bad" OR "doing bad" OR "bad doings" OR "bad doing" OR negative OR negatively OR negatives OR negativity) |

107 |

|

bad |

very bad |

tesla AND (catastrophe OR catastrophes OR catastrophic) |

37 |

|

good |

good |

tesla AND (fine OR ok) |

7 |

|

good |

very good |

tesla AND (positive OR positively OR positives) |

29 |

Different APIs also have different advanced search parameters (see News API's as an example). For this guide, we're just using "OR", "AND", """ quotes, and "()" parentheses.

Executing API requests

In goes the previously generated query string. Out comes an API response for further processing.

The next step looks pretty simple at first, but the magnifying glass icon hides an iterative macro that gave me a headache. Either macros are not implemented as easy as they could be or I'm just not smart enough for them. Or maybe I'm just spoiled by the ease of use of most other tools in Alteryx.

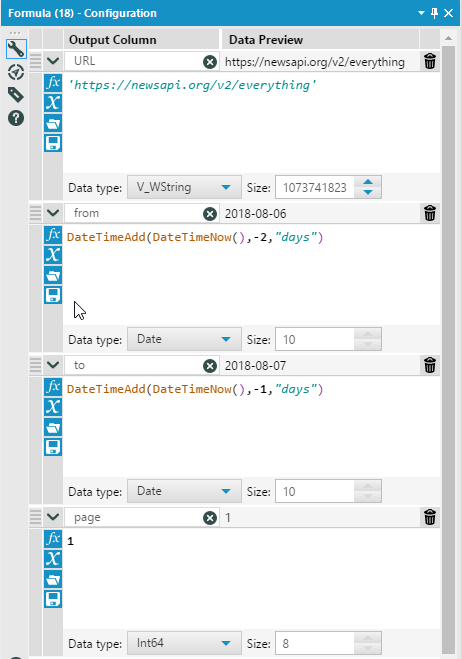

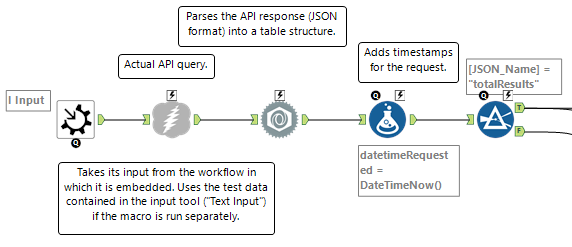

The iterative macro takes the data provided by the macro input. This consists of the queries generated in step one (including the respective categories and subcategories) as well as the contents of the Formula tool:

I'm not exactly sure whether one needs to "initialize the page number variable" outside the macro, but I'm leaving it in there because it feels right. I read several tutorials and community questions when building the macro, this tutorial was particularly helpful.

This is what the first half of the iterative macro looks like:

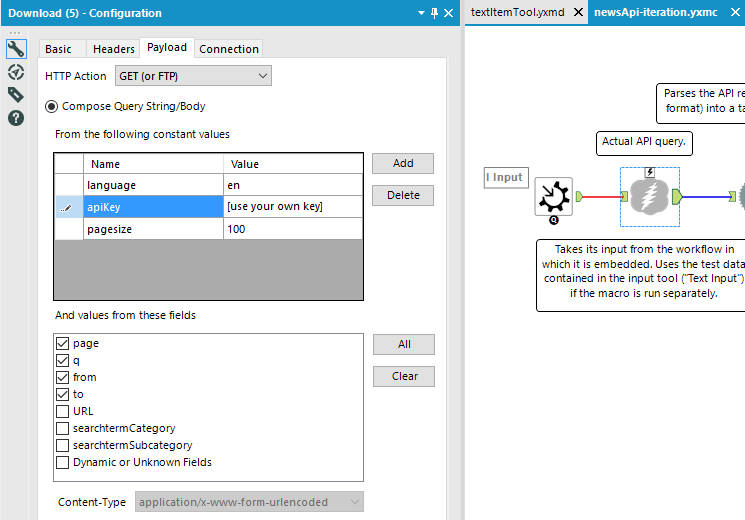

In my version of the workflow, the parameters for language, apiKey, and maximum page size are hard coded into the Download tool (the one with the lightning icon) that performs the actual API requests. One might also pass them into the macro from the workflow in which the macro is embedded.

In many cases, an API key can also be provided to the API via the request headers. This can better hide the key from potential eavesdroppers (it's an HTTP request after all) and may reduce the total length of the query URL. I'm leaving it in the query body as it's a bit easier to understand that way.

Executing the request will result in a JSON formatted answer by the API. Fortunately, Alteryx has the JSON Parse tool to convert it into table format:

{"status":"ok","totalResults":244,"articles":[{"source":{"id":null,"name":"Cleantechnica.com"},"author":"Guest Contributor","title":"Tesla’s [Ahem, Elon’s] Unconventional Approach To Media Criticism","description":"Negative media coverage is a fact of l...

becomes:

To keep track of the requests, I'm adding a timestamp via a Formula tool.

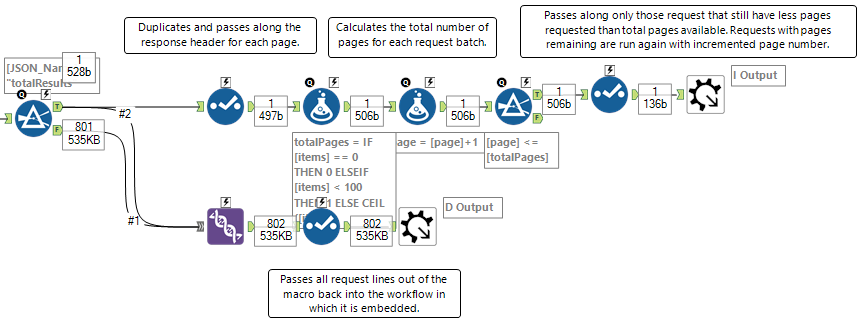

The second half of the iterative macro has two branches:

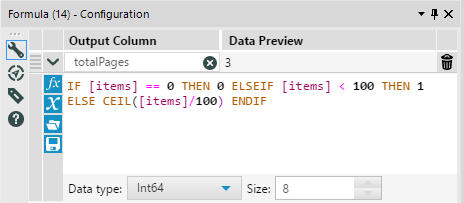

The upper stream takes only those lines that contain the values for total results per search query. If the number of results is above 100 (the maximum per page for News API), the tools calculate the number of total pages and the iterative macro then runs a certain query until all pages have been obtained. I'm making use of an IF statement and the ceiling function CEIL() to calculate the total pages. I'm not sure whether this is the most elegant way, but it seems to work:

Only those requests for which the current page value is lower than or equal to the total number of pages remain in the iteration and get passed back to the beginning for another run with page number incremented by 1.

All other lines (and the line with the total results merged back in for potential statistics and logging purposes further down the workflow) are passed outside the macro (back into the workflow above) via the bottom Output tool. There, they're cached in an Alteryx database file (.yxdb).

Hint: After a successful API request, collapse the first two toolboxes ("Disable Container") via the icon in the upper left corner to preserve your allotted number of API calls.

Converting API responses

In goes the raw response data from the API request, out come deduplicated items with associated metadata.

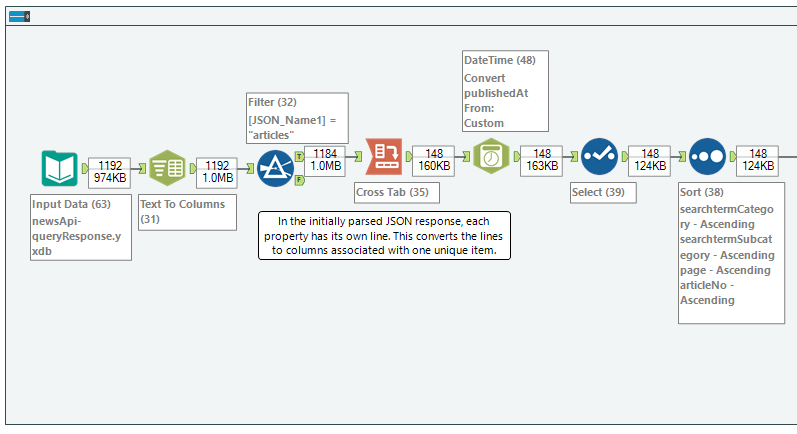

In the first half of the conversion workflow, the tabular JSON data is transformed into a much more usable regular table structure. Before this, each line is a property of an item from the JSON response, potentially split up further into sub-properties such as a list of tags within a general "tag" property.

The Cross Tab tool is the opposite of the "transpose" option in Microsoft Excel (of course, Alteryx also has a Transpose Tool). It converts the lines into columns. In the Cross Tab tool, select the properties which describe a unique item and on which to do the grouping of lines (this also means you will keep these properties as columns).

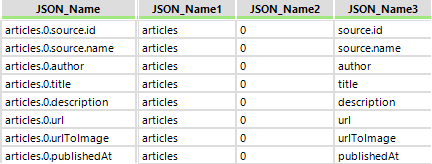

Everything except "JSON_Name2" should be clear from the descriptions above. JSON_Name2 is the part of JSON_Name that contains the article number of a certain item on a page.

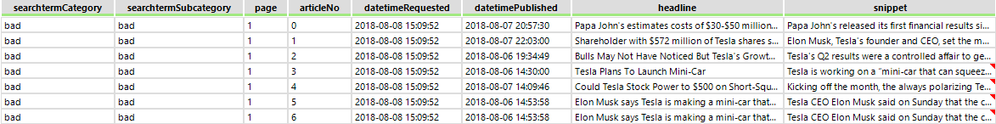

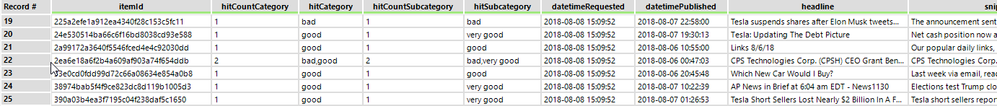

After some re-arranging and sorting, the data looks like this:

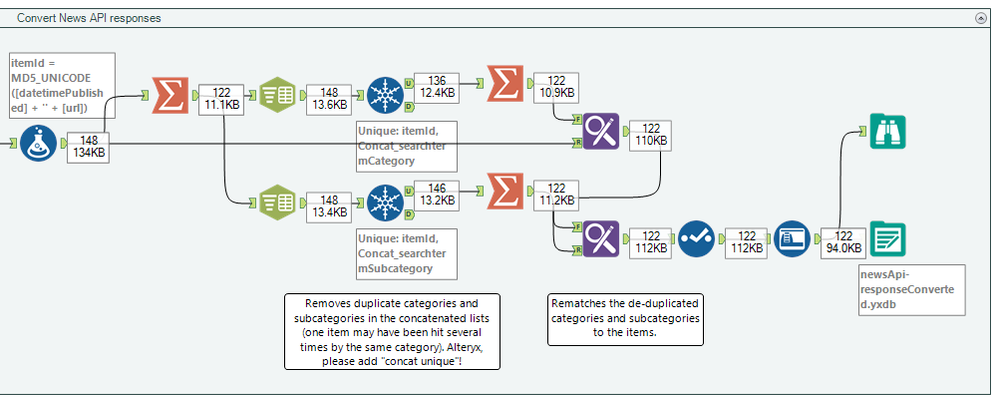

It may happen (and depending on the search terms, it may happen a lot) that articles are found through different search term subcategories. As a remediation, I calculate a unique ID for each item at the beginning of the second half of the workflow. The assumption behind this is that no article will be published via the same URL at the exact same time more than once without it being an exact duplicate. To create the ID, the URL and publication timestamp are concatenated and hashed as an MD5 checksum (this is just to shorten it, the raw concatenated string would work as well).

The Summarize tool that follows groups by item IDs and concatenates values that may differ even for the same item. After grouping, items may have duplicate category and even duplicate subcategory values. The latter may be due to the API not deduplicating according to URL and timestamp. As Alteryx does not have a "Concat Unique" option for Summarize (feature request!), the deduplication of metadata within each field must be done manually by splitting the values up into rows again, using the Unique tool and re-concatenating the unique values.

The end result is a list of items with associated metadata (see the item with duplicate categories in line 22). Of course, there will still be duplicates as the URL and the timestamp are not sufficient for content deduplication. For example, some news sites will publish one article several times to different websites in some kind of federation (which results in slightly different URLs but exactly the same content).

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.