Introduction

In light of the upcoming 2021 World Chess Championship match between Magnus Carlsen (NO) and Ian Nepomniachtchi (RU), I decided to marry my so-called analytics skills and my long-term, love-hate relationship with chess.

The marital ceremony took place exclusively in Alteryx Designer. That covered everything: inputting the data, cleansing, transforming, and blending it, and then feeding the scrubbed data into a linear regression, a gamma regression, and a boosted model.

And — wait for it — this was all done completely code free!

Before the machine learning gurus of the world come after me with pitchforks and torches, I have no doubt that this could have been whipped up as a much more thorough, intense, and accurate final prediction using a combination of TensorFlow, Keras, and scikit-learn.

The inspiration behind this side project was to showcase that it is possible to automate data manipulation and predictive modelling without being a Python whiz, holding a stats PhD, or having a 200-point IQ. All it takes is someone with the right tool and a desire to step up their analytics game: a “citizen data scientist,” if you will.

Beyond sharing the approach below, I’ve also attached the workflow itself to this post. Feel free to take a look at it and play with it yourselves. I’m sure there are many potential points of improvement. 😊

Data

The publicly available data used in this exercise came from Kaggle and consisted of three main components:

- Openings Data: a collection of 2,750 opening variations with number of games played, ECO code, win/loss % by colour, etc.

- Game Data: a collection of 158,454 professional chess games from the Chess Tempo database, including players, opening played, number of moves, result, etc.

- Player Data: a collection of information about 128 Super Grandmasters (GMs) with max and current Elo ratings, total games played, etc.

Approach & Building

With the data downloaded, I took the following three-step approach in tackling the problem:

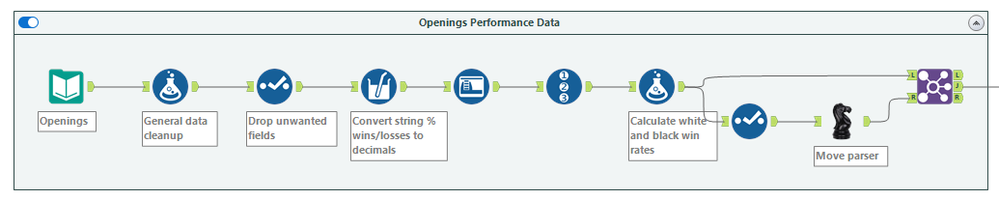

1) Cleanse the data: If you want to feed your data into any form of machine learning model, it needs to be squeaky clean. The predictive tools available in Alteryx Designer are R-based, and although every programming language comes with its own idiosyncrasies, I find that R and R-based models can be particularly finicky if your data isn’t spick-and-span.

Thankfully, Alteryx made this trivial to do. A combination of automated data cleansing, parsing, and selection tools made the cleaning of hundreds of thousands of rows of data something so simple I could teach a high schooler to do it.

(Note: That black knight tool in the bottom right corner is a macro built to parse opening theory moves into individual columns. Those columns weren't used in this analysis, but they open up the opportunity for deeper analysis in future iterations!)
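For the code-inclined, here is a rough Python sketch of what a move-parsing step like that macro could look like. It is not the macro itself: the function name `split_opening_moves`, the `ply_N` column names, and the assumption that moves arrive as a single string such as `1.e4 c5 2.Nf3 d6` are all illustrative.

```python
import re

# Matches move numbers such as "1." or "12..." so they can be stripped out.
MOVE_NUMBER = re.compile(r"\d+\.+")


def split_opening_moves(moves, max_plies=6):
    """Split an opening line into one column per half-move (ply).

    Assumption: `moves` looks like "1.e4 c5 2.Nf3 d6". Column names are
    hypothetical and chosen only for this example.
    """
    # Replace each move number with a space, then split on whitespace.
    plies = MOVE_NUMBER.sub(" ", moves).split()
    # Pad with None and truncate so every row has exactly max_plies columns.
    padded = (plies + [None] * max_plies)[:max_plies]
    return {f"ply_{i + 1}": ply for i, ply in enumerate(padded)}


row = split_opening_moves("1.e4 c5 2.Nf3 d6", max_plies=6)
print(row)
```

Padding short openings with empty values keeps every row the same width, which is what a downstream model or join would expect.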

2) Blend the data: This step involved combining all three data sets into one stream that would ultimately be used to train the model.

Once again, there’s not much to write home about here. Combining multiple disparate sources of data through one or more common identifying variables was as simple as dragging the data sources into one tool which could simulate a multi-parameter INDEX MATCH without the headache of having to write any expressions out.

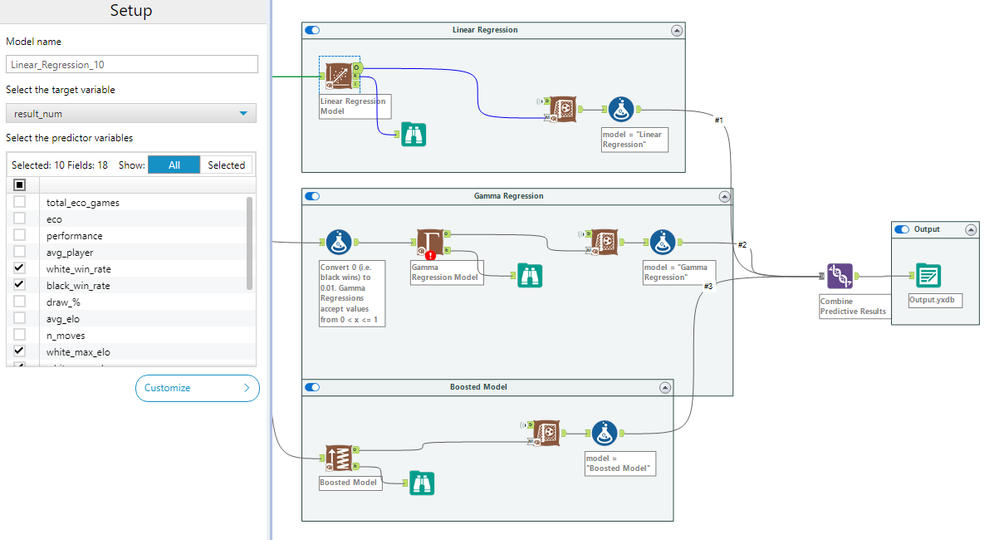
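If you are wondering what that drag-and-drop join amounts to under the hood, the sketch below shows one way to express a keyed lookup in plain Python. Everything in it is an assumption made for illustration: the helper names `index_by` and `left_join`, the column names, and the tiny made-up rows. The real Kaggle files have their own schemas.

```python
def index_by(rows, keys):
    """Build a lookup table keyed on one or more columns."""
    return {tuple(row[k] for k in keys): row for row in rows}


def left_join(left, right, left_keys, right_keys, prefix=""):
    """Attach matching columns from `right` to every row of `left`."""
    lookup = index_by(right, right_keys)
    joined = []
    for row in left:
        match = lookup.get(tuple(row[k] for k in left_keys), {})
        # Skip the key columns of the right-hand side to avoid duplicates.
        extra = {prefix + name: value
                 for name, value in match.items() if name not in right_keys}
        joined.append({**row, **extra})
    return joined


# Made-up miniature data sets, for illustration only.
games = [
    {"white": "Player A", "black": "Player B", "eco": "B90", "result": 1.0},
    {"white": "Player B", "black": "Player A", "eco": "C65", "result": 0.5},
]
players = [
    {"name": "Player A", "current_elo": 2800, "games_played": 1500},
    {"name": "Player B", "current_elo": 2750, "games_played": 1200},
]
openings = [
    {"eco": "B90", "opening_games": 4000},
    {"eco": "C65", "opening_games": 2500},
]

# One stream: games enriched with opening stats and both players' stats.
blended = left_join(games, openings, ["eco"], ["eco"])
blended = left_join(blended, players, ["white"], ["name"], prefix="white_")
blended = left_join(blended, players, ["black"], ["name"], prefix="black_")
print(blended[0])
```

Because the keys are passed as lists, the same helper handles a match on several columns at once, which is the multi-parameter INDEX MATCH idea.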

3) Create the predictive model: It may sound too simple to be true, but it really is this simple. Creating a model using the Predictive tool palette comes down to configuring two items:

a) selecting the target variable (in our case, the match result), and

b) selecting the predictor variables.

That’s it! Whether you’re creating a linear regression, a gamma regression, a forest model, a boosted model, a decision tree, or any one of the many pre-built models available in Designer, the two steps above are constant.
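For comparison, those same two choices (a target and its predictors) map onto code roughly like this. It is a deliberately stripped-down sketch and not what the Designer tools run internally: it fits an ordinary least-squares line with a single predictor, and the column names `elo_diff` and `result`, along with the five training rows, are made up for the example.

```python
def fit_simple_linear(rows, target, predictor):
    """Least-squares fit of target = intercept + slope * predictor."""
    xs = [row[predictor] for row in rows]
    ys = [row[target] for row in rows]
    n = len(rows)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def score(model, value):
    """Apply a fitted model to a new predictor value."""
    intercept, slope = model
    return intercept + slope * value


# Made-up rows: result is from White's side (1 = win, 0.5 = draw, 0 = loss),
# elo_diff is White's rating minus Black's rating.
training = [
    {"elo_diff": -100, "result": 0.0},
    {"elo_diff": -50, "result": 0.5},
    {"elo_diff": 0, "result": 0.5},
    {"elo_diff": 50, "result": 0.5},
    {"elo_diff": 100, "result": 1.0},
]

model = fit_simple_linear(training, target="result", predictor="elo_diff")

# Score the same pairing twice, once with each player as White.
as_white = score(model, 50)
as_black = score(model, -50)
print(model, as_white, as_black)
```

Swapping in a different model type changes the fitting function, but the target and predictor arguments stay the same, which is the point of the two-step setup.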

Now, it goes without saying that you shouldn’t just be throwing you-know-what at the wall and seeing what sticks when selecting your predictor variables. And if you haven’t guessed by now, yes, there’s a tool for that. The Association Analysis Tool helped me spit out a full-blown correlation matrix with bivariate associations between each pair of variables, along with the associated p-values.

In layperson's terms, it identifies which variables have a statistically significant association with the game result, which makes them sensible candidate predictors.
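To make "association plus p-value" a little more concrete, here is a small Python sketch for a single pair of variables. Note the swap: it uses a permutation test to estimate the p-value, which is a stand-in I chose for simplicity and not necessarily how the Association Analysis Tool computes its numbers. The data values and function names are made up for illustration.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def permutation_p_value(xs, ys, n_permutations=2000, seed=0):
    """Two-sided p-value: how often does shuffling ys give |r| this large?"""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    observed = abs(pearson_r(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        if abs(pearson_r(xs, shuffled)) >= observed:
            hits += 1
    # Add one to both counts so the estimate is never exactly zero.
    return (hits + 1) / (n_permutations + 1)


# Made-up data: rating difference (White minus Black) and game result.
elo_diff = [-120, -80, -60, -30, -10, 10, 30, 60, 80, 120]
result = [0.0, 0.0, 0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 1.0, 1.0]

r = pearson_r(elo_diff, result)
p = permutation_p_value(elo_diff, result)
print(r, p)
```

A small p-value here says that an association this strong rarely appears when the results are shuffled at random, which is the informal reading of "statistically significant".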

Result

Admittedly, the result here was somewhat anticlimactic: I learned that the only statistically significant predictors were both players’ current and max Elo ratings, as well as the number of games they’ve played throughout their careers (real shocker).

It does not mean that the analysis was for naught, but part of me was hoping for a juicy variable to fly in from left field and tell us that a player’s favourite pasta dish would be a determining factor in the outcome of the match. For the integrity of the analysis, it’s probably a good thing that no such variable appeared.

With diversity of results in mind, I opted to go for three different models: a linear regression, a gamma regression, and a boosted model. The last of the three was used with the intention of introducing a more modern technique, compared with the first two, which are very much traditional predictive methods. Nothing wrong with that, but variety is indeed the spice of life.

The models were now trained, locked, and loaded. All that was left was to feed the models and the final match data into the scoring tool to get our result. Because the model features included only the players’ ratings and total games, the input data was simple to put together. And since piece colour will be determined by a drawing of lots, I ran two rows of input, giving each player a turn at white and black to see if it would be a deciding factor (spoiler: it was not).

Funnily enough, the results came out almost identical across the models, each of which gave Magnus a slight edge over Nepo. Although there are a handful of tools that allow you to automate model comparison and hypothesis testing, I relied on the old school technique of eyeballing it and calling it a day when I saw how close the numbers were. Thrilling, I know.

Conclusion

All in all, it most definitely does not take a machine learning model to peg Magnus as the favourite in this match. He’s been the undisputed world champion since 2013.

That said, it’s pretty darn cool that we could quantify the extent to which he is the favourite without writing a single line of code.

I’m certain that there are plenty of improvements which could be made to the model, so feel free to leave some suggestions in the comments below! 😊

Blog teaser photo by ConvertKit on Unsplash