Data Science

Machine learning & data science for beginners and experts alike.


Predicting Employee Attrition with Alteryx Machine...


Retaining valuable employees is a critical success factor for organizations. Employee turnover lowers morale, decreases productivity, and is costly. According to Gallup [1], employee attrition costs U.S. businesses $1 trillion annually! Replacing an employee can cost from one-half to two times their annual salary, depending on job level and other factors. Further, 52% of departing employees indicated that their manager or organization could have done something to retain them. Identifying which factors drive employee attrition and predicting which employees are at risk of leaving lets an organization take corrective action that can lead to large benefits and cost savings.

Fortunately, Alteryx Machine Learning can help human resources professionals and other leaders use machine learning to complement their own understanding of which factors influence employee attrition and who is at risk of leaving, so they can take positive action.

Use Case

Susan is an analyst in her company’s human resources department. She has assembled data on employees who have left voluntarily and those who have stayed. She’ll use Alteryx Machine Learning to gain insights about her data and build machine learning models to assess its predictive power. If she can identify factors influencing attrition, she’s confident she and her HR team can drive improvements that will reduce it. And, if she can build a model with strong predictive power, her team will be able to direct their energies to retaining the employees at the greatest risk of leaving.

Data Acquisition

Susan assembled data related to a cohort of employees that voluntarily left the company and a cohort that remained with the company. A copy of her data is attached. Her data contains the following information:

- Employee Identifier

- Employee e-mail

- Salary

- Performance Rating

- Job Grade (current, or at departure)

- Job Grade upon hire

- Department

- Hire Date

- Job Level (i.e. title)

- Recruiter Identifier

- Recruiter Company

- Months Tenure

- Manager Identifier

- Termination Type

- Termination Reason

- Quit (did the employee leave the company)

Data Preparation and Exploration

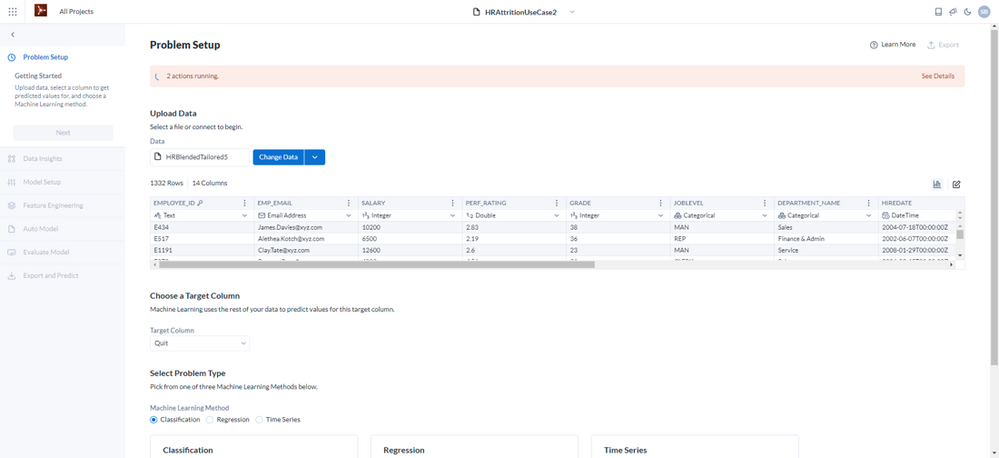

Susan loads her data into Alteryx Machine Learning. On the Problem Setup screen, she turns on data profiling to see the distribution of values in each column and which data types Alteryx Machine Learning has inferred (e.g., numeric vs. categorical). Because she is using machine learning to model who may leave the company, she selects ‘Quit’ as the target variable for the modeling process. Alteryx Machine Learning recommends a Classification method, which makes sense to Susan: her target variable ‘Quit’ is not numeric and has two values (classes), ‘Yes’ and ‘No’, so she sticks with the recommendation. Since there are only two classes, Susan’s problem is an example of binary classification.
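For readers who want to see the same framing outside the GUI, here is a minimal plain-Python sketch (not how Alteryx Machine Learning works internally). The sample values and the helper name `encode_binary_target` are hypothetical. It checks that the target really has exactly two classes and encodes ‘Yes’ (quit) as the positive class 1, the convention the confusion-matrix discussion below relies on.

```python
# Hypothetical sample of the 'Quit' column; real values would come from Susan's file.
quit_column = ["Yes", "No", "No", "Yes", "No"]


def encode_binary_target(values, positive="Yes"):
    """Map a two-class target to 1 (positive class) and 0 (negative class)."""
    classes = sorted(set(values))
    if len(classes) != 2:
        raise ValueError(f"expected exactly 2 classes, found {classes}")
    if positive not in classes:
        raise ValueError(f"positive class {positive!r} not found in {classes}")
    return [1 if v == positive else 0 for v in values]


y = encode_binary_target(quit_column)
print(y)  # [1, 0, 0, 1, 0]
```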

Susan reviews her data. She knows that fields acting as record identifiers are of no use to machine learning: because their values are unique for each record, they carry no pattern a model can learn and apply to new records. So, she inspects her EMPLOYEE_ID, RECRUITER_ID, and MANAGER_ID fields.

She recognizes that RECRUITER_ID and MANAGER_ID cannot act as record identifiers because their values are not unique across records. She’d like to know whether they influence the likelihood of quitting, so she keeps them for the modeling process. She knows that EMPLOYEE_ID is unique for every employee at her company, so she clicks its column heading and chooses 'Mark as ID Column' so that Alteryx Machine Learning will not use it during the modeling process.
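The uniqueness test Susan applies by eye can be expressed as a short check. This is an illustrative sketch with made-up rows and a hypothetical helper, `candidate_id_columns`: a column whose values are distinct on every row is flagged as a likely identifier and then excluded from the feature set, which is roughly what marking it as an ID column accomplishes.

```python
# Hypothetical rows; column names follow Susan's data, values are invented.
rows = [
    {"EMPLOYEE_ID": "E001", "RECRUITER_ID": "R1", "MANAGER_ID": "M1"},
    {"EMPLOYEE_ID": "E002", "RECRUITER_ID": "R1", "MANAGER_ID": "M2"},
    {"EMPLOYEE_ID": "E003", "RECRUITER_ID": "R2", "MANAGER_ID": "M1"},
    {"EMPLOYEE_ID": "E004", "RECRUITER_ID": "R2", "MANAGER_ID": "M2"},
]


def candidate_id_columns(rows):
    """Return columns whose values are unique on every row (likely record identifiers)."""
    columns = rows[0].keys()
    return [c for c in columns if len({r[c] for r in rows}) == len(rows)]


id_cols = set(candidate_id_columns(rows))
print(sorted(id_cols))  # ['EMPLOYEE_ID']

# Keep every non-ID column as a potential feature.
features = [{k: v for k, v in r.items() if k not in id_cols} for r in rows]
```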

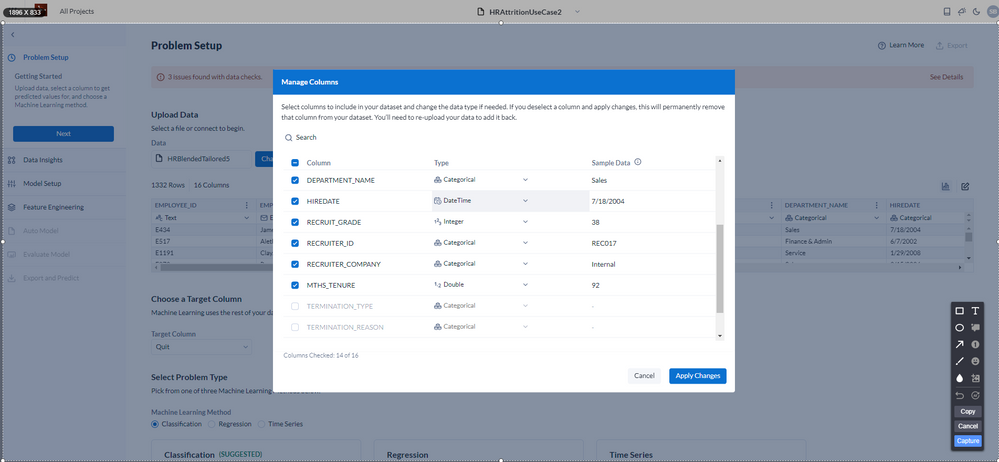

She studies the data distribution of each column and sees that TERMINATION_TYPE and TERMINATION_REASON are mostly null, because most rows are for employees who remained with the company. Mostly null columns contribute little during the modeling process. Further, since a null value in either column always corresponds to a ‘No’ in the ‘Quit’ column, these columns contain information about the target variable. This is known as target leakage, and it is detrimental to building models that generalize to new data. Lastly, she is aware that when she uses her model to make predictions for new cohorts of employees, she won’t have values for those columns. For these reasons, Susan wisely chooses to drop them.
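Both problems Susan spots (mostly null values and target leakage) can be checked mechanically. The sketch below uses hypothetical rows and helper names; `nullness_predicts_target` flags a column when simply knowing whether it is null fully determines the target, which is the blatant form of leakage described above. Subtler leakage would not be caught by a check this simple.

```python
# Hypothetical rows: None marks a missing value.
rows = [
    {"TERMINATION_TYPE": "Voluntary", "Quit": "Yes"},
    {"TERMINATION_TYPE": None, "Quit": "No"},
    {"TERMINATION_TYPE": None, "Quit": "No"},
    {"TERMINATION_TYPE": None, "Quit": "No"},
    {"TERMINATION_TYPE": "Voluntary", "Quit": "Yes"},
]


def null_fraction(rows, column):
    """Share of rows in which `column` is missing."""
    return sum(r[column] is None for r in rows) / len(rows)


def nullness_predicts_target(rows, column, target):
    """True if knowing whether `column` is null fully determines the target value."""
    targets_by_nullness = {}
    for r in rows:
        targets_by_nullness.setdefault(r[column] is None, set()).add(r[target])
    return all(len(values) == 1 for values in targets_by_nullness.values())


print(null_fraction(rows, "TERMINATION_TYPE"))  # 0.6
print(nullness_predicts_target(rows, "TERMINATION_TYPE", "Quit"))  # True
```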

Susan then notices that the HIREDATE column was not inferred as a DateTime logical type, so she changes the logical type from Categorical to DateTime.
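Changing a column's logical type to DateTime amounts to parsing its strings into date objects so that date-based features can be derived later. The post doesn't state the format of HIREDATE, so the `%m/%d/%Y` format, the sample values, and the helper name `parse_dates` below are assumptions.

```python
from datetime import datetime

# Hypothetical raw values; the actual HIREDATE format in Susan's file is not stated.
raw_hire_dates = ["03/15/2016", "11/02/2019", "07/30/2021"]


def parse_dates(values, fmt="%m/%d/%Y"):
    """Convert date strings to datetime objects (assumed month/day/year format)."""
    return [datetime.strptime(v, fmt) for v in values]


hire_dates = parse_dates(raw_hire_dates)
print(hire_dates[0].year, hire_dates[0].month)  # 2016 3
```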

Data Insights

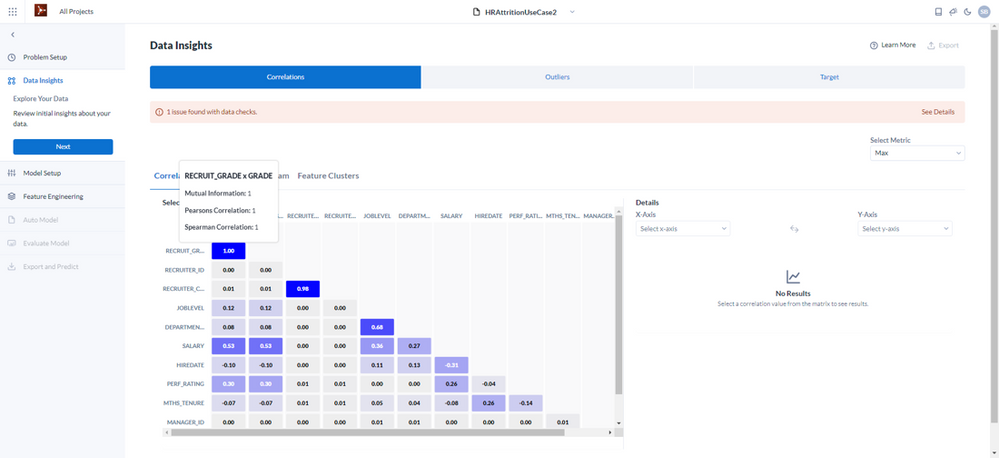

Susan proceeds to the Data Insights panel to learn how the columns in her data are correlated and whether there are problematic outliers. She discovers that GRADE and RECRUIT_GRADE are 100% correlated, as are RECRUITER_ID and RECRUITER_COMPANY. She makes a note to consider this during the feature selection step. She is also interested in what her target variable ‘Quit’ is correlated with, and sees that it has a relationship with both MTHS_TENURE and MANAGER_ID, which she looks forward to exploring further during the modeling process.
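As a rough illustration of what "100% correlated" means for the two pairs Susan found, the sketch below computes a Pearson correlation for the numeric pair and, for the categorical pair, checks whether each recruiter ID always maps to the same recruiter company. All values are hypothetical, and the Data Insights panel may use different association measures than these.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def determines(a_values, b_values):
    """True if each value in a_values always co-occurs with the same value in b_values."""
    mapping = {}
    for a, b in zip(a_values, b_values):
        if mapping.setdefault(a, b) != b:
            return False
    return True


# Hypothetical data: RECRUIT_GRADE is GRADE shifted by one, so r is 1.
grade = [3, 4, 4, 5, 6, 7]
recruit_grade = [g - 1 for g in grade]
print(round(pearson(grade, recruit_grade), 6))  # 1.0

# Hypothetical categorical pair: each recruiter works for exactly one company.
recruiter_id = ["R1", "R2", "R1", "R3"]
recruiter_company = ["Acme", "Beta", "Acme", "Acme"]
print(determines(recruiter_id, recruiter_company))  # True
```

Note that the categorical check is one-directional: knowing the company does not identify the recruiter when a company employs several recruiters.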

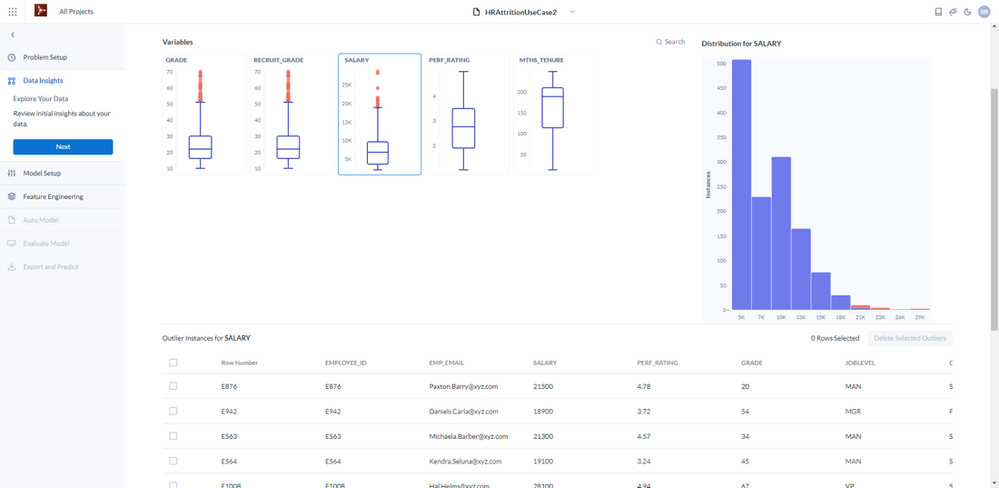

Next, she checks for Outliers. She sees there are outliers in the GRADE, RECRUIT_GRADE, and SALARY columns. She inspects the outlier values, and they all are legitimate in her company’s business context, so she chooses not to drop any of the outliers.
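The post does not say which rule Alteryx Machine Learning uses to flag outliers. As a stand-in, this sketch applies Tukey's interquartile-range fences, a common convention, to hypothetical salary values. Flagging is separate from dropping: like Susan, an analyst would inspect the flagged values before deciding whether to remove any.

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical salaries; the last one might be a legitimate executive salary.
salaries = [52000, 55000, 58000, 60000, 61000, 63000, 65000, 240000]
print(iqr_outliers(salaries))  # [240000]
```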

Model Setup

Susan opens the Model Setup panel. Since Susan is not a machine learning expert, she chooses to accept the default settings.

Feature Engineering

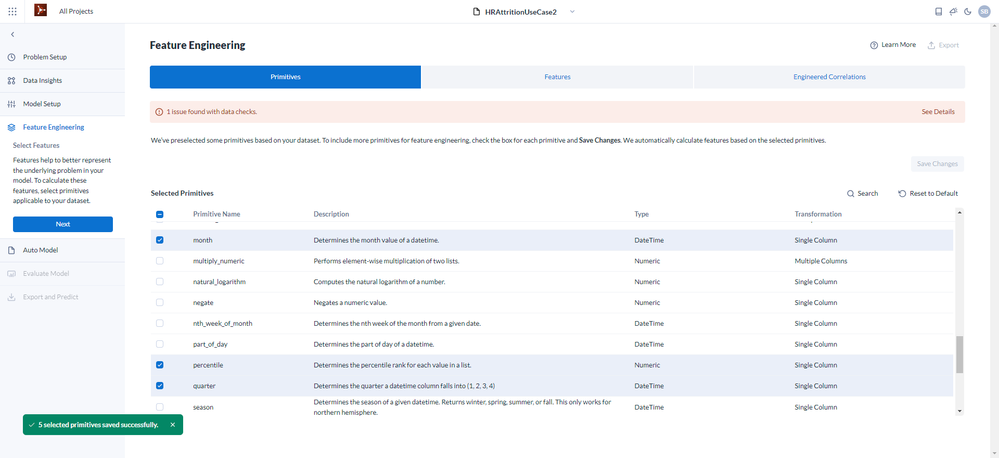

Susan proceeds to the Primitives tab to see whether there are signals in her data that feature engineering might uncover. Feature engineering is the process of using domain knowledge to derive new features (characteristics, properties, attributes) from raw data. The goal is to get better results from a machine learning process than supplying only the raw data would. Primitives are data operations that are used to create new features. Susan identifies several primitives she thinks may generate features that uncover more information in her data and improve her model. Since she’d like to explore whether the timing of hire has any influence on the likelihood of quitting, she selects the percentile, month, day_of_year, quarter, and year primitives.
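To make the selected primitives concrete, here are hand-rolled approximations of the month, day_of_year, quarter, and year primitives applied to a hire date, plus a percentile-rank function of the kind that produces PERCENTILE(MTHS_TENURE). These are illustrative sketches under assumed behavior (in particular, averaging ranks for tied values), not the product's implementation, and the input values are hypothetical.

```python
from datetime import datetime


def date_features(d):
    """Approximations of the month, day_of_year, quarter, and year primitives."""
    return {
        "MONTH": d.month,
        "DAY_OF_YEAR": d.timetuple().tm_yday,
        "QUARTER": (d.month - 1) // 3 + 1,
        "YEAR": d.year,
    }


def percentile_rank(values):
    """Percentile of each value within its column, in (0, 1]; ties share the average rank."""
    n = len(values)
    result = []
    for v in values:
        below = sum(1 for x in values if x < v)
        equal = sum(1 for x in values if x == v)
        avg_rank = below + (equal + 1) / 2  # 1-based rank, averaged across ties
        result.append(avg_rank / n)
    return result


print(date_features(datetime(2019, 11, 2)))
# {'MONTH': 11, 'DAY_OF_YEAR': 306, 'QUARTER': 4, 'YEAR': 2019}
print(percentile_rank([6, 24, 24, 60]))  # hypothetical MTHS_TENURE values
# [0.25, 0.625, 0.625, 1.0]
```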

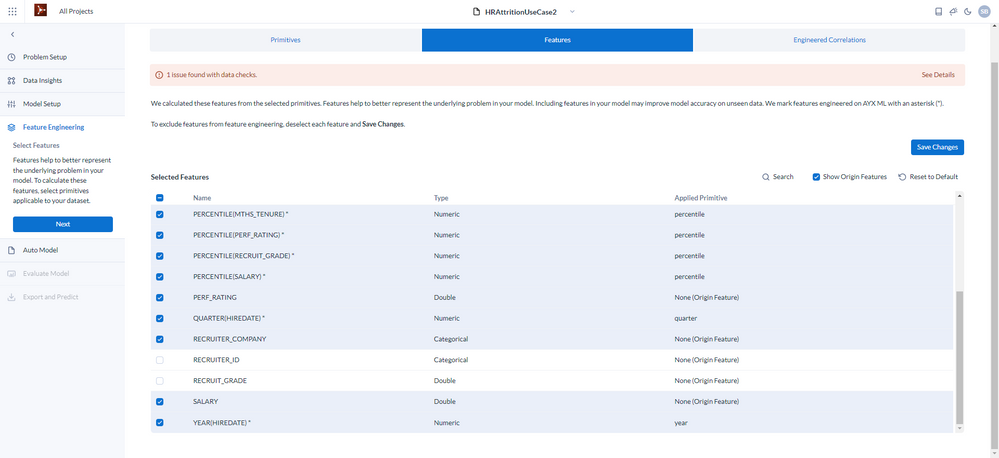

Susan opens the Features tab to review the full set of features she’ll use in the modeling process. She is pleased to see the new engineered features derived from her selected primitives. She recalls from the correlation matrix that GRADE and RECRUIT_GRADE are highly correlated, as are RECRUITER_ID and RECRUITER_COMPANY. Highly correlated columns do not necessarily make a model less accurate, but they do make it harder to explain. She decides to keep only one column from each highly correlated pair and deselects RECRUIT_GRADE and RECRUITER_ID.
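Susan's manual deselection can be sketched as a greedy filter: walk the columns in a chosen order and skip any column that is highly correlated with one already kept. The threshold of 0.95, the helper name `drop_correlated`, and treating unlisted pairs as uncorrelated are assumptions for illustration; only the two 100% pairs come from her Data Insights results.

```python
def drop_correlated(columns, corr, threshold=0.95):
    """Keep columns in order, skipping any whose |correlation| with a kept column
    exceeds `threshold`. Pairs missing from `corr` are treated as 0 (an assumption)."""
    kept = []
    for col in columns:
        if all(abs(corr.get(frozenset((col, k)), 0.0)) <= threshold for k in kept):
            kept.append(col)
    return kept


# The two 100% pairs Susan found; order in `columns` decides which member survives.
corr = {
    frozenset(("GRADE", "RECRUIT_GRADE")): 1.0,
    frozenset(("RECRUITER_COMPANY", "RECRUITER_ID")): 1.0,
}
columns = ["GRADE", "RECRUIT_GRADE", "RECRUITER_COMPANY", "RECRUITER_ID", "SALARY", "MTHS_TENURE"]
print(drop_correlated(columns, corr))
# ['GRADE', 'RECRUITER_COMPANY', 'SALARY', 'MTHS_TENURE']
```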

Modeling

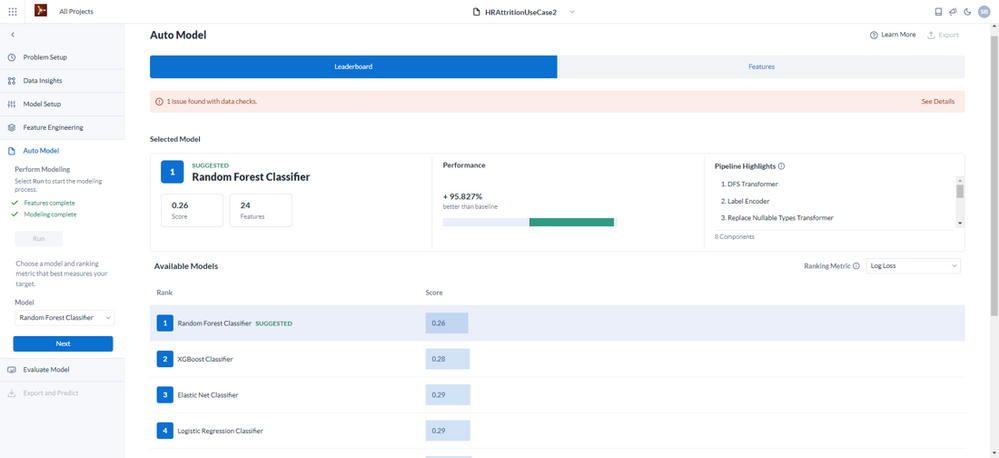

Susan kicks off the automated modeling process by clicking ‘Next.’ Alteryx Machine Learning then runs a suite of modeling algorithms to find the best one and reports the results on the Leaderboard. The Random Forest Classifier model performed best on the Log Loss metric. She clicks ‘Learn More’ to educate herself on the various ranking metrics. The Random Forest Classifier model also performed well on binary classification metrics such as Accuracy and F1 Score, which balances Precision and Recall. This increases her confidence that her model will perform well.
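Log loss scores predicted probabilities rather than hard Yes/No labels, and it penalizes confident wrong predictions heavily; lower is better. This minimal sketch of the standard binary formula uses hypothetical labels and probabilities from two made-up models to show why a model with well-separated, correct probabilities would rank ahead on this metric.

```python
import math


def log_loss(y_true, p_pred, eps=1e-15):
    """Mean binary cross-entropy; p_pred is the predicted probability of class 1 ('Yes')."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1 - eps)  # clip so log(0) never occurs
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


# Hypothetical labels (1 = quit) and predicted probabilities from two models.
y = [1, 0, 0, 1]
confident = [0.9, 0.1, 0.2, 0.8]  # correct and well separated
hedging = [0.6, 0.4, 0.4, 0.6]    # correct but barely separated
print(log_loss(y, confident) < log_loss(y, hedging))  # True: lower loss is better
```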

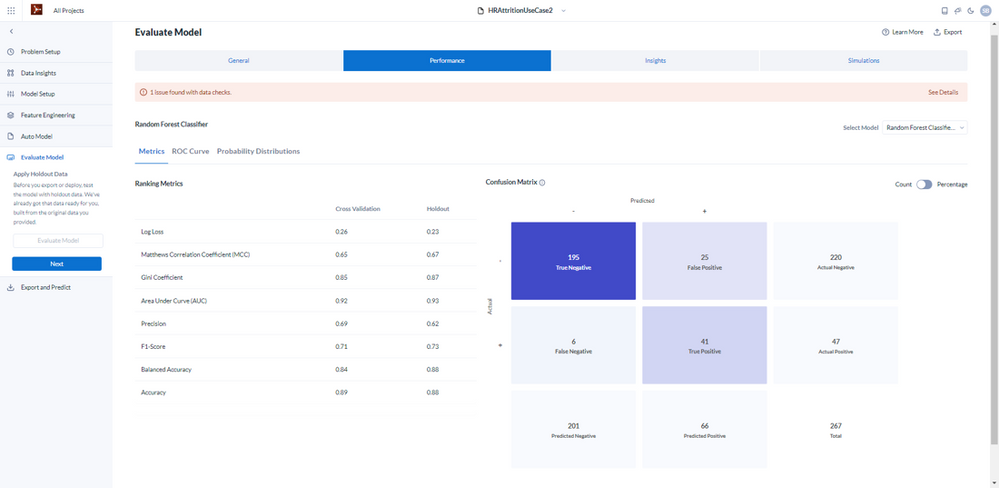

She clicks ‘Next’ to apply the holdout data and see how the Random Forest Classifier performs on records it has not seen. She opens the Performance tab and sees the Confusion Matrix, which summarizes the correct and incorrect predictions of a binary classification model. Since ‘Quit’ is the target variable, ‘Yes’ entries are considered positive and ‘No’ entries negative. Of the 267 randomly selected records in the holdout set, there were 47 actual positives (employees who quit) and 220 actual negatives (employees who stayed). Her model correctly predicted 195 of the actual negatives and 41 of the actual positives, so it produced 25 false positives (220 - 195) and 6 false negatives (47 - 41). Her model's Accuracy against the holdout dataset is (195 + 41) / 267 ≈ 0.88, its precision is 41 / (41 + 25) ≈ 0.62, and its recall is 41 / 47 ≈ 0.87.
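The holdout arithmetic can be checked with a few lines of Python. The counts come straight from Susan's confusion matrix; the helper name `binary_metrics` is hypothetical. F1 is included because the Leaderboard reports it.

```python
def binary_metrics(tp, fp, tn, fn):
    """Standard metrics from the four cells of a binary confusion matrix."""
    total = tp + fp + tn + fn
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
    }


# Holdout counts: 47 actual positives (41 caught), 220 actual negatives (195 caught).
m = binary_metrics(tp=41, fp=220 - 195, tn=195, fn=47 - 41)
print({k: round(v, 2) for k, v in m.items()})
# {'accuracy': 0.88, 'precision': 0.62, 'recall': 0.87, 'f1': 0.73}
```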

However, what matters most to Susan is to learn whether her model is powerful enough to justify the HR department using it to guide their attrition reduction efforts. Her management team wants her team to focus on retaining the employees at the most risk of leaving.

Studying her results further, she focuses on the precision score against the holdout data. Precision is the percentage of predicted positives that were actually positive. Her model’s precision is 0.62, meaning that if she uses it to make predictions about attrition for a cohort similar to the one she built the model from, and focuses on the employees in the predicted positive group, about 62% of her team’s effort is likely to go to employees who would actually quit and about 38% to employees who would have stayed.

She is concerned about wasting energy on employees likely to stay, but what matters most is taking positive action to retain employees in the high-risk category while missing as few of them as possible. This is what recall measures: her model correctly identified 41/47 = 87% of the employees who actually quit. Based on these results and what she knows about the costs and benefits of targeted employee retention efforts, she is confident her model will significantly improve her team’s chances of success.
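Precision and recall trade off against each other depending on the probability threshold at which an employee is flagged as at risk. The post does not say which threshold the model uses or whether it can be adjusted, so this sketch uses hypothetical scores and outcomes purely to illustrate the trade-off Susan weighs: lowering the threshold misses fewer employees who will quit, at the cost of flagging more who will stay.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when employees with score >= threshold are flagged as at risk."""
    tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
    fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
    fn = sum(1 for y, s in zip(y_true, scores) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical predicted probabilities of quitting and true outcomes (1 = quit).
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.35, 0.2, 0.1, 0.05]
for t in (0.3, 0.5, 0.8):
    p, r = precision_recall_at(y_true, scores, t)
    print(f"threshold={t}: precision={p:.2f} recall={r:.2f}")
```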

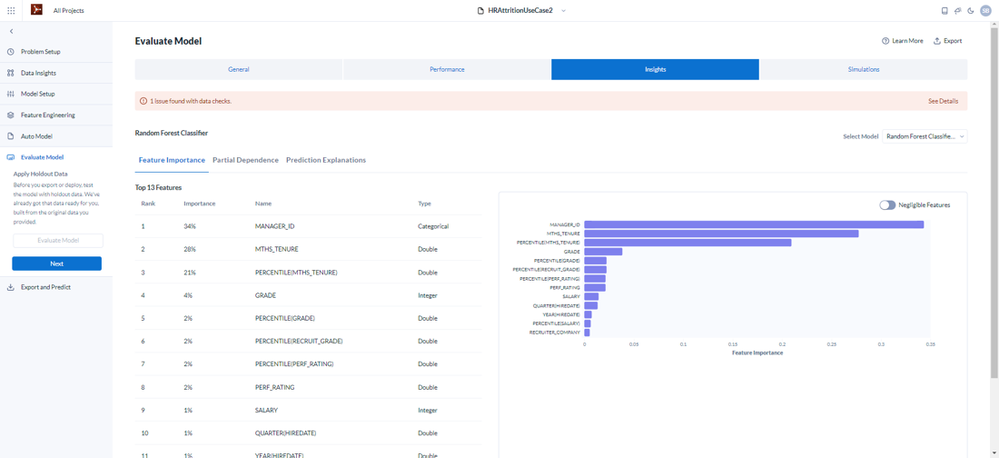

Now that Susan is confident her team can focus on the right people, she needs to know whether her model can also help her team address the factors influencing employee attrition. She moves on to the Performance tab to see which features are most important to the model. On the Feature Importance tab, two things stand out: MANAGER_ID (34%), and MTHS_TENURE (28%) together with its engineered counterpart PERCENTILE(MTHS_TENURE) (21%).
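The post does not describe how the feature importance percentages are computed. One common, model-agnostic approach is permutation importance: shuffle one feature's values across rows and measure how much the model's accuracy drops. The sketch below applies it to a hypothetical rule-based `toy_model` and made-up rows rather than to a Random Forest.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, feature, n_repeats=20, seed=0):
    """Mean drop in accuracy when one feature's values are shuffled across rows."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    drops = []
    for _ in range(n_repeats):
        shuffled = [r[feature] for r in rows]
        rng.shuffle(shuffled)
        permuted = [{**r, feature: v} for r, v in zip(rows, shuffled)]
        drops.append(baseline - accuracy(model, permuted, labels))
    return sum(drops) / n_repeats


# Hypothetical stand-in for a trained classifier: flags short-tenure employees of M1 or M2.
def toy_model(row):
    return int(row["MTHS_TENURE"] < 12 and row["MANAGER_ID"] in {"M1", "M2"})


rows = [
    {"MANAGER_ID": "M1", "MTHS_TENURE": 6, "SALARY": 50000},
    {"MANAGER_ID": "M2", "MTHS_TENURE": 8, "SALARY": 61000},
    {"MANAGER_ID": "M3", "MTHS_TENURE": 5, "SALARY": 58000},
    {"MANAGER_ID": "M1", "MTHS_TENURE": 40, "SALARY": 72000},
    {"MANAGER_ID": "M2", "MTHS_TENURE": 30, "SALARY": 66000},
    {"MANAGER_ID": "M3", "MTHS_TENURE": 50, "SALARY": 80000},
]
labels = [toy_model(r) for r in rows]  # toy labels agree with the model by construction

importances = {f: permutation_importance(toy_model, rows, labels, f)
               for f in ("MANAGER_ID", "MTHS_TENURE", "SALARY")}
print(importances)  # SALARY is never used by toy_model, so its importance is 0.0
```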

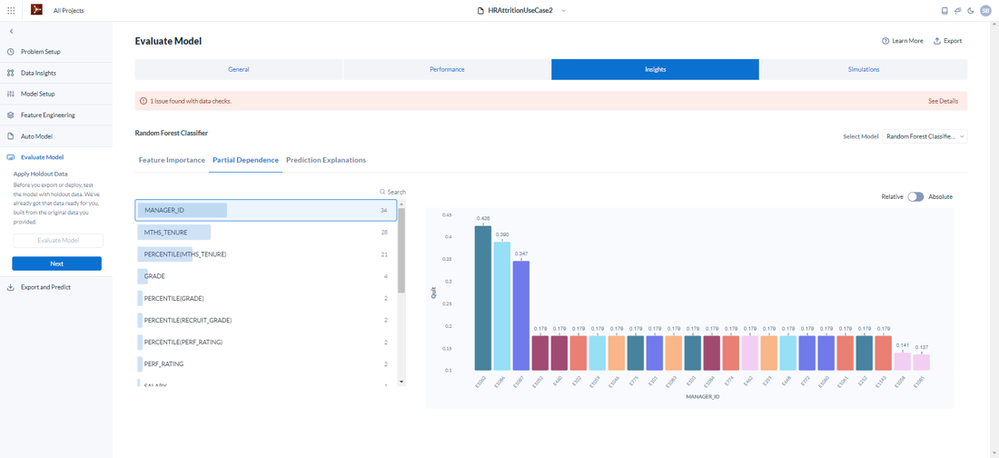

She digs deeper by looking at Partial Dependence and thinks about what actions could be taken based on these driving factors.

- MANAGER_ID

- MANAGER_ID is the most important feature in the model: who an employee’s manager is influences the predicted likelihood of quitting more than any other factor!

- There are 3 managers who drive especially high attrition and 2 managers associated with especially low attrition.

- Susan will recommend that her company consider investing more in manager training.

- Susan recommends that the HR department have conversations with the 3 managers with problematic attrition to alert them to the problem and work out ways to improve. She also recommends having conversations with the 2 managers with low attrition to discover best practices.

- MTHS_TENURE, PERCENTILE(MTHS_TENURE)

- The partial dependence plots for these two features clearly indicate a strong relationship between attrition and shorter employee tenure. The engineered feature PERCENTILE(MTHS_TENURE) paints a clearer picture and will help determine which cohort of shorter-tenured employees to focus retention efforts on.

- Susan suggests to her management they consider instituting a mentoring program for employees early in their tenure.
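A partial dependence curve fixes one feature at each value on a grid for every row, then averages the model's predicted probability. The sketch below uses a hypothetical `toy_predict_proba` function and made-up rows in place of Susan's trained model; the shape it produces (higher predicted risk at short tenure) mirrors the kind of relationship described above, but the numbers are invented.

```python
def partial_dependence(predict_proba, rows, feature, grid):
    """Average predicted probability when `feature` is set to each grid value for every row."""
    curve = []
    for value in grid:
        modified = [{**r, feature: value} for r in rows]
        avg = sum(predict_proba(r) for r in modified) / len(modified)
        curve.append((value, avg))
    return curve


# Hypothetical stand-in for a trained model's P(quit): depends on tenure and manager.
MANAGER_RISK = {"M1": 0.3, "M2": 0.1, "M3": 0.05}


def toy_predict_proba(row):
    tenure_risk = 0.5 if row["MTHS_TENURE"] < 12 else 0.1
    return min(1.0, tenure_risk + MANAGER_RISK[row["MANAGER_ID"]])


rows = [
    {"MANAGER_ID": "M1", "MTHS_TENURE": 6},
    {"MANAGER_ID": "M2", "MTHS_TENURE": 24},
    {"MANAGER_ID": "M3", "MTHS_TENURE": 48},
]
curve = partial_dependence(toy_predict_proba, rows, "MTHS_TENURE", [6, 24, 48])
for value, avg in curve:
    print(value, round(avg, 3))
```

The same function works for a categorical feature such as MANAGER_ID by passing the manager IDs as the grid.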

Summary

Susan used Alteryx Machine Learning to quickly gain actionable insights into her company’s employee attrition problem and to build a model whose predictions will let her team direct its retention efforts more effectively and efficiently. She also identified critical factors influencing attrition that will help her team craft programs with a high likelihood of reducing employee turnover. She accomplished all of this without previous machine learning experience, gaining new skills and an understanding of the process along the way.

Sources:
