A monotonically constrained ML model is a machine learning model that enforces a monotonic relationship between selected input features and its output. Monotonicity means that as the value of one input feature increases, the output of the model either never decreases (an increasing constraint) or never increases (a decreasing constraint), with all other variables held fixed.

These constraints are important because they can help improve the interpretability of the model by ensuring that the model behaves in an intuitive and explainable way. For example, in some applications, it is important that a model's predictions increase or decrease as certain input features increase, such as in credit risk modeling, where the likelihood of default should increase as a borrower's debt-to-income ratio increases.

Additionally, models with monotonicity constraints may be more robust to changes in the input features and less susceptible to overfitting noise in the data. By constraining the model to behave in a certain way, it may also reduce the risk of making unreasonable predictions outside of the range of the training data.

Overall, monotonically constrained ML models can provide additional safeguards and interpretability that may be important in certain applications.

The insurance and banking industries are heavy users of ML models that may need to be monotonically constrained. Some example use cases:

- Credit scoring models: In credit scoring models, the likelihood of a borrower defaulting on a loan should generally increase as their credit score decreases. Therefore, it may be important to impose a monotonicity constraint on the credit score input feature to ensure that the model behaves in an intuitive and explainable way.

- Insurance pricing models: Insurance pricing models typically use a range of factors to determine the risk profile of an insured individual, such as age, gender, and driving record. In some cases, certain input features may have a monotonic relationship with the likelihood of making a claim. For example, in auto insurance, the likelihood of an accident may increase as the number of miles driven increases. In these cases, it may be important to impose a monotonicity constraint on the relevant input features to ensure that the model's predictions behave in an intuitive and explainable way.

- Fraud detection models: Fraud detection models in the banking industry often use a range of transactional and behavioral data to identify potential instances of fraud. In some cases, certain input features may have a monotonic relationship with the likelihood of fraud. For example, a sudden increase in the frequency or amount of transactions may be indicative of fraudulent activity. In these cases, it may be important to impose a monotonicity constraint on the relevant input features to ensure that the model behaves in an intuitive and explainable way.

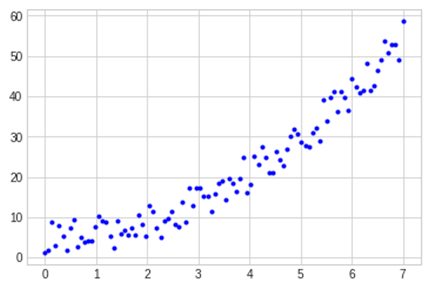

But usually, your data plotted against a variable that should have a monotonic effect will actually show something like this:

This is because real-world data is noisy and rarely follows the expected trend exactly. In these cases, you need to be able to enforce the monotonic effect for the relevant variables in the model before it is approved for business use.

While monotonicity constraints can be beneficial in many cases, there may be situations where enforcing monotonicity can lead to unintended consequences. One example of a case where monotonicity may seem like a good idea but is not always ideal is in models that involve interactions between input features.

For example, consider a model that predicts customer satisfaction with a product based on two input features: price and quality. One might assume that customer satisfaction would increase monotonically with quality, meaning that higher quality products would always lead to higher satisfaction. Similarly, one might assume that customer satisfaction would decrease monotonically with price, meaning that lower-priced products would always lead to higher satisfaction.

However, in reality, there may be interactions between these features that make monotonicity constraints less desirable. For example, if the price is too low, customers may perceive the quality as being low, leading to lower satisfaction. On the other hand, if the price is too high, customers may have high expectations for quality and be disappointed if the quality does not meet their expectations, also leading to lower satisfaction. In this case, enforcing monotonicity constraints on either feature alone may not capture the complex interactions between price and quality and may lead to suboptimal predictions.

Therefore, it is important to carefully consider the relationships between input features and the overall goals of the model before deciding to enforce monotonicity constraints. While monotonicity can be a useful tool for improving interpretability and reducing overfitting, it is not always the best approach for every problem.

We have uploaded the new macro prepared for binary classification models to the Gallery here. We may publish additional macros for multi-class classification or regression problems if there's interest in them.

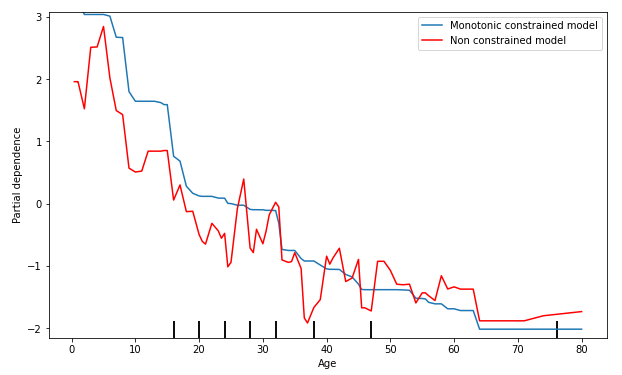

In addition to the macro uploaded to the Gallery, we've included two workflows in this post as examples of how the macro can be used. As a demo dataset, we've used the Titanic dataset, which allows you to predict a passenger's chance of survival based on demographic information. In this dataset, younger passengers had a higher chance of surviving, so we've applied a decreasing monotonic constraint to age. The following figure shows the effect of constraining this variable versus leaving it unconstrained:

The general trend is the same in both cases, but with the monotonic constraint applied, younger passengers are always predicted to be at least as likely to survive as older ones, all other things being equal. This reduces possible overfitting and makes the model easier to explain, and it may even result in a better model if it generalizes better.

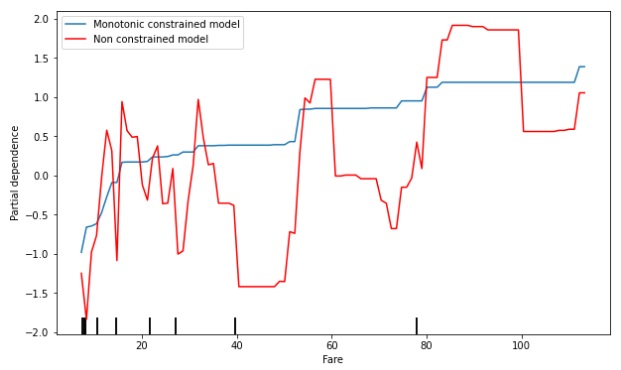

The second constrained variable is the price the passenger paid for the ticket. Passengers in higher classes had a better chance of surviving, so we applied an increasing monotonicity constraint to the ticket price, with the following effect:

This macro has been tested with several versions of the scikit-learn library, so it should work in out-of-the-box Designer installations. It works best with scikit-learn 1.0 or higher; with lower versions, the partial dependence plot may be missing for some variables.

And as always with machine learning models, please make sure you understand the data and the model and check it with an expert before using it for a real scenario.

Hope this is useful for your models!