Data Science

Machine learning & data science for beginners and experts alike.- Community

- :

- Community

- :

- Learn

- :

- Blogs

- :

- Data Science

- :

- Realtime ML Forecasting and Anomaly Detection in A...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Notify Moderator

Read Tangent Works + Alteryx: Partnering on a Series of Predictive Analytics to learn more about Tangent Works and the partnership with Alteryx.

TIM Forecasting Tool in Alteryx

The TIM Forecasting Tool is an implementation of the TIM RTInstantML technology in Alteryx Designer. The image below shows the tool icon with one input and five outputs. The tool is available on the Alteryx Gallery or from Tangent Works’ documentation page.

Overview Video

Bike Sharing Example

To demonstrate the TIM Forecasting Tool, we will use a bike-sharing dataset from Washington, D.C. This dataset is available on Kaggle.

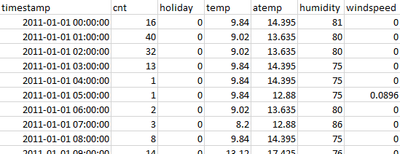

In the following example workflows, we slightly modified the dataset. The image below shows the first lines of the dataset. There are two years of data with hourly granularity. The target variable is the number of bike rentals, or cnt. Weather forecasts and holidays are available as explanatory variables.

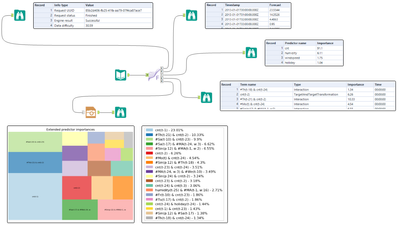

The following workflow loads the data into the TIM Forecasting tool and displays a sample of the output. The workflow can be downloaded here.

Tool Inputs:

D (Data) — The training data on which the TIM engine is trained

Tool Outputs:

S (Status) – Status of the prediction task

P (Prediction) – Rows with timestamps and corresponding predictions

I (Importance) – Percentual importance of individual predictors

E (Extended Importances) – Percentual importance of features that are built by the engine

G (Graph) – Path to the graph with visual representation of both importances and extended importances

The Tool directly communicates with the TIM restful API. When the Tool is running, it first sends the training data with user configuration, then waits for the end of the job, and lastly returns the outputs.

Under the hood, the TIM Engine performs automatic feature engineering, feature selection, and model building. The model is then used to produce the forecast. Each new call of the TIM Forecasting Tool repeats the entire process, which usually takes no more than a minute.

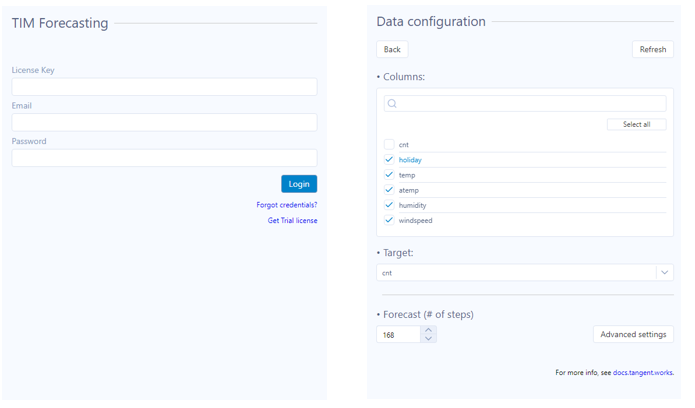

Configuration Panel:

The configuration panel has two sections. The first page contains the authentication form, and the second page deals with the data configuration. You can select which columns will enter the model as predictors, which column is the target variable, and how many steps from the last target variable you want to forecast.

Data Availability

A relatively neglected topic in time series modeling is data availability. The image below illustrates three cases with different data alignment. The majority of time series modeling techniques require the predictors to be aligned with the end of the forecasting period (the left case on the image below). If the data is aligned with the target variable (the case in the middle) there is usually some pre-processing needed to use the predictors for the forecast. The most challenging case is when the predictors/target have different alignment (the case on the right).

The advantage of TIM RTInstantML technology is that it can deal with all three cases in a fully automatic way. The engine uses all the information from explanatory and target variables that is available. The more information available in the data, the higher the quality of the forecast.

Let’s assume a scenario where we want to forecast ahead seven days. However, the humidity and wind speed forecasts are available only one day ahead and the temp and atemp forecasts are available three days ahead. The holiday predictor is, of course, available through the entire prediction horizon since it is known in advance if a given day is a holiday or not.

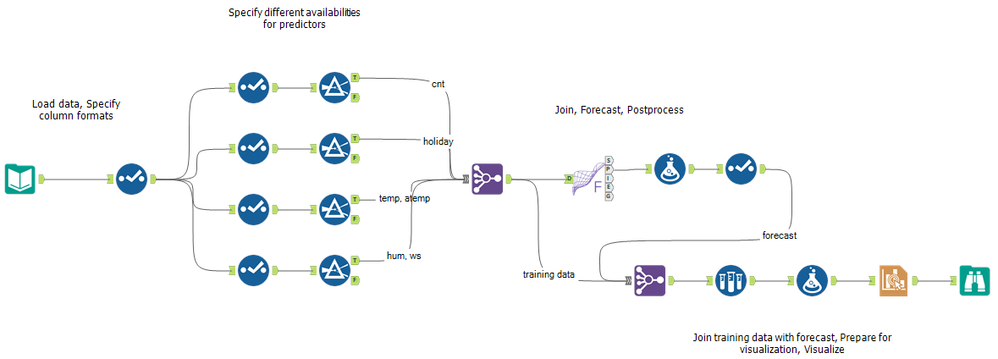

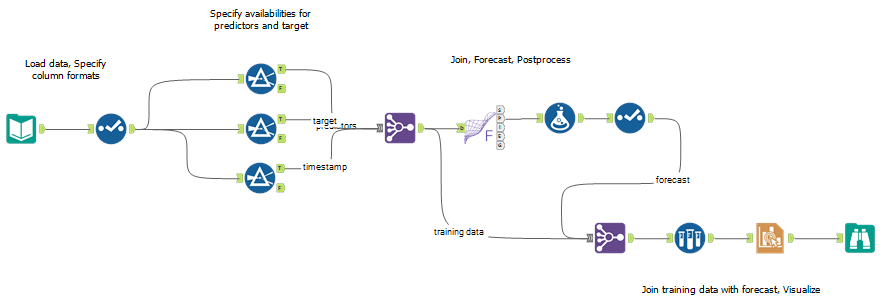

The following workflow demonstrates the ability of the TIM Forecasting Tool to deal with different availabilities of predictors. There is no need to do any manual configuration; the tool runs automatically — same as if the data were aligned. The workflow can be downloaded here.

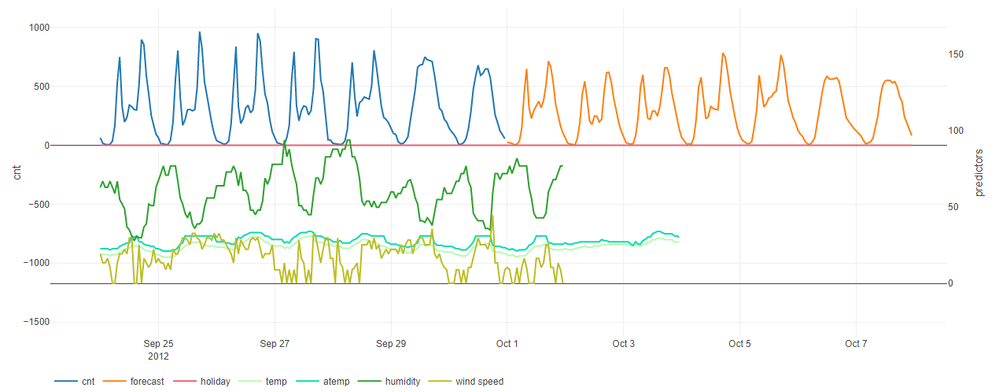

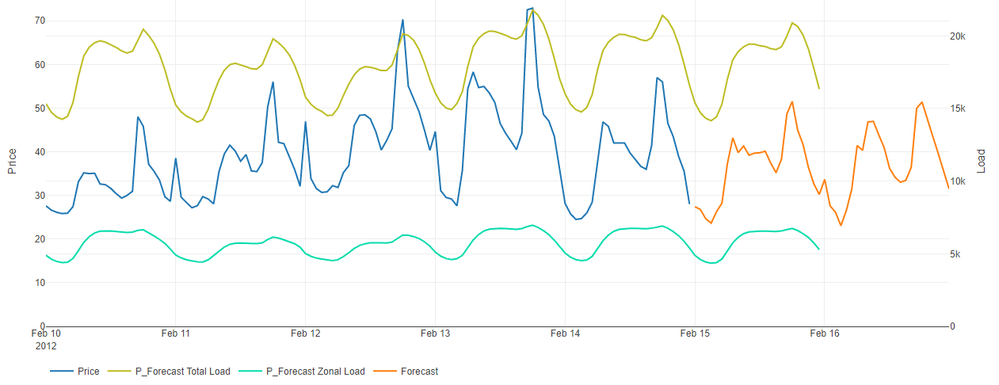

Below is the output of the workflow. The orange line represents the seven-day period of forecast. Notice the difference in the availability of the predictors.

Missing Values

It is often the case that there are missing values in some columns/predictors of the dataset. Sometimes, even entire records are missing. The reasons can be various, such as failure of the data collection system, connection issues, etc. The majority of time series modeling techniques would require the user to pre-process the data manually.

The advantage of the TIM Forecasting Tool is that there is no need to care about missing values. The missing values are supported by TIM as follows:

- TIM Forecasting Tool v1.0 (requires TIM Web Service v4.0 or higher) — the current version omits the entire record if there is a missing value

- TIM Forecasting Tool v1.1 (requires TIM Web Service v4.1 or higher) — coming soon, missing values imputation implemented (the maximum size of the gap that is imputed can be configured)

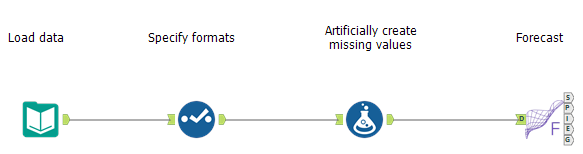

The following workflow simulates the scenario by artificially creating missing values. The workflow can be downloaded here.

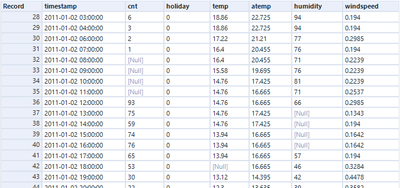

The table below shows a sample of the data that is entering the TIM Forecasting Tool. Notice the missing values in target cnt and predictors temp and humidity. There is also one record missing between 04:00:00 and 06:00:00. The TIM engine is trained only on those rows that are complete. The imputation of missing values in the next release of the TIM Forecasting Tool will bring the advantage of utilizing the information from incomplete rows.

Price Forecasting Example

This section provides one more example of using the TIM Forecasting Tool. The dataset is from The Global Energy Forecasting Competition (GEFCom). GEFCom is a competition conducted by a team led by Dr. Tao Hong who invites submissions from around the world for forecasting energy demand. GEFCom was first held in 2012 on Kaggle, and the second GEFCom was held in 2014 on CrowdANALYTIX. Tangent Works participated in the 2017 competition using TIM and was among the winning teams. Before this competition, Tangent Works first tried using TIM on the 2014 problems.

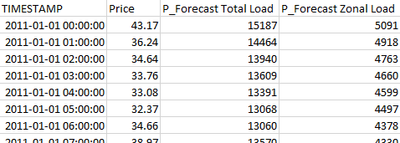

The workflow simulates a two-days-ahead forecasting scenario. There are two predictors that are available one day ahead. Below is a sample of the dataset. The workflow can be downloaded here.

The output of the workflow:

Read More

- The Tangent Works website: www.tangent.works

- Documentation : https://docs.tangent.works

- Contact : info@tangent.works

- Check out the video – Real Time ML Forecasting in Alteryx Designer

- Check out the coverage on Azure Datafest by our US Partner, Bardess

- Try TIM on https://www.tangent.works/trial/

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.