***UPDATE***

ChatGPT v4 is in beta testing and you can apply to join a waitlist to access it here...

https://openai.com/waitlist/gpt-4-api

There is a ChatGPT v4 Connector available here...

https://community.alteryx.com/t5/Community-Gallery/OpenAI-ChatGPT-4-Completions-Connector/ta-p/1116364

There is now a ChatGPT v3.5Turbo Connector that supersedes this v3 version, available here...

https://community.alteryx.com/t5/Community-Gallery/OpenAI-ChatGPT-3-5Turbo-Completions-Connector/ta-p/1108238

INTRO

Warning: This macro uses throttling and requires AMP to be turned OFF.

This macro will allow you to send data to OpenAI's ChatGPT AI.

The ChatGPT AI has multiple API (Application Programming Interface) functions or "endpoints", for generating images, writing code, answering questions and editing text. This Connector points to the Completions endpoint, which takes a natural language prompt string, and tries to reply, answering your questions or chatting to you.

With Alteryx you won't necessarily be chatting, but you could ask the AI to take your data and create natural language messages from it, or summarise the information that you send. The AI is so powerful it is hard to imagine all the uses it could be put to, but this connector will allow you to experiment with it and find new ways to make it work for you and your business.

QUICK START

To use this macro, you first need to go to...

https://openai.com/api/

... and click the "Sign Up" button to create a new API account. Next, create an API Secret Key; you will also be given a Personal Organisation ID. The macro has two text boxes where you enter your Key and OrgID, and once it has these, very little else is required.
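The macro hides how it passes your credentials. The OpenAI API reference, however, documents two HTTP headers for them: the secret key goes in an `Authorization: Bearer` header and the Organisation ID goes in an `OpenAI-Organization` header. The Python sketch below assumes the macro does the same. `build_headers` is a hypothetical helper for illustration and is not part of the macro.

```python
def build_headers(api_key: str, org_id: str) -> dict[str, str]:
    """Build the HTTP headers for an OpenAI API request (illustrative sketch)."""
    return {
        # The API Secret Key is sent as a bearer token.
        "Authorization": f"Bearer {api_key}",
        # The Personal Organisation ID goes in its own header.
        "OpenAI-Organization": org_id,
        # The request body is JSON.
        "Content-Type": "application/json",
    }
```

For example, `build_headers("sk-your-key", "org-your-id")` returns the three headers ready to attach to a request. Both values are placeholders.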

The main macro is called OpenAI_ChatGPT_Completions.yxmc. The package also contains other macros; these are required sub-components of the main macro, but you can otherwise ignore them. To use the macro, open the yxzp file and save the expanded files somewhere you can easily find them. As with any other macro, right-click on the canvas, choose "Insert Macro", browse to the folder where you expanded the yxzp file, and select OpenAI_ChatGPT_Completions.yxmc.

For input to the macro, all you have to provide is a record ID and a prompt string. The other variables are optional, and you can ignore them initially.

The Record ID is just an Int32 value, and the RecordID tool can quickly create it for you. It allows you to use a Join tool to link your responses back to your original prompts, if you want to process the responses in downstream flows or systems.
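Outside Alteryx, the same idea looks like the Python sketch below: an inner join that attaches each response to its prompt by RecordID. The field names `Prompt` and `Response` and the function `join_on_record_id` are illustrative assumptions, not the macro's actual output schema. The loop runs over responses, so it also copes with several replies per RecordID (see ResponseResults).

```python
def join_on_record_id(prompts: list[dict], responses: list[dict]) -> list[dict]:
    """Inner-join response rows to prompt rows on RecordID, like an Alteryx Join tool."""
    # Index the prompt rows by RecordID for quick lookup.
    by_id = {row["RecordID"]: row for row in prompts}
    joined = []
    for response in responses:
        prompt_row = by_id.get(response["RecordID"])
        if prompt_row is not None:  # inner join: skip responses with no matching prompt
            joined.append({**prompt_row, **response})
    return joined
```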

The prompt string is an instruction or phrase in normal English, such as "Tell me what the weather is like in Putney" or "Write a limerick about a man from Nantucket". Of course, the beauty of Alteryx is that you can build multiple prompt strings (one per record), perhaps containing info about your customers for example, and ask ChatGPT to write personalised emails to those customers.
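Here is a minimal sketch of that idea. It assumes hypothetical `name` and `product` fields for each customer. The Python below builds one prompt per record and numbers the records from 1, as the RecordID tool does by default. In Alteryx you would normally do this with a Formula tool; the code only shows the shape of the data the macro expects.

```python
def build_prompts(customers: list[dict]) -> list[dict]:
    """Create one RecordID/Prompt row per customer (field names are illustrative)."""
    rows = []
    for record_id, customer in enumerate(customers, start=1):
        prompt = (
            f"Write a short, friendly email to {customer['name']} "
            f"thanking them for their recent purchase of {customer['product']}."
        )
        rows.append({"RecordID": record_id, "Prompt": prompt})
    return rows
```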

IN DEPTH GUIDE

This link takes you to an introduction to the Completions endpoint, with background on how best to write your prompts...

https://platform.openai.com/docs/guides/completion/introduction

The link below details the values you can send to the AI to get a more tailored response.

https://platform.openai.com/docs/api-reference/completions

Here are the variables that the macro requires you to provide...

- RecordID (REQUIRED) (Int32); A simple record ID so that you can join response records back to your input records.

- Prompt (REQUIRED) (String); This is the equivalent of the text you type into ChatGPT's demo website, and it is effectively where you "say something to ChatGPT". You can build this statement from your own Alteryx data.

Here are the variables that are optional...

- Suffix (OPTIONAL) (String); Text that should come after the AI's reply. The AI generates text to fit between your prompt and this suffix, which is useful for inserting content into the middle of a passage. See the Introduction link (https://platform.openai.com/docs/guides/completion/introduction) for more detail on this variable.

- Max_Tokens (OPTIONAL) (Int32); The API is free-form in its responses, and might witter on a bit. OpenAI measures text in tokens (on average roughly three-quarters of an English word each), and this value caps the length of the reply. The default value in the macro is 200 tokens, and you can override it by putting your own value here.

- Temperature_0to1 (OPTIONAL) (Double); Temperature is a value between 0 and 1 that controls how "creative" you want the AI to be. For serious factual work, 0 is the suggested value and the macro default; it should produce largely repeatable results. If you want the AI to write jokes or lyrics, or to be a bit scatty and flighty, you can increase this value up to 1.

- ResponseResults (OPTIONAL) (Int32); You can ask the API to give you multiple replies with this value. If you use 0 for Temperature_0to1, then each reply will probably be the same, so this value defaults to 1 for a single response. If you use a high temperature value like 0.9, each response might be different, so you could select the most appropriate or creative response from the selection of responses.

- Attempts (OPTIONAL) (Int32); ChatGPT is very popular and is in beta, so it is sometimes busy or may fail. The Attempts value sets how many tries the macro makes to get a good response, and it defaults to 5 attempts per request. This is usually enough to ride over the minor temporary outages that occur, but you can raise it if it is important for you to get a response for every prompt.

- Stop (OPTIONAL) (String); ChatGPT's Completions endpoint is designed to respond a little unpredictably, as a human might. You can ask it to stop its response when it generates a particular string, for example ". ", so that it ends after the first sentence. The API documentation describes this in more detail; the macro does not send this value unless you set it here.
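To make the mapping concrete, here is a Python sketch of how these fields could become a JSON request body for the Completions endpoint (`https://api.openai.com/v1/completions`). The parameter names `prompt`, `suffix`, `max_tokens`, `temperature`, `n` and `stop` come from the OpenAI API reference. Two parts are assumptions about how the macro works: the mapping of ResponseResults to `n`, and the helper `build_completion_body` itself. The endpoint also requires a `model`, which this post does not name, so the sketch takes it as a required argument. Attempts is not sent to the API at all; it only controls retries.

```python
import json
from typing import Optional

def build_completion_body(
    prompt: str,
    model: str,                    # assumption: this post does not name the model
    suffix: Optional[str] = None,
    max_tokens: int = 200,         # macro default: 200 tokens
    temperature: float = 0.0,      # macro default: 0 (most repeatable)
    n: int = 1,                    # ResponseResults, assumed to map to "n"
    stop: Optional[str] = None,
) -> str:
    """Return a JSON request body for the Completions endpoint (illustrative sketch)."""
    if not 0.0 <= temperature <= 1.0:
        raise ValueError("Temperature_0to1 must be between 0 and 1")
    body = {
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,
        "temperature": temperature,
        "n": n,
    }
    # Optional fields are only included when a value is supplied,
    # mirroring how the macro leaves Stop out unless you set it.
    if suffix is not None:
        body["suffix"] = suffix
    if stop is not None:
        body["stop"] = stop
    return json.dumps(body)
```

For example, `build_completion_body("Write a limerick about a man from Nantucket", model="text-davinci-003", temperature=0.9, n=3)` asks for three varied limericks. The model name in this example is an assumption.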

OPERATION

The main outer macro calls some sub-macros. If you copy the main macro, please remember to also copy the numbered sub-macros and the DosCommand macro with it, or re-expand the package into your target folder. The macros use relative paths, so they expect to find each other in the same folder as the main outer macro.

EXAMPLE

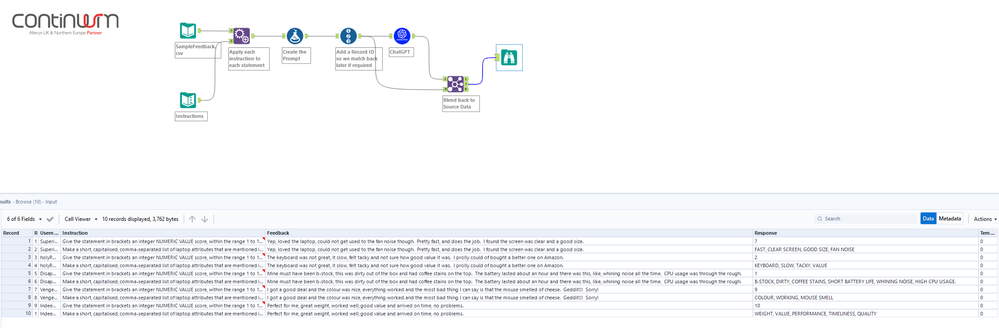

Below is a screenshot showing an example flow.

THROTTLING

Most APIs do not have infinite resources, so they ask you to stay within a request rate. ChatGPT's limit is 20 requests per minute on the free trial. The third box in the macro defaults to "Free Trial", which adds a 3-second pause between requests (60 seconds / 20 requests) to comply with their throttling requirements. You have other options in this third selection box, but most users will be on the default "Free Trial" setting.
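The pacing and the Attempts retries can be sketched together in Python as below. This illustrates the idea under stated assumptions and is not the macro's code. `send` is a hypothetical callable that performs one HTTP request and returns a status code and body, and the sketch treats anything other than 200-OK as a failed attempt. Retries are paced too, because every request counts towards the rate limit.

```python
import time
from typing import Callable, Iterable, Optional

def pause_seconds(requests_per_minute: int) -> float:
    """Gap between requests needed to stay within a requests-per-minute limit."""
    return 60.0 / requests_per_minute

def send_all(
    requests: Iterable[str],
    send: Callable[[str], tuple[int, str]],
    requests_per_minute: int = 20,
    attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> list[Optional[str]]:
    """Send each request in turn, pausing between calls and retrying failures.

    Returns the response body for each request, or None if every attempt failed.
    """
    gap = pause_seconds(requests_per_minute)  # e.g. 20 per minute -> 3.0 seconds
    results: list[Optional[str]] = []
    first_call = True
    for request in requests:
        result = None
        for _ in range(attempts):
            if not first_call:
                sleep(gap)  # pace every call, including retries
            first_call = False
            status, body = send(request)
            if status == 200:  # 200-OK: keep the body and stop retrying
                result = body
                break
        results.append(result)
    return results
```

Passing a different `sleep` function (for example, one that records the pauses) makes it easy to test the pacing without actually waiting.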

OpenAI also has a token limit, which is very generous. As this is a demonstration connector, the token limit has been ignored, on the basis that most people will stay well within it. However, if you send or receive very long prompts or responses and fire multiple requests over long periods, you may exceed this limit and get an error response from the API (a 4xx status, typically 429 Too Many Requests).
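If you want a rough check before sending a large batch, OpenAI's rule of thumb is about 4 characters of English text per token. The Python sketch below uses that heuristic. It is an approximation, not OpenAI's actual tokenizer, and it counts the worst case for the reply as `max_tokens` for each of the `n` responses requested. Both function names are hypothetical.

```python
import math

def estimate_tokens(text: str) -> int:
    """Approximate token count using the ~4 characters per token rule of thumb."""
    return math.ceil(len(text) / 4)

def estimate_request_tokens(prompt: str, max_tokens: int = 200, n: int = 1) -> int:
    """Conservative estimate: prompt tokens plus the longest possible replies."""
    return estimate_tokens(prompt) + max_tokens * n
```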

OTHER OPEN-AI CONNECTOR

This connector is for ChatGPT Completions, which tries to answer questions, write poems, tell jokes, and do other mind-blowing stuff. There is also an Edits endpoint that takes an input string and applies an editing instruction to it, so you can cleanse or grammatically correct text strings. You can find its connector here...

https://community.alteryx.com/t5/Community-Gallery/OpenAI-ChatGPT-Edits-Connector/ta-p/1094050

SUMMARY

So, the most exciting and revolutionary new technology is available, and Alteryx can be used to immediately hook up to it and begin leveraging it. The amazing flexibility of Alteryx allows it to shape data to the requirements of a particular API, and process the responses back into your workflows and onward to downstream systems.

As new technologies come online, they will have their own APIs, and Alteryx will be perfectly placed to connect data into these new systems. We all know that Alteryx is a simple but powerful toolkit for rapid development, so if you invest in Alteryx, your investment will keep you in step with the revolutions to come that we cannot foresee.

If you have any problems or questions about the macro, please reply below.

CONTINUUM

This macro is the product of research by Continuum Jersey. We specialise in the application of cutting edge technology for process automation, applying Alteryx and AI tech for creative solutions. If you would like to know how your business could apply and benefit from Alteryx, and the agility and efficiency it provides, we would like to talk to you. Please visit dubDubDub dot Continuum dot JE , or send an email to enquiries at Continuum dot JE .

DISCLAIMER

This connector is free, for any use, commercial or otherwise. Resale of this connector is not permitted, either on its own or as a component of a larger macro package or product. Please bear in mind that you use it at your own risk. ChatGPT is a beta demonstration of technology, and the service's performance and availability might be inconsistent. This macro connector is just an HTTP bridge to the service, and it will either get a 200-OK response or some kind of error. This connector macro is not responsible for the quality of the returned data. Please observe all usual data protection rules for your business in your jurisdiction when interacting with web services.

FIXES

- First release (prior to 4th April 2023) had a problem where zero characters "0" were being removed and replaced with backspaces in prompt data. This would not affect "chat" type text data, but would affect any numeric data within text. Fixed 4th April 12:40 BST.

- First release potentially lost some prompt characters due to adding escape characters for JSON. Fixed 4th April 16:30 BST.

- Logo updated to include ChatGPT version number. 5th April 12:10 BST.
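The first two fixes concern escaping prompt text safely inside a JSON request. The Python below illustrates the general technique; it is not the macro's actual implementation, which works inside Alteryx. Python's standard `json.dumps` escapes quotes, backslashes and control characters while leaving digits alone, and `json.loads` recovers the original string exactly. `to_json_string` is a hypothetical helper.

```python
import json

def to_json_string(prompt: str) -> str:
    """Encode a prompt as a JSON string literal, escaping quotes, backslashes and control characters."""
    return json.dumps(prompt)

prompt = 'Customer said "10% off at 09:00"\nThanks'
encoded = to_json_string(prompt)
print(encoded)  # prints "Customer said \"10% off at 09:00\"\nThanks"
assert json.loads(encoded) == prompt  # round trip: no characters lost, zeros intact
```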