Alteryx Server Discussions

Find answers, ask questions, and share expertise about Alteryx Server.


Chained Gallery app Concurrent Users Conundrum


We are running into an issue with a chained app in the Gallery that passes data through the chain.

As each app in the chain creates a separate temp file, we are using a network drive to save the files from app#1 that are picked up in app#2, app#3, etc.

The issue comes in with concurrent users. Depending on the selections made, User#2's project may complete before User#1's project, overwriting User#1's data and producing incorrect results.

Is there a way to pass the information from app#1 to app#2 without writing to a temp file on a network drive, or a way to identify the temp folder used by app#1 in subsequent apps in the chain?

Thank you.
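The overwrite described in this question can be reproduced in a few lines. This is a minimal Python sketch, not Alteryx itself: a local temporary directory stands in for the network drive, and the shared file name `app1_output.csv` is hypothetical. The two users' steps are interleaved in a fixed order so the result is deterministic.

```python
import tempfile
from pathlib import Path

SHARED_FILE = "app1_output.csv"  # hypothetical fixed name on the shared drive


def app1(share: Path, results: str) -> None:
    # Every run writes to the same fixed path, no matter which user started it.
    (share / SHARED_FILE).write_text(results, encoding="utf-8")


def app2(share: Path) -> str:
    # App #2 reads whatever happens to be in the shared file right now.
    return (share / SHARED_FILE).read_text(encoding="utf-8")


def interleaved_runs(share: Path) -> str:
    app1(share, "User#1 data")  # User#1 finishes app #1
    app1(share, "User#2 data")  # User#2 finishes app #1 before User#1 moves on
    return app2(share)          # User#1's app #2 now reads User#2's data


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        print(interleaved_runs(Path(d)))  # User#2 data
```

Any scheme that gives each run its own file or folder removes this last-writer-wins behavior.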


Labels: Chained App, Dynamic Processing, Gallery


Robb,

I'd suggest something like this: use a GUID to create a subdirectory, so that subsequent jobs read from a private set of data rather than from the general temp pool.

A short, directional answer...
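As a rough illustration of the GUID suggestion, here is a minimal Python sketch (Python is only a stand-in; in Alteryx the path would be built inside the workflow). It assumes a hypothetical shared root, simulated with a local temporary directory, and a hypothetical hand-off file name `step1.csv`. It shows only the folder isolation; how the GUID reaches the next app in the chain is not covered.

```python
import tempfile
import uuid
from pathlib import Path


def create_run_folder(shared_root: Path) -> Path:
    """Create a private subdirectory for one run of the chained app.

    uuid4() is random, so two users starting the chain at the same moment
    get different folders instead of sharing one file.
    """
    run_folder = shared_root / uuid.uuid4().hex
    # exist_ok=False raises if the folder already exists (a very unlikely collision).
    run_folder.mkdir(parents=True, exist_ok=False)
    return run_folder


def write_step_output(run_folder: Path, name: str, data: str) -> Path:
    # App #1 writes its hand-off file inside this run's own folder.
    path = run_folder / name
    path.write_text(data, encoding="utf-8")
    return path


def read_step_input(run_folder: Path, name: str) -> str:
    # App #2 (and later apps) read only from the folder for their run.
    return (run_folder / name).read_text(encoding="utf-8")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as share:  # stand-in for the network drive
        root = Path(share)
        user1 = create_run_folder(root)
        user2 = create_run_folder(root)
        write_step_output(user1, "step1.csv", "user1 results")
        write_step_output(user2, "step1.csv", "user2 results")  # same name, no overwrite
        print(read_step_input(user1, "step1.csv"))  # user1 results
```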

See you at Inspire!

Mark



Hi @MarqueeCrew

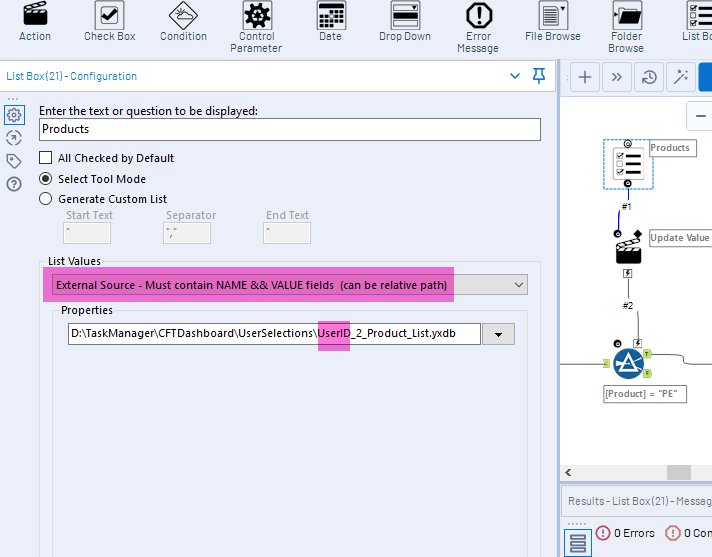

I have the same problem. I have taken a GUID-style approach, using the __CloudUserId value to identify the user and having workflow 1 create output files tagged with the user's Server ID. However, I am having trouble getting workflow 2 to dynamically select that user's input files for a drop-down or list box populated from an external source. Do you know of a way to update the file path shown in the screenshot dynamically in the workflow?

Thanks.

Kevin
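A minimal sketch of the lookup side, assuming workflow 1 names its outputs `<label>_<user id>.yxdb` (a hypothetical convention, not taken from the thread) and that all outputs land in one folder. It returns only the requesting user's files, which is the list a drop-down or list box would need. In Designer the comparable step would likely be a Directory tool with a wildcard file specification; the Python below only illustrates the filtering logic.

```python
import tempfile
from pathlib import Path


def files_for_user(folder: Path, user_id: str, suffix: str = ".yxdb") -> list[str]:
    """Return the names of files in `folder` that belong to `user_id`.

    Assumes the hypothetical naming convention "<label>_<user_id><suffix>".
    Matching on the full "_<user_id><suffix>" tail means user "u1" does not
    pick up files belonging to user "u11".
    """
    tail = f"_{user_id}{suffix}"
    return sorted(
        p.name for p in folder.iterdir() if p.is_file() and p.name.endswith(tail)
    )


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        folder = Path(d)  # stand-in for the shared output folder
        for name in ["sales_u1.yxdb", "costs_u1.yxdb", "sales_u2.yxdb"]:
            (folder / name).write_text("placeholder", encoding="utf-8")
        print(files_for_user(folder, "u1"))  # ['costs_u1.yxdb', 'sales_u1.yxdb']
```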


Hi @_Robb_ and @MarqueeCrew,

I am having the same issue as @KMiller. I am able to create a unique file to send to the second app, but I'm not sure of the best way to get the second app to know which unique file to look for. Can you elaborate on the GUID and subdirectory method you mentioned in your previous post?


I have had success with the following approach that works for the gallery:

1) Put all of your workflows in the same directory. So if Workflow 1 kicks off workflow 2 which kicks off workflow 3, save them all to the same folder.

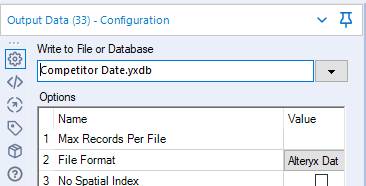

2) Have Workflow 1 write its outputs to relative paths for use in Workflow 2. For example, Workflow 1 can output to file1.yxdb, which Workflow 2 will then use. Since no folder is specified, the file is saved in the same directory as the workflow.

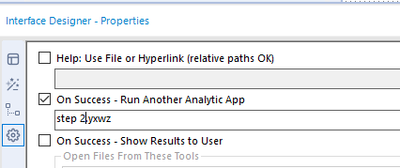

3) In the Interface Designer settings, type in the file name of your second workflow. Again, no folder means it's a relative path.

4) In your second workflow, have any Input or Interface tools that get data from your first workflow use a relative reference, just like in step 2. You only want the file name.

5) When you save Workflow 1 to the Gallery, be sure to check the box for your successive workflows under Workflow Options > Manage Workflow Assets. I don't think it matters whether you check the box for your relative outputs.

This works because when Alteryx runs the workflow in the Gallery, it spins up each instance in a separate temp folder, so there is no chance of concurrent users tripping over each other. And all of your steps run from the same temp folder, so they can all read and write to it.
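The reasoning in this post, that each Gallery run gets its own temp folder and bare file names resolve inside it, can be modeled in a short Python sketch. It is a simulation under stated assumptions, not how Alteryx is implemented: `tempfile.TemporaryDirectory` stands in for the per-run temp folder, threads stand in for concurrent users, and the file is written as plain text even though it keeps the `file1.yxdb` name from step 2.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

HANDOFF = "file1.yxdb"  # bare file name from step 2: no folder, so it is relative


def workflow1(run_dir: Path, user_data: str) -> None:
    # The relative output resolves inside this run's own folder.
    # (Plain text here; a real .yxdb is a binary Alteryx format.)
    (run_dir / HANDOFF).write_text(user_data, encoding="utf-8")


def workflow2(run_dir: Path) -> str:
    # The same bare name finds this run's file, never another user's.
    return (run_dir / HANDOFF).read_text(encoding="utf-8")


def run_chain(user_data: str) -> str:
    # Stand-in for the Gallery staging each run in a separate temp folder.
    with tempfile.TemporaryDirectory() as tmp:
        run_dir = Path(tmp)
        workflow1(run_dir, user_data)
        return workflow2(run_dir)


if __name__ == "__main__":
    # Three "users" run the chain at the same time; map() keeps input order.
    with ThreadPoolExecutor(max_workers=3) as pool:
        print(list(pool.map(run_chain, ["user1", "user2", "user3"])))
        # ['user1', 'user2', 'user3']
```

The design choice being modeled is isolation by location: because no run ever names another run's folder, there is nothing to lock or coordinate.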

Hope that helps!


@patrick_digan I will give this a try. Thank you for the detailed description!


I'd like to add a plug for my new idea: https://community.alteryx.com/t5/Alteryx-Designer-Ideas/Chained-App-User-Variables/idi-p/783758

This would be much simpler than the other approaches explained here.

I'm not certain that all workflows in a chain run on the same worker server; if they don't, the relative file directory solution breaks.

Also, I like having the passed data available on a shared drive so I can audit, test, and debug more easily.

Right now I'm getting __cloudUserid in every workflow in the chain to build workspace directory names.

I wish we could get the __cloudUserid only in the first app of a chain and add a timestamp to it, giving a unique, meaningful subdirectory name that could be passed to the next step in the chain. So I posted the idea and am hoping for the best.
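A minimal sketch of the naming scheme wished for here: build the folder name once, from the cloud user ID plus a UTC timestamp, so later steps could reuse it. The share path and ID format below are hypothetical, and passing the name to the next app is exactly the part the linked idea asks for, so it is not shown. `PureWindowsPath` only builds the path string; nothing is created on disk.

```python
from datetime import datetime, timezone
from pathlib import PureWindowsPath


def workspace_name(cloud_user_id: str, started_at: datetime) -> str:
    """Return "<user id>_<UTC timestamp>" for one run of the chain."""
    # Keep only letters and digits so the ID is safe inside a folder name.
    safe_id = "".join(ch for ch in cloud_user_id if ch.isalnum())
    # Microsecond precision makes two runs by the same user unlikely to collide.
    stamp = started_at.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%S%f")
    return f"{safe_id}_{stamp}"


def workspace_path(share_root: str, cloud_user_id: str, started_at: datetime) -> PureWindowsPath:
    # Join the (hypothetical) share root with the per-run folder name.
    return PureWindowsPath(share_root) / workspace_name(cloud_user_id, started_at)


if __name__ == "__main__":
    # Computed once, in the first app of the chain (hypothetical values).
    path = workspace_path(r"\\fileserver\alteryx_chains", "5f3a9c", datetime.now(timezone.utc))
    print(path)
```

A timestamp alone is readable but not guaranteed unique; appending a GUID, as suggested earlier in the thread, would remove that residual risk.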
