Alteryx Designer Desktop Discussions

Workflow Optimisation - Searching Transcripts for Keywords

Hi there!

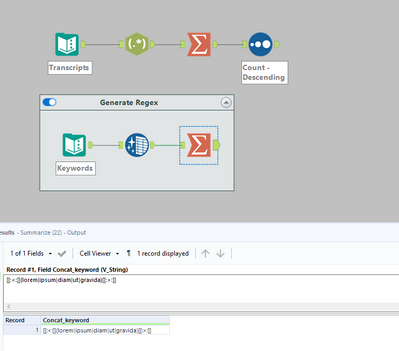

I've built a workflow which searches transcripts for certain keywords (I've attached a watered-down example to show my process).

The workflow works perfectly. However, we've now reached almost a million transcripts, and there are probably more keywords we'd like to search for going forward.

Does anyone have any suggestions on how to optimise/improve this workflow so it won't take a massive amount of processing power/time to run?

Thanks in advance :)

- Labels: Optimization, Transformation, Workflow

Hey @CHarrison,

Very interesting problem; it would make a great weekly challenge!

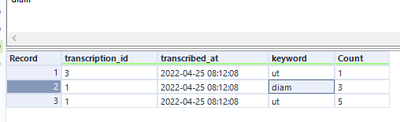

I think Find and Replace is more efficient, as you don't append every row to every other row. I also think your initial approach was miscounting. For transcript ID 1, by my count there are 5 "ut"s, not 4. Likewise, "Diams" should be 3, not 2.

I think the punctuation throws it off. You could use a data cleaning tool to remove punctuation before processing to solve this, though.
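For anyone who wants to see the punctuation effect outside Alteryx, here is a minimal Python sketch. It assumes a matcher that splits on whitespace and compares whole words; the sample text and the `count_keyword` helper are made up for illustration and are not taken from the attached workflow.

```python
import string


def count_keyword(text, keyword, strip_punctuation):
    """Count whole-word, case-insensitive matches of keyword in text."""
    words = text.lower().split()
    if strip_punctuation:
        # Trim leading/trailing punctuation so "ut," and "(ut)." become "ut".
        words = [w.strip(string.punctuation) for w in words]
    return sum(1 for w in words if w == keyword.lower())


# Hypothetical sample text, not from the real transcripts.
sample = "Ut enim, ut. Labore ut, dolore ut aliqua (ut)."

raw_count = count_keyword(sample, "ut", strip_punctuation=False)
clean_count = count_keyword(sample, "ut", strip_punctuation=True)
print(raw_count, clean_count)  # prints: 2 5
```

Words with punctuation attached are missed until the punctuation is removed, which is the same kind of undercount described above.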

Any questions, issues or adjustments please ask :)

HTH!

Ira

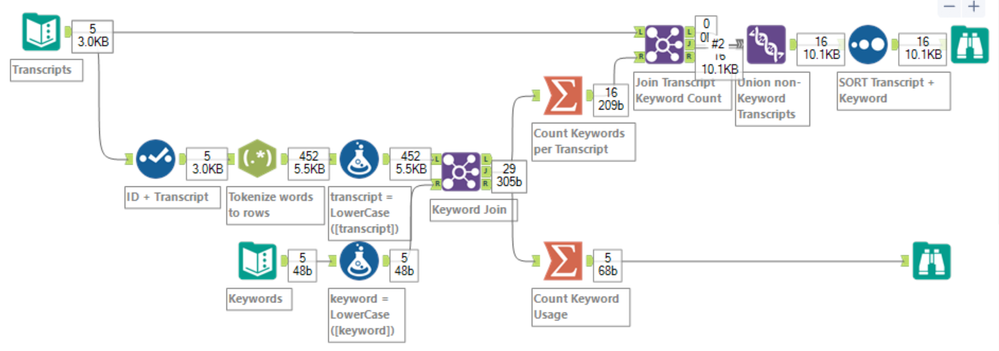

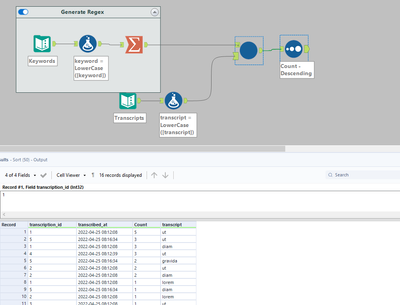

Here's yet another approach. While I agree that the Find & Replace tool is an option, you might consider the JOIN tool. I've got AMP turned on for this workflow (@TonyaS and @jarrod as well as @NicoleJ will be proud of me), and millions of records won't be an issue for you. Essentially, I use a Select to remove all unnecessary data and then use the RegEx tool to TOKENIZE (turn each word into its own record). We can then count all occurrences of each word that matches one of the Keywords. The match is exact, which is why the LOWERCASE function is used. If you don't set the CASE, your results will vary.

Now you can create metrics for matched keywords. I counted matches (ignored unmatched) and gave unique counts of transcripts plus the total occurrences. For each transcript I have counts for each unique keyword.

This is a case where I think that optimisation is in the eye of the beholder. Clarity of the workflow and ease of updates are important. I think that you might also want to SUMMARIZE each of the tokenized words and count their usage. Word counts from the transcripts might also have value for you.
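The tokenize, join, and summarize steps described above can be sketched in Python roughly as follows. This is only an analogy to the Alteryx tools, not the workflow itself; the transcript IDs, text, keywords, and the `tokenize` helper are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical sample data; IDs, text, and keywords are illustrative only.
transcripts = {
    1: "Lorem ipsum dolor sit amet. Dolor ut labore, ut aliqua.",
    2: "Ut enim ad minim veniam; lorem nostrud.",
    3: "Nothing relevant here.",
}
keywords = {"lorem", "dolor", "ut"}


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


# Tokenize each transcript, keep only tokens that match a keyword
# (the "join"), and count occurrences per (transcript, keyword) pair.
per_transcript = Counter(
    (tid, token)
    for tid, text in transcripts.items()
    for token in tokenize(text)
    if token in keywords
)

# Summarize: total occurrences and number of distinct transcripts per keyword.
total_occurrences = Counter()
transcripts_with_keyword = Counter()
for (tid, kw), n in per_transcript.items():
    total_occurrences[kw] += n
    transcripts_with_keyword[kw] += 1

# Optional extra metric: usage count of every tokenized word.
word_counts = Counter(
    token for text in transcripts.values() for token in tokenize(text)
)

print(dict(total_occurrences))
print(dict(transcripts_with_keyword))
```

Lowercasing happens inside `tokenize`, so the keyword set must be lowercase too. That mirrors the point about setting the case before joining.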

Cheers,

Mark

Chaos reigns within. Repent, reflect and restart. Order shall return.

Please subscribe to my YouTube channel.

Ah, tokenize each word in the transcript and join! Awesome solution @MarqueeCrew. I don't want to admit it, but it's possibly a tad better than mine XD Though I do wonder how much tokenizing every word in the transcript would add to the computational cost? I don't know if there is a good way to do a performance analysis in Alteryx. It could be a good idea suggestion if there's not one.

@IraWatt,

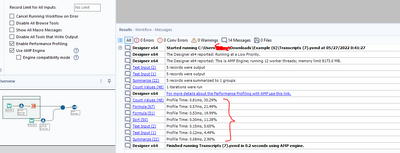

In the runtime settings you can look at performance tuning. But if you AMP the workflow, it runs so fast that I'd be surprised if the tuning amounts to anything. I think in this case you can afford the "cost" of tokenizing the transcripts in exchange for the simplification of the workflow. I also think that this approach gives better metrics.

Cheers,

Mark

Hey @CHarrison,

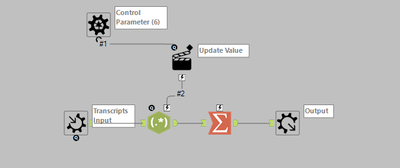

I think this is the most efficient and possibly the simplest way. The regex generated from the keywords lets you tokenise just the keywords. You could just put this in a macro and you're sorted!

The generated regex makes sure that each match is the full keyword and not part of a longer word (adjacent punctuation is allowed)!
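The exact pattern the macro generates isn't shown in the thread, so the following Python sketch is only a guess at the idea: build one alternation from the keyword list and wrap it in word boundaries so that only whole keywords match. The `build_keyword_pattern` helper and the sample text are hypothetical, and it assumes the keywords are plain words.

```python
import re
from collections import Counter


def build_keyword_pattern(keywords):
    """Build one case-insensitive regex that matches any whole keyword."""
    # re.escape guards against keywords that contain regex metacharacters.
    alternatives = "|".join(re.escape(k) for k in keywords)
    # \b on both sides rejects matches inside longer words, while still
    # allowing punctuation directly next to the keyword.
    return re.compile(r"\b(?:" + alternatives + r")\b", re.IGNORECASE)


keywords = ["ut", "dolor", "lorem"]  # hypothetical keyword list
pattern = build_keyword_pattern(keywords)

text = "Ut labore, ut; utility dolor. (Lorem) dolore ut!"
counts = Counter(m.group(0).lower() for m in pattern.finditer(text))
print(dict(counts))
```

Here "utility" and "dolore" are skipped because the keyword is followed by another letter, while "ut;", "(Lorem)" and "ut!" still count. Only the keyword hits become records, which avoids tokenizing every word in the transcript.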

Any questions or issues please ask :)

HTH!

Ira

I've attached the example macro workflow:

Hopefully it should be super efficient. Like @MarqueeCrew said, you should be able to compare the workflows' efficiency here:

You'll have to tell us which is the fastest on your real data, @CHarrison!
