Alteryx Designer Desktop Discussions

Get Weather Data via API

Need to pull weather data into Alteryx? Weather data can be helpful context in many situations: sports predictions, supply chain analysis, flight scheduling, maintenance on parts that can't be outside in extreme weather, and more.

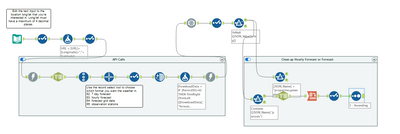

Workflow overview:

This workflow pulls weather data/forecasts from the National Weather Service and transforms it into usable data. This should be considered a starting file for weather data, which can be transformed into a format that is most helpful to you, such as an analytic app or a macro.

Workflow instructions:

1. Edit the text input tool

   - Fill in the longitude and latitude of the location you want weather data for

   - Optional: Change the "user agent" to an email address if you want to receive any support emails for troubleshooting purposes in case their service goes down or changes in the future. See website at end of this post for more information.

2. Use the "record select tool" to specify what type of forecast you want.

   - Select 62 for a 7 day forecast

   - Select 63 for an hourly forecast

   - Select 64 for forecast grid data (not parsed in the workflow)

   - Select 65 for observation stations (not parsed in the workflow)

3. Run the workflow.

If you selected 62 or 63 in the record select tool, your data will be cleaned and provided in the "clean up hourly forecast or forecast" container. If you selected 64 or 65 in the record select tool, your data will be provided in the "true" anchor of the filter tool up top.

Workflow details for configuration and editing:

The National Weather Service's API has to be called twice in order to get the data you want. The first API call tells the website which location you want to pull weather data for. The second API call tells the website what kind of weather data you're looking for (step 2 of the workflow instructions).

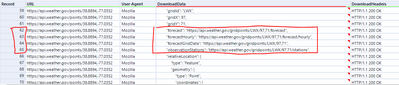
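For anyone who wants to see the two-call pattern outside of Alteryx, here is a minimal Python sketch. It is not taken from the workflow itself. The `/points/{latitude},{longitude}` endpoint and the property names in `FORECAST_KEYS` are my reading of the public weather.gov API, and the short labels (`"7day"`, `"hourly"`, and so on) plus all function names are made up for illustration.

```python
import json
import urllib.request

API_ROOT = "https://api.weather.gov"

# Assumed property names in the first (points) response, matched to the
# record numbers used in the post. The short labels are hypothetical.
FORECAST_KEYS = {
    "7day": "forecast",                 # record 62 in the workflow
    "hourly": "forecastHourly",         # record 63
    "grid": "forecastGridData",         # record 64
    "stations": "observationStations",  # record 65
}


def points_url(latitude, longitude):
    """Build the URL for the first call, which identifies the location."""
    # Rounded to four decimals as a precaution against overly long coordinates.
    return f"{API_ROOT}/points/{round(latitude, 4)},{round(longitude, 4)}"


def forecast_url(points_response, kind):
    """Pick the URL for the second call out of the parsed first response."""
    return points_response["properties"][FORECAST_KEYS[kind]]


def fetch_json(url, user_agent):
    """GET a URL with a User-Agent header and parse the JSON body."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


def get_weather(latitude, longitude, kind, user_agent):
    """First call: look up the location. Second call: fetch the chosen data."""
    points = fetch_json(points_url(latitude, longitude), user_agent)
    return fetch_json(forecast_url(points, kind), user_agent)
```

Calling `get_weather(39.7456, -97.0892, "hourly", "you@example.com")` would make both requests in sequence; the coordinates and email address here are placeholders.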

The first Download tool pulls information for the specified location. In the output of the "text to columns" tool after the first API call, records 62-65 hold the URL options, one of which needs to be fed into the second API call to get the weather data. Screenshot of output shown below:

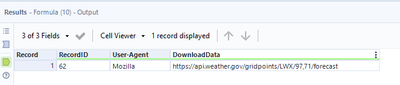

The next few tools pull out the desired record and strip the data down to just the URL. Screenshot of output shown below:

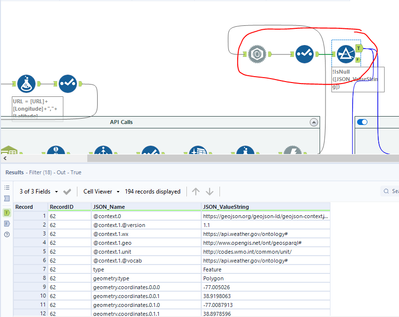
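The same "pick one record, keep only the URL" step can be sketched in Python as below. This is an illustration, not the workflow's actual logic: it assumes the first response arrives as text with one field per line, and it selects the record by its field name rather than by position, which is a swap I made so the sketch does not depend on records 62-65 staying in the same place. The function names are hypothetical.

```python
import re

# Matches a URL up to the next whitespace or closing quote.
URL_PATTERN = re.compile(r'https?://[^\s"]+')


def extract_url(record):
    """Strip a single record down to just the URL, or None if it has none."""
    match = URL_PATTERN.search(record)
    return match.group(0) if match else None


def select_url(raw_text, key):
    """Split the response into records and return the URL for one field."""
    for record in raw_text.splitlines():
        # The closing quote keeps "forecast" from matching "forecastHourly".
        if f'"{key}"' in record:
            return extract_url(record)
    return None
```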

The second Download tool calls the desired URL to pull down the actual data. The next three tools clean up the received data and filter out the nulls.
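As a rough Python equivalent of this cleanup, the sketch below flattens the downloaded JSON into name/value rows and drops the nulls. The dotted row names and the function names are my own choices for illustration; the workflow's tools may lay the data out differently.

```python
import json


def flatten(value, prefix=""):
    """Yield (dotted_name, leaf_value) pairs for every leaf in nested JSON."""
    if isinstance(value, dict):
        for key, child in value.items():
            yield from flatten(child, f"{prefix}.{key}" if prefix else key)
    elif isinstance(value, list):
        for index, child in enumerate(value):
            yield from flatten(child, f"{prefix}.{index}" if prefix else str(index))
    else:
        yield prefix, value


def non_null_rows(raw_text):
    """Parse the response text and keep only the rows that have a value."""
    return [(name, value) for name, value in flatten(json.loads(raw_text))
            if value is not None]
```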

This is as far as I bothered to clean up the data for options 64 and 65 (forecast grid data and observation stations). However, if 62 or 63 were selected, I continued the data cleanup process in the "clean up hourly forecast or forecast" container.

The top filter tool in this container contains the timestamp of when the data was generated. This is helpful to ensure your data is up to date.
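If you want to act on that timestamp rather than just look at it, a freshness check could look something like this in Python. The `generatedAt` field name is my assumption about where the forecast response keeps its generation time, and the six-hour threshold is an arbitrary example value.

```python
from datetime import datetime, timedelta, timezone


def forecast_age(forecast, now=None):
    """Return how long ago the forecast was generated."""
    stamp = forecast["properties"]["generatedAt"]  # assumed field name
    # Older Python versions cannot parse a trailing "Z", so normalize it.
    generated = datetime.fromisoformat(stamp.replace("Z", "+00:00"))
    now = now or datetime.now(timezone.utc)
    return now - generated


def is_fresh(forecast, max_age=timedelta(hours=6), now=None):
    """True when the forecast is no older than max_age."""
    return forecast_age(forecast, now) <= max_age
```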

The bottom filter tool, along with everything after, cleans out all the metadata included with the data pull, and cleans up the titles to a more usable format.
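A comparable cleanup in Python might keep only the forecast periods and tidy the field names into readable titles. This is a sketch under the assumption that the forecast periods sit under `properties.periods` with camelCase field names such as `shortForecast`; the function names are hypothetical.

```python
import re


def clean_title(name):
    """Turn a camelCase field name such as 'shortForecast' into 'Short Forecast'."""
    spaced = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", " ", name)
    return spaced[:1].upper() + spaced[1:]


def periods_to_rows(forecast):
    """Drop the surrounding metadata and return one cleaned row per period."""
    return [
        {clean_title(key): value for key, value in period.items()}
        for period in forecast["properties"]["periods"]
    ]
```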

Troubleshooting:

weather.gov FAQ and API guide: https://weather-gov.github.io/api/general-faqs#how-to-get-forecast

Wow that's a very good workflow!

Can I make a Macro out of it?

Absolutely! My hope for this workflow is that it's easily adaptable to fit wherever it needs to fit, whether that be a macro or any other format.

Nice work @christina-billman!
