Alteryx Designer Desktop Discussions

EXASOL upload and write speed issues

Hi,

I have been having problems with write speed to Exasol, through both the standard Output tool and the In-DB output tools.

1) The standard Output tool struggles when I upload entire data sets: what takes minutes to upload through the Exasol client takes an hour or more in Alteryx. Has anyone encountered this problem and found a solution? Currently I have to either exit Alteryx and write SQL to upload, or wait hours for the data to load.

2) Once the data is in Exasol, I see a steep performance drop in the write tool. This seems to occur once the width of the data (in number of columns) or the workflow complexity exceeds a critical point. I have already minimised Browse tools and field sizes, so any further advice on improving performance would be appreciated, as the write tool currently makes up 90-95% of run time (although my main concern is the standard Output tool, not In-DB).

Thank you!

Labels: Database Connection, In Database, Output

Hi Niklas

It sounds like you are hitting the limitations of Alteryx's input/output tools - they do not (yet) use Exasol's powerful IMPORT/EXPORT functionality.

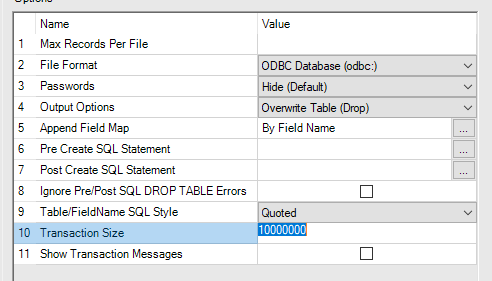

One thing I'd definitely recommend first is changing the transaction size. This defines the batch size for each database commit, and in the case of Exasol it can be increased dramatically, ideally so that there is only one commit.
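To make the effect of transaction size concrete, here is a rough sketch of how the number of commits falls as the batch grows. The row count and batch sizes are made-up illustrative figures, not measurements from this thread, and `commit_count` is a hypothetical helper, not an Alteryx or Exasol function.

```python
def commit_count(total_rows: int, transaction_size: int) -> int:
    """Commits needed when each commit covers at most transaction_size rows."""
    if total_rows < 0 or transaction_size <= 0:
        raise ValueError("total_rows must be >= 0 and transaction_size must be > 0")
    # Integer ceiling division: a partially filled final batch still needs a commit.
    return (total_rows + transaction_size - 1) // transaction_size


if __name__ == "__main__":
    total_rows = 5_000_000  # hypothetical data set size
    for size in (1_000, 100_000, 5_000_000):
        print(f"transaction size {size:>9,}: {commit_count(total_rows, size):,} commit(s)")
```

Setting the transaction size at or above the row count is what brings this down to a single commit.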

As a longer-term solution, there is a slightly more convoluted approach which I've been trying to build. Exasol's JDBC download includes an executable called exajloader. It takes a CSV file (ideally zipped!), transfers the data in compressed form (rather than as individual free-text SQL statements), then imports it locally into a table in the database. Within Alteryx you can call it using the Run Command tool. Whilst this does require a temp file to be created, you'll get much better load performance if you can get it to work.
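As a rough illustration of the temp-file step described above, the sketch below writes rows to a gzip-compressed CSV and builds an Exasol `IMPORT ... FROM LOCAL CSV FILE` statement for it. It is only a sketch under assumptions: the function names, sample data and table name are hypothetical, the statement follows the general shape of Exasol's documented IMPORT syntax, and how the statement is handed to exajloader (its command-line options) is not covered in this thread, so it is deliberately left out.

```python
import csv
import gzip
import os
import tempfile
from pathlib import Path


def write_gzipped_csv(rows, header, path):
    """Write a header plus data rows to a gzip-compressed CSV file."""
    path = Path(path)
    with gzip.open(path, "wt", newline="", encoding="utf-8") as fh:
        writer = csv.writer(fh)
        writer.writerow(header)
        writer.writerows(rows)
    return path


def build_import_statement(qualified_table, csv_path):
    """Build an IMPORT statement for a local CSV file (assumed syntax, see lead-in)."""
    # Use forward slashes and double any single quote so the path is a valid SQL literal.
    literal = Path(csv_path).as_posix().replace("'", "''")
    return (
        f"IMPORT INTO {qualified_table} "
        f"FROM LOCAL CSV FILE '{literal}' "
        "COLUMN SEPARATOR = ',' SKIP = 1"  # SKIP = 1 skips the header row
    )


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        # Hypothetical sample data and target table.
        target = os.path.join(tmp, "postcodes.csv.gz")
        gz_path = write_gzipped_csv([["AB1 2CD"], ["EF3 4GH"]], ["POSTCODE"], target)
        print(build_import_statement("MY_SCHEMA.POSTCODES", gz_path))
```

The target table is assumed to exist already with matching columns; whether the attached macro creates it for you is not stated in this thread.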

I'll try and come up with a cleaner version of my macro, but what I've attached will hopefully give you an idea of how I envisage the process running.

I should also add, we are working with Alteryx to try and improve this functionality - because this isn't the experience anyone should have with Exasol.

@reevery Thanks very much for answering the question and sharing the macro. I am trying to use it, but as a newbie to databases (I only started to consider using a database when I was introduced to Exasol 2 weeks ago), I am struggling to get the macro working.

I have some questions and would very much appreciate some advice:

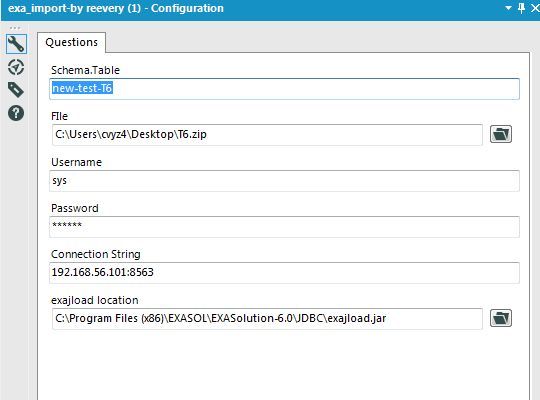

1. The picture shows my input setup. I have zipped a CSV file as suggested and launched Exasol from VirtualBox. Is there anything wrong with the configuration, especially the Schema.Table field?

2. It seems the data schema is generated inside the macro. Is that right?

3. The batch macro needs some input as a control parameter, but I am not sure what to feed into it. Would you mind sharing more information?

Finally, I am looking forward to the updates in Alteryx to better support Exasol.

@reevery Thank you for this. I'm happy to see that you are working on this and the macro is a nice way around it for now.

@steven4320555 Steven, you are meant to enter both the schema and the table in the first input, e.g. ALL_SALES.SALES_DATA_20170929 if your schema is called ALL_SALES and your table is called SALES_DATA_20170929.
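For illustration, the SCHEMA.TABLE convention described above can be checked with a small helper like the one below. This is just a sketch: `split_qualified_name` is a hypothetical function, not part of the macro, and it assumes plain unquoted identifiers with exactly one dot.

```python
def split_qualified_name(name: str) -> tuple[str, str]:
    """Split 'SCHEMA.TABLE' into (schema, table); reject anything else."""
    schema, sep, table = name.strip().partition(".")
    # Require exactly one dot with a non-empty name on each side.
    if not sep or not schema or not table or "." in table:
        raise ValueError(f"expected SCHEMA.TABLE, got {name!r}")
    return schema, table


if __name__ == "__main__":
    schema, table = split_qualified_name("ALL_SALES.SALES_DATA_20170929")
    print(f"schema={schema}, table={table}")
```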

@Niklas Hi Niklas. Thanks very much for the reply.

I have to admit that I currently lack the fundamental knowledge needed to operate a database. It seems I don't need to create a schema and a table inside Exasol before I use the macro to import data into the database, do I?

Also, the batch macro needs some control input: what sort of data should be connected to it? Currently I have just typed 1 in the Text Input, so the macro runs only one iteration.

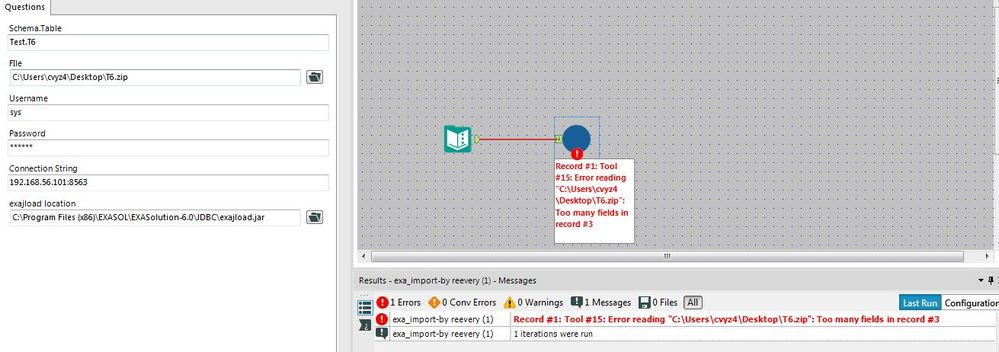

The file inside the zip file is just a single column of postcodes. I entered a new schema and table name, but I got the following error:

Do you have any idea what is wrong with my setup?

Many thanks

Steven
