Alteryx Designer Desktop Discussions

Find answers, ask questions, and share expertise about Alteryx Designer Desktop and Intelligence Suite.- Community

- :

- Community

- :

- Participate

- :

- Discussions

- :

- Designer Desktop

- :

- Re: Comparing Data from Two Sets of Data, Calling ...

Comparing Data from Two Sets of Data, Calling out Differences

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

I need help comparing an old set of data to a new set of data and creating a list of all changes. The problem here, is there is about 15 columns and every column could potentially change in the update.

Any help is appreciated. I have attached one sample document, this would just be updated monthly with updates or changes to the columns.

Thank you!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

You could try doing a Union of both data sets, followed by a Unique tool that looks at all 15 columns to sort records into "Unique" and "Duplicate". Where a record shows up in "Duplicates" you know it has a mate in "Unique" where nothing changed in the 15 columns. If a record is in "Unique", then it's either A) new or B) different/changed from one data set to the other, assuming the newer data set does not include any deleted records. Do you have a unique key that does not change for these records? Might want to add new column for "data source" before the Union, and don't include that column in the Unique. Maybe something like that. Hope that's somewhat clear :)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

@egorman3 I think transposing the data would work. I've included the start of a workflow, along with the sample excel file where I created a new tab to simulate new data (I changed several data points, plus added and removed fields.) You will need to determine what your key field(s) is(are) to compare from one dataset to the next. I've assumed it's the project name for the time being. Then you transpose the rest of your data (which will dynamically take new columns). Then you can join the data to the new data set and see what differences exist. You can play aronund with the data at the end (perhaps a crosstab) if you want your final output to look different.

Hopefully this gets you started!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

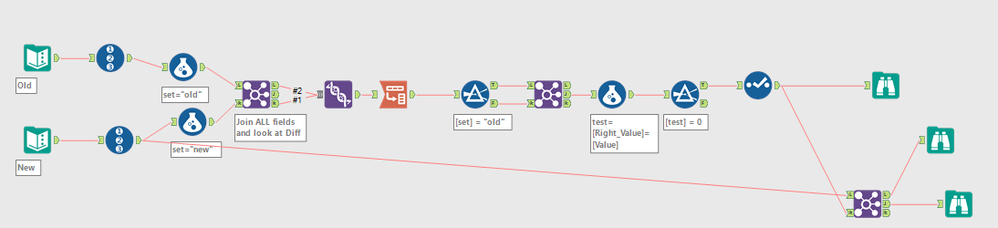

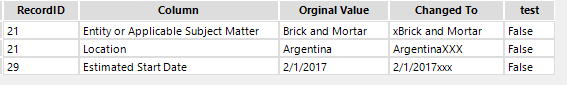

Here is my version - Join all fields(assumes they are same each time*) and look at differences and report out what changed.

*If the new data set could have new columns, then you could use the field tool to get column names and then update the join xml in a macro to handle this case. Would require you to add missing columns with NULLs to original data source with a similar approach and then flagging records/columns that have new columns and data in those columns.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

How easy would it be to adjust this if I don't need a Record ID? I have HR data that includes a unique identified # for each employee. I think I fixed it to use that instead.

However, how do I account if one of the files has that ID # and the file doesn't or vice versa?

Adam

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

I found that a combination of the suggested approaches worked best for me to improve performance.

I was comparing very large data sets with many columns.Transpose and compare when data sets are very large result in millions of rows being checked that are identical. I found it essential to rule out as many identical rows as possible as quickly as possible.

I used the following approach (I'm sure it could be improved further):

- Make sure that each data source has an data set identifier column, add it if not (e.g. a column called "Data Set" with "Data set 1" or "Data set 2"). My data also has a row ID which I created by concatenating some key fields that I know will be the same between data sets (this is needed for the transpose stage).

- Union the two data sets

- Unique tool (select all column except data set identifier which will be different)

- Join Unique and Duplicate outputs, take only the left output (this is removing all of the rows that are identical from the unique output)

- You are now left with two rows for each row, so it's time to find out what is different. Use a filter tool to split "Data set 1" from "Data set 2".

- Transpose the data for both data set 1 and 2, group by Data set ID and Row ID. You will now have one row per field for each of the two data sets. "Name" will be the field name, and "Value" will be the value to compare.

- Now join the data sets based on Data Set ID, row ID, and "Name" (which is the field name). You will now have for every single row a column for the value from the first data set, and a column for the value from the second data set.

- Now compare them using a formula or a filter!

Props to @gc for suggesting unique tool (quick way to rule out a lot of calculations) and @patrick_digan and @anthony for discussion around transpose/join. Thanks!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Hi - Would appreciate if anyone can help. So i tried this below example and it works good and helped partly for my requirement, However, this does not find out newly inserted rows ? for example . I have old and new flow .

old is my Table1 , new is table 2. which has exact same columns , i need to find if any records got inserted or updated or deleted into table 1 and make those updates into table 2. Any suggestions to perform this ? totally new and have been trying multiple approaches.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Sounds like you need to use join tool to find newly inserted or deleted rows. Have you checked out this blog?

https://community.alteryx.com/t5/Engine-Works-Blog/Compare-2-Data-Sets/ba-p/88853

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Hi - Yes i checked it out. i need to compare 2 data sources and then find out the insert, updated and deleted rows in source 1 and sync that up with source 2. I could acheive that with below tools, by adding the final output tool using the update ,insert new property and this works good.

Im stuck with delete flow. Could some one help with identifying deletes and which tool to use ? i read about delete options, looks there are not much.

thanks a lot !

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Notify Moderator

Hi @SamDrury,

I was using your approach till Step 4.

However, when I was looking at my data on Left output, it was showing 2 sets of unique rows.

And I was unable to identify the differences on this Left output.

Does 'Unique' tool has any limitations as I was comparing double data?

Please advise.

Thanks and Regards

-

Academy

6 -

ADAPT

2 -

Adobe

204 -

Advent of Code

3 -

Alias Manager

78 -

Alteryx Copilot

25 -

Alteryx Designer

7 -

Alteryx Editions

94 -

Alteryx Practice

20 -

Amazon S3

149 -

AMP Engine

252 -

Announcement

1 -

API

1,208 -

App Builder

116 -

Apps

1,360 -

Assets | Wealth Management

1 -

Basic Creator

14 -

Batch Macro

1,558 -

Behavior Analysis

246 -

Best Practices

2,693 -

Bug

719 -

Bugs & Issues

1 -

Calgary

67 -

CASS

53 -

Chained App

267 -

Common Use Cases

3,822 -

Community

26 -

Computer Vision

85 -

Connectors

1,426 -

Conversation Starter

3 -

COVID-19

1 -

Custom Formula Function

1 -

Custom Tools

1,936 -

Data

1 -

Data Challenge

10 -

Data Investigation

3,486 -

Data Science

3 -

Database Connection

2,220 -

Datasets

5,220 -

Date Time

3,227 -

Demographic Analysis

186 -

Designer Cloud

740 -

Developer

4,367 -

Developer Tools

3,528 -

Documentation

526 -

Download

1,037 -

Dynamic Processing

2,937 -

Email

927 -

Engine

145 -

Enterprise (Edition)

1 -

Error Message

2,256 -

Events

198 -

Expression

1,868 -

Financial Services

1 -

Full Creator

2 -

Fun

2 -

Fuzzy Match

711 -

Gallery

666 -

GenAI Tools

3 -

General

2 -

Google Analytics

155 -

Help

4,705 -

In Database

966 -

Input

4,291 -

Installation

360 -

Interface Tools

1,900 -

Iterative Macro

1,094 -

Join

1,957 -

Licensing

252 -

Location Optimizer

60 -

Machine Learning

259 -

Macros

2,862 -

Marketo

12 -

Marketplace

23 -

MongoDB

82 -

Off-Topic

5 -

Optimization

750 -

Output

5,252 -

Parse

2,327 -

Power BI

228 -

Predictive Analysis

936 -

Preparation

5,166 -

Prescriptive Analytics

205 -

Professional (Edition)

4 -

Publish

257 -

Python

854 -

Qlik

39 -

Question

1 -

Questions

2 -

R Tool

476 -

Regex

2,339 -

Reporting

2,430 -

Resource

1 -

Run Command

575 -

Salesforce

276 -

Scheduler

411 -

Search Feedback

3 -

Server

629 -

Settings

933 -

Setup & Configuration

3 -

Sharepoint

626 -

Spatial Analysis

599 -

Starter (Edition)

1 -

Tableau

512 -

Tax & Audit

1 -

Text Mining

468 -

Thursday Thought

4 -

Time Series

431 -

Tips and Tricks

4,187 -

Topic of Interest

1,126 -

Transformation

3,726 -

Twitter

23 -

Udacity

84 -

Updates

1 -

Viewer

3 -

Workflow

9,973

- « Previous

- Next »