Alteryx Designer Desktop Discussions

Find answers, ask questions, and share expertise about Alteryx Designer Desktop and Intelligence Suite.

Can you execute generic SQL commands?

Hi, I'm searching for a way to simply execute generic SQL commands against a server. Specifically, I'd like to execute some stored procedures on the server after loading data into staging tables using Alteryx.

I know you can use custom SQL as part of data input connectors, but I'm just looking for a way to run other SQL statements against a server such as exec and delete statements, etc.

- Labels: Database Connection, Input, Output

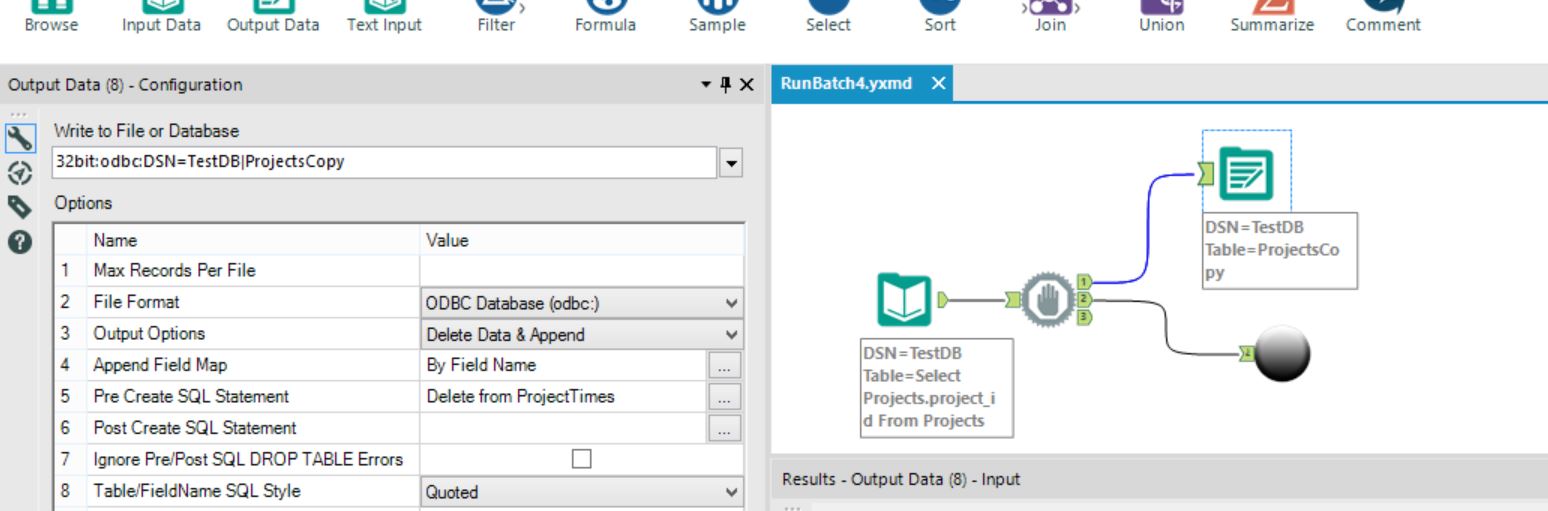

I should mention that the batch target gets cleared once, before the 100 tables are appended to it.

A couple of questions...

- What is the purpose of the single table where you aggregate all of the data? Not sure I understand since it sounds like you are ultimately splitting the data up into multiple tables in the final target database? Is it just a "staging" table for all of your data?

- Could you share a screenshot of what is going on inside the batch macro?

I'm asking because, based on what I currently understand of your setup, I think you could eliminate the "staging" table where you accumulate all of the data and just use a Dynamic Input with an Output that separates into the individual tables. That could eliminate the batch process altogether (or at least let you put the Dynamic Input and Output into a batch macro).

No, I am obviously not explaining it well, sorry.

The target never gets split; it is the final table for analysis in Tableau (for instance).

Think of it like this (this isn't what's happening, but it is similar): you have 100 stores, they all have identical spreadsheets, and each has to do a budget for you.

You take all 100 Excel budgets and load them into a single table to analyse all stores against each other.

Well, or maybe I'm just "thick".

So since in your screenshot there is no Output from the batch macro, I assume that you are writing to an Output within the macro with an Output Option of "Append Existing"?

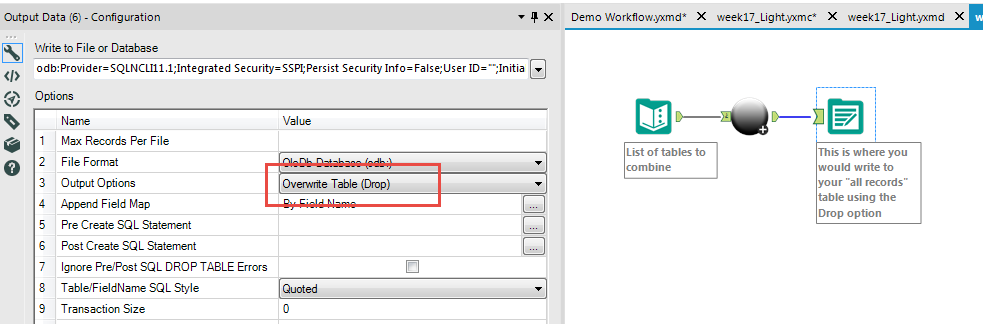

Since a batch macro essentially "unions" all of the records anyway, could you not bring the Output outside of the macro (replace it inside the macro with a Macro Output tool) with the Output Option of "Overwrite Table (Drop)" and just let Alteryx write out to the single table?

Just an aside: you could use sqldf (or maybe RODBC) in the R Command tool if you wanted to simply execute some SQL.

You could use that (I believe) if you wanted to do something like drop a table.
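
As a rough illustration of the idea above (running standalone statements such as a DELETE or DROP from a script step), here is a minimal Python sketch. It uses the standard-library `sqlite3` module with an in-memory database as a stand-in for the real server; the table name `staging_budget` and the helper `run_statements` are hypothetical. Against an actual server you would swap in an appropriate driver, and a stored procedure call would use that server's own EXEC/CALL syntax, which SQLite does not support.

```python
import sqlite3

def run_statements(conn, statements):
    """Execute each SQL statement in order and commit once at the end."""
    cur = conn.cursor()
    for sql in statements:
        cur.execute(sql)
    conn.commit()

# In-memory SQLite database standing in for the real server (assumption).
conn = sqlite3.connect(":memory:")

# Hypothetical staging table, loaded and then cleaned up with plain SQL.
run_statements(conn, [
    "CREATE TABLE staging_budget (store_id INTEGER, amount REAL)",
    "INSERT INTO staging_budget VALUES (1, 100.0), (2, 250.0)",
    "DELETE FROM staging_budget WHERE store_id = 1",
])
remaining = conn.execute("SELECT store_id, amount FROM staging_budget").fetchall()
print(remaining)

# Dropping the table is just another statement.
run_statements(conn, ["DROP TABLE staging_budget"])
tables = conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'").fetchall()
print(tables)
```

The point is only that the statements are sent as-is, with no input or output data stream attached to them.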

Also, you obviously have the loading stuff working inside the batch macro, but you might want to check out using the Dynamic Input tool. That is what I would typically use for the example of having 100 tables I want to combine into one. I would feed the list of table names into the Dynamic Input and modify the SQL Query in it so that it changes the query string for each record coming in. (It basically functions as a batch process this way.) I would then just attach an Output tool with the Drop option.
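
To make the pattern concrete outside of Alteryx, here is a small Python sketch of the same idea: one query per incoming table name, the results combined, and the target dropped and rewritten once. It is not how the Dynamic Input tool is implemented; SQLite stands in for the database, and the names `budget_1` to `budget_3`, `all_budgets`, and `combine_tables` are made up for the example.

```python
import sqlite3

def combine_tables(conn, table_names, target):
    """Run one query per source table and load the combined rows into `target`."""
    rows = []
    for name in table_names:
        # The table name is substituted into the query text for each record.
        # The names here come from a trusted list, not from user input.
        rows.extend(conn.execute(f'SELECT store_id, amount FROM "{name}"').fetchall())
    # Drop and recreate the target once, then write everything in one go.
    conn.execute(f'DROP TABLE IF EXISTS "{target}"')
    conn.execute(f'CREATE TABLE "{target}" (store_id INTEGER, amount REAL)')
    conn.executemany(f'INSERT INTO "{target}" VALUES (?, ?)', rows)
    conn.commit()
    return len(rows)

# In-memory database with three identical (hypothetical) source tables.
conn = sqlite3.connect(":memory:")
for i in range(1, 4):
    conn.execute(f"CREATE TABLE budget_{i} (store_id INTEGER, amount REAL)")
    conn.execute(f"INSERT INTO budget_{i} VALUES (?, ?)", (i, 100.0 * i))

names = [f"budget_{i}" for i in range(1, 4)]
loaded = combine_tables(conn, names, "all_budgets")
all_rows = conn.execute(
    "SELECT store_id, amount FROM all_budgets ORDER BY store_id"
).fetchall()
print(loaded, all_rows)
```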

> So since in your screenshot there is no Output from the batch macro, I assume that you are writing to an Output within the macro with an Output Option of "Append Existing"?
>
> Since a batch macro essentially "unions" all of the records anyway, could you not bring the Output outside of the macro (replace it inside the macro with a Macro Output tool) with the Output Option of "Overwrite Table (Drop)" and just let Alteryx write out to the single table?

I am a newbie, and macros are still all new to me; I will investigate this further.

Are you saying that with an output option in the batch macro, I could have all the output write to a single table that gets dropped once at the start of the batch and repopulated with all of the content (the union of all of my batches)? If yes, then this is what I want.

If it deletes on each "batch", then it is not what I need.

I will investigate.

> Also, you obviously have the loading stuff working inside the batch macro, but you might want to check out using the Dynamic Input tool. That is what I would typically use for the example of having 100 tables I want to combine into one. I would feed the list of table names into the Dynamic Input and modify the SQL Query in it so that it changes the query string for each record coming in. (It basically functions as a batch process this way.) I would then just attach an Output tool with the Drop option.

No, it isn't that simple, I'm afraid. I think I misled you with my 100 Excel sheets example. It is actually more like 100 URL API calls, with multiple XML parsing tools on each batch.

> Just an aside: you could use sqldf (or maybe RODBC) in the R Command tool if you wanted to simply execute some SQL.
>
> You could use that (I believe) if you wanted to do something like drop a table.

Thanks, I will check this out

No problems with being a "newbie"...that's how we all started.

Hopefully this will help...

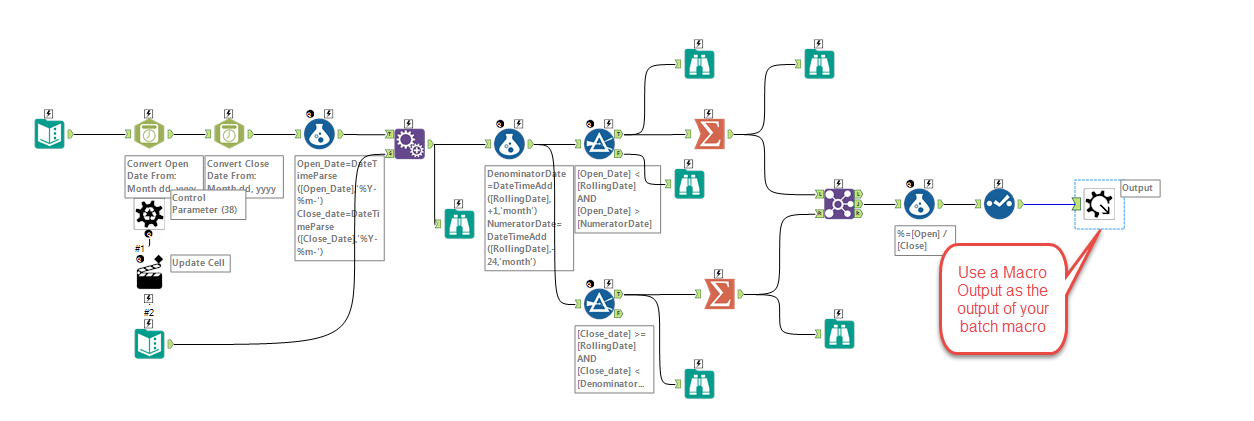

At the end of the whole process you have in your batch macro, you would end it with a Macro Output tool. (I've just taken a screenshot of a batch macro I've created in the past with lots of parsing/blending/manipulation as an example. I assume yours might be even more complex.)

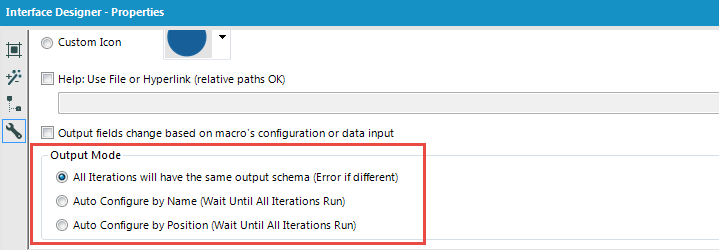

In the Properties of the batch macro (under the Interface Designer view), you can set up how you want the data to come out of the macro. (If you've used the Union tool already, you will recognize the options.)

If the schema for each URL read is the same, you would typically use the first (default) option.

Your batch macro will now have an output anchor that you can attach an Output tool to. This is where you would write to the "all records" table and you would set the options in the Output tool to "Overwrite Table (Drop)".
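
For anyone who finds it easier to reason about in code, the arrangement described here can be sketched in Python as follows. This is only an analogy, not what Alteryx does internally: `process_batch` is a hypothetical stand-in for one batch (for example one API call plus its parsing), `all_records` is an assumed table name, and SQLite stands in for the target database. The key point is that the drop happens once, at write time, after the batches have been unioned.

```python
import sqlite3

def process_batch(store_id):
    """Hypothetical stand-in for one batch (e.g. one API call plus parsing)."""
    return [(store_id, "budget", 1000.0 * store_id)]

def write_overwrite(conn, rows):
    """Drop and recreate the target, then write all rows (overwrite semantics)."""
    conn.execute("DROP TABLE IF EXISTS all_records")
    conn.execute("CREATE TABLE all_records (store_id INTEGER, item TEXT, amount REAL)")
    conn.executemany("INSERT INTO all_records VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")

# Union the output of every batch first...
unioned = []
for store_id in range(1, 101):
    unioned.extend(process_batch(store_id))

# ...then write once, outside the per-batch loop.
write_overwrite(conn, unioned)
write_overwrite(conn, unioned)  # a rerun replaces the table rather than appending

count = conn.execute("SELECT COUNT(*) FROM all_records").fetchone()[0]
print(count)
```

Because the write sits outside the loop, the table is never dropped per batch, which is the behaviour asked about earlier in the thread.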

Hope this all makes sense...
