I am working on a prototype for Alteryx of some new charting/visualization ideas. For most prototypes we do the audience would be strictly internal, but this time I am going to try something different. As I move forward developing the prototype, I am going to be sharing it with the readers of this blog in order to get as much feedback as I can as early as possible in the process. The idea is to use JavaScript & SVG for visualization/charting from within Alteryx. We could of course extend the charting tool we have, but there is a lot of energy in the open source world right now going on with JavaScript visualization. We want to evaluate the idea of utilizing some of the work that other people are doing – otherwise known as standing on the shoulders of giants. Some of the specific libraries we are looking at include: D3, NVD3 and RAW. There are many, many others, so if we were to integrate a JavaScript engine in a generic way, we could open up Alteryx to all kinds of cool things.

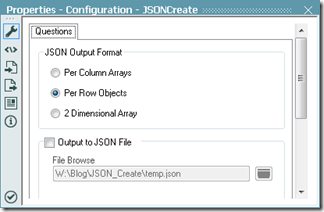

The lingua franca of data in JavaScript is JavaScript Object Notation (JSON for short). For Alteryx 9.0 we will have native tools for both creating and parsing JSON, but I want to create a prototype for users of Alteryx 8.6 so we can get feedback sooner. That means the 1st step is to be able to create JSON in a reusable way. I created a macro for writing JSON data. It works as either an output tool, writing a .json file, or as a pass-through tool so you can get the JSON data as a field. It automatically generates the JSON from the stream coming in, so if you want to remove or rename fields or filter rows, you would use a Select and/or Filter tool before using the JSON Create macro. The macro gives 3 different choice of the way the data goes into JSON:

- A list of columns as arrays – each array named with the field name.

- An array of rows – each row being an object with each field value being named with its field name.

- A 2 dimensional array of simple data with a separate array of the field names.

If you just want to have a tool to output JSON, but don’t care about the technical details, you can download it here. That said, there are some interesting details if you will be creating macros on your own.

Technical Details

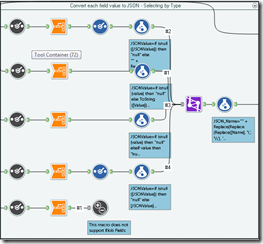

Ultimately, JSON is a text format, so every field will have to be converted to text. The 1st challenge is that different field types need to be prepared in different ways before they are ready to go into JSON. String fields need to be quoted and “ and \ characters need to be escaped.

Ultimately, JSON is a text format, so every field will have to be converted to text. The 1st challenge is that different field types need to be prepared in different ways before they are ready to go into JSON. String fields need to be quoted and “ and \ characters need to be escaped.

Once you get into developing macros such as this, the Developer category of tools comes to the rescue. In particular in this case I needed a Dynamic Select tool. This works similarly to a select tool in that you can pick and choose which fields to pass on, but instead of picking them from a list, you either pick them by type or with a formula. Normally I would have selected by type, but I also wanted a RecordID field to pass through so I had to use a formula.

One interesting and potentially difficult side effect of the DynamicSelect tool is that it might output 0 fields. Very few tools in Alteryx can handle getting an input with no fields, but fortunately the Transpose tool can, because it always outputs Name & Value fields regardless of what is input.

It also happens to be exactly what we need to run a formula on each field of a given type very simply. In short, a DynamicSelect tool will almost always be directly followed by a Transpose tool when using it in a generic way like this.

The one field type this macro does not support going in to JSON is blobs, so in that case, I use a Test tool to give the user an error and stop processing if a Dynamic Select tools finds any blobs. The user can resolve it by removing blob fields with a select prior to going in to this macro.

The next challenge is make sure that fields and rows come out in the order that they came in. The Summarize tool among others will tend to re-sort a data stream in ASCII order. In order to avoid this, I make a version of the field names prefixed with a number, so Field1 becomes __0001_Field1. It is important for me to leave the leading 0’s since it will be sorted as a string, not as a number. I go ahead and pre-sort the stream on the row ID and the adjusted field name. By doing this 1st, I ensure that any summarize that groups by field name later will preserve row order and vise versa. See Stable Sorts below.

At this point, each individual cell is ready to go into JSON; it’s just a matter of packaging it together. Since the configuration of the macro allows a user to choose one of three different ways to pack the data, I have 3 different containers in the module, 1 for each method. I have a actions that enables (or disables) each container based on the users selection. Containers are a handy way to group tools together in a macro or an app, but you have to be careful when disabling them. For instance, if you have a connection going from a disabled container to a Join tool, the Join tool will error. That is because the Join requires 2 connections and when you disable a container, it in effect deletes all the connections coming from tools within it. The Union tool is a good alternative in this case, because as long as it has 1 valid input it will work. In this specific case the union will end up only having 1 input, but there is very little overhead in using it that way, because it can just pass the 1 (and only 1) input through unmodified.

At this point, each individual cell is ready to go into JSON; it’s just a matter of packaging it together. Since the configuration of the macro allows a user to choose one of three different ways to pack the data, I have 3 different containers in the module, 1 for each method. I have a actions that enables (or disables) each container based on the users selection. Containers are a handy way to group tools together in a macro or an app, but you have to be careful when disabling them. For instance, if you have a connection going from a disabled container to a Join tool, the Join tool will error. That is because the Join requires 2 connections and when you disable a container, it in effect deletes all the connections coming from tools within it. The Union tool is a good alternative in this case, because as long as it has 1 valid input it will work. In this specific case the union will end up only having 1 input, but there is very little overhead in using it that way, because it can just pass the 1 (and only 1) input through unmodified.

Stable Sorts

When Alteryx sorts data, it uses a type of sort called a stable sort. This means is that all things being equal in a subsequent sort, it will preserve the original order.

Encoding

From section 3 of the JSON the spec, rfc4627, it clearly states that JSON data should always be Unicode and UTF-8 encoded by default. For this macro, that means that when it writes an output file, it will be written as UTF-8. In the case of the output connection, I leave the type as a V_WString field, which are UTF-16 encoded. I do this specifically because otherwise copy & paste on windows won’t work properly.

Wrapping Up

Once again, you can download the JSON Create macro here. A big thank you to Tara McCoy Giovenco for the icon – she is super creative. Next week we will start to use the JSON this produces to do cool things with JavaScript.

Thanks for reading,

Ned.

This post originally appeared at http://inspiringingenuity.net/2013/10/09/alteryx-json-data-output/