Python SDK: How to Debug an Error using pdb package

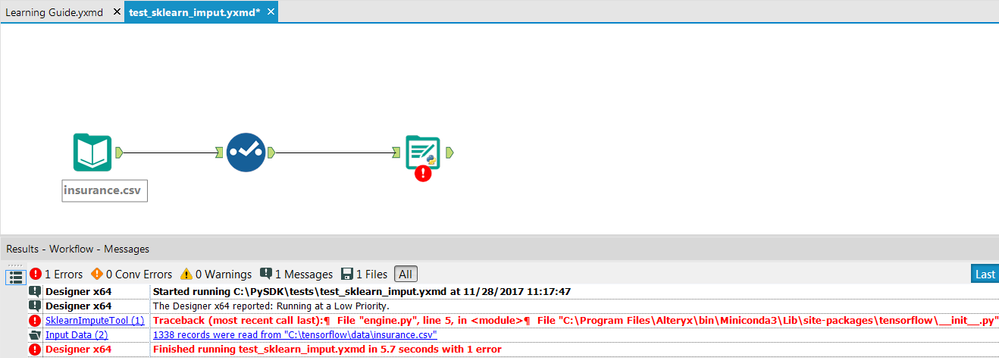

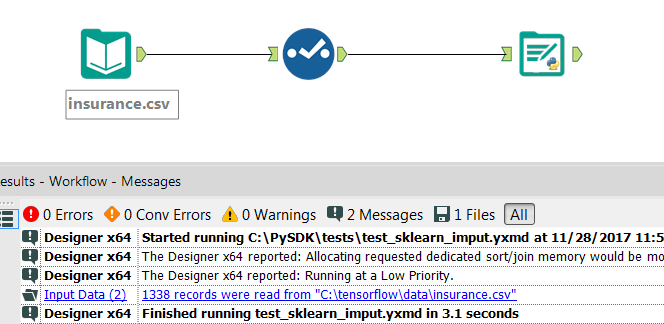

Thought I'd share a little trick that came in very handy while diagnosing and fixing a problem I hit when working on a TensorFlow-based tool with the Python SDK.

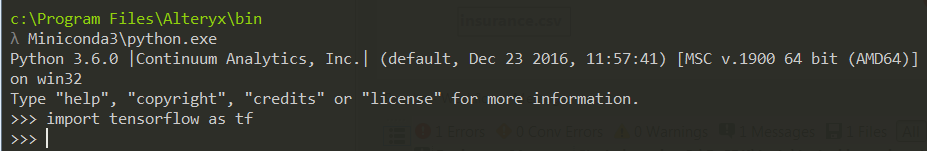

Hmmmmmmm, this is interesting, because I know TensorFlow imports without any issues in a standard Python session started from the SDK's Python install.

What's going on here, and how do I dig in and solve the problem?

The key thing to understand is that when a Python SDK tool's code is executed, it runs in a special Python process embedded in Alteryx's main C++ process. This has some huge advantages: the SDK's "plumbing" operations are handled by low-level, lightweight, and efficient C++ code, leaving pure Python for the functionality unique to the tool.

The key disadvantage is that most Python IDEs don't support embedded interpreters, leaving you with a smaller tool set for debugging your code. There also isn't a way to fire up a REPL that runs C++ with an embedded Python interpreter to help debug code in isolation. Finally, certain libraries may rely on environment or interpreter variables that aren't available in an embedded process, and these won't import without errors.

The good news:

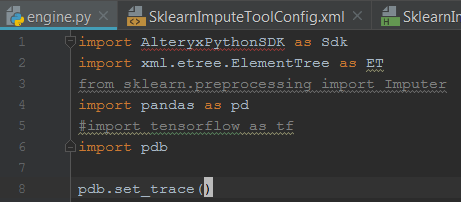

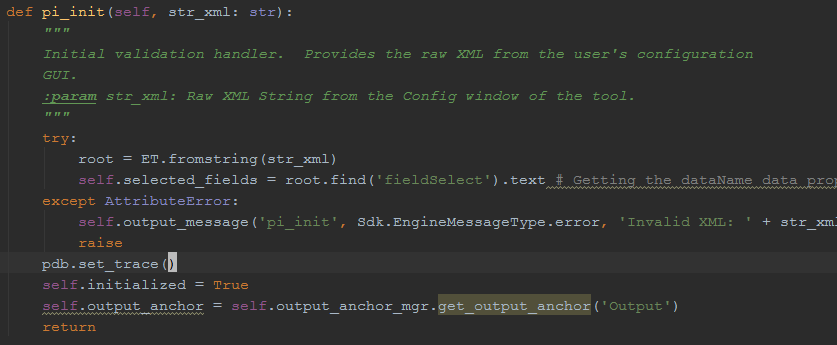

Python comes with a built-in debugger called pdb. Take a wild guess what it stands for.

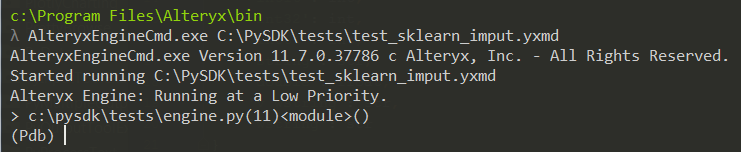

Let's take a spin with pdb and see if we can fix our TensorFlow error.

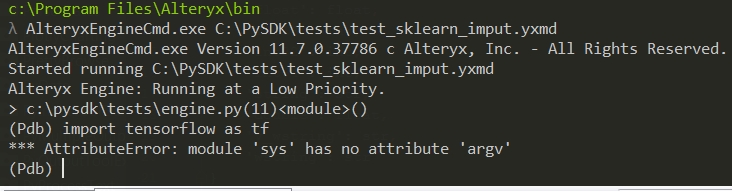
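For readers who can't see the screenshot, here is a minimal sketch of the idea: pause in pdb right before the import that fails. The helper `import_with_breakpoint` and its `debug` flag are hypothetical names used only for illustration, not part of the SDK, and the pdb prompt only appears if the process has a console attached.

```python
import importlib
import pdb


def import_with_breakpoint(module_name, debug=False):
    """Import a module by name, optionally pausing in pdb just before the import."""
    if debug:
        # Execution stops here and a (Pdb) prompt appears in the attached console.
        # From the prompt you can try the import by hand, e.g. `import tensorflow`.
        pdb.set_trace()
    return importlib.import_module(module_name)


# With debug left off, this behaves like a plain import.
json_module = import_with_breakpoint("json")
print(json_module.dumps([1]))
```

In a plugin you would call it with the module that fails to import and `debug=True`, then remove the breakpoint once the problem is understood.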

Now that we have a REPL available to us in the context of the embedded Python process, let's try importing TensorFlow again.

No surprise here. TensorFlow wants a variable that is present in the non-embedded process, but isn't available in our embedded Python.

Let's see if we can fix that, shall we?

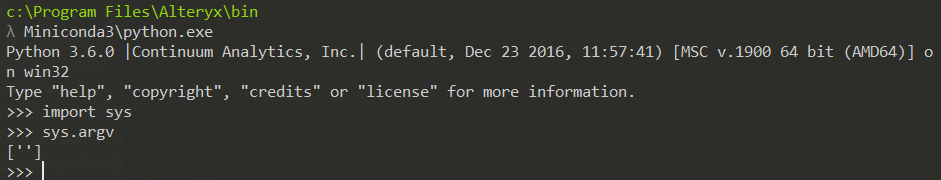

First, let's fire up the non-embedded Python REPL again, and take a look at the sys.argv variable.

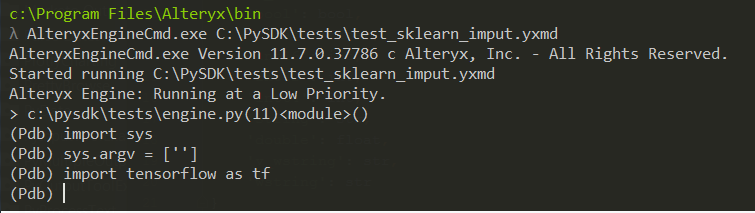
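The same check can be run in both places, the standard REPL and the pdb prompt inside the workflow, to compare them. This is a small sketch; `describe_argv` is a hypothetical helper name.

```python
import sys


def describe_argv():
    """Report whether sys.argv exists in the current interpreter."""
    if hasattr(sys, "argv"):
        return "sys.argv = {!r}".format(sys.argv)
    return "sys.argv is not set"


print(describe_argv())
```

At a pdb prompt, typing `p hasattr(sys, "argv")` after `import sys` gives the same answer without needing the helper.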

We know that TensorFlow imports successfully in the non-embedded process, and that one difference is that this variable is missing in the embedded process. Let's launch the workflow again, and do a quick test in the pdb REPL to see if we can learn more.

As you can see, the sys.argv variable is the "A/B switch" for the failed TensorFlow import.

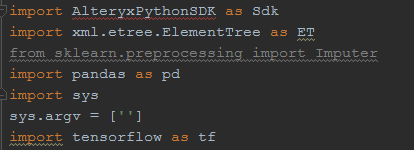

As a pragmatist, I simply add the lines to my plugin to give TensorFlow what it wants.
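The exact lines are in the screenshot, so what follows is only a sketch of one way to do it, assuming the fix is to define `sys.argv` before TensorFlow is imported. The helper name `ensure_argv` and the placeholder value `[""]` are my own choices for illustration.

```python
import sys


def ensure_argv(default=None):
    """Define sys.argv if the interpreter did not set it; leave it alone otherwise."""
    if not hasattr(sys, "argv"):
        # Assumption: a list with one empty string is enough of a placeholder,
        # matching what an interactive Python session reports.
        sys.argv = list(default) if default is not None else [""]
    return sys.argv


ensure_argv()
# The problematic import would follow here in the plugin, e.g.:
# import tensorflow
```

Because the helper only acts when `sys.argv` is missing, it is harmless when the same code runs in a standard Python session.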

Here, we can now see that the problem was solved.

Another neat trick to keep in mind: pdb is also a great tool for exploring the Python SDK's core data objects and methods, as well as the data being passed to those methods.

Now that we've added the set_trace command, let's run the workflow again from the command prompt, and inspect the contents of the str_xml variable passed into the method.
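As a rough sketch of what that looks like, assuming the method in question is the plugin's `pi_init`: the class below is a stripped-down stand-in rather than the full SDK plugin interface, and the `debug` flag and the sample XML are made up for illustration.

```python
import pdb
import xml.etree.ElementTree as Et


class AyxPlugin:
    """Stand-in for a plugin class; only the part needed for the example."""

    def __init__(self, debug=False):
        self.debug = debug  # hypothetical flag, not an SDK argument
        self.config = {}

    def pi_init(self, str_xml):
        if self.debug:
            # At the (Pdb) prompt, `p str_xml` prints the configuration XML.
            pdb.set_trace()
        root = Et.fromstring(str_xml)
        # Collect each child element's tag and text into a plain dict.
        self.config = {child.tag: child.text for child in root}


# Made-up configuration XML, only to exercise the method outside Alteryx.
plugin = AyxPlugin()
plugin.pi_init("<Configuration><FieldName>Sales</FieldName></Configuration>")
print(plugin.config)
```

Inside a real workflow, the breakpoint lets you look at whatever XML the engine actually passes in, which is usually more informative than guessing at its shape.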

Hopefully, this is useful to all of you folks out there hacking away on the Python SDK.

Looking forward to seeing you build great things with it.

Lead Software Engineer, Assisted Modeling

Alteryx

Great article, but is there a debugging strategy for developing .yxi Custom Tools with the Python SDK?

@pavloko I'm not sure what you mean? This article is exactly for that purpose. Are you looking for a guide or tutorial?

Lead Software Engineer, Assisted Modeling

Alteryx

Yes, I missed the point that the tool should be part of a .yxmd workflow.

Do you need a specific license, or does it come as part of Alteryx Designer and need to be turned on manually?

@JPKA, could you also provide some insight into when Alteryx Designer reloads the code? When I make a change in the Python code, clicking outside and back on the tool doesn't pick it up the way it does with JavaScript. I have to reopen the workflow for changes to take effect. Is this normal?

Hi @pavloko, the Python SDK interacts with the engine, so to test changes made to your Python code you will need to run the tool in a workflow (you shouldn't have to reopen the workflow each time). Some Python backend errors may appear when you click on and off the tool; these are likely related to the initialization of the tool.
