Alteryx Server Discussions

Alteryx Server – AMP is slowing down server?

Recently, a couple of customers turned on AMP on Server and migrated the majority of their workflows to AMP. The promise is simple: "AMP provides significant performance and efficiency improvements over the original Engine". In reality, the results look very different: we have noticed a significant (actually dramatic) decrease in performance.

(screenshot from the SERVER documentation)

I was also able to verify this on my private server, and the reason is simple: while AMP significantly increases the performance of a single workflow, it doesn't do so very efficiently. The efficiency claim in the Alteryx documentation is therefore false.

I have a 10-core server, so with the old E1 engine I was able to run 5 workflows in parallel.

I used a sample workflow that took roughly 10 minutes with E1. With the E1 setting, executing this workflow five times also takes 10 minutes, because all five runs execute in parallel.

Switching the workflow to AMP resulted in a great performance increase and brought the execution time down to only four minutes, but only while it was the only thing running. Running it five times takes 20 minutes instead of 10, which cuts the overall throughput by 50%.
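The arithmetic behind that 50% figure can be sketched as a throughput comparison. This is only an illustration using the numbers quoted in this post (five runs in 10 minutes with E1, five runs in 20 minutes with AMP); the function name `jobs_per_hour` is made up for this example.

```python
def jobs_per_hour(jobs: int, total_minutes: float) -> float:
    """Throughput of a batch: completed jobs per hour of wall-clock time."""
    return jobs * 60 / total_minutes


# Numbers taken from the post: five runs of the same sample workflow.
e1_throughput = jobs_per_hour(jobs=5, total_minutes=10)   # five runs in parallel
amp_throughput = jobs_per_hour(jobs=5, total_minutes=20)  # five runs with AMP

# Relative change in throughput when moving from E1 to AMP.
relative_change = (amp_throughput - e1_throughput) / e1_throughput

print(f"E1:  {e1_throughput:.0f} jobs/hour")
print(f"AMP: {amp_throughput:.0f} jobs/hour")
print(f"Change: {relative_change:.0%}")
```

The single-run time drops from 10 to 4 minutes, yet the batch throughput is what decides how much work the server gets through in a day.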

The difference became even bigger as soon as we were dependent on internet speeds and interface response times. In many workflows, most of the time is spent reading from and writing to files, APIs, and databases. For example, let's assume we have a workflow that simply grabs data from an API and saves it to a database.

The API doesn't respond any faster when queried with AMP, and neither does the database when written to. In these extreme scenarios, this leads to a performance decrease of 80%. This might be an extreme example (one that we actually see at many customers), but the premise is the same: as long as read/write operations are the major time eaters in our workflows, AMP is not a good idea, especially on Server.
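One way to arrive at a figure like 80% is a simple model of a purely I/O-bound workflow. The sketch below rests on assumptions that are not stated in the post: the workflow takes 10 minutes regardless of engine (all waiting on the API and the database), E1 runs five jobs at once, and AMP runs one job at a time. The helper `batch_minutes` is hypothetical.

```python
import math


def batch_minutes(jobs: int, slots: int, minutes_per_job: float) -> float:
    """Wall-clock time to finish `jobs` identical jobs with `slots` running at once."""
    waves = math.ceil(jobs / slots)  # number of rounds needed to get through all jobs
    return waves * minutes_per_job


# Assumption: the job is pure I/O wait, so the engine does not change its duration.
IO_BOUND_MINUTES = 10

e1_batch = batch_minutes(jobs=5, slots=5, minutes_per_job=IO_BOUND_MINUTES)
amp_batch = batch_minutes(jobs=5, slots=1, minutes_per_job=IO_BOUND_MINUTES)

# Throughput is inversely proportional to batch time.
throughput_drop = 1 - e1_batch / amp_batch

print(f"E1 batch:  {e1_batch} min")
print(f"AMP batch: {amp_batch} min")
print(f"Throughput drop: {throughput_drop:.0%}")
```

Under these assumptions the drop comes entirely from the lost parallelism, since a faster engine cannot shorten time spent waiting on external systems.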

My friends (who pointed me to this in the first place) asked me to open this up here as a discussion starter about AMP and core efficiency. It seems that either Alteryx's claims about "efficiency" aren't correctly stated in the documentation, or "efficiency" doesn't mean "CPU core efficiency" and "significant performance improvements" only means "single workflow performance improvements" rather than "overall performance improvements".

In one of the cases, this became a huge problem. The customer was running their Alteryx Server at a 90% load factor over the whole week. Now they can't run all the workflows anymore and had to go back to the pre-AMP version in order to run their business successfully. Hundreds of hours were wasted optimizing and changing the workflows to be properly "AMPed", and it didn't result in the desired improvements.

I'd hope that we can get some clarification on what Alteryx means by "efficiency" and "performance improvements", as it seems that these terms aren't universal and customers are being misled by these promises in the Server "AMP Engine Best Practices" guide.

Best

Alex

Hi @grossal ,

Thank you for the observations. 👏

We have not observed this server behavior ourselves, since we have not enabled the AMP engine option in our environments. For a more definitive answer, the Alteryx support team can help here.

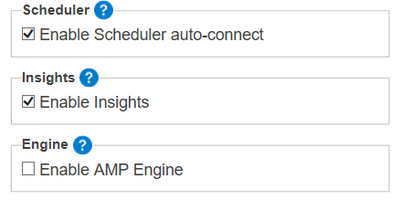

Regarding the customer who had to go back to the pre-AMP version of their workflows: if the team has already upgraded the server, the engine option can be changed in the System Settings without degradation. The two settings below need to be changed in order to use the original engine (E1).

https://community.alteryx.com/t5/Alteryx-Server-Knowledge-Base/How-to-disable-the-AMP-Engine-on-Gall...

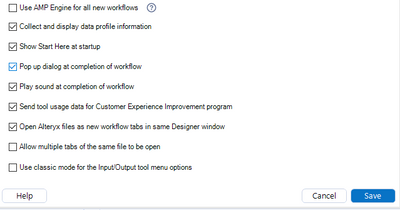

If you want to disable the AMP engine in Designer:

1. Go to Options > User Settings > Edit User Settings.

2. On the Defaults tab, clear the selection from the Use AMP Engine for all new workflows check box.

3. Select Save.

Regards,

Ariharan R

Hi @Ariharan,

That's a great addition for everyone else and definitely an option. However, we decided to simply roll back and restore the backup we had made beforehand. The reason was simple: there was no need to delete the AMP-optimized workflows and re-upload the E1 workflows (plus scheduling, collections, etc.).

(The AMP-optimized workflows run slower in E1 than the original E1-optimized workflows)

Best

Alex

Hi Alex,

I wanted to clarify some guidance that is covered in that same best practices documentation. https://help.alteryx.com/20221/server/amp-engine-best-practices

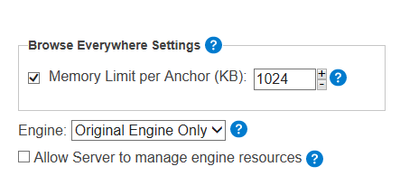

AMP uses resources very differently from original engine (E1). If they simply turned on AMP and converted all workflows without changing how resources are allocated in Server and how many jobs are allowed to run at the same time, then it would not be surprising to see worse performance when AMP is enabled. The original engine really excels at running a lot of jobs in parallel since it only uses 2 cores/threads per running job in most cases. This allows you to run 8 jobs at the same time on a 16 core server (assuming sufficient memory). Because of AMP's heavy usage of multi-threading, it performs much better running jobs in serial rather than in parallel. Due to this difference it is important to adjust how Server is configured.
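The rule of thumb for the original engine mentioned above (about 2 cores/threads per running job) can be sketched as follows. The function name `e1_job_slots` is hypothetical, and the sketch ignores the memory limit that the post mentions as a precondition.

```python
def e1_job_slots(cores: int, cores_per_job: int = 2) -> int:
    """Rough number of simultaneous E1 jobs, assuming ~2 cores/threads per job."""
    return cores // cores_per_job


print(e1_job_slots(16))  # the 16-core example from this reply
print(e1_job_slots(10))  # the 10-core server from the original post
```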

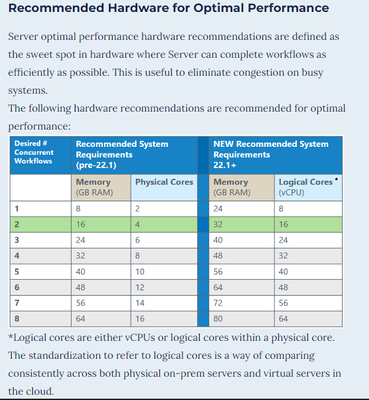

Ideally they would enable the settings that allow Server to manage resources on all workers which should help significantly, but to get the most out of AMP they likely need to increase the CPU and memory resources available on the host machines. Based on our benchmark testing, AMP performance improvements really shine when you can dedicate 6-8 logical cores and at least 8 GB of RAM to each simultaneous job in Server. If you limit AMP by allocating resources the way you used to for original engine you will significantly limit its performance potential.

To keep running 5 jobs simultaneously, as you did on the 10-core server, you would need a minimum of 40 logical cores/threads and 56 GB of RAM to optimize performance with AMP. You could also lower the number of simultaneous jobs and they would run faster serially with AMP (following the guidance below).
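As a rough sketch of how such sizing follows from the per-job figures quoted above (6-8 logical cores and at least 8 GB of RAM per simultaneous job), the calculation might look like this. The function names are hypothetical. The per-job RAM minimum alone gives 40 GB for five jobs, so the 56 GB quoted above presumably includes headroom that this sketch does not model.

```python
def amp_cores_needed(jobs: int, cores_per_job: int) -> int:
    """Logical cores needed to give every simultaneous AMP job its own share."""
    return jobs * cores_per_job


def amp_min_ram_gb(jobs: int, ram_per_job_gb: int = 8) -> int:
    """Lower bound on RAM from the per-job minimum only (no OS or service headroom)."""
    return jobs * ram_per_job_gb


def amp_job_slots(logical_cores: int, cores_per_job: int) -> int:
    """Simultaneous AMP jobs a machine supports at the given per-job core budget."""
    return max(1, logical_cores // cores_per_job)


# Five simultaneous jobs at the low and high end of the 6-8 core range.
cores_low = amp_cores_needed(jobs=5, cores_per_job=6)
cores_high = amp_cores_needed(jobs=5, cores_per_job=8)
ram_floor = amp_min_ram_gb(jobs=5)

# The other direction: how many AMP jobs fit if the machine has 10 logical cores?
slots_on_10 = amp_job_slots(logical_cores=10, cores_per_job=8)

print(f"Cores for 5 jobs: {cores_low}-{cores_high}")
print(f"RAM floor for 5 jobs: {ram_floor} GB")
print(f"AMP job slots on 10 logical cores: {slots_on_10}")
```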

Here is the optimization guidance from the documentation, under the "What are the minimum specs needed?" section:

Sr. Product Manager, Adoption & Expansion

@grossal ,

Perhaps @jarrodthuener can help represent the concerns here.

cheers,

mark

@TonyaS if only Alteryx's licensing model made this sort of horizontal scaling financially feasible...

I stand with Alex. It doesn't look like we'll get to leverage AMP anytime soon.

Maybe a couple of parts weren't clear enough or were overlooked. Let me give you a very simple example:

Let's say we have a 100m² warehouse for production pipelines. We are currently running very small E1 engines, each of which takes about 10m² of space and produces only one part per hour, so ten of them fit into the warehouse.

My boss now wants me to evaluate new AMP-production pipelines, there are multiple options:

- An AMP-1 engine, which takes the full 100m², but produces stunning 7.5 parts per hour!

- A smaller AMP-2 engine, which only takes 50m², but produces 3.6 parts per hour!

- An even smaller AMP-3 engine, which only takes 30m², but produces 2.25 parts per hour!

After filling the warehouse with each of the four options and running it for an hour, the results look like this:

- E1: 10 parts

- AMP-1: 7.5 parts

- AMP-2: 7.2 parts

- AMP-3: 6.75 parts
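The totals in this list can be reproduced with a few lines. This is just a sketch of the analogy: each option is described by its footprint and hourly output as given above, and the warehouse is filled with as many whole units as fit. The names `OPTIONS` and `parts_per_hour` are made up for illustration.

```python
WAREHOUSE_M2 = 100

# option name -> (footprint in m², parts per hour per unit), taken from the analogy
OPTIONS = {
    "E1": (10, 1.0),
    "AMP-1": (100, 7.5),
    "AMP-2": (50, 3.6),
    "AMP-3": (30, 2.25),
}


def parts_per_hour(footprint_m2: int, rate: float, warehouse_m2: int = WAREHOUSE_M2) -> float:
    """Hourly output when the warehouse holds as many whole units as fit."""
    units = warehouse_m2 // footprint_m2
    return units * rate


totals = {name: parts_per_hour(area, rate) for name, (area, rate) in OPTIONS.items()}

for name, total in totals.items():
    print(f"{name}: {total:g} parts")
```

Note that three AMP-3 units occupy only 90m², so 10m² of the warehouse stays unused in that configuration.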

This is what currently happens on the server when using the AMP engine. And if you simply say "10 AMP-1 warehouses would run faster than your 10 E1 machines", you are right. But if I filled those 9 additional warehouses with E1 machines instead, E1 would still be faster.

(In my analogy, AMP-1 stands for AMP with 1 parallel workflow, AMP-2 for 2 parallel workflows, etc)

I completely agree with @raychase as well. Server add-on packages are pricey. Even if server cores were free upgrades, I'd still have the additional hardware cost.

And yes, I know this example is simplified, but I can assure you that it was tested with a lot of configurations, and not a single one played out well for AMP under the limitation "we use the same licenses as we have now": all of them resulted in lower performance, as shown above.

I don't remember all the numbers and setups that were tested, but one of the configurations involved 10 physical cores (20 logical) and 128 GB RAM. This was a rather small config; others tried it on larger installations with 32 cores and 512 GB RAM, with similar results.

I hope this example helps to explain the problem that we ran into. I can also assure you that weeks and weeks of testing were done on the client's side even before they showed it to me at Inspire Amsterdam. It only took a couple of hours to get similar results on my own server (the most annoying part was building a workflow that eats up a little bit of time).

Best

Alex

This is advancing beyond my limited knowledge of Server.

Let me try to get some more Server savvy folks to chime in on this thread.

Sr. Product Manager, Adoption & Expansion

Alex, thanks for taking the time to write this up in such detail. Let me get this back to the team and I’ll keep you posted on what we find out.

Ryan Klein

Manager, Product Management
