Alteryx Designer Desktop Discussions

Find answers, ask questions, and share expertise about Alteryx Designer Desktop and Intelligence Suite.

Single field text file - causing headaches - trouble parsing

Hi everyone,

I'm having a real headache with a particular file (literally).

The problem: the data is provided as a single-field text file. The field is about 9,000 characters wide, and I have about 500,000 records.

The data does come with a datamap that I can upload into Alteryx, and thanks to a very kind member of this community (@BobMoss), I can use it to parse my data.

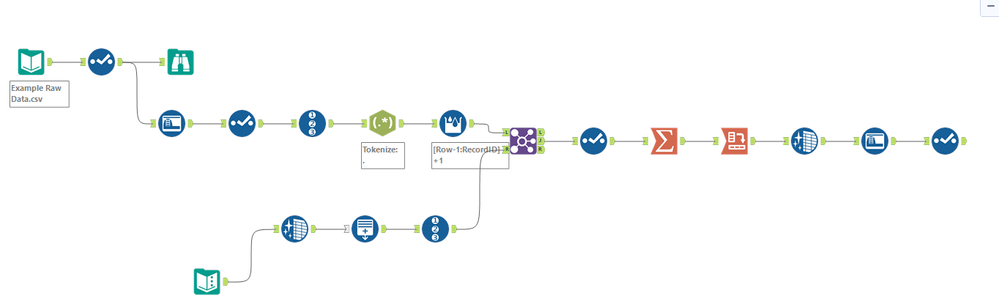

The issue I'm having relates to the RegEx tokenize function: to map the data, every character in the data file is parsed out into its own column, then regrouped and transformed into the end result I want. Because of that level of parsing, everything grinds to a halt when I run the whole file of hundreds of thousands of records. The file size balloons to hundreds of gigabytes, and things get a little impractical.
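For a sense of scale, splitting every character into its own value means the intermediate data holds roughly one value per input character before any regrouping, using the figures above:

$$9{,}000\ \text{characters/record} \times 500{,}000\ \text{records} = 4.5 \times 10^{9}\ \text{single-character values}$$

Assuming, for illustration only, some tens of bytes of storage overhead per value, that lands in the hundreds-of-gigabytes range described here.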

So I was wondering: is there another way to do this? I've attached my workflow and a sample file (note that the sample file has only 500 records, so you'll see how nicely the workflow works with small files).

Any ideas?

Hi @EvolveKev

@CharlieS has a great suggestion: wrap this up in a batch macro to control the volume you're passing through the process.
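Outside Alteryx, the same idea of limiting how much data is in flight at once can be sketched in Python: read the records in fixed-size chunks and run the expensive parsing on one chunk at a time. This is only an analogy for the batch-macro approach, not how Alteryx implements batch macros; the batch size and the `process_batch` function are hypothetical placeholders.

```python
from itertools import islice
from typing import Iterable, Iterator, List


def batches(records: Iterable[str], size: int) -> Iterator[List[str]]:
    """Yield consecutive lists of at most `size` records, never holding more than one chunk."""
    it = iter(records)
    while True:
        chunk = list(islice(it, size))
        if not chunk:
            return
        yield chunk


def process_batch(chunk: List[str]) -> int:
    # Hypothetical stand-in for the expensive per-record parsing step.
    return len(chunk)


if __name__ == "__main__":
    fake_records = (f"record {i}" for i in range(25))
    for n, chunk in enumerate(batches(fake_records, 10), start=1):
        print(f"batch {n}: {process_batch(chunk)} records")
```

Because `batches` pulls from an iterator, it works equally well on a file object read line by line, so the full 500,000 records never need to be materialized at once.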

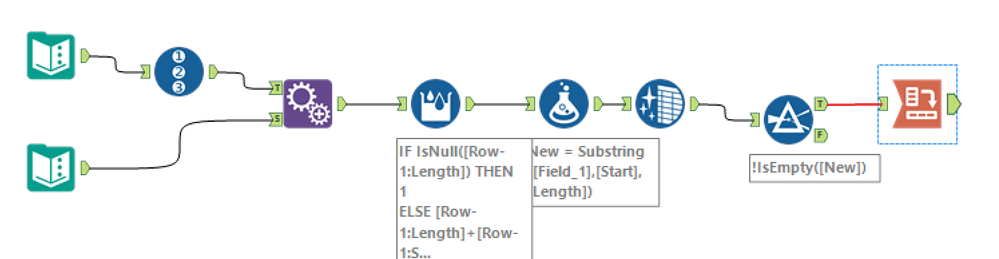

Or see whether this process might work. Instead of tokenizing, I'm using Substring functions and working out each field's starting position with a Multi-Row Formula tool.
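A minimal Python sketch of the substring idea, assuming a hypothetical three-field datamap of (name, width) pairs: a running total of the widths gives each field's starting position (the job the Multi-Row Formula tool does in the workflow), and each field is then cut out with a single slice instead of one token per character. The field names, widths, and sample record are made up for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical datamap: (field name, width) pairs in file order.
DATAMAP: List[Tuple[str, int]] = [("prop_id", 12), ("state", 2), ("zip", 5)]


def add_start_positions(datamap: List[Tuple[str, int]]) -> List[Tuple[str, int, int]]:
    """Like a Multi-Row Formula: start = previous start + previous width (1-based)."""
    rows = []
    start = 1
    for name, width in datamap:
        rows.append((name, start, width))
        start += width
    return rows


def parse_record(line: str, layout: List[Tuple[str, int, int]]) -> Dict[str, str]:
    """Cut one fixed-width line into fields with one substring per field."""
    # Python slices are 0-based, so subtract 1 from the 1-based start position.
    return {name: line[start - 1:start - 1 + width].strip() for name, start, width in layout}


if __name__ == "__main__":
    layout = add_start_positions(DATAMAP)
    print(layout)  # [('prop_id', 1, 12), ('state', 13, 2), ('zip', 15, 5)]
    print(parse_record("000000012345TX75001", layout))
```

Stripping the padding is a choice made here; drop the `.strip()` if leading or trailing spaces are meaningful in your data.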

Cheers!

Esther

There's still one outstanding challenge with my recommended solution (the CSV file has quotes around each value, and I didn't do any preprocessing for this), but that should be trivial to resolve.

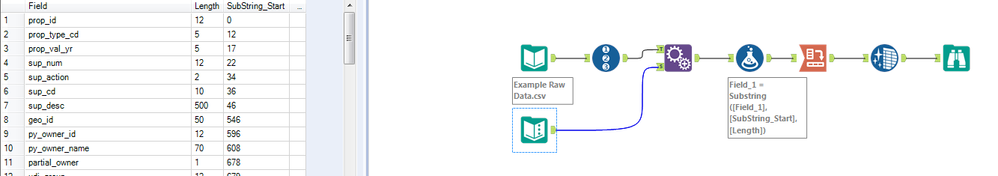

You can read this in quite effectively using the Alteryx flat file specification. The file is too long to use the manual field selector (and we wouldn't want to anyway), but if you point the Input Data tool at a .flat description file, you can then specify the incoming data file you want to read in under configuration option 5.

Then, if you need to update the schema, all you have to do is modify the .flat file that holds the field-level information. Since it is an XML document, it should be fairly easy to modify either by hand or within Alteryx.
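Because the .flat file is plain XML, any XML parser can read its field list back, which is handy for checking a layout after editing it. A minimal Python sketch using the standard-library `xml.etree.ElementTree` module; the sample below is a hypothetical, abbreviated .flat file that keeps only the `<flatfile>` and `<fields>` parts described later in this thread.

```python
import xml.etree.ElementTree as ET
from typing import List, Tuple

# Hypothetical, abbreviated .flat content; a real file also carries a <file> element.
SAMPLE_FLAT = """<flatfile version="1">
  <fields>
    <field name ="prop_id" type="String" length="12" />
    <field name ="test" type="String" length="50" />
  </fields>
</flatfile>"""


def read_fields(flat_xml: str) -> List[Tuple[str, str, int]]:
    """Return (name, type, length) for each <field>, preserving document order."""
    root = ET.fromstring(flat_xml)
    return [(f.get("name"), f.get("type"), int(f.get("length"))) for f in root.iter("field")]


if __name__ == "__main__":
    for name, ftype, length in read_fields(SAMPLE_FLAT):
        print(name, ftype, length)
```

Document order matters because, in a fixed-width layout, each field's position depends on every field defined before it.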

This turns the entire process into a one-tool solution that takes under 600 KB of space to display. On the 500-record sample it also runs in 0.5 seconds instead of roughly 16, and it doesn't cause columns to reorder.

Hope this helps! Let me know if you have questions or run into problems.

Hi @EvolveKev, try this one. It's simpler than your current workflow, and I was able to reduce the processing time compared with your current workflow quite a bit.

It looks like @estherb47 and I had the same idea. Sorry! I didn't mean to copy what you had posted. Great minds think alike :)

Hey guys - thanks for all of your help.

I'm looking through all of your suggestions as we speak, and I'll let you know what questions I have. This is so great!

Hey guys - all of this is absolutely brilliant. My team and I have learned a whole lot from every single response here. We're blown away!

One thing we don't quite understand is how @Claje got the column headings into that awesome flat ASCII file. That processes the data in a crazy-fast fashion, but I'm missing a critical part of the puzzle and I'm not quite smart enough to figure it out.

I can point the .flat file to the data - super easy... but how exactly did you get the column configuration in there, @Claje? You mentioned something about schema/XML?

The easiest way to see this piece is to go to the folder where my YXZP was exported (you can find it from the workflow by looking at the Workflow tab of the Workflow - Configuration window).

I'd recommend opening the test.flat file in that folder. Windows will usually say it can't open the file, but if you open it in Notepad (or any browser), it should display.

There is some great documentation on the Alteryx Help page related to this flat file structure. I am going to explain some pieces of it here as well:

The document opens with <flatfile version="1">; this tag has to exist in every .flat file you use.

Next is the <file> tag. It has several optional attributes that define default behavior for the flat file. I'd recommend reading the Alteryx documentation on each of them, but the example file I used specifies a default path and an end-of-line character. You can override any behavior from the <file> tag inside the Input Data tool by changing that option to something other than "Use description file". For example, your file has at least one short line, so I configured the Input Data tool to "Allow short lines for records" in option 7, but I could also have defined this in the <file> tag by adding an attribute like:

allowshortlines="t"
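A short line is a record with fewer characters than the sum of all field widths. The Python sketch below only illustrates that concept and is not Alteryx's implementation: it flags short records and right-pads them with spaces so every field can still be sliced out. The field widths and sample records are hypothetical, and how Alteryx itself fills the missing fields is not shown here.

```python
from typing import List, Tuple


def pad_short_lines(lines: List[str], widths: List[int]) -> Tuple[List[str], List[int]]:
    """Right-pad records shorter than the layout; return padded records and 1-based short-row numbers."""
    record_width = sum(widths)
    padded: List[str] = []
    short_rows: List[int] = []
    for row, line in enumerate(lines, start=1):
        if len(line) > record_width:
            raise ValueError(f"row {row} has {len(line)} chars; layout expects {record_width}")
        if len(line) < record_width:
            short_rows.append(row)
        padded.append(line.ljust(record_width))
    return padded, short_rows


if __name__ == "__main__":
    # Hypothetical layout of two fields (widths 3 and 2); end-of-line characters already removed.
    records, short = pad_short_lines(["abcde", "ab"], [3, 2])
    print(records, short)  # ['abcde', 'ab   '] [2]
```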

Finally, we have the <fields> tag. This is the piece which would require the most maintenance as the layout is updated.

Each individual field is defined inside the <fields> tag by its own <field> element with a name attribute.

As an example, I've grabbed the first field from the .flat file I created and copied it below.

<field name ="prop_id" type="String" length="12" />

Every field has to be configured this way, with each attribute defined, so if I wanted to add a new field named "test" that was a string with length 50, it would look like this:

<field name ="test" type="String" length="50" />

Note that the order of these fields is critical: if you move "test" before "prop_id", test will be characters 1-50 and prop_id will be characters 51-62. If test stays below "prop_id", prop_id is characters 1-12 and test is 13-62.
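The ordering rule can be checked with a small Python sketch that turns an ordered list of (name, length) pairs into inclusive 1-based character ranges; it reproduces both orderings of "prop_id" and "test" described above. The function name is hypothetical.

```python
from typing import Dict, List, Tuple


def char_ranges(fields: List[Tuple[str, int]]) -> Dict[str, Tuple[int, int]]:
    """Map each field to its inclusive 1-based (first, last) character positions."""
    ranges: Dict[str, Tuple[int, int]] = {}
    pos = 1
    for name, length in fields:
        ranges[name] = (pos, pos + length - 1)
        pos += length
    return ranges


if __name__ == "__main__":
    print(char_ranges([("test", 50), ("prop_id", 12)]))
    # {'test': (1, 50), 'prop_id': (51, 62)}
    print(char_ranges([("prop_id", 12), ("test", 50)]))
    # {'prop_id': (1, 12), 'test': (13, 62)}
```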

Because this is a fixed-width file, I defaulted every field to type "String". You could change the types here, but you could also manage this with a Select tool later in your process.

To update an individual field's name or length, the XML of the .flat file would simply need to be updated for that field.

To generate this XML, I actually used Alteryx on your incoming template file: I built a Formula that created the <field name> lines, then manually deleted all the existing <field name> lines and pasted in my new ones. You could definitely build this into an Alteryx workflow so that there is no manual step, but you would need to do a cost-benefit analysis to see whether that was worthwhile.

My formula looked something like this:

'<field name ="'+field+'" type="String" length="'+TOSTRING(length)+'" />'
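For comparison, here is a hypothetical Python equivalent of that formula, assuming the template has already been read into (field, length) pairs. A Python f-string delimited by single quotes can contain the double quotes directly, much like the single-quoted strings in the Alteryx formula. The `escape` call from the standard library is an extra safeguard not in the original formula, so that a field name containing `&`, `<`, `>` or `"` still yields valid XML.

```python
from typing import Iterable, List, Tuple
from xml.sax.saxutils import escape


def field_lines(template: Iterable[Tuple[str, int]]) -> List[str]:
    """Build one <field> line per (field, length) pair, typing every field as String."""
    lines = []
    for field, length in template:
        # escape() handles &, < and >; the extra mapping also escapes double quotes.
        safe_name = escape(field, {'"': "&quot;"})
        lines.append(f'<field name ="{safe_name}" type="String" length="{length}" />')
    return lines


if __name__ == "__main__":
    print("\n".join(field_lines([("prop_id", 12), ("test", 50)])))
```

Joining the lines with newlines gives a block that can be pasted inside the <fields> tag in place of the old field definitions.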

The only tricky part of this design is that, because the XML layout needs double quotes around each value, the formula has to use single quotes to tell Alteryx which strings we are defining.

Then, as long as your .FLAT file is somewhere that your workflow can reference it, it will dynamically update your input process to match the modified schema.

@Claje - thank you! This is absolutely incredible. I'll try this shortly - but it makes complete sense. This is going to save so much time!

Thanks (to all of you) for the really detailed explanations - we're blown away by the support community!
